Tech

Major software issue occurred in PSNI emergency call system

A “major issue” with the ControlWorks software used by police to monitor emergency calls led to a delay in officers receiving critical information during a fast-moving investigation, Computer Weekly has learned.

The Police Service of Northern Ireland (PSNI) uses ControlWorks as part of its command and control system. The software is primarily used for managing, logging and categorising calls received by the emergency services from the public.

Sources have confirmed that a “major issue” with ControlWorks in 2020 meant information was not passed on to an inquiry team in a fast-moving investigation until the day after it was received.

A PSNI ControlWorks operator indicated to frontline officers that alerts on the system related to the investigation could be lost or delayed, Computer Weekly has been told.

Later, a senior officer in the case confirmed that a crucial tip-off in the fast-moving police inquiry was delayed because of an issue with ControlWorks.

The PSNI told Computer Weekly that there had been no incidents with ControlWorks that had led to loss of data, and that if there were issues, any delays to police response time would be minimal.

It is understood that the PSNI keeps records of incidents with ControlWorks and refers any serious incidents to its supplier for investigation.

ControlWorks aims to improve response times

The ControlWorks suite includes computer-aided dispatch and customer relationship management capabilities, which are designed to improve response times by speeding up decision-making for call handlers.

The PSNI announced in 2018 that it was using Capita Communications and Control Solutions’ ControlWorks software, replacing its 20-year-old Capita Atlas Command and Control System, which had reached the end of its life.

From February 2018, ControlWorks was installed across the PSNI’s three regional contact management centres. The contract was for an initial seven-year term, with options to extend it by up to a decade. The current contract renewal date is 30 September 2028.

ControlWorks, which is used by senior commanders and call handlers, was launched by Capita in 2013. One of its selling points was that it offered auditable logs for greater accountability and better resilience.

After investing heavily in the software, Capita sold its Secure Solutions and Services business, which included ControlWorks and other emergency services software, to NEC Software Solutions UK for £62m. After a long review by the UK’s Competition and Markets Authority (CMA), the sale was completed in 2023.

ControlWorks’ use by police

ControlWorks is used by a number of police forces in the UK, including Greater Manchester, West Midlands, Derbyshire, South Wales, the British Transport Police and the Ministry of Defence Police.

An independent review in 2020 found serious problems with Greater Manchester Police’s Capita-supplied iOPS IT system, which attempted to integrate ControlWorks with Capita’s PoliceWorks record management software used by police officers for managing day-to-day investigations and intelligence records.

“Even when staff have received training, users reported that searches on ControlWorks and PoliceWorks sometimes returned inconsistent or incorrect information about risks,” the review found.

Greater Manchester Police subsequently announced plans to replace PoliceWorks, a process that is expected to be completed next year, after concluding it could not be adapted or fixed to meet the needs of the organisation. It has continued to use ControlWorks.

How ControlWorks errors are categorised

According to freedom of information requests to West Midlands Police, incidents in ControlWorks are categorised depending on their level of severity.

Critical incidents, which affect force-wide availability of ControlWorks, are categorised as P1 and must be corrected within eight hours by the force’s IT suppliers.

A force-wide degradation in the service offered by ControlWorks is categorised as P2 and must be resolved in six hours.

Less serious incidents are categorised as P3, which must be resolved by the force’s supplier in 24 hours, and P4, which do not require urgent remediation.
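The severity categories above amount to a lookup from priority to resolution target. The following is a minimal Python sketch of that mapping, not the force's or the supplier's implementation. The names `RESOLUTION_TARGET_HOURS` and `resolution_deadline` are hypothetical. The hour values are the ones reported above, and modelling P4 as having no deadline is an assumption, since the requests say only that it does not require urgent remediation.

```python
from datetime import datetime, timedelta
from typing import Optional

# Resolution targets in hours, as reported in the text above.
# P4 has no stated target, so it is modelled as None (an assumption).
RESOLUTION_TARGET_HOURS = {"P1": 8, "P2": 6, "P3": 24, "P4": None}


def resolution_deadline(priority: str, raised_at: datetime) -> Optional[datetime]:
    """Return the time by which an incident should be resolved, or None."""
    hours = RESOLUTION_TARGET_HOURS[priority]
    if hours is None:
        return None
    return raised_at + timedelta(hours=hours)


# Example: a P1 incident raised at 09:00 has until 17:00 the same day.
raised = datetime(2020, 1, 1, 9, 0)
p1_deadline = resolution_deadline("P1", raised)
```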

PSNI: No major disruption

The PSNI said there had been no major disruption to ControlWorks.

“Police can confirm that, to date, there has been no instance of major disruption which has led to data loss as there is significant resilience built into the application, servers and infrastructure,” a spokesperson said.

“If a fault was to occur with ControlWorks, it would be dealt with internally by trained colleagues who also have resilience in place to ensure that in the event of an error, a delay in police response time would be minimal,” the spokesperson added.

The Northern Ireland Policing Board, which oversees the PSNI, said it had not received any reports from the PSNI about errors in ControlWorks.

A spokesperson said that if a major system disruption or significant information or data loss occurred, the board would expect to be informed.

The PSNI has made no reference to the issue with ControlWorks in its annual reports.

NEC, which completed the purchase of ControlWorks from Capita in August 2023, said it had not been made aware of any major issues relating to ControlWorks since it acquired the business.

“We work closely with police forces and other agencies to ensure it is reliable and secure, and have not been made aware of any major issues related to ControlWorks since we acquired the business in 2023,” it said.

A spokesperson for Capita, which originally supplied ControlWorks to the PSNI, said: “Because this is a business we sold several years ago, we can’t comment.”


A Danish Couple’s Maverick African Research Finds Its Moment in RFK Jr.’s Vaccine Policy

In 1996, Guinea-Bissau seemed like an ideal research post for budding pediatrician Lone Graff Stensballe. Her supervisor, a fellow Dane named Peter Aaby, had spent nearly two decades collecting data on 100,000 people living in the mud brick homes of the West African country’s capital.

Aaby and his partner, Christine Stabell Benn, believed that the years of research in the impoverished country had yielded a major discovery about vaccines—and what they described as “non-specific effects”: The measles and tuberculosis vaccines, which were derived from live, weakened viruses and bacteria, they said, boosted child survival beyond protecting against those particular pathogens.

But, the scientists said, shots made from deactivated whole germs, or pieces of them, such as the diphtheria-tetanus-pertussis (DTP) shot, caused more deaths—especially in little girls—than getting no vaccine at all.

The World Health Organization repeatedly and inconclusively examined these astonishing findings. They tended to elicit shrugs from other global health researchers, who found Aaby’s research techniques unusual and his results generally impossible to replicate.

Then came Donald Trump, Covid, and the administrative reign of anti-vaccine advocate Robert F. Kennedy Jr.

Suddenly, Aaby and Benn weren’t just sending up distant smoke signals from a far corner of the planet. They were confidently voicing their views and policy prescriptions online and in medical journals. The “framework” for “testing, approving, and regulating vaccines needs to be updated to accommodate non-specific effects,” their team wrote in a 2023 review.

And the Trump administration has taken notice.

“They became more strident in saying that their findings were real and that the world needed to do something about it,” said Kathryn Edwards, a Vanderbilt University vaccinologist who has been aware of Aaby’s work since the 1990s. “And they became more aligned with RFK.”

Kennedy, as secretary of the Department of Health and Human Services, cited one of Aaby’s papers to justify slashing $2.6 billion in US support for Gavi, a global alliance of vaccination initiatives. The cut could result in 1.2 million preventable deaths over five years in the world’s poorest countries, the nonprofit agency has estimated. Kennedy has frozen $600 million in current Gavi funding over largely debunked vaccine safety claims.

Kennedy described the 2017 paper as a “landmark study” by “five highly regarded mainstream vaccine experts” that found that girls who received a diphtheria-tetanus-pertussis, or DTP, shot were 10 times more likely to die from all causes than unvaccinated children.

In fact, the study was far too small to confidently make such assertions, as Benn acknowledged. In a study of historical data that included 535 girls, four of those given the DTP shot in a three-month period of infancy died of unrelated causes, while one unvaccinated girl died during that period. A follow-up published by the same group in 2022 found that the DTP shot by itself had no effect on mortality. Critics say the 2017 study, rather than being a landmark, exemplified the troubling shortfalls they perceive in the Danish team’s research.

As Aaby and Benn’s US profile has risen, scientists in Denmark have set upon the work of their compatriots. In news and journal articles published over the past 18 months, Danish statisticians and infectious disease experts have said the duo’s methods were unorthodox, even shoddy, and were structured to support preconceived views. A national scientific board is investigating their work.

Stensballe, who worked with Aaby and Benn for 20 years, has been among those voicing doubts.

“It took years to see what I see clearly today, that there is a strange concerning pattern in their work,” Stensballe said in a phone interview from Copenhagen, where she treats children at Rigshospitalet, the city’s largest teaching hospital. She said their work is full of confirmation bias—favoring interpretations that fit their hypotheses.


Gartner: How AI will transform managed network services

In 2024, nearly all the service providers Gartner profiled in its Magic Quadrant for global WAN services report and the Magic Quadrant for managed network services report said they had started leveraging artificial intelligence (AI) in several ways to support the operation of enterprise networks. Areas of usage include AI for IT operations (AIOps), generative AI (GenAI) as a network assistant, enhanced service delivery, and AI in secure access service edge (SASE) and network security.

AIOps has emerged as a foundational capability in managed networking. Leading service providers, such as HCLTech, Microland and NTT Data, have begun to integrate AIOps capabilities and network automation for service onboarding and customer experience improvements. Also, service providers are deploying AI and/or machine learning (ML) to monitor network health, detect anomalies and automate routine tasks in network operations centres (NOCs).

The goal is to shift from reactive troubleshooting to proactive assurance. For example, if latency on a wide-area network (WAN) link starts spiking intermittently, a machine learning model might recognise the pattern as a precursor to link failure and alert engineers or trigger failover before a major outage occurs.
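The latency example can be illustrated with a small detector. This is a minimal Python sketch in which a rolling z-score stands in for the machine learning model; it is not any provider's method. The class name `LatencySpikeDetector` and its parameters (`window`, `z_threshold`, `spikes_to_alert`) are assumptions chosen for illustration. It raises an alert once several spikes fall within the recent window, which is one simple way to treat intermittent spiking as a warning sign.

```python
from collections import deque
from statistics import mean, stdev


class LatencySpikeDetector:
    """Flag a link when several latency spikes occur within a recent window."""

    def __init__(self, window=20, z_threshold=3.0, spikes_to_alert=3):
        self.history = deque(maxlen=window)       # baseline (non-spike) samples
        self.recent_flags = deque(maxlen=window)  # spike/no-spike per sample
        self.z_threshold = z_threshold
        self.spikes_to_alert = spikes_to_alert

    def observe(self, latency_ms: float) -> bool:
        """Record one sample; return True if the link should be flagged."""
        is_spike = False
        if len(self.history) >= 5:  # wait for a minimal baseline first
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0 and (latency_ms - mu) / sigma > self.z_threshold:
                is_spike = True
        self.recent_flags.append(is_spike)
        if not is_spike:
            self.history.append(latency_ms)  # keep spikes out of the baseline
        return sum(self.recent_flags) >= self.spikes_to_alert


# Steady latency around 20 ms, then intermittent spikes.
samples = [20, 21, 19, 20, 22, 21, 20, 19, 21, 20, 200, 20, 210, 21, 220]
detector = LatencySpikeDetector()
alerts = [detector.observe(s) for s in samples]
```

In this run the third spike triggers the alert. A real deployment would hand that signal to engineers or to a failover routine, as the text describes.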

One such service provider is Tata Communications, which has invested in AI/ML-based fault diagnosis with 85% accuracy, and which uses AI-driven telemetry to predict and address issues as part of proactive network monitoring.

Also, many network equipment suppliers now embed AI features to support service providers for network monitoring.

GenAI as a network assistant

Over the past year, Gartner has seen a great deal of interest from managed network service (MNS) providers in applying GenAI to IT operations, including network management. The vision is to provide a network AI assistant that can interact with the provider’s operations teams via a natural language chat interface, help troubleshoot issues, document networks and even implement changes by generating configurations from intent.

One example is HCLTech, which is focusing on leveraging GenAI integrations with software-defined wide-area networking (SD-WAN) to deliver complete automation for lifecycle operations. It is building a supplier-focused GenAI large language model (LLM) as part of its service delivery platform (SDP).

Enhanced service delivery

AI is also leveraged in customer-facing aspects of MNS. Service providers are increasingly using AI to improve support and transparency for clients. This includes AI-powered customer service bots, service portals, and AI-generated reports or insights.

For example, many MNS providers profiled in the Gartner Magic Quadrant for managed network services report use bots, which are increasingly enhanced with AI capabilities, to automate repetitive tasks. Some have thousands of bots as part of their network automation codebases.

AI in SASE and network security

AI and ML are proving just as critical in the security side of MNS as they are in performance management. In fact, many service providers (for example, XTIUM and Microland) pitch AI-powered enhancements of their network security offerings, where the platform uses advanced analytics, AI and GenAI to strengthen and simplify management of local area network (LAN), WAN and cloud security.

For SASE and network security, AI can be used for automated anomaly detection. Here, the system quarantines a suspicious device or triggers multifactor authentication for a user behaving abnormally.

In policy optimisation, AI can recommend tightening or adjusting security policies, based on observed usage. For example, it can suggest zero-trust rules for an application, based on the context – location, time, company departments and so on.

Some advanced service providers, such as HCLTech, are exploring LLMs to assist security analysts – for example, summarising multistep attacks, or even writing firewall rules based on high-level descriptions of a threat.

Also, many SASE platform suppliers emphasise their AI/ML capabilities. For example, Versa Networks touts AI/ML-powered unified SASE that blends SD-WAN and cloud security, using ML to continuously adapt to network conditions and security threats. Similarly, Cato Networks highlights that it leverages AI/ML across its cloud-native SASE service to provide “reliable, accurate network security”, applying advanced data science to threat prevention and smart traffic management.

AI in MNS in 2028 and beyond

The integration of AI in MNS will increasingly enhance operational efficiency and enable more informed decision-making, ensuring that networks are robust and agile enough to adapt to changing demands and traffic patterns. Looking ahead three to five years from now, significant transformation in MNS is expected due to extensive use of AI – traditional, generative and agentic – and automation.

Widespread NOC assistants

The current rapid pace of development suggests that, by 2028, GenAI will have become a mature, trusted assistant in network operations. The experimental and nascent deployments of 2023 to 2024 will give way to robust network AI assistants embedded in MNS workflows.

These assistants will interface through natural language (text or voice) and be integrated with monitoring and ticketing systems. They will be able to answer complex queries about the network, draft change plans, and summarise incidents and problems.

Essentially, if 2023 was the introductory year for network AI assistants, by 2028, they will become a standard capability for NOCs to boost productivity.

The models behind the AI assistants are expected to be more specialised in network engineering and fine-tuned with each provider’s historical data, making them more accurate and context-aware than current tools are.

The best providers will leverage proprietary models – or at least proprietary fine-tuning – that become part of their intellectual property. For example, a provider can use a model trained on years of network event management data, which is exceptionally good at diagnosing telecoms network issues or at assessing the efficacy of network security designs. This will be a differentiator versus others that are using off-the-shelf network AI assistants.

By 2028, agentic AI will likely manifest as automated “Tier 0” responders in NOCs. These are AI agents capable of perceiving network incidents, understanding intent, making autonomous decisions, and executing actions for handling specific tasks and incident types end-to-end without human intervention.

By 2028, it is likely that many service providers will have enabled fully automated remediation for known issues. For example, if a branch SD-WAN router goes offline, the AI agent can perceive the incident, decide on a sequence of fixes – restart a virtual instance, fail over to backup, and so on – and execute them. It will alert a human only if those fail.

Another example could be the detection of a known bug, such as a memory leak in a firewall causing a slowdown. The AI agent, after perceiving the issue, will decide on a temporary configuration workaround or initiate a software patch, and execute these actions.

This goes beyond today’s static scripts by adding autonomous decision-making and action. The agent can verify if the issue truly matches a known pattern, using machine learning, and check if conditions are safe to execute the fix now, using policy – for example, it will reboot after business hours only if it is critical.
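The perceive, decide and execute loop described above can be sketched in a few lines of Python. This is an illustrative outline under stated assumptions, not a description of any provider's agent. All names (`Incident`, `example_policy`, `remediate`) are hypothetical. A dictionary lookup stands in for the machine learning step that matches an incident to a known pattern. The example policy is one possible reading of the business-hours rule: a disruptive fix runs during business hours only if the incident is critical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Incident:
    kind: str                # e.g. "branch_router_offline"
    critical: bool
    in_business_hours: bool


Fix = Callable[[Incident], bool]  # returns True if the fix worked


def example_policy(incident: Incident) -> bool:
    """Assumed policy: act now if critical, or if outside business hours."""
    return incident.critical or not incident.in_business_hours


def remediate(incident: Incident,
              playbook: Dict[str, List[Fix]],
              policy: Callable[[Incident], bool],
              alert_human: Callable[[Incident, str], None]) -> str:
    fixes = playbook.get(incident.kind)  # stand-in for ML pattern matching
    if fixes is None:
        alert_human(incident, "no known pattern")
        return "escalated"
    if not policy(incident):
        return "deferred"                # not safe to act right now
    for fix in fixes:                    # try fixes in the decided order
        if fix(incident):
            return "resolved"
    alert_human(incident, "all fixes failed")  # alert only after fixes fail
    return "escalated"


# Example: restarting fails, failing over to backup succeeds.
alerts: List[str] = []
playbook = {"branch_router_offline": [lambda i: False, lambda i: True]}
incident = Incident("branch_router_offline", critical=True, in_business_hours=True)
outcome = remediate(incident, playbook, example_policy,
                    lambda i, why: alerts.append(why))
```

Keeping the policy check separate from the playbook mirrors the split in the text between recognising a known issue and deciding whether it is safe to act.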

Fully autonomous networks will likely remain out of reach until well after 2028. But we expect that, by 2028, such self-healing actions will be accepted for narrow scopes, as service providers will have gained trust in AI for these repetitive tasks, thanks to long training and previous successful outcomes.

Nevertheless, the complexity of coordinating across domains means humans will still handle high-level decision-making. But for routine faults and performance tweaks, automated agents could become the norm, improving service reliability.

This article is based on an excerpt of Gartner’s AI will transform managed network services in the next three years report, by Gartner senior director analyst Gaspar Valdivia.


This Solar-Powered Smart Sprinkler Keeps My Lawn Watered Without Any Power Cables

Once configured, setup proceeds much like the Aiper and pricier Irrigreen apps: You create a zone, then use the app to define its boundaries. Similar to the aforementioned systems, Oto’s sprinkler is designed for precision watering, firing water in a beam in a single direction instead of a wide spray. That said, Oto’s spray is comparatively narrow, only hitting a single, designated patch instead of producing a two-dimensional curtain of water like Irrigreen’s “water printing” system. You get a nice preview of this as you set the boundaries of your yard.

Like its competitors, Oto lets you set each zone as a spot (for watering a single tree, perhaps), a line (for a flowerbed), or a 2-D area (for a yard). I tested all of these modes but spent most of my time working with area zones, which are the most complex option. When defining an area zone, I found Oto’s system to be virtually identical to that of Irrigreen and Aiper, though ever so slightly slower to respond to commands. Even so, it’s very easy to use: A simple interface lets you drop points around the sprinkler to define the boundaries of the zone. When you’ve made a full circle around the sprinkler, the area is complete.

Once a zone is configured, you can assign it a schedule, with copious options for which days to water (odd days, even days, select days of the week, every day) and a designated start time (though there is no way to tie the time to sundown or sunrise). Each schedule also gets a weekly watering limit (in inches of depth), which you’ll then parcel out over each week’s watering runs. Weather intelligence features let you elect to skip watering if your zip code receives measurable rainfall or if winds are high (both based on internet reports); you can tweak both the amount of rain and the wind speed needed to trigger a skip. The app logs the 20 most recent runs and includes a calendar that details upcoming events.
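The scheduling rules in that paragraph reduce to two small calculations, sketched here in Python. This is an illustration only, not Oto's code. The function names and the threshold values in the example are hypothetical, and splitting the weekly limit evenly across runs is an assumption about how the depth might be divided.

```python
def depth_per_run(weekly_limit_in: float, runs_per_week: int) -> float:
    """Split a weekly watering limit (inches) evenly across scheduled runs."""
    if runs_per_week <= 0:
        raise ValueError("runs_per_week must be positive")
    return weekly_limit_in / runs_per_week


def should_skip(rain_in: float, wind_mph: float,
                rain_threshold_in: float, wind_threshold_mph: float) -> bool:
    """Skip a run if reported rain or wind meets the user-set threshold."""
    return rain_in >= rain_threshold_in or wind_mph >= wind_threshold_mph


# Example: a 1-inch weekly limit spread over four runs is 0.25 inches per run.
per_run = depth_per_run(1.0, 4)
skip_today = should_skip(rain_in=0.3, wind_mph=5.0,
                         rain_threshold_in=0.25, wind_threshold_mph=15.0)
```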

When watering an area, Oto takes a novel approach to covering the lawn, first moving in circular arcs directly around the sprinkler, then slowly increasing in range with each successive swipe. When finished, it does additional “clean-up” runs to hit any areas that the initial watering arcs didn’t reach. The speed is slow enough and the size of the water’s beam is large enough that the resulting coverage is solid. After test runs, I found the yard to be plenty wet across the entire zone, with no dry patches.

As with all sprinklers, changes in water pressure can make for occasional over- or underwatering of areas, but I found this to be a minimal problem when using the Oto. However, when watering at the terminus of Oto’s range, the power needed to throw the water that far can make for a strong splashdown, which may result in some soil erosion or damage to more sensitive plants.

The Oto also has a “play mode” option that lets you use the sprinkler for a watery game of chase or a more random “splash tag” mode, aka “try to avoid getting hit by the water.” Pro tip: It’s impossible not to get hit.

-

Entertainment5 days ago

Entertainment5 days agoConan O’Brien hat tricks as Oscar host

-

Tech1 week ago

Tech1 week agoCould Contact-Tracing Apps Help With the Hantavirus? Not Really

-

Tech1 week ago

Tech1 week agoI Tried the Best Captioning Smart Glasses, and Only One Leads the Pack

-

Fashion5 days ago

Fashion5 days agoItaly’s Zegna Group’s Q1 growth boosted by strong organic performance

-

Sports1 week ago

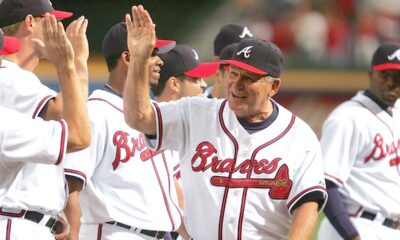

Sports1 week agoBobby Cox, legendary Atlanta Braves manager who led 1995 World Series champions, dead at 84

-

Entertainment1 week ago

Entertainment1 week agoMartin Short: Facing tragedy with joy

-

Entertainment1 week ago

Entertainment1 week agoTom Brady gets back at Kevin Hart during Netflix roast

-

Entertainment1 week ago

Entertainment1 week agoMartha Stewart: How to make an omelet