Tech

A neural blueprint for human-like intelligence in soft robots

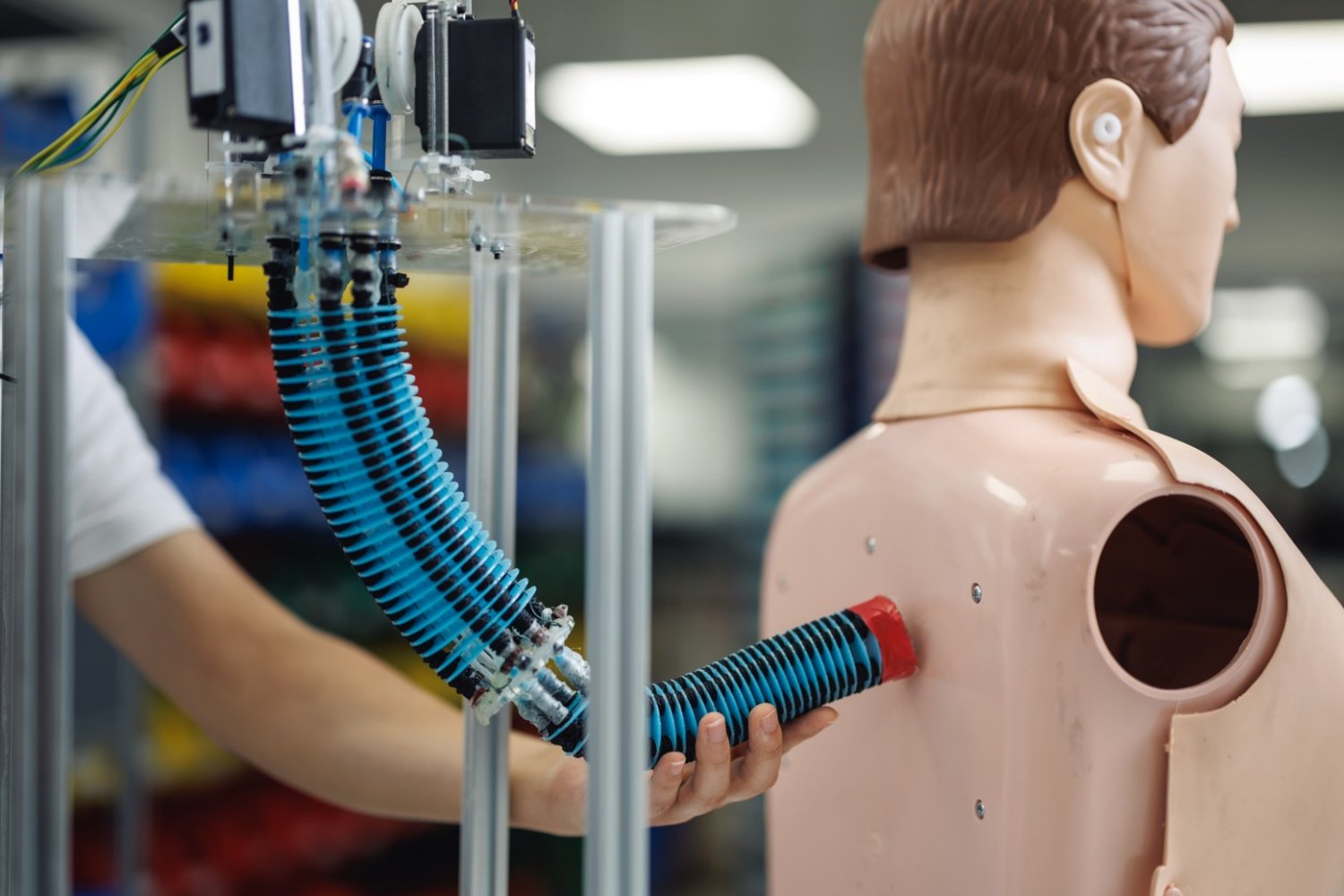

A new artificial intelligence control system enables soft robotic arms to learn a wide repertoire of motions and tasks once, then adjust to new scenarios on the fly, without needing retraining or sacrificing functionality.

This breakthrough brings soft robotics closer to human-like adaptability for real-world applications, such as in assistive robotics, rehabilitation robots, and wearable or medical soft robots, by making them more intelligent, versatile, and safe.

The work was led by the Mens, Manus and Machina (M3S) interdisciplinary research group — a play on the Latin MIT motto “mens et manus,” or “mind and hand,” with the addition of “machina” for “machine” — within the Singapore-MIT Alliance for Research and Technology. Co-leading the project are researchers from the National University of Singapore (NUS), alongside collaborators from MIT and Nanyang Technological University in Singapore (NTU Singapore).

Unlike regular robots that move using rigid motors and joints, soft robots are made from flexible materials such as soft rubber and move using special actuators — components that act like artificial muscles to produce physical motion. While their flexibility makes them ideal for delicate or adaptive tasks, controlling soft robots has always been a challenge because their shape changes in unpredictable ways. Real-world environments are often complicated and full of unexpected disturbances, and even small changes in conditions — like a shift in weight, a gust of wind, or a minor hardware fault — can throw off their movements.

Despite substantial progress in soft robotics, existing approaches often can only achieve one or two of the three capabilities needed for soft robots to operate intelligently in real-world environments: using what they’ve learned from one task to perform a different task, adapting quickly when the situation changes, and guaranteeing that the robot will stay stable and safe while adapting its movements. This lack of adaptability and reliability has been a major barrier to deploying soft robots in real-world applications until now.

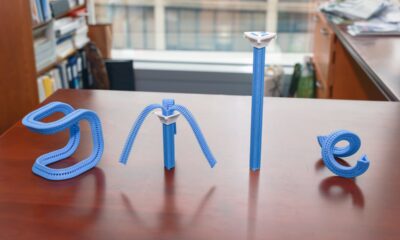

In an open-access study titled “A general soft robotic controller inspired by neuronal structural and plastic synapses that adapts to diverse arms, tasks, and perturbations,” published Jan. 6 in Science Advances, the researchers describe how they developed a new AI control system that allows soft robots to adapt across diverse tasks and disturbances. The study takes inspiration from the way the human brain learns and adapts, and was built on extensive research in learning-based robotic control, embodied intelligence, soft robotics, and meta-learning.

The system uses two complementary sets of “synapses,” connections that adjust how the robot moves, working in tandem. The first set, known as “structural synapses,” is trained offline on a variety of foundational movements, such as bending or extending a soft arm smoothly. These form the robot’s built-in skills and provide a strong, stable foundation. The second set, called “plastic synapses,” continually updates online as the robot operates, fine-tuning the arm’s behavior to respond to what is happening in the moment. A built-in stability measure acts like a safeguard, so even as the robot adjusts during online adaptation, its behavior remains smooth and controlled.
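The division of labor between fixed and adaptive connections can be illustrated with a toy example. The sketch below is not the controller from the paper: it assumes a one-dimensional "arm" whose position changes by `actuator_gain * u` per step, a fixed gain `k_structural` standing in for the offline-trained synapses, an online-updated gain `k_plastic` standing in for the plastic synapses, and a simple clipping bound standing in for the stability safeguard. All names, the update rule, and the numbers are hypothetical.

```python
def clip(value, low, high):
    """Keep value inside [low, high]."""
    return max(low, min(high, value))


def simulate(steps, actuator_gain, plastic,
             k_structural=0.5, lr=0.5, k_plastic_bounds=(-0.4, 1.0)):
    """Drive a toy 1-D plant toward target = 1.0 and return (final_error, k_plastic).

    actuator_gain < 1.0 mimics a weakened or partly failed actuator.
    The bounds keep the total gain in [0.1, 1.5], so for any actuator_gain
    in (0, 1] the error shrinks at every step (|1 - gain * k| < 1).
    """
    target, x = 1.0, 0.0
    k_plastic = 0.0            # starts at zero; only the "structural" gain acts at first
    error = target - x
    for _ in range(steps):
        u = (k_structural + k_plastic) * error   # both sets act together
        x += actuator_gain * u                   # toy plant update
        new_error = target - x
        if plastic:
            # Hypothetical rule: raise the gain while the error keeps its sign,
            # lower it after an overshoot, and always stay inside the bounds.
            k_plastic = clip(k_plastic + lr * error * new_error, *k_plastic_bounds)
        error = new_error
    return error, k_plastic


if __name__ == "__main__":
    # Half of the actuation is lost (gain 0.5 instead of 1.0).
    print("fixed only:   ", simulate(20, 0.5, plastic=False))
    print("with plastic: ", simulate(20, 0.5, plastic=True))
```

In this toy setting the adaptive run ends with a smaller residual error than the fixed run, while the clipped gain never leaves the range in which the loop is guaranteed to converge.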

“Soft robots hold immense potential to take on tasks that conventional machines simply cannot, but true adoption requires control systems that are both highly capable and reliably safe. By combining structural learning with real-time adaptiveness, we’ve created a system that can handle the complexity of soft materials in unpredictable environments,” says MIT Professor Daniela Rus, co-lead principal investigator at M3S, director of the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL), and co-corresponding author of the paper. “It’s a step closer to a future where versatile soft robots can operate safely and intelligently alongside people — in clinics, factories, or everyday lives.”

“This new AI control system is one of the first general soft-robot controllers that can achieve all three key aspects needed for soft robots to be used in society and various industries. It can apply what it learned offline across different tasks, adapt instantly to new conditions, and remain stable throughout — all within one control framework,” says Associate Professor Zhiqiang Tang, first author and co-corresponding author of the paper who was a postdoc at M3S and at NUS when he carried out the research and is now an associate professor at Southeast University in China (SEU China).

The system supports multiple task types, enabling soft robotic arms to execute trajectory tracking, object placement, and whole-body shape regulation within one unified approach. The method also generalizes across different soft-arm platforms, demonstrating cross-platform applicability.

The system was tested and validated on two physical platforms — a cable-driven soft arm and a shape-memory-alloy–actuated soft arm — and delivered impressive results. It achieved a 44–55 percent reduction in tracking error under heavy disturbances; over 92 percent shape accuracy under payload changes, airflow disturbances, and actuator failures; and stable performance even when up to half of the actuators failed.

“This work redefines what’s possible in soft robotics. We’ve shifted the paradigm from task-specific tuning and capabilities toward a truly generalizable framework with human-like intelligence. It is a breakthrough that opens the door to scalable, intelligent soft machines capable of operating in real-world environments,” says Professor Cecilia Laschi, co-corresponding author and principal investigator at M3S, Provost’s Chair Professor in the NUS Department of Mechanical Engineering at the College of Design and Engineering, and director of the NUS Advanced Robotics Centre.

This breakthrough opens doors for more robust soft robotic systems in manufacturing, logistics, inspection, and medical robotics that do not need constant reprogramming, reducing downtime and costs. In health care, assistive and rehabilitation devices can automatically tailor their movements to a patient’s changing strength or posture, while wearable or medical soft robots can respond more sensitively to individual needs, improving safety and patient outcomes.

The researchers plan to extend this technology to robotic systems or components that can operate at higher speeds and in more complex environments, with potential applications in assistive robotics, medical devices, and industrial soft manipulators, as well as integration into real-world autonomous systems.

The research conducted at SMART was supported by the National Research Foundation Singapore under its Campus for Research Excellence and Technological Enterprise program.

This Backpack From Topo Designs Will Happily Tag Along to Europe, Down a Dusty Trail, or to Starbucks

As we get out of the house, the gear-obsessed WIRED Reviews team is writing about our favorite bags and EDCs. Today, reviewer Martin Cizmar raves about his Topo Designs backpack. You can also check out other Bag Check stories where WIRED writers share their carryall of choice.

Topo Designs may just make the best bags in the world. The Denver-based gorpcore brand sells gear that looks cool, lasts forever, and has every feature a sensible person desires in a bag without making the product feel overbuilt. If I ever win the lottery, I won’t tell anyone, but there will be signs—like me hauling groceries from Trader Joe’s in two Mountain Gear bags. (I currently use blue polypropylene Ikea bags and shop at Aldi.)

In March, I took a spring break trip to Ireland and Scotland with a carry-on-sized roller bag and the Topo Designs Rover Trail pack as my personal item. I am frequently testing new bags, and I didn’t think much about the decision to commit to the Rover for a week. I quickly learned that you get to know a bag pretty well when you take it on seven flights and stay at eight different hotels in 10 days. By the time I landed back home, I was fully convinced the Rover is the best backpack I have ever used.

Like the six or seven other models of Topo Designs bags I’ve tested—and maybe more extensively than any of the others—the Rover manages to artfully incorporate all the thoughtful little features I appreciated in other backpacks without even a hint of showiness.

At the top of the bag, there’s a zipped compartment that flips open to reveal the rucksack-style opening, which closes with a drawstring. This is where I like to put my keys, any important paperwork I may have on me, and sometimes my wallet. Typically, I find myself double- and triple-checking the zipper to make sure nothing is falling out. No need with the Rover, because inside that zipped compartment, there’s also a clip for keys and an additional zipped mesh sleeve. This feature lets you double-bag anything you don’t want to risk falling out—in my case, passports for myself and my daughter. When I got through the TSA line at the airport, I clipped in my car keys for the week, zipped the passports into the mesh sleeve, and never worried about losing either.

Photograph: Martin Cizmar

A Fundamental Principle of Aeronautical Engineering Has Been Overturned

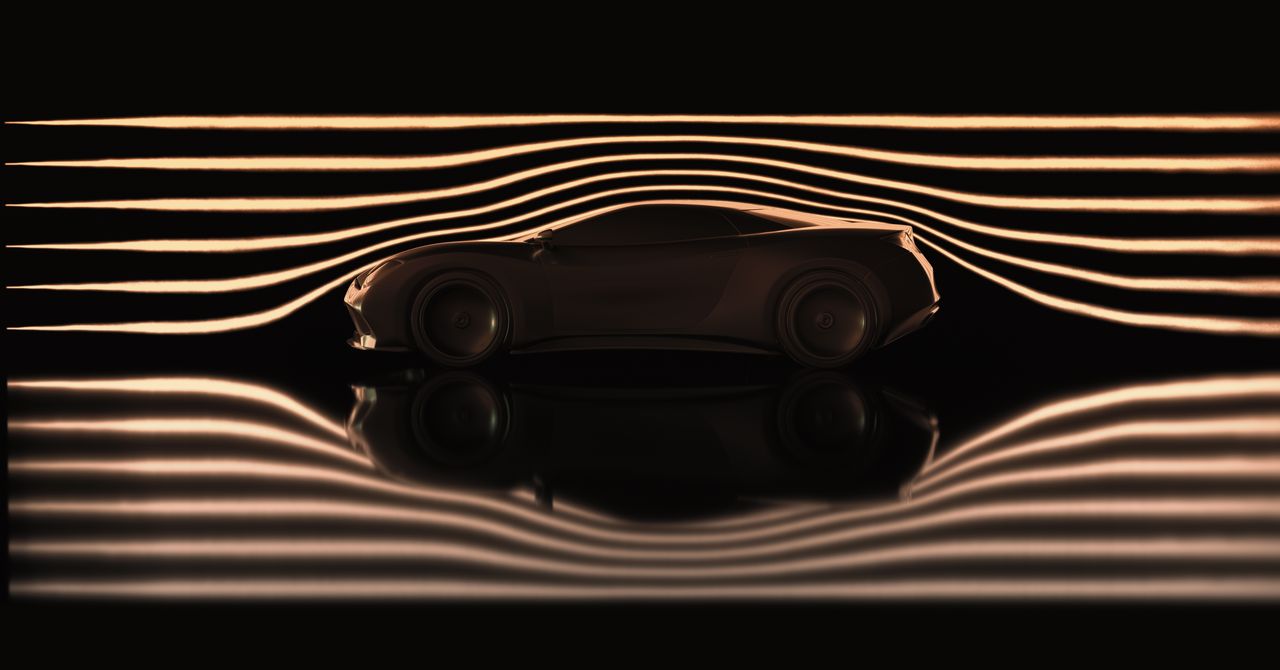

Aerodynamic drag is a major barrier for high-speed airplanes, automobiles, and bullet trains: a design with less aerodynamic drag lets the vehicle move at higher speeds with less energy.

When an aircraft or car body moves at high speed, a thin layer of air called the “boundary layer” forms on its surface. This boundary layer has two states: laminar flow, in which air flows in an orderly fashion, and turbulent flow, in which the air moves chaotically.

The longer the air stays in the low-friction laminar state, the smaller the air resistance becomes, but as the airspeed increases, the flow transitions to turbulence. The key to reducing aerodynamic drag is delaying this transition.

For more than 80 years, the principle of “the surface of an object must be smooth” has been the basic premise of aeronautical engineering throughout the world in order to suppress the transition to turbulence and reduce aerodynamic drag. This premise was based on the results of a 1940 study by Ichiro Tani, a Japanese aerodynamicist who quantitatively demonstrated the relationship between “surface roughness” (an indicator of the state of the machined surface) and turbulent transition, arguing that surface roughness, which was unavoidable with the manufacturing technology of the time, prevented laminar flow from being realized.

However, in 1989 Tani reinterpreted the experimental data on rough-surface pipes obtained by fluid engineer Johann Nikuradse in the 1930s, offering a new perspective that “roughness may not necessarily only promote turbulent transition and increase fluid resistance.” Building on this idea, a research group led by Yasuaki Kohama of Tohoku University experimentally demonstrated in the 1990s that fibrous rough surfaces, which have fine fibrous irregularities, can delay transition under certain conditions.

The same Tohoku University research team recently announced a discovery that significantly advances this trend. Aiko Yakino, associate professor at Tohoku University’s Institute of Fluid Science, and her research group were the first in the world to demonstrate that aerodynamic drag can be reduced by up to 43.6 percent simply by applying distributed micro-roughness (DMR), a surface roughness so fine and irregular that it cannot be distinguished by the naked eye.

This technology is fundamentally different from the “riblet (shark skin) process,” a well-known aerodynamic drag reduction technology. The riblet process mimics the fine longitudinal grooves in shark skin: by carving grooves approximately 0.1 mm wide along the direction of airflow, it aligns the vortices that form near the wall in turbulent regions of the flow. DMR, on the other hand, delays the switch from laminar to turbulent flow by means of random and minute irregularities. The two approaches act on different flow zones and rely on completely different mechanisms.

Precise Measurement in a Wind Tunnel Without Support Bars

A key factor in this achievement was a new wind tunnel method. Conventional wind tunnel experiments had a structural limitation: the support rods and wires needed to hold the model disrupted the airflow, masking the minute changes in air resistance caused by micro-scale roughness.

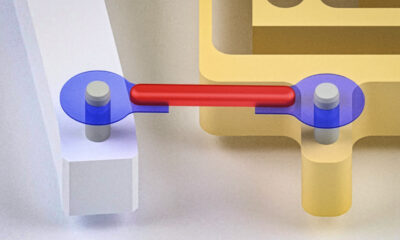

The world’s largest magnetic suspension and balance system, the 1-meter MSBS (1m-MSBS) owned by the Institute of Fluid Science, Tohoku University, has fundamentally solved this problem. This device uses electromagnetic force to levitate a streamlined model approximately 1.07 m in length inside a wind tunnel without contact. Because it uses no support rods or wires, it eliminates support interference with the airflow around the model.

Yakino and her team precisely measured the total drag coefficient on smooth and DMR-coated surfaces over a wide range of Reynolds numbers (the ratio of inertial to viscous forces acting on the fluid), from Re = 0.35 × 10⁶ to 3.6 × 10⁶.
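To get a feel for what that Reynolds number range means, the definition Re = U·L/ν can be turned into a short calculation. This is only a rough sketch under two assumptions that the article does not state: that the reference length L is the 1.07 m model length, and that the kinematic viscosity of air is about 1.5 × 10⁻⁵ m²/s (a typical room-temperature value). The function and constant names are illustrative.

```python
NU_AIR = 1.5e-5       # m^2/s, assumed kinematic viscosity of air near 20 °C
MODEL_LENGTH = 1.07   # m, model length from the article, assumed as reference length


def reynolds_number(speed, length=MODEL_LENGTH, nu=NU_AIR):
    """Re = U * L / nu: ratio of inertial to viscous forces."""
    return speed * length / nu


def speed_for_reynolds(re, length=MODEL_LENGTH, nu=NU_AIR):
    """Invert the definition to find the airspeed that gives a target Re."""
    return re * nu / length


if __name__ == "__main__":
    for re in (0.35e6, 3.6e6):
        print(f"Re = {re:.2e} -> airspeed of about {speed_for_reynolds(re):.1f} m/s")
```

Under these assumptions, the tested range corresponds to airspeeds from roughly 5 m/s to roughly 50 m/s.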

Some of Dyson’s Top Vacuums Are on Sale for Memorial Day

Shopping for a Dyson vacuum is an experience. There are many models to navigate and serious price tags on most of them. As someone who tests vacuums for a living, I have to admit that a Dyson blows most other vacuums away. There are a few cheaper models I’ll still grab (check out my full guide to cordless and robot vacuums for more recommendations), but if you’re dreaming of a Dyson, this weekend is a great time to buy.

Several Dyson models I love are on sale for the long weekend, including Dyson’s newest robot vacuum, the PencilVac that I can’t stop using, overall favorites like the V15 Detect and Gen5Detect, and more models our team has loved using. Read on for every on-sale Dyson I’d buy this weekend.

Best Dyson Vacuums on Sale for Memorial Day

The Best Dyson for the Price

If you’re looking for the best features for the best price, I already recommend the Dyson V15 Detect when it’s not on sale, making this an even better time to buy. You’ll get both a Fluffy Optic cleaner head and a Digital Motorbar cleaner head to use for hard floors, carpet, or rugs, trigger control, and details about the particles you suck up while you vacuum. It’s lightweight and easy to use anywhere in the house, and the hour-long battery life should be plenty for a whole-home clean.

A More Powerful Dyson

Dyson’s more powerful stick vacuum is the Gen5Detect, which is a great option if you have pets. It has a faster motor with more suction power than the V15 Detect to suck up more pet hair (it’s our top vacuum for pet hair for a reason), and a HEPA filter keeps allergens contained inside the vacuum instead of releasing them back into the air. It also comes with a true power button, so you don’t have to hold the trigger the entire time you use it. Similar to the V15 Detect, it comes with both a Digital Motorbar cleaner head and a Fluffy Optic cleaner head to use on carpet and hard floors, respectively. You’ll also get two more attachments, plus a built-in dusting and crevice tool (it’s nice not to have to wonder where this attachment is!). It’s an expensive vacuum, but well worth the investment when it’s on sale.

If You Only Have Hard Floors

I shouldn’t like the PencilVac so much, but I find myself reaching for it often, and I think it’s well worth the price when it’s on sale. Part of what makes it so easy to grab compared to my other stick vacuums is how easy it is to store and keep charged: the freestanding charging base lets it stand wherever I like in my home as long as there’s an outlet nearby. The PencilVac has two versions, the Fluffy and Fluffycones, with the latter using fluffy cone-shaped rollers to best collect debris. It is limited to hard floors and has a short battery life, but I love how maneuverable and lightweight this vacuum is. Its usual price is high for what it can do, and even on sale it’s not what I would call cheap, but it’s a great, quick daily vacuum.

Dyson’s Latest Robot Vac

Dyson’s newest robot vacuum, the Spot+Scrub Ai, is its first that doubles as both a vacuum and a mop. It has a large base station that reminds me of Dyson’s vacuums, since the dry debris canister is clear and rounded like the ones you’d see attached to a Dyson stick vacuum or one of its upright models. It does a good job mopping and vacuuming, can learn multiple floors, and has better navigation than the older Dyson 360 Vis Nav. Still, the navigation isn’t perfect, since the camera sits below the top of the vacuum and doesn’t always see low-profile furniture, which it’ll bump into. If you don’t have a ton of low furniture (or tons of IKEA pieces, as I do), this vacuum could be just perfect for you.

A Stick Vac and Mop

If you want a vacuum that doubles as a mop, look no further than this variation of the V15 Detect that’s also on sale for the holiday weekend. The V15s Detect Submarine comes with the Submarine wet roller head that transforms it from a regular Dyson vacuum (that still comes with both the Fluffy Optic cleaner head and Digital Motorbar cleaner head for you to use on hard floors and carpet) into a wet roller mop. You can’t buy a regular V15 Detect and add this attachment on; this V15s is made to work with this Submarine head. You’ll fill the small reservoir on the roller head with water and can start mopping away, but you will have to rinse the mop head afterwards by hand, which is a little gross.

A Handheld-Only Dyson

If you’re not looking to spend a ton but want a Dyson that’s super portable and great for stairs, cars, and even boats, the Dyson Car+Boat is made for that. It’s in the name, after all. This handheld-only vacuum packs solid power and has a great battery life for a handheld vacuum. It uses a trigger-style control like the V15 Detect, which I actually find ideal for cleaning compact spaces like stairs and cars so that you’re not fumbling to switch it off as you move around the car or to the next set of stairs. It’s an affordable way to get into the Dyson ecosystem, especially since it’s on sale.
