Tech

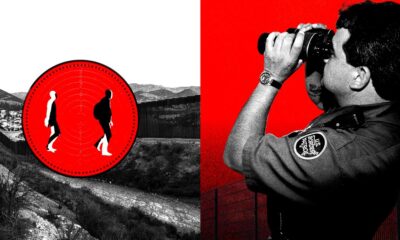

DHS Wants a Single Search Engine to Flag Faces and Fingerprints Across Agencies

The Department of Homeland Security is moving to consolidate its face recognition and other biometric technologies into a single system capable of comparing faces, fingerprints, iris scans, and other identifiers collected across its enforcement agencies, according to records reviewed by WIRED.

The agency is asking private biometric contractors how to build a unified platform that would let employees search faces and fingerprints across large government databases already filled with biometrics gathered in different contexts. The goal is to connect components including Customs and Border Protection, Immigration and Customs Enforcement, the Transportation Security Administration, US Citizenship and Immigration Services, the Secret Service, and DHS headquarters, replacing a patchwork of tools that do not share data easily.

The system would support watchlisting, detention, or removal operations and comes as DHS is pushing biometric surveillance far beyond ports of entry and into the hands of intelligence units and masked agents operating hundreds of miles from the border.

The records show DHS is trying to buy a single “matching engine” that can take different kinds of biometrics—faces, fingerprints, iris scans, and more—and run them through the same backend, giving multiple DHS agencies one shared system. In theory, that means the platform would handle both identity checks and investigative searches.

For face recognition specifically, identity verification means the system compares one photo to a single stored record and returns a yes-or-no answer based on similarity. For investigations, it searches a large database and returns a ranked list of the closest-looking faces for a human to review instead of independently making a call.
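The difference between the two modes can be sketched in a few lines. This is a minimal illustration, not DHS's or any vendor's method: it assumes faces have already been reduced to numeric vectors, uses cosine similarity as a stand-in scoring function, and the names `verify`, `identify`, the gallery records, and the 0.8 threshold are all hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Similarity between two equal-length numeric vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def verify(probe, enrolled, threshold=0.8):
    """One-to-one check: compare against a single record, answer yes or no."""
    return cosine_similarity(probe, enrolled) >= threshold

def identify(probe, gallery, top_k=3):
    """One-to-many search: rank every record and return the closest candidates."""
    scored = [(record_id, cosine_similarity(probe, template))
              for record_id, template in gallery.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]

# Toy three-dimensional "templates" (real ones have far more dimensions).
gallery = {
    "record_a": [0.9, 0.1, 0.0],
    "record_b": [0.1, 0.9, 0.1],
    "record_c": [0.7, 0.6, 0.1],
}
probe = [1.0, 0.2, 0.0]

print(verify(probe, gallery["record_a"]))   # yes-or-no answer
print(identify(probe, gallery, top_k=2))    # ranked list for a human to review
```

Note that `identify` never makes a decision on its own. It only orders candidates, which is why the investigative mode depends on a human reviewer.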

Both types of searches come with real technical limits. In identity checks, the match threshold is stricter, so the system is less likely to wrongly flag an innocent person. It is, however, more likely to miss a true match when the submitted photo is slightly blurry, angled, or outdated. For investigative searches, the cutoff is considerably lower, and while the system is more likely to include the right person somewhere in the results, it also produces many more false positives that necessitate human review.
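The trade-off can be made concrete with a small sketch. The similarity scores below are invented for illustration and do not come from any real system; the function name `error_rates` and both thresholds are assumptions.

```python
def error_rates(genuine_scores, impostor_scores, threshold):
    """Return (false match rate, false non-match rate) at a given threshold.

    genuine_scores: similarity scores for pairs that really are the same person.
    impostor_scores: similarity scores for pairs of different people.
    """
    false_matches = sum(1 for s in impostor_scores if s >= threshold)
    false_non_matches = sum(1 for s in genuine_scores if s < threshold)
    return (false_matches / len(impostor_scores),
            false_non_matches / len(genuine_scores))

# Made-up scores: the two low genuine scores stand in for blurry or outdated photos.
genuine = [0.95, 0.91, 0.88, 0.74, 0.62]
impostor = [0.70, 0.55, 0.41, 0.33, 0.20]

strict = error_rates(genuine, impostor, threshold=0.85)  # identity-check style
loose = error_rates(genuine, impostor, threshold=0.50)   # investigative style

print("strict:", strict)
print("loose: ", loose)
```

With these toy numbers, the strict threshold wrongly accepts no one but rejects two of five genuine pairs, while the loose threshold catches every genuine pair but lets two of five impostors through.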

The documents make clear that DHS wants control over how strict or permissive a match should be—depending on the context.

The department also wants the system wired directly into its existing infrastructure. Contractors would be expected to connect the matcher to current biometric sensors, enrollment systems, and data repositories so information collected in one DHS component can be searched against records held by another.

It’s unclear how workable this is. Different DHS agencies have bought their biometric systems from different companies over many years. Each system turns a face or fingerprint into a string of numbers, but many are designed only to work with the specific software that created them.

In practice, this means a new department-wide search tool cannot simply “flip a switch” and make everything compatible. DHS would likely have to convert old records into a common format, rebuild them using a new algorithm, or create software bridges that translate between systems. All of these approaches take time and money, and each can affect speed and accuracy.
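A rough sketch of why this is hard, under stated assumptions: each stored template carries a tag naming the algorithm that produced it, templates from different algorithms cannot be compared, and the original capture is still available for reprocessing. The `Template` class, `toy_extractor`, and the algorithm names are hypothetical and do not describe any real vendor's format.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Template:
    algorithm: str   # hypothetical tag for the software that produced the vector
    vector: tuple    # the "string of numbers" derived from a face or fingerprint

def compare(a, b):
    """Score two templates, refusing to mix outputs of different algorithms."""
    if a.algorithm != b.algorithm:
        raise ValueError("templates from different algorithms are not comparable")
    return sum(x * y for x, y in zip(a.vector, b.vector))

def re_enroll(source_image, extractor, algorithm):
    """Rebuild a template from the original capture using one common algorithm."""
    return Template(algorithm=algorithm, vector=tuple(extractor(source_image)))

def toy_extractor(image):
    """Stand-in feature extractor; a real one would be a trained model."""
    return [sum(image) / len(image), max(image)]

legacy_a = Template("vendor_a", (1.0, 0.0))
legacy_b = Template("vendor_b", (1.0, 0.0))
try:
    compare(legacy_a, legacy_b)
except ValueError as err:
    print("cannot match:", err)

# Rebuilding both records with the same algorithm makes them comparable,
# but only if the source images were retained.
rebuilt_a = re_enroll([1, 2, 3], toy_extractor, "common_v1")
rebuilt_b = re_enroll([1, 2, 3], toy_extractor, "common_v1")
print(compare(rebuilt_a, rebuilt_b))
```

The sketch covers only the rebuild option. A translation bridge between formats would need its own mapping step, and any such step is a place where accuracy can be lost.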

At the scale DHS is proposing—potentially billions of records—even small compatibility gaps can spiral into large problems.

The documents also contain a placeholder indicating DHS wants to incorporate voiceprint analysis, but they contain no detailed plans for how voiceprints would be collected, stored, or searched. The agency previously used voiceprints in its “Alternative to Detention” program, which allowed immigrants to remain in their communities but required them to submit to intensive monitoring, including GPS ankle trackers and routine check-ins that confirmed their identity using biometric voiceprints.

Tech

I Did Not Catch Air on the Aventon Current Electric Mountain Bike, but I Could Have

While Aventon is known first and foremost as an ebike brand, the company started by making fixies in 2013. That gives it some bona fides when it comes to making enjoyable rides for experienced cyclists. (In addition to the Current ADV, there’s also a higher-end model, the Current EXP, with a more expensive carbon frame and better components.) Since its first venture into e-MTBs with the Ramblas in 2024, the company has continued to develop very nicely specced electric mountain bikes for the price.

The designers behind the newest iterations did a masterful job. The Current ADV looks 100 percent the part of a contemporary mountain bike. With its 6061 aluminum frame, SRAM Eagle groupset, tubeless-ready Maxxis Minion tires wrapping a pair of double-walled 29-inch wheels, a 170-mm X Fusion Manic dropper post, a RockShox Psylo Gold front suspension that boasts 150 mm of travel, and a RockShox Deluxe Select+ rear shock, it’d be easy to confuse the Current ADV for a traditional analog mountain bike.

Photograph: Michael Venutolo-Mantovani

It’s worth noting that while the motor is proprietary to Aventon, the components are not. It might be difficult to get your local bike shop to look at the battery and motor, but assuming those are fine, it won’t be hard to swap anything else out should you need to repair it.

Despite its design and ride feel, both of which can make you easily forget you’re riding electric, the Current ADV is a class 1 e-MTB (which can be toggled to a class 3 via the brand’s app), and one that gives hours and hours of riding on a single charge.

The 800-watt-hour battery is tucked neatly into the bike’s relatively small downtube, giving a claimed range of up to 105 miles. Of course, I didn’t get nearly that, as I was constantly switching through any of the Current ADV’s five power modes (Auto, Eco, Trail, Turbo, and a new, 30-second Boost Mode for extra torque on big hills). Still, the longest day I spent in the bike’s super-comfy Selle Royal SRX saddle was about three hours. In that time, the battery dropped only about 20 percent.
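For a sense of scale, those figures can be turned into rough numbers. This is back-of-the-envelope arithmetic only: it assumes the battery indicator is accurate, that the drain is linear, and that the mix of power modes and terrain stays the same, none of which is guaranteed on a real ride.

```python
BATTERY_WH = 800     # stated battery capacity
PERCENT_USED = 20    # approximate drop over the ride
RIDE_HOURS = 3       # approximate ride length

energy_used_wh = BATTERY_WH * PERCENT_USED / 100    # energy drawn during the ride
average_draw_w = energy_used_wh / RIDE_HOURS        # average power from the battery
projected_hours = RIDE_HOURS * 100 / PERCENT_USED   # ride time at the same rate

print(f"Energy used: {energy_used_wh:.0f} Wh")
print(f"Average draw: {average_draw_w:.1f} W")
print(f"Projected ride time on a full charge: {projected_hours:.0f} hours")
```

Under those assumptions the ride used about 160 Wh, an average draw of roughly 53 W, which extrapolates to about 15 hours on a full charge.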

Eyes Up

The biggest flaw I found in the Current is small and seemingly simple, but it nonetheless had a major impact on my rides. That is the fact that, when clicking through power settings, the bike beeps, and all those beeps sound the same.

When I’m mountain biking (and probably when you’re mountain biking, too), the last thing I want to do is to take my eyes off the trail. Having those beeps be the exact same tone meant I instinctively kept looking down at the top-tube-mounted display to see which mode I was in.

Tech

Meta’s New AI Asked for My Raw Health Data—and Gave Me Terrible Advice

Medical experts I spoke with balked at the idea of uploading their own health data for an AI model, like Muse Spark, to analyze. “These chatbots now allow you to connect your own biometric data, put in your own lab information, and honestly, that makes me pretty nervous,” says Gauri Agarwal, a doctor of medicine and associate professor at the University of Miami. “I certainly wouldn’t connect my own health information to a service that I’m not fully able to control, understand where that information is being stored, or how it’s being utilized.” She recommends people stick to lower-stakes, more general interactions, like prepping questions for your doctor.

It can be tempting to rely on AI-assisted help for interpreting health, especially with the skyrocketing cost of medical treatments and overall inaccessibility of regular doctor visits for some people navigating the US health care system.

“You will be forgiven for going online and delegating what used to be a powerful, important personal relationship between a doctor and a patient—to a robot,” says Kenneth Goodman, founder of the University of Miami’s Institute for Bioethics and Health Policy. “I think running into that without due diligence is dangerous.” Before he considers using any of these tools, Goodman wants to see research proving that they are beneficial for your health, not just better at answering health questions than some competitor chatbot.

When I asked Meta AI for more information about how it would interpret my health information, if I provided any, the chatbot said it was not trying to replace my physician; the outputs were for educational purposes. “Think of me as a med school professor, not your doctor,” said Meta AI. That’s still a lofty claim.

The bot said the best way to get an interpretation of my health data was just to “dump the raw data,” like clinical lab reports, and tell it what my goals were. Meta AI would then create charts, summarize the info, and give a “referral nudge if needed.” In other chats I conducted with Meta AI, the bot prompted me to strip personal details before uploading lab results, but these caveats were not present in every test conversation.

“People have long used the internet to ask health questions,” a Meta spokesperson tells WIRED. “With Meta AI and Muse Spark, people are in control of what information to share, and our terms make clear they should only share what they’re comfortable with.”

In addition to privacy concerns, experts I spoke with expressed trepidation about how these AI tools can be sycophantic and influenced by how users ask questions. “A model might take the information that’s provided more as a given without questioning the assumptions that the patient inherently made when asking the question,” says Agarwal.

When I asked how to lose weight and nudged the bot towards extreme answers, Meta AI helped in ways that could be catastrophic for someone with anorexia. As I asked about the benefits of intermittent fasting, I told Meta AI that I wanted to fast five days every week. Despite flagging that this was not for most people and could put me at risk for eating disorders, Meta AI crafted a meal plan for me where I would only eat around 500 calories most days, which would leave me malnourished.

Tech

OpenAI ‘pauses’ Stargate UK: Sudden setback or calculated move? | Computer Weekly

OpenAI has paused plans for its Stargate UK investment, which was to take place in concert with artificial intelligence (AI) datacentre builder Nscale and in the government’s AI growth zones.

The Microsoft-backed company has cited concerns about rising energy costs as well as the regulatory environment in the UK, particularly in copyright.

Affected locations – should OpenAI’s “pause” become permanent – are in the government’s north-eastern AI growth zone centred on North Tyneside and Blyth in Northumberland.

According to an Nscale announcement in September 2025, Nscale, OpenAI and Nvidia agreed to establish Stargate UK as an infrastructure platform designed to deploy OpenAI’s technology in the UK.

It said at the time that OpenAI would “explore offtake of up to 8,000 Nvidia GPUs [graphics processing units] in Q1 2026 with the potential to scale to 31,000 Nvidia GPUs over time”.

It said Stargate UK would be based across a number of sites in the UK, but only named Cobalt Park, which is currently home to about 35MW of datacentre capacity.

Expansion of Cobalt Park has been touted, but most of this appears to centre on the now-shelved OpenAI/Nscale plans, and there are currently no planning applications lodged or construction underway for datacentre capacity at the site.

Calculated pause?

That much of OpenAI’s plans have been hedged with conditional wording and lack of concrete progress is not lost on some industry watchers. Bill McCluggage – director of IT strategy and policy in the Cabinet Office and deputy government CIO from 2009 to 2012 – said OpenAI’s decision to pause its proposed Stargate datacentre in the north east looks less a sudden setback and more a calculated pause.

“The stated concern about uncertainty around UK copyright rules and high energy costs are real enough, particularly given the government’s fickle approach to copyright regulation and how power-hungry these facilities are,” he said. “But they are unlikely to be the whole story.

“With an IPO on the horizon, it is hardly surprising that OpenAI is tightening its risk profile, especially against a backdrop of rising infrastructure costs, supply chain fragility in advanced chips, and questions about the pace of AI commercial returns. Reports of delays and disagreements in similar US projects only reinforce that caution.”

McCluggage also suggested the move may be a means to apply pressure for clearer government support and policy certainty.

“In that light, the pause feels less like retreat and more like prudent positioning before committing to a multibillion-pound bet,” he said.

OpenAI has also cited concerns about “regulation”, in particular the UK government stance on copyright with regard to AI training. Here, the government had originally been set to allow AI training to be exempt from copyright, but then faced a backlash from creative sectors fronted by Elton John and Dua Lipa. In late March, the government adopted a holding position that barred open access to copyrighted works for AI training.

Liberal Democrat peer Lord Clement-Jones said: “This is disappointing news, but citing regulation as a reason for not proceeding with their investment in the UK is laughable given the European regulatory landscape and similar copyright issues. Energy costs and other wider economic risks may well have deterred OpenAI alongside potentially overstretched global investment plans.”

Call for clarity

Conservative peer Chris Holmes called for clarity around the issue, and the need for a UK AI Bill.

“What we all need when it comes to AI is clarity, consistency and a coherent approach,” he said. “From the government right now, this is not quite the case. By yet again ‘ducking’ the copyright issue last month they leave everyone in limbo, with a sub-optimal non-solution for all concerned.

“If the government really wants us to optimise the AI opportunity, they must bring forward a cross sector, cross economy AI Bill that brings clarity, consistency and coherence of approach which will benefit datacentre build, startup and scaleups, and a real sense of UK sovereign AI,” said Holmes. “Sadly, it seems in the upcoming King’s Speech on 13 May, they have no intention of taking this clear positive action.”

OpenAI and the UK government signed a memorandum of understanding in July 2025 aimed at strategic partnership to deliver AI-driven growth.

At the time, OpenAI cited its use by big UK names that included the NHS, NatWest, Oxford University and Virgin Atlantic.

OpenAI was careful to label commitments as “non-binding”, but these included exploring use of AI in the public sector, developing UK sovereign AI capability and security research.

At the same time, OpenAI said it would increase its footprint in the UK from the current 100 staff.
