Tech

SuperMicro takes on server leaders as AMD pushes on-premise AI | Computer Weekly

Market data from analyst IDC has shown that SuperMicro has leapfrogged established server makers Lenovo and HPE as the second-largest PC server maker behind Dell.

SuperMicro experienced growth of almost 134% for the fourth quarter of 2025 with revenue of $11.7bn, which means it accounts for over 9% of the global server market. Dell was ahead with 10% market share and revenue of $12.6bn, while Chinese manufacturer IEIT Systems took the third spot, with revenue of $5.2bn and a 4% market share ahead of Lenovo, which posted revenue of $5.1bn, and HPE ($3.9bn).
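As a rough cross-check, the revenue and share figures quoted above can be used to back out the overall market size they imply. The sketch below is a back-of-envelope calculation rather than IDC data: the shares are rounded in the article (SuperMicro's is given only as "over 9%"), so the results are approximations, and the function name `implied_market` is purely illustrative.

```python
# Revenue (US$bn) and rounded market share as quoted in the article for Q4 2025.
# Assumption: shares are treated as exact, although the article rounds them.
quoted = {
    "Dell": (12.6, 0.10),
    "SuperMicro": (11.7, 0.09),  # article says "over 9%", so 9% is a floor
    "IEIT Systems": (5.2, 0.04),
}


def implied_market(revenue_bn: float, share: float) -> float:
    """Total market size (US$bn) implied by one vendor's revenue and share."""
    return revenue_bn / share


implied = {name: implied_market(rev, share) for name, (rev, share) in quoted.items()}

for name, total in implied.items():
    print(f"{name}: implied market of about ${total:.0f}bn")
```

The three estimates land between roughly $126bn and $130bn, which suggests the quoted revenues and shares are consistent with one another once rounding is taken into account.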

“The race for AI [artificial intelligence] adoption is settling the market pace, and with companies starving for infrastructure looking not only at GPUs [graphics processing units], but also consuming more CPUs [central processing units] among other components in order to feed their needs, we are going to see more price pressures, and that may impact on market dynamics with less units but higher average selling prices going forward,” said Juan Seminara, research director of Worldwide Enterprise Infrastructure Trackers at IDC.

IDC noted that volatile, rising prices for certain components such as GPUs, dynamic random access memory (DRAM) and solid state drives (SSDs) have led some companies to try to lock in prices in advance while the industry adjusts to the new reality. It predicted that the impact of this price volatility could hit harder during 2026 as demand keeps outpacing service capacity in the near term.

Besides Dell, the established server makers seem to be losing ground in the server market. But they appear to be looking at a new market opportunity being pushed by chipmaker AMD, which is the deployment of on-premise PC servers optimised to run agentic AI.

In a bid to entice IT buyers away from cloud-based AI hardware, AMD has unveiled what it sees as a new category of PC called Agent Computers. In a post on the AMD website, the company described how to run OpenClaw, the open source AI agent, locally on AMD Ryzen AI Max+ processors and Radeon GPUs using a Windows 11 PC with the Windows Subsystem for Linux (WSL).

AMD said the PC system configured with 128GB unified memory is capable of running “cloud-quality AI agent workloads efficiently” using OpenClaw. According to its own benchmark data, with the Qwen 3.5 35B A3B model, the system delivers around 45 tokens per second and processes 10,000 input tokens in about 19.5 seconds. AMD said the configuration supports a maximum context window of 260,000 tokens, and can run up to six agents concurrently, which it said means it is able to deliver scalable local AI experimentation while maintaining strong responsiveness on consumer hardware.
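To put AMD's benchmark numbers in context, the quoted figures can be turned into a prompt-processing rate and a rough end-to-end response time. This is a minimal sketch built only on the numbers above. It assumes prompt processing scales linearly with input length and that generation runs at a steady 45 tokens per second, neither of which AMD states. The 450-token answer length and the function names are hypothetical.

```python
# Figures quoted from AMD's own benchmark (Qwen 3.5 35B A3B model).
GENERATION_TOKENS_PER_S = 45   # output speed
PROMPT_TOKENS = 10_000         # input size used in the benchmark
PROMPT_SECONDS = 19.5          # time to process that input


def prompt_rate(tokens: int, seconds: float) -> float:
    """Input tokens processed per second."""
    return tokens / seconds


def response_seconds(prompt_tokens: int, output_tokens: int,
                     prefill_rate: float, gen_rate: float) -> float:
    """Estimated wall-clock time: read the prompt, then generate the answer.

    Assumes both phases run at constant rates (a simplification).
    """
    return prompt_tokens / prefill_rate + output_tokens / gen_rate


prefill = prompt_rate(PROMPT_TOKENS, PROMPT_SECONDS)

# Hypothetical request: the 10,000-token benchmark prompt plus a 450-token answer.
total = response_seconds(PROMPT_TOKENS, 450, prefill, GENERATION_TOKENS_PER_S)

print(f"Prompt processing: about {prefill:.0f} tokens/s")
print(f"Estimated response time: about {total:.1f} s")
```

Under these assumptions, a single agent would spend roughly twice as long reading a 10,000-token prompt as it would writing a 450-token reply, so input length rather than output length dominates the wait for long-context tasks.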

AMD sees such a system running autonomously rather like the pre-cloud era branch office servers, handling tasks sent by users through a browser user interface on another Windows PC, or via Slack or WhatsApp.

PC makers that have “agent-ready” PCs include HP, Lenovo and Asus. The IDC figures show that revenue for servers with an embedded GPU in the fourth quarter of 2025 grew 59.1% year-over-year, representing more than half of the total server market revenue.

The AMD Ryzen AI Max+ has an integrated GPU, and is currently one of the processor options for PCs certified as Copilot+ devices. While these devices are either laptops or desktop PCs with monitors, AMD’s Agent Computer appears to be positioned as more of a traditional desktop Windows PC running as a server, without a screen or keyboard. The setup AMD provides is optimised to run LM Studio. This uses Ubuntu on WSL to provide access to large language models, which then work with an OpenClaw server running locally on the same hardware.

Tech

Top Uplift Desk Coupon Codes: Save up to $570

Upgrading your home office can feel like going down a rabbit hole. A simple search for a basic new desk can quickly turn into hours down the drain and endless tabs open on your computer, with every option starting to blur together. Uplift has a loyal following for its super customizable desks, smart (and creative—under-desk hammock, anyone?) accessories, and a solid build quality that makes long workdays more manageable.

We’ve explored the perks of a standing desk, and the takeaway is pretty clear: even if it won’t magically fix everything, the right standing desk setup can make all the difference in the way you work. If you’re ready to make the leap into the standing desk space, starting with an Uplift coupon code is a smart move.

Save up to $570 With This Uplift Desk Coupon Code

If you’ve been waiting for the right time to upgrade your workspace, this is your sign. Right now, you can save up to $570 on standing desks through a mix of tiered discounts and bundled accessories. With the Uplift promo code SPRING, you’ll get $100 off orders of $999 or more, $150 off $1,499 or more, $200 off $1,999 or more, and $300 off $2,999 or more.

Uplift also includes five free accessories (worth up to $270), which is where this deal really comes in clutch, especially if you’re building a full setup. Think practical upgrades like monitor arms to lift your screen to eye level, cable management kits to tame cords, or an anti-fatigue mat to make standing more comfortable. The right ergonomic add-ons can make a real difference in day-to-day comfort, and the accessories offer that comes with this Uplift desk promo code helps you get there.
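The tiered discount is easy to check for a given cart. The sketch below assumes each tier applies to orders at or above its threshold and that tiers don't stack. It also assumes the headline $570 is the top $300 discount plus the $270 accessory value, which the article implies but doesn't spell out. The function name `spring_discount` is illustrative only.

```python
# (minimum order total, discount) pairs quoted for promo code SPRING,
# listed from the highest tier down so the first match is the best one.
SPRING_TIERS = [(2999, 300), (1999, 200), (1499, 150), (999, 100)]

# Quoted maximum value of the five free accessories.
ACCESSORIES_VALUE_MAX = 270


def spring_discount(order_total: float) -> int:
    """Return the dollar discount for an order, assuming tiers do not stack."""
    for minimum, discount in SPRING_TIERS:
        if order_total >= minimum:
            return discount
    return 0


# Best case: top discount tier plus the full accessory value.
max_savings = SPRING_TIERS[0][1] + ACCESSORIES_VALUE_MAX

for cart in (750, 999, 1600, 3200):
    print(f"${cart} order: ${spring_discount(cart)} off")
print(f"Maximum combined savings: ${max_savings}")
```

An order just under a threshold gets the lower tier, so it can be worth checking whether a small add-on tips the cart into the next bracket.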

Get $20 Off When You Sign up for Uplift Emails

Like many other brands, Uplift rewards its loyal customers. When you sign up for Uplift emails, which include things like product drops and restock alerts, you can save $20 on an order over $199. Beyond that discount, the Uplift newsletter sign-up gets you email-only deals, early sale access, and special promotions.

Score Free Shipping on all Orders This Month

Who can say no to free shipping, especially when it’s for a major furniture item like a standing desk? Right now, Uplift is offering free and fast shipping on all orders, no Uplift desk coupon code required. And timing can work in your favor: most orders placed before 3 pm CST ship the same business day, so you’re not stuck refreshing the tracking page for a week. Whether you’re in the middle of an office refresh (or just impatient when it comes to deliveries), this is a major perk.

Claim up to 5 Free Accessories With Your Standing Desk Purchase

A built-in bonus when you buy an Uplift standing desk is that you get up to five free accessories baked into the purchase. You can choose from a huge catalog of over 400 add-ons to go with the desk (honestly, I was overwhelmed at first). Options range from practical to fun, from cable management kits and desk organizer sets to a desk-mounted cup holder and an under-desk hammock. There are even some branded extras, like a stainless steel tumbler and a t-shirt, depending on your vibe.

Use Your FSA Dollars to Get the Most out of Your Desk

Uplift desks may be eligible for reimbursement through your HSA or FSA, which means you could effectively pay for part of your desk setup with pre-tax dollars. This can lead to major savings, especially when stacked with an Uplift promo code.

The process is pretty straightforward: Check out normally (no need to use your HSA/FSA card upfront), then complete a quick health survey through Uplift’s partner program, which you can reach from your confirmation screen or your email receipt. If you qualify, a licensed provider will issue a Letter of Medical Necessity, which you can then submit for reimbursement. It’s a few extra steps, but the payoff is worth it, especially if you’ve been eyeing a bigger purchase.

Tech

Greg Brockman Defends $30B OpenAI Stake: ‘Blood, Sweat, and Tears’

Two days before the Musk v. Altman trial began, Elon Musk asked OpenAI cofounder and president Greg Brockman about reaching a settlement. When Brockman suggested both sides drop their claims, Musk responded, “By the end of this week, you and Sam [Altman] will be the most hated men in America. If you insist, so be it.”

The message—which OpenAI’s lawyers made public on Sunday, and which Judge Yvonne Gonzalez Rogers subsequently refused to let the jury hear about—underscores what may be Musk’s larger goal in this trial. He appears to be trying to not only win over the jurors to potentially remove Brockman and CEO Sam Altman from power, but also stir up dirt on the two men and damage OpenAI’s public image.

As Brockman took the stand on Monday, Musk’s attorney Steven Molo quickly started questioning him about his compensation at OpenAI. Brockman revealed that his equity stake at OpenAI is currently worth more than $20 billion, and perhaps up to $30 billion. While Brockman initially promised to donate $100,000 to OpenAI when it was being set up, he said he ultimately never followed through.

Brockman has held a number of instrumental roles at OpenAI since he cofounded the company in 2015. In the startup’s early days, it operated out of his apartment in the Mission District of San Francisco. Today, he’s deeply involved with refocusing OpenAI on a few key products, such as Codex. In the past year, Brockman has also given millions to super PACs promoting AI and President Trump, and has previously said this increased political spending is related to OpenAI’s founding mission to create artificial general intelligence that benefits all of humanity.

In court on Monday, Molo tried to make the case that Brockman and Altman had essentially looted OpenAI’s original nonprofit, which Musk funded and helped create.

In its early days, OpenAI told investors and employees that its nonprofit mission took precedence over generating profit. Brockman testified that his financial interests are still, to this day, second to OpenAI’s nonprofit mission.

When OpenAI created its for-profit arm in 2019, which received assets from the nonprofit, Brockman testified that he was given a significant stake in the new entity. Early in OpenAI’s history, Brockman had referenced wanting to be a billionaire, writing in his personal journal, “Financially what will take me to $1B?”

On Monday, Molo pressed Brockman for several minutes about the vast wealth he had accumulated beyond his initial goal.

“Why not donate that $29 billion to the OpenAI nonprofit? Why didn’t you do that?” Molo asked. Brockman responded that he and others had poured “blood, sweat, and tears” into building OpenAI in the years since Musk left the company.

OpenAI’s foundation holds a stake of over $150 billion in the company, making it one of the richest nonprofits in history, Brockman said. That’s roughly five times Brockman’s ownership interest. Altogether, OpenAI employees hold about 25 percent of shares. The foundation has 27 percent. Brockman testified that OpenAI’s nonprofit had received less than $150 million from donors, implying Musk had been incidental to the company’s success and that the real drivers were those who stuck around to build out OpenAI.

Of course, Brockman’s stake in OpenAI could be worth much more than $30 billion if the company successfully goes public in the next two years. When asked whether OpenAI was exploring a potential IPO, Brockman said he believes so.

Tech

It took 40 years for technology to catch up to this zipper design

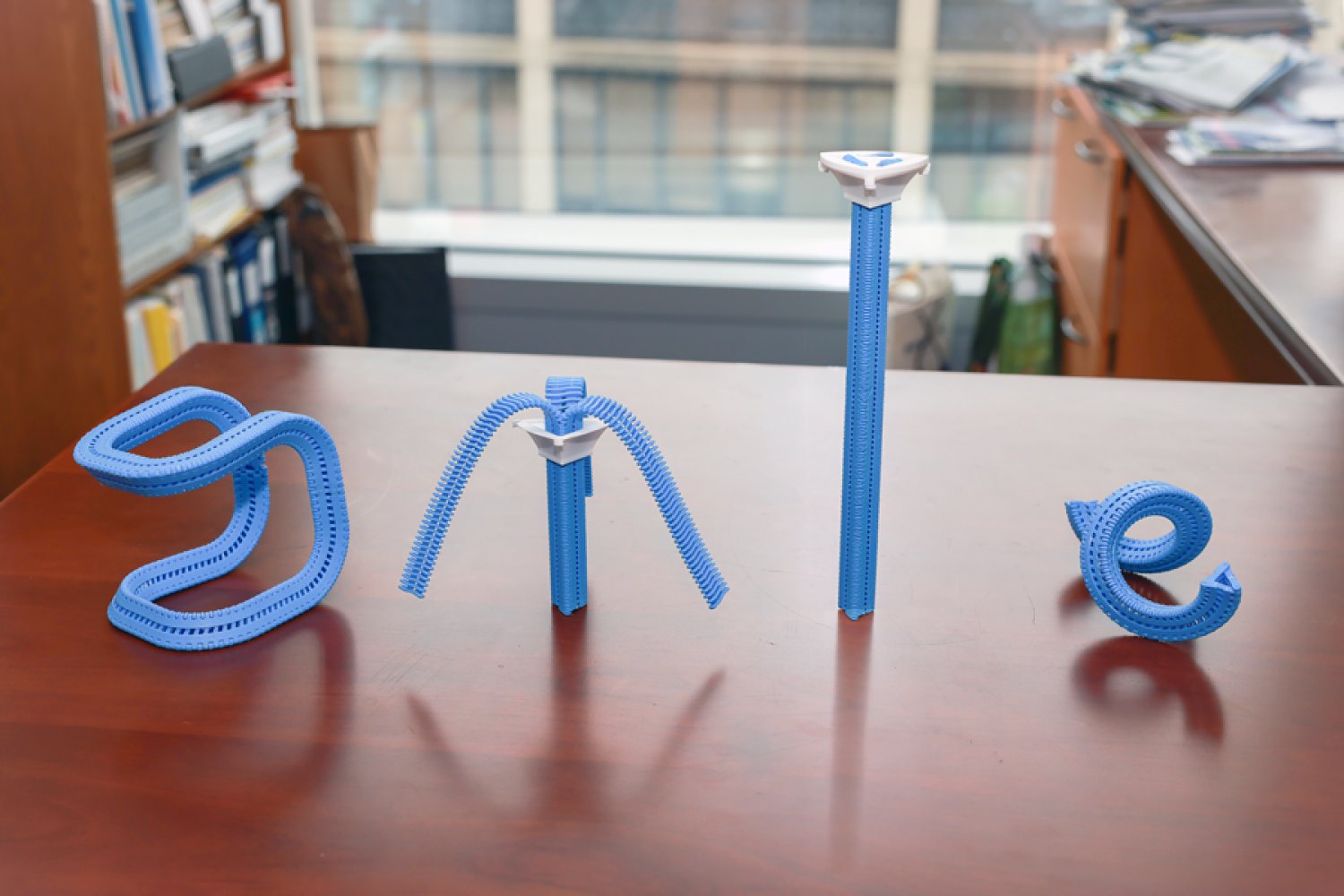

In 1985, the Innovative Design Fund placed an ad in Scientific American offering up to $10,000 to support clever prototypes for clothing, home decor, and textiles. William Freeman PhD ’92, then an electrical engineer at Polaroid and now an MIT professor, saw it and submitted a novel idea: a three-sided zipper. Instead of fastening pants, it’d be like a switch that seamlessly flips chairs, tents, and purses between soft and rigid states, making them easier to pack and put together.

Freeman’s blueprint was much like a regular zipper, except triangular. On each side, he nailed a belt to connect narrow wooden “teeth” together. A slider wrapping around the device could be moved up to fasten the three strips into place, straightening them into a triangular tube. His proposal was rejected, but Freeman patented his prototype and stored it in his garage in the hopes it might come in handy one day.

Nearly 40 years later, MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) researchers wanted to revive the project to create items with “tunable stiffness.” Prior approaches to adjusting stiffness weren’t easily reversible or required manual assembly, so CSAIL built an automated design tool and adaptable fastener called the “Y-zipper.” The scientists’ software program helps users customize three-sided zippers, which it then builds on its own in a 3D printer using plastics. These devices can be attached or embedded into camping equipment, medical gear, robots, and art installations for more convenient assembly.

“A regular zipper is great for closing up flat objects, like a jacket, but Freeman ideated something more dynamic. Using current fabrication technology, his mechanism can transform more complex items,” says MIT postdoc and CSAIL researcher Jiaji Li, who is a lead author on an open-access paper presenting the project. “We’ve developed a process that builds objects you can rapidly shift from flexible to rigid, and you can be confident they’ll work in the real world.”

Why zippers?

Users can customize how the fasteners look when they’re zipped up in CSAIL’s software program; they can select the length of each strip, as well as the direction and angle at which it will bend. They can also choose one of four motion “primitives” that determine the zipped-up shape: straight, bent (similar to an arch), coiled (resembling a spring), or twisted (like a screw).

The Y-zipper that results will appear to “shape-shift” in the real world. When unzipped, it can look like a squid with three sprawling tentacles, and when you close it up, it becomes a more compact structure (like a rod, for instance). This flexibility could be useful when you’re traveling — take pitching a tent, for example. The process can take up to six minutes to do alone, but with the Y-zipper’s help, it can be done in one minute and 20 seconds. You simply attach each arm to a side of the tent, supporting the structure from the top so that the zipper seemingly pops the canopy into place.

This seamless transition could also unlock more flexible wearables, often useful in medical scenarios. The team wrapped the Y-zipper around a wrist cast, so that a user could loosen it during the day, and zip it up at night to prevent further injuries. In turn, a seemingly stiff device can be made more comfortable, adjusting to a patient’s needs.

The system can also aid users in crafting technology that moves at the push of a button. One can attach a motor to the Y-zipper after fabrication to automate the zipping process, which helps build things like an adaptive robotic quadruped. The robot could potentially change the size of its legs, tightening up into taller limbs and unzipping when it needs to be lower to the ground. Eventually, such rapid adjustments could help the robot explore the uneven terrain of places like canyons or forests. Actuated Y-zippers can also build dynamic art installations — for example, the team created a long, winding flower that “bloomed” thanks to a static motor zipping up the device.

Mastering the material

While Li and his colleagues saw the creative potential of the Y-zipper, it wasn’t yet clear how durable it would be. Could it sustain daily use?

The team ran a series of stress tests to find out. First, they evaluated the strength and flexibility of polylactic acid (PLA) and thermoplastic polyurethane (TPU), two plastics commonly used in 3D printing. Using a machine that bent the Y-zippers down, they found that PLA could handle heavier loads, while TPU was more pliable.

In another experiment, CSAIL researchers used an actuator to continuously open and close the Y-zipper to see how long it’d take to snap. Some 18,000 cycles of zipping and unzipping later, it finally broke. The Y-zipper’s secret to durability, according to 3D simulations: its elastic structure, which helps distribute the stress of heavy loads.

Despite these findings, Li envisions an even more durable three-sided zipper using stronger materials, like metal. The team may also make the zippers bigger for larger-scale projects, but that’s not yet possible with their current 3D printing platform.

Li also notes that some applications remain unexplored, like space exploration, wherein the Y-zipper’s tentacles could be built into a spacecraft to grab nearby rock samples. Likewise, the zippers could be embedded into structures that can be assembled rapidly, helping relief workers quickly set up shelters or medical tents during natural disasters and rescues.

“Reimagining an everyday zipper to tackle 3D morphological transitions is a brilliant approach to dynamic assembly,” says Zhejiang University assistant professor Guanyun Wang, who wasn’t involved in the paper. “More importantly, it effectively bridges the gap between soft and rigid states, offering a highly scalable and innovative fabrication approach that will greatly benefit the future design of embodied intelligence.”

Li and Freeman wrote the paper with Tianjin University PhD student Xiang Chang and MIT CSAIL colleagues: PhD student Maxine Perroni-Scharf; undergraduate Dingning Cao; recent visiting researchers Mingming Li (Zhejiang University), Jeremy Mrzyglocki (Technical University of Munich), and Takumi Yamamoto (Keio University); and MIT Associate Professor Stefanie Mueller, who is a CSAIL principal investigator and senior author on the work. Their research was supported, in part, by a postdoctoral research fellowship from Zhejiang University and the MIT-GIST Program.

The researchers’ work was presented at the ACM’s Computer-Human Interaction (CHI) conference on Human Factors in Computing Systems in April.
