Tech

Bremont Is Sending a Watch to the Moon’s Surface

A multifaceted decahedral black ceramic bezel and sandwich-style three-piece case—a reworking of Bremont’s signature Trip-Tick construction—house a chronometer-rated automatic chronograph movement made by Sellita, with a 62-hour power reserve.

The watch will be a passenger aboard the FLIP rover, due to launch as part of Astrobotic’s Griffin Mission One (Griffin-1), expected to land at the lunar south pole at some point in the second half of this year.

It’s a one-way mission: The rover will remain permanently on the lunar surface, with the watch ticking away as it roams the landscape. FLIP’s objectives include reaching elevated positions on the lunar terrain, gathering data on lunar dust accumulation, testing dust-mitigation coatings, and surviving a two-week lunar night in hibernation (which would be a first for a US rover).

In terms of serious timekeeping data for Bremont, the mission is frankly symbolic. The watch will be positioned vertically in a specially designed housing within the FLIP’s chassis, between its front wheels. Only the watch head, weighing 107 grams, is included, glued in place using a specialist composite, its face visible to FLIP’s HD cameras. But the hibernatory periods will mean the watch (whose mechanical movement is driven in normal circumstances by the motion of the wearer’s arm) will stop running once its 62-hour power reserve runs down.

When the FLIP is on the move again, its motion should, in theory, jolt the mechanism into action once more. Although lunar gravity is a sixth of Earth’s, the acceleration, pitches, and tilts of the rover should swing the winding rotor, if with less torque and efficiency than on Earth.

“My guess is that the watch will function from time to time, but for short periods,” Cerrato says. “We will learn along the way. But that’s what is exciting—it projects us into a thinking process that is absolutely out of the box. Just the fact of having it there is inspiring.” However, there is little doubt that Bremont will, just like other brands with any ties to the cosmos, mine its new space connection for all it is worth.

FLIP itself, which weighs just 1,058 pounds and carries a mix of commercial and government payloads, four HD cameras, and a deployable solar array, is fundamentally a technology demonstrator for Flexible Logistics and Exploration (FLEX), Astrolab’s much larger SUV-sized rover destined to support NASA’s Artemis program. Astrolab developed the FLIP from scratch after VIPER, the NASA rover that the Griffin-1 mission had been contracted to carry, was put on pause in 2024. This left Astrobotic seeking a stand-in in short order. Astrolab, which signed the contract within a month of hearing about the opportunity in the fall of 2024, took the FLIP from blank sheet to finished rover in roughly a year.

Its standout feature is its hyper-deformable wheels, minutely structured from silicone, composite, and stainless steel, which create a soft, enlarged contact surface with the terrain. “It’s like if you’re off-roading in a Jeep or Land Rover where you let some air out of the tires to go softer and spread the load over a larger area,” explains Astrolab’s founder, Jaret Matthews. While the moon’s nighttime temperatures of around -200 degrees Celsius (around -328 Fahrenheit) would cause conventional rubber tires to become glass-like and shatter, Astrolab’s solution is intended to keep the rover from sinking into the unconsolidated lunar dust—or regolith—that covers the environment.

Human-machine teaming dives underwater

The electricity to an island goes out. To find the break in the underwater power cable, a ship pulls up the entire line or deploys remotely operated vehicles (ROVs) to traverse the line. But what if an autonomous underwater vehicle (AUV) could map the line and pinpoint the location of the fault for a diver to fix?

Such underwater human-robot teaming is the focus of an MIT Lincoln Laboratory project funded through an internally administered R&D portfolio on autonomous systems and carried out by the Advanced Undersea Systems and Technology Group. The project seeks to leverage the respective strengths of humans and robots to optimize maritime missions for the U.S. military, including critical infrastructure inspection and repair, search and rescue, harbor entry, and countermine operations.

“Divers and AUVs generally don’t team at all underwater,” says principal investigator Madeline Miller. “Underwater missions requiring humans typically do so because they involve some sort of manipulation a robot can’t do, like repairing infrastructure or deactivating a mine. Even ROVs are challenging to work with underwater in very skilled manipulation tasks because the manipulators themselves aren’t agile enough.”

Beyond their superior dexterity, humans excel at recognizing objects underwater. But humans working underwater can’t perform complex computations or move very quickly, especially if they are carrying heavy equipment; robots have an edge over humans in processing power, high-speed mobility, and endurance. To combine these strengths, Miller and her team are developing hardware and algorithms for underwater navigation and perception — two key capabilities for effective human-robot teaming.

As Miller explains, divers may only have a compass and fin-kick counts to guide them. With few landmarks and potentially murky conditions caused by a lack of light at depth or the presence of biological matter in the water column, they can easily become disoriented and lost. For robots to help divers navigate, they need to perceive their environment. However, in the presence of darkness and turbidity, optical sensors (cameras) cannot generate images, while acoustic sensors (sonar) generate images that lack color and only show the shapes and shadows of objects in the scene. The historical lack of large, labeled sonar image datasets has hindered training of underwater perception algorithms. Even if data were available, the dynamic ocean can obscure the true nature of objects, confusing artificial intelligence. For instance, a downed aircraft broken into multiple pieces, or a tire covered in an overgrowth of mussels, may no longer resemble an aircraft or tire, respectively.

“Ultimately, we want to devise solutions for navigation and perception in expeditionary environments,” Miller says. “For the missions we’re thinking about, there is limited or no opportunity to map out the area in advance. For the harbor entry mission, maybe you have a satellite map but no underwater map, for example.”

On the navigation side, Miller’s team picked up on work started by the MIT Marine Robotics Group, led by John Leonard, to develop diver-AUV teaming algorithms. With their navigation algorithms, Leonard’s group ran simulations under optimal conditions and performed field testing in calm waters using human-paddled kayaks as proxies for both divers and AUVs. Miller’s team then integrated these algorithms into a mission-relevant AUV and began testing them under more realistic ocean conditions, initially with a support boat acting as a diver surrogate, and then with actual divers.

“We quickly learned that you need more sensing capabilities on the diver when you factor in ocean currents,” Miller explains. “With the algorithms demonstrated by MIT, the vehicle only needed to calculate the distance, or range, to the diver at regular intervals to solve the optimization problem of estimating the positions of both the vehicle and diver over time. But with the real ocean forces pushing everything around, this optimization problem blows up quickly.”
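To make the range-only idea concrete, here is a minimal sketch in Python. It is a deliberate simplification and not the MIT or Lincoln Laboratory algorithm: it assumes the vehicle's own positions at each ping are already known, that the diver stays still, and that there is no current or measurement noise. The function name `estimate_position` and all coordinates are hypothetical. It fits a 2D diver position to a handful of range measurements with Gauss-Newton least squares.

```python
import math

def estimate_position(anchors, ranges, guess, iterations=20):
    """Fit a 2D point to range measurements taken from known anchor points.

    anchors: (x, y) vehicle positions at each ping (assumed known here).
    ranges:  measured distance from each anchor to the diver.
    guess:   starting (x, y) estimate for the diver.
    """
    x, y = guess
    for _ in range(iterations):
        # Build the 2x2 normal equations (J^T J) step = -J^T residual.
        a11 = a12 = a22 = b1 = b2 = 0.0
        for (ax, ay), measured in zip(anchors, ranges):
            dx, dy = x - ax, y - ay
            predicted = math.hypot(dx, dy)
            if predicted == 0.0:
                continue  # gradient undefined exactly at an anchor
            jx, jy = dx / predicted, dy / predicted
            residual = predicted - measured
            a11 += jx * jx
            a12 += jx * jy
            a22 += jy * jy
            b1 -= jx * residual
            b2 -= jy * residual
        det = a11 * a22 - a12 * a12
        if abs(det) < 1e-12:
            break  # geometry too degenerate to solve (e.g. collinear pings)
        x += (b1 * a22 - a12 * b2) / det
        y += (a11 * b2 - a12 * b1) / det
    return x, y

# Hypothetical example: four pings from the corners of a 10 m square,
# with a diver actually located at (3, 4).
anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
true_diver = (3.0, 4.0)
ranges = [math.hypot(true_diver[0] - ax, true_diver[1] - ay) for ax, ay in anchors]
estimate = estimate_position(anchors, ranges, guess=(5.0, 5.0))
```

In the real problem described above, neither position is known in advance and currents move both parties between pings, which adds unknowns faster than range measurements alone can constrain them. That is one plausible reading of why extra sensing on the diver becomes necessary.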

On the perception side, Miller’s team has been developing an AI classifier that can process both optical and sonar data mid-mission and solicit human input for any objects classified with uncertainty.

“The idea is for the classifier to pass along some information (say, a bounding box around an image) to the diver and indicate, ‘I think this is a tire, but I’m not sure. What do you think?’ Then, the diver can respond, ‘Yes, you’ve got it right,’ or ‘No, look over here in the image to improve your classification,’” Miller says.
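The exchange Miller describes can be sketched as a simple confidence check. The Python below is an illustration only, not the team's classifier: the `Detection` type, the `triage` function, the 0.8 threshold, and the message fields are all hypothetical. A detection whose top class score clears the threshold is accepted, and anything less certain is turned into a short query for the diver.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

@dataclass
class Detection:
    label_scores: Dict[str, float]          # class name -> probability
    bounding_box: Tuple[int, int, int, int]  # (x, y, width, height) in pixels

def triage(detection: Detection, threshold: float = 0.8) -> dict:
    """Accept confident detections; otherwise build a question for the diver."""
    label, score = max(detection.label_scores.items(), key=lambda item: item[1])
    if score >= threshold:
        return {"action": "accept", "label": label}
    return {
        "action": "ask_diver",
        "label": label,
        "bounding_box": detection.bounding_box,
        "prompt": f"I think this is a {label}, but I'm not sure. What do you think?",
    }

# Hypothetical detections: one clear, one ambiguous.
clear = Detection({"tire": 0.93, "rock": 0.07}, (40, 60, 120, 120))
unsure = Detection({"tire": 0.55, "rock": 0.45}, (200, 80, 90, 110))

clear_result = triage(clear)
unsure_result = triage(unsure)
```

Sending only the label and box coordinates, rather than the image itself, keeps the query small, which matters given the communication limits discussed next.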

This feedback loop requires an underwater acoustic modem to support diver-AUV communication. State-of-the-art data rates in underwater acoustic communications would require tens of minutes to send an uncompressed image from the AUV to the diver. So, one aspect the team is investigating is how to compress information into a minimum amount to be useful, working within the constraints of the low bandwidth and high latency of underwater communications and the low size, weight, and power of the commercial off-the-shelf (COTS) hardware they’re using. For their prototype system, the team procured mostly COTS sensors and built a sensor payload that would easily integrate into an AUV routinely employed by the U.S. Navy, with the goal of facilitating technology transition. Beyond sonar and optical sensors, the payload features an acoustic modem for ranging to the diver and several data processing and compute boards.
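A rough back-of-the-envelope calculation shows why compression matters. The numbers in this Python sketch are assumptions chosen for illustration, not figures from the project: a 640 × 480 grayscale image at 8 bits per pixel, an acoustic link of 1,000 bits per second, and a compact message made of four 16-bit box coordinates plus a one-byte class ID.

```python
def transfer_seconds(payload_bits: int, link_bits_per_second: float) -> float:
    """Time to push a payload through a link, ignoring latency and protocol overhead."""
    return payload_bits / link_bits_per_second

# Assumed values for illustration only.
LINK_BPS = 1_000                      # hypothetical acoustic modem throughput
image_bits = 640 * 480 * 8            # uncompressed 8-bit grayscale frame
box_message_bits = 4 * 16 + 8         # four 16-bit coordinates + 1-byte class ID

image_minutes = transfer_seconds(image_bits, LINK_BPS) / 60      # about 41 minutes
box_seconds = transfer_seconds(box_message_bits, LINK_BPS)        # well under a second

print(f"Uncompressed image: {image_minutes:.1f} min")
print(f"Bounding box + label: {box_seconds:.3f} s")
```

Under these assumptions the raw image takes tens of minutes, consistent with the order of magnitude quoted above, while a bounding box and label fit in a fraction of a second.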

Miller’s team has tested the sensor-equipped AUV and algorithms around coastal New England — including in the open ocean near Portsmouth, New Hampshire, with the University of New Hampshire’s (UNH) Gulf Surveyor and Gulf Challenger coastal research vessels as diver surrogates, and on the Boston-area Charles River, with an MIT Sailing Pavilion skiff as the surrogate.

“The UNH boats are well-equipped and can access realistic ocean conditions. But pretending to be a diver with a large boat is hard. With the skiff, we can move more slowly and get the relative motion in tune with how a diver and AUV would navigate together.”

Last summer, the team started testing equipment with human divers at Michigan Technological University’s Great Lakes Research Center. Although the divers lacked an interface to feed back information to the AUV, each swam holding the team’s tube-shaped prototype tablet, dubbed a “tube-let.” The tube-let was equipped with a pressure and depth sensor, inertial measurement unit (to track relative motion), and ranging modem — all necessary components for the navigation algorithms to solve the optimization problem.

“A challenge during testing was coordinating the motion of the diver and vehicle, because they don’t yet collaborate,” Miller says. “Once the divers go underwater, there is no communication with the team on the surface. So, you have to plan where to put the diver and vehicle so they don’t collide.”

The team also worked on the perception problem. The water clarity of the Great Lakes at that time of year allowed for underwater imaging with an optical sensor. Caroline Keenan, a Lincoln Scholars Program PhD student jointly working in the laboratory’s Advanced Undersea Systems and Technology Group and Leonard’s research group at MIT, took the opportunity to advance her work on knowledge transfer from optical sensors to sonar sensors. She is exploring whether optical classifiers can train sonar classifiers to recognize objects for which sonar data doesn’t exist. The motivation is to reduce the human operator load associated with labeling sonar data and training sonar classifiers.

With the internally funded research program coming to an end, Miller’s team is now seeking external sponsorship to refine and transition the technology to military or commercial partners.

“The modern world runs on undersea telecommunication and power cables, which are vulnerable to attack by disruptive actors. The undersea domain is becoming increasingly contested as more nations develop and advance the capabilities of autonomous maritime systems. Maintaining global economic security and U.S. strategic advantage in the undersea domain will require leveraging and combining the best of AI and human capabilities,” Miller says.

Novo Nordisk partners with OpenAI to AI-power drug development | Computer Weekly

Danish pharmaceutical company Novo Nordisk has partnered with OpenAI to support drug research and development. Through the partnership, Novo Nordisk said it plans to deploy advanced artificial intelligence (AI) capabilities to analyse complex datasets, identify promising drug candidates and reduce the time required to move from research to patient.

The company said its use of AI has been structured with strict data protection, governance and human oversight to ensure ethical and compliant use. This latest partnership is being positioned as a key part of the company’s strategy to use AI to transform healthcare and enable it to bring new and better treatment options to patients faster.

In 2024, a breakout session at its Capital Markets Day presented Novo Nordisk’s strategy, discussing how it uses data science and AI and its future plans. The presentation shows that the company set up an AI centre of excellence in 2021, and had begun ramping up investment in high-performance computing and graphics processing units (GPUs) by 2023. The company said it has deployed a data pool called FounData, where all data from completed clinical trials are pooled and prepared for insight generation.

It has also deployed NovoScribe, an AI-powered platform built using MongoDB Atlas Vector Search, Amazon Bedrock and LangChain to automate and accelerate the creation of clinical study reports. Novo Nordisk said NovoScribe reduces the time to regulatory submissions.

At the time, the company said external partnerships and collaborations would continue to play an important role in reaching its AI ambitions.

Earlier this year, Christos Nicolaou, a senior scientific director at Novo Nordisk, posted on LinkedIn that the company has now joined Ligand-AI, a new project funded by the EU public-private partnership, Innovative Health Initiative (IHI).

In the post, he said the project’s goal is to generate high quality, large, open datasets of protein-ligand interactions for thousands of proteins. “In the spirit of open science collaboration, these datasets will be shared and used to implement models and methods to improve AI-driven drug discovery,” he said.

This latest partnership builds on Novo Nordisk’s technology partnerships with AWS, Microsoft, Google and Hugging Face, as well as its existing collaboration with OpenAI.

“This partnership is one important step in positioning Novo Nordisk to lead in the next era of healthcare,” said Mike Doustdar, president and CEO of Novo Nordisk. “There are millions of people living with obesity and diabetes who need treatment options, and we know there are therapies still waiting to be discovered that could change their lives.

“Integrating AI in our everyday work gives us the ability to analyse datasets at a scale that was previously impossible, identify patterns we could not see, and test hypotheses faster than ever. This means discovering new therapies and bringing them to market faster than ever before.”

OpenAI said it would be assisting Novo Nordisk in upskilling the company’s global workforce and enhancing AI literacy. Through the partnership OpenAI’s capabilities will also be used to improve efficiency in manufacturing, supply chain and distribution, and corporate operations. The company is starting pilot programmes across research and development, and manufacturing and commercial operations, with full integration by the end of 2026.

Flood warning: How citizens’ AI agents will swamp public services | Computer Weekly

The people running UK public services are busy working out how artificial intelligence (AI) might improve things.

There’s some good stuff happening, like tools to digitise planning information, transcribe probation officers’ conversations or rapidly assess stroke victims. There’s some nicely radical thinking coming out of various pockets of the Government Digital Service and the Department for Science, Innovation and Technology. Teams across government are running countless experiments.

But what if governments are looking through the AI telescope from the wrong end? What if citizens’ own use of AI to access public services proves to be an even more transformative force?

Creating friction

Many public services rely on friction to stay viable. They depend on slow, confusing, frustrating user experiences to put off those otherwise eligible – how often do people just get fed up trying, and give up? This is both unfair and politically convenient. You could say “’twas ever thus” – until now.

From parents seeking special needs support to property owners appealing council tax bands, it’s often the friction of bad service design that restrains demand, not the law.

AI – specifically AI agents – will remove that friction. Your AI agent will be doggedly relentless in how it accesses public services on your behalf, however byzantine those services may be. It will make sure your application is perfectly crafted to maximise your chances of getting what you want, treating any appeals process as just another stage to be navigated.

Ask your agent

I’m lucky to have played a lot with AI agents recently – the likes of OpenClaw, PicoClaw and Claude Cowork. I recently ran an experiment with OpenClaw – what would an AI agent do if I asked it to tell me whether the council tax band for my house was fair, in comparison to my neighbours?

It came back immediately to tell me the band was higher than all my neighbours and suggested some next steps it could take. At this point I stopped it, as I’d realised something stark.

If I’d let it, my AI agent would happily have run off to compare neighbours’ floor areas by querying the Gov.uk Energy Performance Certificate API; measured neighbours’ extensions from Ordnance Survey; downloaded Land Registry’s historic house price dataset; searched property websites for number of bedrooms; and researched how best to craft an appeal over my council tax band to the Valuation Office Agency. It would then have written a far better appeal letter than I ever could and submitted it on my behalf. Just like that – all without any intervention on my part.

Now I might still have lost the appeal, but the cost to me in time and hassle would have been negligible compared to even three months ago – one click and about 12p, which is the most expensive it’ll ever be. The friction that stops people from appealing their council tax band just disappeared. Ditto every other public service.

Now what?

Agentic flooding

Welcome to the new frontier of “agentic flooding”, a term coined by Chris Schmitz, a PhD student at Berlin’s Centre for Digital Governance. He’s created a dashboard highlighting increased demand for public services which might be attributed to citizens’ use of AI.

For example, benefit appeals to the Department for Work and Pensions have increased by over 60% since the first benefit-specific AI tools appeared in 2022. And this was before AI agents appeared on the scene at the end of 2025.

Governments are not remotely ready for the coming explosion in demand for their services driven by AI agents. It might take a couple of years, but it’s coming.

Much of this demand will be entirely legitimate. Some of it doubtless will be fraudulent. But demand is demand, and AI agents don’t ever get bored – they negate the friction that used to keep demand in check.

Adding more friction to restrict AI agents would see the government kicking off an AI arms race against its citizens in which both sides lose. Instead, governments will need to clarify – if not tighten – countless rules, policies, processes and regulations, otherwise public services risk being swamped. Along the way, policy grey areas will be eliminated, and that’ll be a loss.

All this won’t be popular, particularly if done in a hurry in response to a crisis.

There’s been a great emphasis in Whitehall on using AI to write better policy papers. I hope they’re using AI to explore the myriad tricky policy responses required to respond to the imminent explosion in demand.

Oh, and by the way – the same will apply for the private sector.

Tom Loosemore is a partner at consultancy Public Digital.

-

Fashion1 week ago

Fashion1 week agoIndia’s exports face reset as EU links trade to carbon metrics: EY

-

Entertainment6 days ago

Entertainment6 days agoQueen Elizabeth II emotional message for Archie, Lilibet sparks speculation

-

Tech6 days ago

Tech6 days agoAs the Strait of Hormuz Reopens, Global Shipping Will Take Months to Recover

-

Tech6 days ago

Tech6 days agoAzure customers up in arms over ‘full’ UK South region | Computer Weekly

-

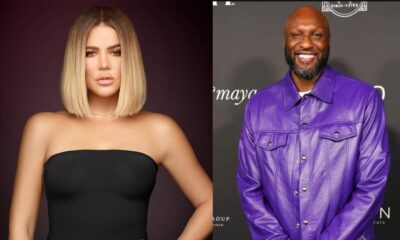

Entertainment1 week ago

Entertainment1 week agoLamar Odom shocking response to Khloé Kardashian account of his overdose

-

Sports1 week ago

Sports1 week agoWith Messi goal, Inter Miami open new stadium with dream moment

-

Tech5 days ago

Tech5 days agoThis AI Button Wearable From Ex-Apple Engineers Looks Like an iPod Shuffle

-

Fashion6 days ago

Fashion6 days agoCII submits 20-pt agenda to Indian govt to back firms hit by Iran war