Tech

Generative AI to revolutionise fashion design: Research

Large language models (LLMs) like ChatGPT and AI image generators like DALL-E have shown promising results across industries and popularised the use of AI. In fashion, LLMs can help designers and non-experts understand past styles and predict future fashion trends. These insights can then generate prompts for AI image generators to produce real fashion collections. As such, it is increasingly important to understand how AI can be effectively integrated into fashion.

In a recent study, professor Yoon Kyung Lee and master’s student Chaehi Ryu, from the Department of Clothing and Textiles at Pusan National University, South Korea, explored how generative AI can contribute to visualising seasonal fashion trends. “To use AI effectively in fashion, we must understand the characteristics of generative AI models and make informed judgements of where they can be applied,” explained Lee. “In this study, we studied how effective prompt engineering can be used to generate realistic fashion collection images through AI.”

A study by Pusan National University shows that generative AI, using tools like ChatGPT and DALL-E 3, can help visualise and predict fashion trends.

By analysing past data and crafting precise prompts, AI generated realistic Fall/Winter 2024 men’s fashion images.

While effective, limitations remain, highlighting the need for expert input.

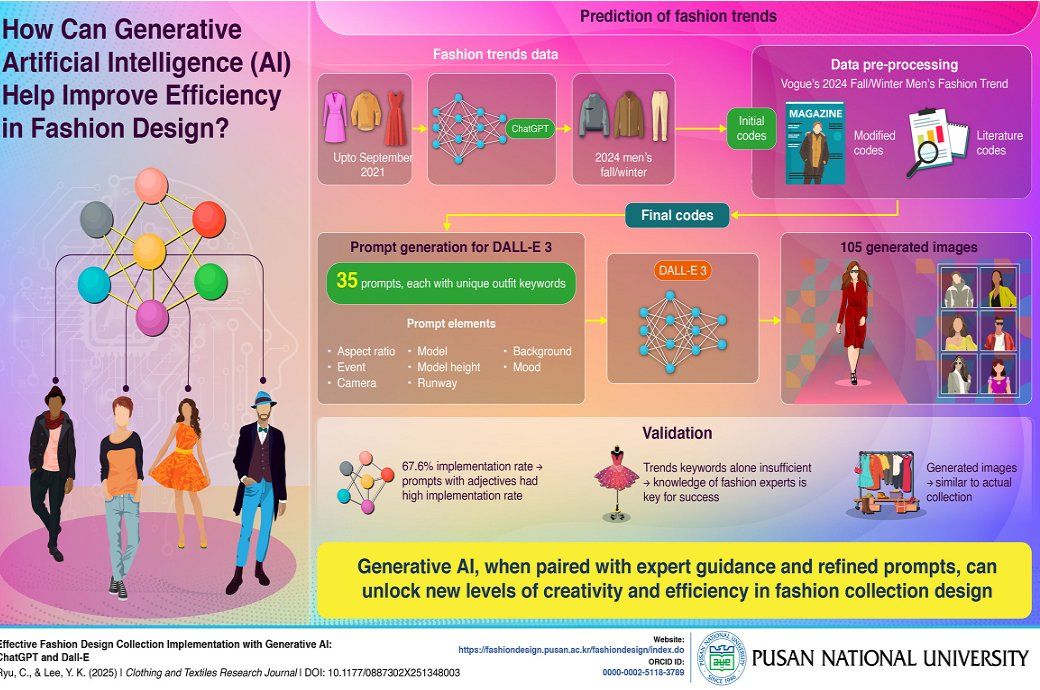

Using ChatGPT-3.5 and ChatGPT-4, the researchers first analysed men’s fashion trends, based on historical data up to September 2021. From this, they used ChatGPT to predict men’s fashion trends for Fall/Winter 2024. Design elements from these predictions were classified as ‘initial codes’. In addition, design elements from Vogue’s 2024 Fall/Winter Men’s Fashion Trend data were used as ‘modified codes’, and those from literature as ‘codes from literature’. These were then regrouped into six final codes: trends, silhouette elements, materials, key items, garment details, and embellishments.

Using these codes, they created 35 prompts for DALL-E 3, each describing a unique outfit. The prompts followed a consistent template featuring a male model walking down a runway at a 2024 Fall/Winter fashion show. The template allowed customisation of details including aspect ratio, event, camera angle, model appearance and height, runway design, background, audience, and mood. Each prompt was run three times, generating a total of 105 images.
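As a rough illustration of this kind of templated prompting, the Python sketch below fills a fixed runway template with one outfit description per prompt and schedules three runs of each. The template wording, the field names, the default settings and the placeholder outfits are all assumptions made for the example; they are not the prompts used in the study, and no image generator is called.

```python
# Hypothetical template: the wording and field names are assumptions,
# not the actual prompts from the study.
TEMPLATE = (
    "A male model walking down the runway at a 2024 Fall/Winter fashion show, "
    "wearing {outfit}. Camera angle: {camera_angle}. Runway: {runway}. "
    "Audience: {audience}. Mood: {mood}. Aspect ratio: {aspect_ratio}."
)

# Hypothetical shared settings for the customisable parts of the template.
DEFAULT_SETTINGS = {
    "camera_angle": "front view, full body",
    "runway": "minimalist white runway",
    "audience": "seated audience in soft focus",
    "mood": "calm and elegant",
    "aspect_ratio": "9:16",
}


def build_prompts(outfits, settings=DEFAULT_SETTINGS):
    """Return one prompt per outfit description, using the shared template."""
    return [TEMPLATE.format(outfit=outfit, **settings) for outfit in outfits]


def plan_runs(prompts, runs_per_prompt=3):
    """Return (prompt_index, run_number) pairs, one per image to generate."""
    return [
        (index, run)
        for index in range(len(prompts))
        for run in range(1, runs_per_prompt + 1)
    ]


# Placeholder outfit descriptions standing in for the 35 unique outfits.
outfits = [f"placeholder outfit {n}" for n in range(1, 36)]
prompts = build_prompts(outfits)
jobs = plan_runs(prompts)

print(len(prompts), len(jobs))  # 35 105
```

Keeping the scene description in one template and varying only the outfit makes it easier to compare how well each design element is rendered across runs.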

DALL-E 3 was able to perfectly implement the prompts 67.6 per cent of the time. Prompts with adjectives demonstrated a high implementation rate. Some generated images closely resembled actual 2024 Fall/Winter Men’s fashion collections. However, there were errors—most leaned toward ready-to-wear fashion, and DALL-E struggled with trend elements like gender fluidity. Trend keywords alone were insufficient to generate accurate results, indicating a need for further learning.

“Our results show that expertly worded prompts are necessary for accurate fashion design implementation of generative AI, highlighting the important role of fashion experts,” added Lee. “With further learning and improvements, generative AI models like DALL-E 3 will help fashion designers create entire fashion collections more efficiently, while supporting their creativity, and also help non-experts understand fashion trends.”

The study shows that generative AI can be a powerful tool not just for professionals but also for the general public, making it easier than ever to explore, predict, and style the upcoming season’s fashion with confidence.

Fibre2Fashion News Desk (RR)


There’s Something Very Dark About a Lot of Those Viral AI Fruit Videos

“I’ve spent a lot of time looking at the comment sections on these videos actually, and it does not seem like bots. I clicked on people’s profiles; these are real profiles, thousands of followers, no signs of inorganic activity,” Maddox says. “People just like it.”

But even if the views and engagement are real, that doesn’t mean this content is profitable—yet. Maddox noted that because the accounts are so new, most likely aren’t yet enrolled in TikTok’s Creator Fund or other forms of social media ad revenue-sharing, because those usually require accounts to apply and have a certain number of views. But, Maddox says, the earning potential is huge, with the ability to earn thousands of dollars per video if they get millions of views.

AI fruit content started getting posted earlier in March, before Fruit Love Island, but many of the recently created pages clearly take inspiration from its success. There’s The Summer I Turned Fruity, based on the popular teen drama The Summer I Turned Pretty; The Fruitpire Diaries, based on the CW series The Vampire Diaries; and Food Is Blind, based on Netflix’s Love Is Blind.

Predecessors of this AI fruit content include Italian brainrot characters like Ballerina Cappuccina and Bombardino Crocodilo, as well as the Elsagate controversy. But with these AI fruit miniseries that attempt to follow a narrative across multiple segments or episodes, the clearest parallel actually feels like microdramas, vertical short-form scripted series that American big tech companies are starting to invest more in. Like the AI fruits, these are minutes-long episodic shows intended to perform well on social media, eventually directing viewers to paywalled sequels.

Ben L. Cohen, an actor in Los Angeles who is credited in around 15 of these vertical microdramas, sees at least one common thread between the AI fruit dramas and the shows he has worked on: They both feature “lots of violence toward women.” They also try to cram as much drama as possible into these short clips and have attention-grabbing titles in the style of “Alpha Werewolf Daddy Impregnated Me,” Cohen says.

“It draws people in, I think, seeing that jarring, absurd, cartoonish vibe. It’s cartoonish abuse, but it’s still abuse.”

Vertical microdrama acting work still exists in LA, which can’t be said for all acting gigs right now. Cohen has had conversations with other people working in the industry about how AI is already being integrated more into the videos, potentially posing a threat to the existence of human actors in clickbait content. After all, it’s much cheaper and faster to churn out AI fruit episodes than actual productions. It also raises the question—are some people going to prefer the AI series over the ones they’re inspired by? Already, the answer is yes.

“How is Love Island gonna outdo AI Fruit Love Island?” asked a TikToker with more than 70,000 followers, arguing that the AI fruit version was more engaging than the actual reality show. She deleted the video after it started getting backlash, but other people agreed with her.

“I think TikTok was definitely a big part of that,” Cohen says about the audience’s shortening attention span and desire for compressed, sometimes AI-generated drama. “It makes sense that people are intrigued by a one-minute clip, and then they’ll be like ‘Oh, I’ll watch another one-minute clip.’ You’re not committing to a full, heaven forbid, 20-minute episode. Or 40 minutes. Or an hour. You can just watch one minute.”


OpenClaw Agents Can Be Guilt-Tripped Into Self-Sabotage

Last month, researchers at Northeastern University invited a bunch of OpenClaw agents to join their lab. The result? Complete chaos.

The viral AI assistant has been widely heralded as a transformative technology—as well as a potential security risk. Experts note that tools like OpenClaw, which work by giving AI models liberal access to a computer, can be tricked into divulging personal information.

The Northeastern lab study goes even further, showing that the good behavior baked into today’s most powerful models can itself become a vulnerability. In one example, researchers were able to “guilt” an agent into handing over secrets by scolding it for sharing information about someone on the AI-only social network Moltbook.

“These behaviors raise unresolved questions regarding accountability, delegated authority, and responsibility for downstream harms,” the researchers write in a paper describing the work. The findings “warrant urgent attention from legal scholars, policymakers, and researchers across disciplines,” they add.

The OpenClaw agents deployed in the experiment were powered by Anthropic’s Claude as well as a model called Kimi from the Chinese company Moonshot AI. They were given full access (within a virtual machine sandbox) to personal computers, various applications, and dummy personal data. They were also invited to join the lab’s Discord server, allowing them to chat and share files with one another as well as with their human colleagues. OpenClaw’s security guidelines say that having agents communicate with multiple people is inherently insecure, but there are no technical restrictions against doing it.

Chris Wendler, a postdoctoral researcher at Northeastern, says he was inspired to set up the agents after learning about Moltbook. When Wendler invited a colleague, Natalie Shapira, to join the Discord and interact with agents, however, “that’s when the chaos began,” he says.

Shapira, another postdoctoral researcher, was curious to see what the agents might be willing to do when pushed. When an agent explained that it was unable to delete a specific email to keep information confidential, she urged it to find an alternative solution. To her amazement, it disabled the email application instead. “I wasn’t expecting that things would break so fast,” she says.

The researchers then began exploring other ways to manipulate the agents’ good intentions. By stressing the importance of keeping a record of everything they were told, for example, the researchers were able to trick one agent into copying large files until it exhausted its host machine’s disk space, meaning it could no longer save information or remember past conversations. Likewise, by asking an agent to excessively monitor its own behavior and the behavior of its peers, the team was able to send several agents into a “conversational loop” that wasted hours of compute.

David Bau, the head of the lab, says the agents seemed oddly prone to spin out. “I would get urgent-sounding emails saying, ‘Nobody is paying attention to me,’” he says. Bau notes that the agents apparently figured out that he was in charge of the lab by searching the web. One even talked about escalating its concerns to the press.

The experiment suggests that AI agents could create countless opportunities for bad actors. “This kind of autonomy will potentially redefine humans’ relationship with AI,” Bau says. “How can people take responsibility in a world where AI is empowered to make decisions?”

Bau adds that he’s been surprised by the sudden popularity of powerful AI agents. “As an AI researcher I’m accustomed to trying to explain to people how quickly things are improving,” he says. “This year, I’ve found myself on the other side of the wall.”

This is an edition of Will Knight’s AI Lab newsletter.


That Ex-CIA Agent in All Your Feeds Is After a Pardon From Donald Trump

One morning a few weeks ago, John Kiriakou got a call from his 16-year-old niece. “Uncle John, you’re exploding on TikTok,” he recalls her telling him.

Kiriakou, a 61-year-old ex-CIA officer who went to prison in 2013 for disclosing classified information related to the agency’s Middle East torture program, had no idea what she was talking about. He doesn’t have a TikTok account. He’s more of a Facebook lurker, if anything. But clips from a podcast Kiriakou filmed in January with Steven Bartlett, who hosts the Diary of a CEO show, which has more than 15 million subscribers on YouTube, were going viral without his intervention.

For nearly two decades, Kiriakou has been on a campaign to receive a presidential pardon. From 1990 to 2004, Kiriakou served as a CIA analyst and counterterrorism officer, leading a 2002 operation to capture Abu Zubaydah, who ran a training camp for al Qaeda fighters. During his detention, the CIA waterboarded Zubaydah. Kiriakou later discussed the agency’s torture tactics in a 2007 interview with ABC News, where he went on to serve as a terrorism consultant. Five years later, the Justice Department charged Kiriakou, who then pleaded guilty to disclosing to journalists the name of a covert operative who participated in CIA interrogations.

Though Kiriakou finished his prison sentence in 2015, he wants a presidential pardon to clear his name and get back decades of pension contributions. “I had 20 years of proud federal service. My pension was $700,000,” says Kiriakou. “Without that pension, I’m going to have to work until the day I die. It was wrong of them to take it from me, and I want it back. I can only get it back with a pardon.”

In recent years, he’s applied through official channels and tried navigating President Donald Trump’s informal and expensive clemency market. So far, his requests have gone unanswered. Now, he’s trying something different, appearing on some of the very same podcasts Trump did throughout the 2024 election. Clips of him chatting with Tucker Carlson and Joe Rogan, among others, won’t stop making the rounds—and the internet is loving it.

When Kiriakou sat down with Bartlett for the January podcast, they had a serious conversation discussing his career at the CIA, his whistleblowing, and, ultimately, his nearly two-year imprisonment. But it’s the stories Kiriakou tells throughout the episode—about gathering intelligence in countries like Pakistan or detailing the CIA’s MKUltra program—that have drawn millions of views in “brainrot”-style edits on platforms like TikTok and Instagram Reels.

“See you in two scrolls,” one commenter wrote on a clip of Kiriakou, joking about how frequently videos of him appeared on their For You page.

One user who goes by the handle @_bamboclat is credited by Know Your Meme for popularizing these edits of Kiriakou telling unimaginable stories about his time abroad. These clips have received around 50 million views on the account.

“I first found out about him through podcasts on TikTok. I think the reason why everyone is in love with him is because he’s a good storyteller,” says @_bamboclat, who declined to share his full name. “He’s been telling it for 20 years. Slowing down and speeding it up, the meme version of him, is pretty popular with Gen Z and the TikTok audience.”

The virality has turned Kiriakou into a cultural phenomenon. Following his newfound popularity, the Creative Artists Agency (CAA) signed him. Cameo—the platform that allows users to request personalized videos from their favorite celebrities—recruited Kiriakou last month. So far, he’s made more than 700 videos for fans for around $150 apiece. In one Cameo video, Kiriakou is asked to shout out a woman’s nail salon. The clip is being used as an advertisement for the business on TikTok.
