Tech

Myriota introduces satellite-based scalable global asset tracking | Computer Weekly

Blind spots and outages have traditionally been the weak points of terrestrial networks designed to provide coverage for internet of things (IoT) applications. Myriota believes it can address these challenges with AssetHawk, a purpose-built tracking device with native 5G non-terrestrial network (NTN) satellite connectivity.

Myriota says supply chains are growing increasingly complex, and that blind spots and outages in terrestrial coverage create significant operational and financial risk – particularly across industries such as transport and logistics, equipment leasing, mining, and agriculture.

AssetHawk is powered by Myriota's existing HyperPulse connectivity system. By pairing native 5G NTN satellite connectivity with purpose-built tracking hardware, the company pitches it as an affordable, feature-rich satellite asset tracker.

AssetHawk is engineered to deliver reliable global visibility beyond the reach of traditional cellular networks. It can support scalable tracking of trailers, containers, pallets, cargo, vehicles and unpowered assets, helping operators verify delivery milestones, reduce asset loss, improve utilisation, lower operating costs and improve margins as fleets and deployments scale.

Myriota describes AssetHawk, which is intended for rapid deployment at the edge, as a ready-to-use device that installs in minutes and integrates seamlessly with third-party visualisation and analytics platforms.

The company says that the tracker's compact, low-profile design and flexible mounting options, including magnetic mounting, make it well suited to rotating fleets and temporary assets. Its IP68-rated enclosure is designed for reliable operation in harsh conditions, withstanding the submersion, dust, impact and extreme temperatures commonly encountered in mining, agriculture and heavy industry.

For long-term deployments, AssetHawk is said to have been engineered to minimise operational overheads. Low-power hardware delivers a battery life of up to 10 years on two AA batteries, while intelligent firmware automatically increases the frequency of location updates when movement is detected. According to Myriota, the result is sharper insight into asset movements while keeping power consumption and operational costs down.
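Myriota has not published how AssetHawk's firmware decides when to report. As a rough illustration of the general idea of motion-triggered reporting, here is a minimal Python sketch in which a tracker reports on a long interval at rest and a short interval while moving. The interval values, the `Tracker` class and the `reports_per_day` helper are all hypothetical, not Myriota's.

```python
from dataclasses import dataclass

# Hypothetical reporting intervals, chosen only for illustration.
# Myriota has not published AssetHawk's actual firmware behaviour.
IDLE_INTERVAL_S = 6 * 60 * 60   # assumed: one location update every 6 hours at rest
MOVING_INTERVAL_S = 15 * 60     # assumed: one update every 15 minutes in motion


@dataclass
class Tracker:
    """Toy model of a motion-aware tracker (hypothetical, not AssetHawk's API)."""
    moving: bool = False

    def on_motion(self, detected: bool) -> None:
        # In a real device this would be driven by a motion sensor.
        self.moving = detected

    def next_report_interval(self) -> int:
        """Seconds to wait before the next location update."""
        return MOVING_INTERVAL_S if self.moving else IDLE_INTERVAL_S


def reports_per_day(hours_moving: float) -> float:
    """Estimate daily location updates for a given number of hours in motion."""
    if not 0 <= hours_moving <= 24:
        raise ValueError("hours_moving must be between 0 and 24")
    moving_s = hours_moving * 3600
    idle_s = (24 - hours_moving) * 3600
    return moving_s / MOVING_INTERVAL_S + idle_s / IDLE_INTERVAL_S


if __name__ == "__main__":
    t = Tracker()
    print(t.next_report_interval())  # 21600 while at rest
    t.on_motion(True)
    print(t.next_report_interval())  # 900 while moving
    print(reports_per_day(2))
```

With these assumed values, an asset that never moves sends 4 updates a day, against 96 if it reported at the moving rate around the clock, which illustrates why reporting faster only during movement can save power.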

The tracker will soon be available with optional Bluetooth Low Energy capabilities to enable the capture of valuable condition data from Bluetooth sensors, including temperature, vibration and other environmental metrics.

The device uses a standards-based 3GPP Release 17 architecture, with private data paths to protect against unauthorised access or interference, which Myriota says builds security and data integrity into the platform.

AssetHawk is also said to be purpose-built for operations at the edge, supporting use cases such as tracking trailers and containers across borders, monitoring leased equipment throughout its lifecycle, locating shared agricultural assets in remote paddocks, and gaining early visibility of critical equipment during mining exploration.

With AssetHawk developed on a TAA-compliant supply chain, and backed by the company's experience operating secure satellite networks commercially, Myriota is confident that the device can meet the needs of government and enterprise customers for whom trust and resilience are critical.

“Most tracking projects fail not in the lab, but at scale – when battery swaps, coverage gaps and complex integrations erode the business case,” said Myriota CEO Ben Cade. “AssetHawk is designed to flip that equation. By delivering global coverage, predictable multi‑year life and straightforward integration in a single device, we’re giving solution providers and systems integrators a way to scale tracking profitably, even for assets that were previously too remote or low‑value to justify a tracker.”

This Solar-Powered Smart Sprinkler Keeps My Lawn Watered Without Any Power Cables

Once the device is configured, zone setup proceeds much like the Aiper and pricier Irrigreen apps: You create a zone, then use the app to define its boundaries. As with those systems, Oto's sprinkler is designed for precision watering, firing water in a beam in a single direction instead of a wide spray. That said, Oto's spray is comparatively narrow, hitting only a single, designated patch instead of producing a two-dimensional curtain of water like Irrigreen's “water printing” system. You get a nice preview of this as you set the boundaries of your yard.

Like its competitors, Oto lets you set each zone as a spot (for watering a single tree, perhaps), a line (for a flowerbed), or a 2-D area (for a yard). I tested all of these modes but spent most of my time working with area zones, which are the most complex option. When defining an area zone, I found Oto’s system to be virtually identical to that of Irrigreen and Aiper, though ever so slightly slower to respond to commands. Even so, it’s very easy to use: A simple interface lets you drop points around the sprinkler to define the boundaries of the zone. When you’ve made a full circle around the sprinkler, the area is complete.

Once a zone is defined, you can assign it a schedule, with copious options for which days to water (odd days, even days, select days of the week, every day), and designate a start time (though there is no option to tie the start time to sunrise or sunset). Each schedule also gets a weekly watering limit (in inches of depth), which is then parceled out over each week's watering runs. Weather intelligence features let you skip watering if your zip code receives measurable rainfall or if winds are high (both based on internet reports); you can tweak both the amount of rain and the wind speed needed to trigger a skip. The app logs the 20 most recent runs and includes a calendar that details upcoming events.
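The review doesn't say exactly how Oto divides the weekly limit among runs or evaluates its weather thresholds. A minimal Python sketch, assuming an even split across the week's scheduled runs and simple at-or-above threshold checks, might look like this; the `Schedule` class and its default thresholds are hypothetical, not Oto's app logic.

```python
from dataclasses import dataclass


@dataclass
class Schedule:
    """Toy watering schedule (hypothetical, not Oto's actual app logic)."""
    weekly_limit_in: float       # weekly watering limit, in inches of depth
    runs_per_week: int           # scheduled watering runs in the week
    rain_skip_in: float = 0.125  # assumed default: skip if rain reaches 1/8 inch
    wind_skip_mph: float = 15.0  # assumed default: skip if wind reaches 15 mph

    def depth_per_run(self) -> float:
        """Split the weekly limit evenly across runs (an assumption)."""
        if self.runs_per_week <= 0:
            raise ValueError("runs_per_week must be positive")
        return self.weekly_limit_in / self.runs_per_week

    def should_skip(self, rain_in: float, wind_mph: float) -> bool:
        """Skip a run if reported rain or wind meets the user-set threshold."""
        return rain_in >= self.rain_skip_in or wind_mph >= self.wind_skip_mph


if __name__ == "__main__":
    s = Schedule(weekly_limit_in=1.0, runs_per_week=4)
    print(s.depth_per_run())         # 0.25 inches per run
    print(s.should_skip(0.0, 20.0))  # True: too windy
```

Keeping the thresholds as user-editable fields mirrors the review's point that both the rain amount and the wind speed that trigger a skip can be tweaked.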

When watering an area, Oto takes a novel approach to covering the lawn, first moving in circular arcs directly around the sprinkler, then slowly increasing in range with each successive swipe. When finished, it does additional “clean-up” runs to hit any areas that the initial watering arcs didn’t reach. The speed is slow enough and the size of the water’s beam is large enough that the resulting coverage is solid. After test runs, I found the yard to be plenty wet across the entire zone, with no dry patches.

As with all sprinklers, changes in water pressure can make for occasional over- or underwatering of areas, but I found this to be a minimal problem when using the Oto. However, when watering at the terminus of Oto’s range, the power needed to throw the water that far can make for a strong splashdown, which may result in some soil erosion or damage to more sensitive plants.

The Oto also has a “play mode” option that lets you use the sprinkler for a watery game of chase or a more random “splash tag” mode, aka “try to avoid getting hit by the water.” Pro tip: It’s impossible not to get hit.

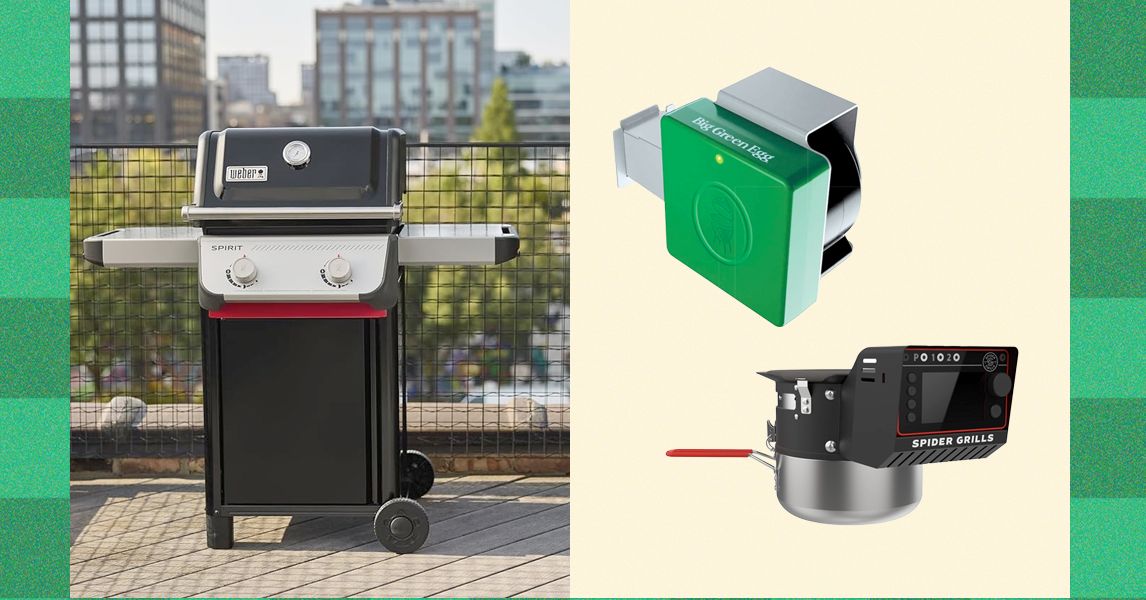

Why Is Your Grill So Dumb? The Best Grills Set Temp Like an Oven

It’s likewise smartly designed, packing up into—as you likely already gleaned—the shape of a suitcase. The heavy-duty handles and latches are strong. Though the Nomad is 28 pounds, which is a bit on the heavy side for a single-hand carry, the shape and large handle actually make it easier to carry than smaller and cheaper models.

The Nomad uses a dual-venting system to achieve good airflow, even when the lid is closed. The vents, combined with the raised fins on the bottom of the grill (which elevate your charcoal, allowing air to flow underneath), allow for very precise control of both high and low temperatures. If you live and die by overlanding, this grill could be your new constant companion.

A Great Budget Portable Grill: WIRED reviewer Scott Gilbertson also loves the simple Weber Jumbo Joe ($90), a smaller version of the classic Original Kettle. It’s an easy choice for tailgates, especially. And if you want to use it at home, you can build yourself a stand for home cookouts. It’s low-cost, light, and dead simple. All are virtues.

Other Grills I Recommend

Recteq X-Fire Pro 825 for $1,400: Pellet smokers rarely crest much over 450 degrees Fahrenheit, which does not offer the sear you'd get on a charcoal or gas grill. But Recteq's 825-square-inch, dual-pot X-Fire Pro wants to be your everything device, notes WIRED reviewer Kat Merck. In Smoke Mode, the left fire pot ignites for classic low-and-slow smoking. Switch the big knob to Grill Mode, and both pots fire up, with an adjustable damper over the right side. The damper, controllable with another knob, allows you to open access to the right fire pot just a little bit, or all the way to the gates of hell: 1,200 degrees Fahrenheit. It takes about 20 minutes for the fire pot to get going this high, and if you don't clean the fire pot first, it'll kick off a lot of sparks in the process. Who knows why you need to get to 1,200 degrees? But as Merck notes, this is a company known for a cartoon bull logo and bull-horn handles. “Recteq likes to be extreme, so it tracks,” she says. If you keep your sear to a more human 600 degrees Fahrenheit, it's a solid grill and sear experience. But keep in mind that the high power draw from the dual igniters will require a 10- or 12-gauge extension cord, which is probably heavier-duty than the cord you've got at home. The X-Fire also didn't produce the same smokiness as WIRED's top-pick Recteq Flagship 1600, according to Merck's testing, which means you'll end up using smoke tubes at low temperature if you want to get more smoke in the meat. Note, too, that the advertised 20-pound pellet capacity is split between fire pots. This could mean refilling a 10-pound hopper multiple times during a long cook.

Traeger Woodridge Pro for $1,000: The Traeger Woodridge Pro is WIRED's previous top-pick pellet grill and smoker for most people. It still sits beautifully at the intersection of value and utility. It's a straightforward beast of a thing that's easy to clean, easy to dial in for a perfect rack of ribs, and big enough to cook two pork bellies at the same time. My new top-pick Recteq has a couple of smart features that make me prefer it, like temperature history on its meat probes and an easier learning curve on smart features. But this Woodridge will still make you quite popular in the neighborhood.

Traeger Timberline Wi-Fi Wood Pellet Grill for $3,300: If you're serious about grilling and smoking, Traeger's Timberline is almost a step up from a smoker. It's the perfect all-in-one outdoor kitchen. It uses the same wireless smoking smarts as the Woodridge but adds some extras, like an induction burner (perfect for adding a last-minute sear with a cast-iron pan or steaming some veggies). The insulated smoke box has room for six pork shoulders, or the equivalent in racks of ribs or chickens. Former WIRED editor Parker Hall has managed to feed hundreds of people using it. (As a longtime food and barbecue critic, I can vouch heartily for Hall's resulting brisket and ribs.) If that's not enough, there's also an XL version that's even bigger. “All of my meats heated evenly and were perfectly cooked right when the smoker said they would be,” Hall says. If you want flawless smoking from the comfort of your couch and price is not a factor, the Timberline delivers.

Masterbuilt Gravity Series 800 for $899: This spacious Masterbuilt offers a nice combination, notes WIRED reviewer Chris Smith: charcoal flavor with the temperature precision of gas or electricity. The large, top-loading charcoal hopper uses gravity (hence the name) to feed heat into an internal housing, and an integrated fan enables precise digital temperature control—on the device or via the app. You’ll reach 700 degrees Fahrenheit within 15 minutes. Temperatures are remarkably consistent once stabilized, and if you want to add smoke flavor, just throw wood chunks into the ash bin and let falling charcoal embers do the rest. But the versatility comes with caveats. You may miss the ability to sear directly over a flame, and you’ll need to change out the internal housing before switching to the flat-top grill.

Yoder YS640S Pellet Smoker for $2,700: Most grills do one thing well and several others poorly or not at all. Yoder’s YS640S is a more versatile tool, thanks to a design that allows easy access to the auto-feed firebox. Like Traegers that are half the price, this Kansas-made grill uses an electric fan and an auger to feed wood pellets in for a slow smoke session. It’s all driven by a control board that sends temp alerts and allows you to adjust the temperature via Wi-Fi. As a smoker, it easily handled ribs and a chuck roast, holding the temperature better than most. This is thanks to its bomb-proof 10-gauge steel construction, which means this grill weighs as much as a refrigerator. Where the Yoder really stands out, though, is as a grill and possible pizza oven. By removing a steel plate positioned over the fire pit, you can sear burgers directly over the flame or remove the grills and plop on a hefty pizza oven attachment ($489), which uses the pellet feed system to maintain a constant 900-plus degrees Fahrenheit.

A Grill to Avoid

Kamado Joe Konnected Joe for $1,900: There’s a lot to like about this kamado-style grill. Indeed, WIRED previously recommended it for its electric ignition and Wi-Fi connectivity that allows you to measure the temperature of the interior and the meat via two probes. But over long-term use, WIRED commerce director Martin Cizmar has had constant problems with the electric grill tripping the 2-year-old GFCI outlets on his patio. Once it even tripped the breaker. A Reddit thread reveals this is a common problem. Like the Redditors, Cizmar found temporary relief by running an extension cord into an outlet in his kitchen, but even that has failed him a few times during testing. Unfortunately, this grill is a hard pass until the issue is resolved.

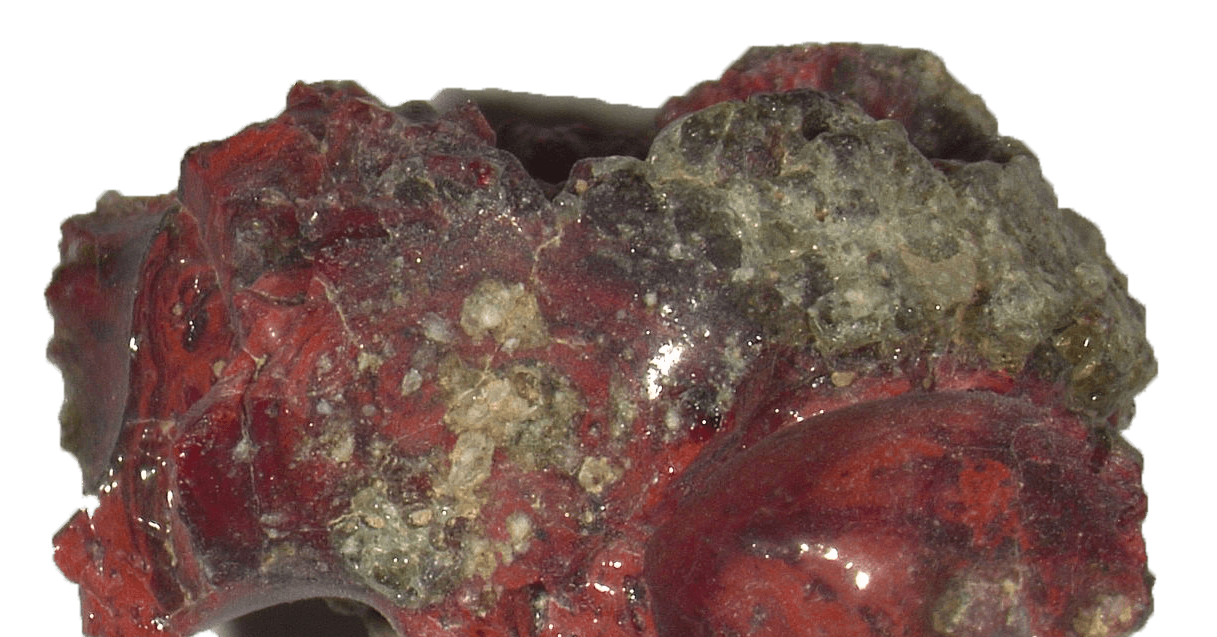

The First Atomic Bomb Test in 1945 Created an Entirely New Material

During the Trinity nuclear test on July 16, 1945, in the New Mexico desert—the world’s very first test of an atomic bomb—a new material spontaneously formed. It was discovered only recently, by an international research team coordinated by geologist Luca Bindi at the University of Florence, which identified the novel clathrate based on calcium, copper, and silicon. It’s a material never before observed either in nature or as an artificial compound created in the laboratory.

What Are Clathrates?

The term “clathrates” denotes materials characterized by a “cage-like” structure that traps other atoms and molecules inside, giving them unique properties. These materials are of great technological interest and are being studied for applications ranging from energy conversion (as thermoelectric materials capable of transforming heat into electricity) to the development of new semiconductors and the storage of gases such as hydrogen for future energy technologies.

The New Material

To discover the new material, researchers focused on trinitite, a silicate glass containing rare metallic phases. Using techniques such as X-ray diffraction, the team identified a type I clathrate based on calcium, copper, and silicon within a tiny copper-rich metal droplet embedded in a sample of red trinitite.

The researchers say the new material formed spontaneously during the nuclear explosion. This indicates that extreme conditions, such as very high temperatures and pressures, can generate new materials that are impossible to obtain by traditional methods.

Natural Laboratories

The discovery is even more interesting because in the same detonation event another very rare material was formed: a silicon-rich quasicrystal, already documented by the team of experts led by Bindi a few years ago.

A quasicrystal, as Bindi told WIRED at the time, is something that is not a crystal but looks a lot like one. “Their peculiarity,” he said, “is that the atomic arrangement, which is not periodic but nearly so, creates incredible symmetries, from which derive amazing physical properties that are, among other things, very difficult to predict.”

Establishing the link between these structures therefore helps scientists better understand how atoms organize under extreme conditions and expand the possibilities for designing new materials. “Events such as nuclear explosions, lightning strikes, or meteoritic impacts function as true natural laboratories,” the researchers explain. “They allow us to observe forms of matter that we cannot easily reproduce in the laboratory.”

In essence, this research opens new vistas for the development of innovative technologies, demonstrating that even destructive events can bequeath discoveries useful for the future.

This story originally appeared in WIRED Italia and has been translated from Italian.
