Tech

Privacy will be under unprecedented attack in 2026 | Computer Weekly

The privacy of electronic communications will face new risks in 2026, as the UK and other governments push for greater capabilities to harvest and analyse more data on private citizens, and to make it harder to protect communications with end-to-end encryption.

Over the next 12 months, we can expect more pressure from the UK and Europe to restrict the unencumbered use of end-to-end encrypted email and messaging services such as Signal, WhatsApp and many others.

In the 1990s, the US government tried and ultimately failed to persuade telecommunications companies to install a device known as the Clipper chip to provide the US National Security Agency (NSA) with “backdoor” access to voice and data communications.

The Crypto wars of 2026 are more subtle, with controls and restrictions on encryption pushed by governments, law enforcement agencies and intelligence services as a means of detecting child sexual abuse and terrorist material being promulgated through encrypted email and messaging systems.

The answer governments are settling on is to encourage the use of scanning technology in a voluntary or compulsory way, to identify problematic content before it is encrypted.

Cryptographers and computer scientists have repeatedly warned that such plans will create security vulnerabilities that will leave the public less safe than before.

Chat Control and client-side scanning

The European Parliament and Council are expected to adopt the controversial Child Sexual Abuse Regulation (CSAR) in spring 2026. In its current form, it proposes that messaging platforms voluntarily scan private communications for offending content, combined with proposals for age verification to check the age of users.

Known by the nickname Chat Control, its critics – such as former MEP Patrick Breyer, a jurist and digital rights activist – claim the regulation will open the doors to “warrantless and error-prone” mass surveillance of European Union (EU) citizens by US technology companies. The algorithms, say critics, are notoriously unreliable, potentially exposing tens of thousands of legal private chats to police scrutiny.

Chat Control will also put pressure on technology companies to introduce age checks to help them “reliably identify minors”, a move that would likely require every citizen to upload an ID or take a face scan to open an account on an email or messaging service. According to Breyer, this creates a de facto ban on anonymous communication, putting whistleblowers, journalists and political activists who rely on anonymity at risk.

Online Safety Act

In the UK, there remain concerns about provisions in the Online Safety Act that, if implemented by regulator Ofcom, would require technology companies to scan encrypted messages and emails.

These powers attracted widespread criticism from technology companies as the bill passed into law, with Signal warning it would pull its encrypted messaging service from the UK if it was forced to introduce what it called a “backdoor”.

Commentators think there is little current appetite for Ofcom to mandate client-side scanning for private communications, given the level of opposition.

But it may require providers of public and semi-public services, such as cloud storage, to introduce scanning services to detect illegal content.

“I think they may be waiting to see what happens in Europe with the Chat Control proposal, because it’s quite hard for the UK to go alone,” James Baker, campaigner at the Open Rights Group, told Computer Weekly.

Perceptual hash matching

One of the items on Ofcom’s agenda is a form of scanning, known as perceptual hash matching, which uses an algorithm to decide whether images or videos are similar to known child abuse or terrorism images.

A consultation document from Ofcom proposes requiring tech platforms that allow users to upload or share photographs, images and videos – including file storage and sharing services, and social media companies – to introduce the technology for detecting terrorism and abuse-related material.

“We also think some services should go further – assessing the role that automated tools can play in detecting a wider range of content, including child abuse material, fraudulent content, and content promoting suicide and self-harm, and implementing new technology where it is available and effective,” it says in its consultation document.

But there are questions about the accuracy of perceptual hash matching, and the risk that its use may lead to people wrongly being barred from online services for alleged crimes they have not committed.

Critics point out that perceptual hash matching used to be called “fuzzy matching” – and for good reason. Although its new name, “perceptual hash matching”, gives the impression of precision and predictability, in reality, it produces false positives and negatives.
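To make the false-positive and false-negative problem concrete, here is a minimal Python sketch of one simple perceptual hash, the “average hash”. It is an illustration only: the article does not say which algorithm Ofcom or any platform uses, and the 4x4 pixel grids, the `is_match` helper and the threshold of 2 bits are all hypothetical choices made for this example.

```python
from typing import List

Image = List[List[int]]  # grayscale pixels, 0-255 (toy 4x4 grids here)

THRESHOLD = 2  # hypothetical: max differing bits still treated as a "match"


def average_hash(pixels: Image) -> int:
    """One bit per pixel: 1 if the pixel is brighter than the image mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions where the two fingerprints differ."""
    return bin(a ^ b).count("1")


def is_match(candidate: Image, known: Image, threshold: int = THRESHOLD) -> bool:
    """Fuzzy comparison: fingerprints within `threshold` bits count as a match."""
    return hamming_distance(average_hash(candidate), average_hash(known)) <= threshold


# A made-up "known" image: bright top-left and bottom-right quadrants.
known: Image = [
    [200, 200, 10, 10],
    [200, 200, 10, 10],
    [10, 10, 200, 200],
    [10, 10, 200, 200],
]

# The same picture, slightly brightened: intended to match.
brightened: Image = [[p + 20 for p in row] for row in known]

# A different picture that happens to share the same light/dark layout.
unrelated: Image = [
    [150, 180, 90, 60],
    [170, 160, 80, 70],
    [50, 90, 140, 190],
    [60, 70, 160, 150],
]

# The known picture mirrored left to right.
mirrored: Image = [list(reversed(row)) for row in known]

print(is_match(brightened, known))  # True: the intended behaviour
print(is_match(unrelated, known))   # True: a false positive
print(is_match(mirrored, known))    # False: a false negative
```

In this toy case the unrelated image produces exactly the same fingerprint as the known one, while a simple mirror flip changes all 16 bits. Production systems use far more elaborate hashes, but the trade-off is the same in kind: loosening the threshold catches more altered copies at the cost of more innocent matches.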

Hundreds of people have been blocked from Instagram, owned by Meta, after being wrongly accused of breaching Meta’s policies on child sexual exploitation and abuse. The company’s actions took a huge emotional toll on the people affected, and in some cases led to people losing their online businesses, the BBC reported in October 2025.

Alec Muffett, security expert and former Facebook engineer, told Computer Weekly that Ofcom’s proposals display “a horrifying lack of safety by design” and said its proposal to force companies to adopt the technology without mitigating the potential risks is “derelict”.

“Perceptual hashing is just a fancy name for what we used to call ‘fuzzy matching’ with ‘digital fingerprints’, and even if we ignore the problem of false positives, we are left with the risk of creating an enormous cloud surveillance engine by logging all queries for even benign digital fingerprints,” he said.

Encryption apps viewed as national security risk

There are signs of increasing government discomfort with encrypted communications. In December 2025, the Independent Reviewer of State Threats Legislation delivered a stark warning that developers of encryption technology could be subject to police stops, detention and questioning, and the seizure of their electronic devices under national security laws.

According to Jonathan Hall KC, the developer of an app whose selling point is that it offers end-to-end encryption could be considered to be unwittingly engaged in “hostile activity” under Schedule 3 of the Counter-Terrorism and Border Security Act 2019.

“It is a reasonable assumption that [the development of the app] would be in the interests of a foreign state even if the foreign state has never contemplated this potential advantage,” he wrote.

Digital ID all over again

The UK’s proposals for a mandatory digital ID scheme look set to be another battleground for privacy in 2026. The government says the scheme will help to crack down on illegal immigration by introducing mandatory “right to work” checks by the end of the Parliamentary term.

MPs were scathing when the bill was introduced in Parliament. “The real fear here is that we will be building an infrastructure that can follow us, link our most sensitive information and expand state control over all our lives,” said Rebecca Long-Bailey during the debate. Others raised concerns about the cyber security risks of storing details of the population on a central government database.

Gus Hosein, executive director of campaign group Privacy International, notes that the Home Office is repeating the same arguments originally put forward in 2003 when Tony Blair attempted to introduce a national identity card. The scheme was scrapped by the Conservative and Liberal Democrat coalition in 2010. “It’s just the same boring rhetoric: ‘It’s going to stop ID fraud, it’s going to stop terrorism, it’s going to stop migration problems,’” he said. “Do we really have to go through the whole process of debunking this again?”

Hosein said the prospects of the Home Office coming up with a workable system before the next election are low. The political climate is different this time. Nearly three million people have signed a Parliamentary petition calling for the idea to be scrapped. “If they try and do the classic thing, which is to try and build something grand and momentous, it will take forever,” he said. “I would not mind an ID system that actually worked, I just don’t want the Home Office within 10,000 miles of it.”

When combined with facial recognition, digital ID raises further privacy issues. Campaign groups are expected to bring a legal challenge in 2026 after Freedom of Information Act requests revealed that the government covertly allowed police forces to search 150 million UK passport and immigration database photos for matches of images captured by facial recognition technology.

Big Brother Watch and Privacy International have issued legal letters before action to the Home Office and the Metropolitan Police. They argue that there is no clear legal basis for the practice and that the Home Office has kept the public and Parliament in the dark.

“There is a risk when you roll out digital facial recognition cameras that the images used for digital ID will be used to track you around town centres,” said the Open Rights Group’s Baker.

Apple backdoors and technical capability notices

This year will see further legal challenges at the Investigatory Powers Tribunal against the Home Office’s secret order issued against Apple, requiring it to facilitate access for law enforcement and intelligence agencies to encrypted data stored by Apple’s customers on iCloud.

Scheduled for the spring, the case brought by Privacy International and Liberty will challenge the lawfulness of the Home Office using a technical capability notice (TCN) to require Apple to disclose the encrypted data of users of its Advanced Data Protection (ADP) service worldwide.

Apple is expected to issue a new legal challenge after the UK government abandoned its original wide-ranging TCN and replaced it with an order focused on providing access only to ADP users in the UK, ending Apple’s legal challenge, at least for now.

The case has the potential to turn into a mammoth battle, reaching the Supreme Court and the European Court of Human Rights.

Surveillance of journalists

This year will also see further legal challenges that will test the boundaries between state intrusion and the professional privileges accorded to lawyers and journalists to protect the confidentiality of their clients or journalistic information.

The Investigatory Powers Tribunal is due to decide on a case brought by the BBC and former BBC journalist Vincent Kearney against the Police Service of Northern Ireland and the Security Service, MI5.

The Security Service broke with the conventions of Neither Confirm Nor Deny (NCND) to acknowledge to the tribunal that it had unlawfully obtained phone communications data from Kearney in 2006 and 2009, while he was working at the BBC, in an attempt to identify his confidential sources.

Although MI5 followed the Communications Data code of practice at the time, the code did not meet the strict legal tests for accessing journalistic material, which is protected under the European Convention on Human Rights.

In a judgment just before Christmas, the IPT rejected arguments that MI5 should disclose further details of surveillance operations against Kearney and other BBC journalists, including operations that had proper legal approval. The IPT will decide what remedy is due in 2026, and whether Kearney and the BBC should receive compensation.

Another legal case will test the boundaries between police surveillance and the legal protection given to lawyers to protect the confidentiality of discussions with their clients when subject to police stops.

Fahad Ansari, a lawyer who acted for Hamas in an attempt to overturn its proscription as a terrorist organisation in the UK, had his mobile phone seized by police after he was detained under Schedule 7 of the Terrorism Act 2000 at a ferry port, after returning from a family holiday.

The case is believed to be the first targeted use of Schedule 7 powers – which allow police to stop and question people and seize their electronic devices without the need for suspicion – against a practising solicitor.

Ansari is seeking a judicial review to challenge the right of police to examine the contents of his phone, which contains confidential and legally privileged material from his clients, accumulated over 15 years.

The legal fallout from EncroChat and Sky ECC

The legal fallout from an international police operation to hack the encrypted phone networks Sky ECC and EncroChat more than five years ago will continue.

French police led operations to harvest tens of millions of encrypted messages used as evidence of criminality to bring prosecutions against drug gangs across Europe and the UK.

Defence lawyers and forensic experts have raised questions about the reliability of the evidence supplied by the French to the UK and EU states through Europol.

France has declared the hacking operation against EncroChat and Sky ECC a state secret and refused to allow members of the French Gendarmerie to give evidence on how the intercepted data was obtained.

This has meant individuals facing charges outside France based on evidence from EncroChat or Sky ECC have no legal recourse to challenge the legality of the French hacking operation.

Courts in the EU are obliged to accept the evidence provided by France under the “mutual recognition” principle that applies when one EU state supplies evidence to another under a European Investigation Order.

At the same time, people have been denied the right to challenge the evidence against them in the French courts, leaving people charged with offences based on the hacked phone data without legal recourse to appeal in any jurisdiction.

Decisions by the European Court of Justice and the European Court of Human Rights, expected this year, could end that anomaly.

In one case, the French Supreme Court – La Cour de cassation – has asked the Court of Justice to decide whether France’s refusal to allow non-French citizens to challenge the lawfulness of the French hacking operations in France contravenes EU law. According to La Cour de cassation, the decision is likely to have “significant consequences” for legal proceedings based on intercepted evidence in the EU.

In the second case, the European Court of Human Rights is expected to decide on a complaint from a German citizen, Murat Silgar, who was jailed for drug offences on the basis of EncroChat evidence.

Silgar argues that the German courts had used illegally obtained communications data and that technical details of the French retrieval of EncroChat data were not shared with him, in breach of the European Convention on Human Rights, which protects the right to a fair trial and the right to private correspondence.

Justus Reisinger, a member of a coalition of defence lawyers known as the Joint Defence Team, told Computer Weekly the cases would address “a fundamental principle” in cross-border and digital investigations. “The law of the European Union requires that people have an effective remedy,” he said.

These are just a few of the battle lines between technology and privacy that will play out in 2026. For governments, the promise of a “technical fix” to deal with wider societal problems, such as child abuse and terrorism offences, is attractive. But history has shown that “technical fixes” rarely work, and often have unforeseen consequences.

This Windows Laptop Makes the MacBook Neo Look Overpriced

The MacBook Neo made quite a splash when it landed in March. $599 for a MacBook felt groundbreaking, and it was easy for casual onlookers to declare that Windows laptops had no true answer to it.

But what if I told you there was a Windows option that was better in almost every way? That’s the HP OmniBook 5, a laptop you’ve probably never heard of unless you watch the space closely. I’ve been recommending it ever since I tested it last month. The price has been fluctuating, but more often than not, the 14-inch model was selling for $500. You read that right: $500. Today, the lowest consistent price you’ll find is $730 at Walmart, but I’ve seen HP frequently drop the price from $1,050 to around $500.

And just take a look at what you get for the price, because it’s absolutely stacked. It comes with 16 GB of RAM and 512 GB of storage, double what you get on the $599 MacBook Neo. There’s a 16-inch version as well, if you like the idea of having a bit more screen real estate to work with.

The HP OmniBook 5 is powered by the Qualcomm Snapdragon X, a highly efficient chip that delivers great, all-day battery life that’s at least on par with the MacBook Neo. If you haven’t used a Windows laptop in a few years and still think they can’t compete with MacBooks in battery life, you’re sorely mistaken.

The 16 GB of memory on the OmniBook 5 is particularly important to note, as it’s one of the big points of contention with the MacBook Neo. Being stuck at 8 GB in 2026 feels cruel on principle, and while testing it I was able to load up the MacBook Neo and easily find its breaking point. The 16 GB of memory on the HP OmniBook 5 is enough that you’ll never have to worry about how many tabs, applications, installations, or downloads you have going simultaneously. Combined with the better multicore performance of the Snapdragon X, it enables a kind of freedom that lets you forget about the hardware and focus on the task at hand. Don’t get me wrong—the MacBook Neo has its place, but calling it the undisputed king of budget laptops just isn’t right.

The HP OmniBook 5 Is Only $500

Now, I know what you’re thinking. Specs and performance don’t tell the whole story, and Apple has never been known for offering tons of specs for cheap. But the OmniBook 5 14 is also an attractive design in a highly portable package. At 0.5 inches, it’s exactly the same thickness as the MacBook Neo and right around the same weight too. Does the MacBook Neo have a bit more style and personality? Absolutely—especially if you fancy one of the bolder color options. But I’d say the OmniBook 5 is a very pretty laptop in its own right. It’s also made of aluminum, sturdy and well-built in your hands. The hinge is balanced nicely, allowing you to open the lid with one finger. It doesn’t feel cheap.

The 10 Best TV Shows to Stream This Month

After years of suffering in silence with her trauma, Vega eventually called out her attacker in one of the most public forums in existence: Facebook. Within just a few days, she was contacted by eight other women, most of them also American college students studying abroad, with eerily similar stories of their own encounters with Vela, who was known to many as “Manu.” This three-part docuseries traces how Vega found the courage to stand up to her attacker and how the far-reaching power of using one’s voice on social media can be used for more than just sharing memes and family photos. Ultimately, Vega’s efforts led authorities to determine that Manu had assaulted between 50 and 100 young women.

Star Wars: Maul—Shadow Lord

From The Mandalorian to Skeleton Crew, Disney+ has produced a dozen Star Wars TV shows since its streaming debut, and fans are always clamoring for more. This month, that means the premiere of Star Wars: Maul—Shadow Lord, a gritty, animated series for adults that is set after the events of the universe’s famous Clone Wars and told from the perspective of Maul, one of the space opera’s most notorious supervillains. But it unravels more like a crime-drama, as it follows Maul’s rogue attempts to use his Sith skills to rebuild his Shadow Collective, a massive crime syndicate composed of Sith leaders, Mandalorian warriors, bounty hunters, and more, all united by the goal of usurping Darth Sidious and destroying his Sith Order. IYKYK.

The Testaments

The Handmaid’s Tale marked a watershed moment for Hulu when, in 2017, it became the first streaming series to nab the Emmy for Outstanding Drama Series—solidifying the streamer’s reputation as a bona fide player. As that groundbreaking series signed off in 2025 after six seasons, it’s hardly surprising that Hulu would want to keep Margaret Atwood’s dystopian world alive, so now we have The Testaments. Set 15 years after the events of the original series, much of the series takes place at an elite prep school for young women learning to be the dutiful wives of the next wave of Commanders. Aunt Lydia (Ann Dowd) returns to terrify a new generation of young women, including Agnes (One Battle After Another’s breakout star Chase Infiniti), a pious young woman who is beginning to question the rules she has grown up obeying, and Daisy (Lucy Halliday), a Canadian teen and recent Gilead convert—all of whom have secrets they’re keeping.

Kara Swisher Wants to Live Forever

“There’s so much bad information that the good information gets drowned.” That’s the central thesis behind famed tech journalist Kara Swisher’s decision to dive headfirst into the science (and scams) of longevity—a multibillion-dollar industry that shows no signs of slowing—in this six-episode docuseries. Armed with her investigative skills and famously dry wit, Swisher talks to the brains behind brands promising wellness acolytes longer lives with everything from gene editing and AI-driven medical care to bleeding-edge anti-aging treatments. OpenAI CEO Sam Altman, outspoken “biohacker” Bryan Johnson, nepo baby venture capitalist Reed Jobs, and Nobel Prize–winning biochemist Jennifer Doudna are among those who help Swisher separate fact from fiction in the quest to live forever.

Margo’s Got Money Troubles

Margo Millet (Elle Fanning) is a clever, ambitious young woman with her whole life in front of her—until an affair with her English professor leaves her pregnant and suddenly thrust into adulthood. With mounting bills and limited options to gain real income, Margo ultimately turns to OnlyFans, where she quickly gains a large and lucrative following—and the judgment that comes along with that. Based on Rufi Thorpe’s bestselling 2024 novel, this dark dramedy cleverly uses its setup to challenge the many still-existing stigmas surrounding sex work and even single motherhood. While Fanning is the undoubted star, she is ably supported by an A-list team of costars, including Michelle Pfeiffer as her mom and former Hooters waitress Shyanne, and Nick Offerman as her dad Jinx, a former pro wrestler.

This Is a Gardening Show

First he was Between Two Ferns, now he’s got his own DIY gardening series. Emmy-winning actor-comedian Zach Galifianakis brings his absurdist comedy to this hilarious docuseries, which is (mostly) as earnest as it is funny. Each episode introduces viewers to a new group of gardeners. While it’s largely aimed at laughs, there’s also a real exploration of the many reasons why people choose to garden, which often leads to very real and important questions about mental health, sustainability, the disconnection many people feel in the modern world, the many flaws in our current “perverse” (Galifianakis’ word) food production system, and what that might mean for future generations. Appropriately, the series debuts on Earth Day (April 22).

Stranger Things: Tales From ’85

Much like Hulu wasn’t about to say goodbye entirely to The Handmaid’s Tale, just because Stranger Things said goodbye on New Year’s Eve doesn’t mean the gang from Hawkins, Indiana, is totally parting ways with Netflix. In this animated spinoff, the kids—Eleven, Mike, Will, Dustin, Lucas, and Max—are going back in time slightly, to 1985, where the friends are desperately trying to reacquaint themselves with “normal” life after their terrifying dealings with the Upside Down. But they soon realize that something is still amiss in Hawkins, and they quickly find themselves embroiled in yet another paranormal adventure. Much like the nostalgia-fueled live-action series, the animated show is meant to be reminiscent of the Saturday morning cartoons that were a staple of every ’80s kid’s pop culture diet. Notably, the show is also being heavily promoted as a more family-friendly entry in the series—meaning monsters for all. All 10 episodes will drop on April 23.

Buffy the Vampire Slayer

Buffy the Vampire Slayer is officially dead—at least for now. In mid-March, Sarah Michelle Gellar announced via Instagram that Hulu had put a stake through the heart of the long-awaited Buffy reboot, which would see the ’90s icon reprise her role as the vampire world’s biggest headache. But just because there presumably won’t be new episodes to enjoy doesn’t mean you can’t revisit the beloved original series.

In the Wake of Anthropic’s Mythos, OpenAI Has a New Cybersecurity Model—and Strategy

OpenAI on Tuesday announced the next phase of its cybersecurity strategy and a new model specifically designed for use by digital defenders, GPT-5.4-Cyber.

The news comes in the wake of an announcement last week by competitor Anthropic that its new Claude Mythos Preview model is only being privately released for now—because, the company says, it could be exploited by hackers and bad actors. Anthropic also announced an industry coalition, including competitors like Google, focused on how advances in generative AI across the field will impact cybersecurity.

OpenAI seemed to be seeking to differentiate its message on Tuesday by striking a less catastrophic tone and touting its existing guardrails and defenses while hinting at the need for more advanced protections in the long term.

“We believe the class of safeguards in use today sufficiently reduce cyber risk enough to support broad deployment of current models,” the company wrote in a blog post. “We expect versions of these safeguards to be sufficient for upcoming more powerful models, while models explicitly trained and made more permissive for cybersecurity work require more restrictive deployments and appropriate controls. Over the long term, to ensure the ongoing sufficiency of AI safety in cybersecurity, we also expect the need for more expansive defenses for future models, whose capabilities will rapidly exceed even the best purpose-built models of today.”

The company says that it has homed in on three pillars for its cybersecurity approach. The first involves so-called “know your customer” validation systems to allow controlled access to new models that is as broad and “democratized” as possible. “We design mechanisms which avoid arbitrarily deciding who gets access for legitimate use and who doesn’t,” the company wrote on Tuesday. OpenAI is combining a model where it partners with certain organizations on limited releases with an automated system introduced in February, known as Trusted Access for Cyber or TAC.

The second component of the strategy involves “iterative deployment,” or a process of “carefully” releasing and then refining new capabilities so the company can get real-world insight and feedback. The blog post particularly highlights “resilience to jailbreaks and other adversarial attacks, and improving defensive capabilities.” Finally, the third focus is on investments that the company says support software security and other digital defense as generative AI proliferates.

OpenAI says that the initiative fits into its broader security efforts, including an application security AI agent launched last month known as Codex Security, a cybersecurity grants program that began in 2023, a recent donation to the Linux Foundation to support open source security, and the “Preparedness Framework” that is meant to assess and defend against “severe harm from frontier AI capabilities.”

Anthropic’s claims last week that more capable AI models necessitate a cybersecurity reckoning have been controversial among security experts. Some say the concern is overstated and could feed a new wave of anti-hacker sentiment—consolidating power even more with tech giants. Others, though, emphasize that vulnerabilities and shortcomings in current security defenses are well known and really could be exploited with new speed and intensity by an even broader range of bad actors in the age of agentic AI.

-

Fashion1 week ago

Fashion1 week agoIndia’s exports face reset as EU links trade to carbon metrics: EY

-

Entertainment1 week ago

Entertainment1 week agoQueen Elizabeth II emotional message for Archie, Lilibet sparks speculation

-

Tech6 days ago

Tech6 days agoAs the Strait of Hormuz Reopens, Global Shipping Will Take Months to Recover

-

Entertainment1 week ago

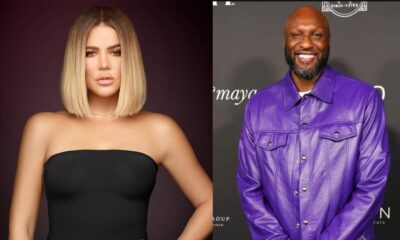

Entertainment1 week agoLamar Odom shocking response to Khloé Kardashian account of his overdose

-

Tech7 days ago

Tech7 days agoAzure customers up in arms over ‘full’ UK South region | Computer Weekly

-

Fashion1 week ago

Fashion1 week agoCII submits 20-pt agenda to Indian govt to back firms hit by Iran war

-

Tech6 days ago

Tech6 days agoThis AI Button Wearable From Ex-Apple Engineers Looks Like an iPod Shuffle

-

Tech1 week ago

Tech1 week agoA Single Strike Won’t Shut Off the Gulf’s Desalination System