Tech

Swedish welfare authorities suspend ‘discriminatory’ AI model | Computer Weekly

A “discriminatory” artificial intelligence (AI) model used by Sweden’s social security agency to flag people for benefit fraud investigations has been suspended, following an intervention by the country’s Data Protection Authority (IMY).

IMY’s involvement, which began in June 2025, was prompted by a joint investigation from Lighthouse Reports and Svenska Dagbladet (SvB), which revealed in November 2024 that a machine learning (ML) system being used by Försäkringskassan, Sweden’s Social Insurance Agency, was disproportionately and wrongly flagging certain groups for further investigation over social benefits fraud.

Those groups included women, individuals with “foreign” backgrounds, low-income earners and people without university degrees. The media outlets also found the same system was largely ineffective at identifying men and rich people who had actually committed some kind of social security fraud.

These findings prompted Amnesty International to publicly call in November 2024 for the immediate discontinuation of the system, which it described at the time as “dehumanising” and “akin to a witch hunt”.

Introduced by Försäkringskassan in 2013, the ML-based system assigns risk scores to social security applicants and automatically triggers an investigation if a score is high enough.

According to a blog post published by IMY on 18 November 2025, Försäkringskassan was specifically using the system to conduct targeted checks on recipients of temporary child support benefits – which are designed to compensate parents for taking time off work when they have to care for their sick children – but took it out of use over the course of the authority’s investigation.

“While the inspection was ongoing, the Swedish Social Insurance Agency took the AI system out of use,” said IMY lawyer Måns Lysén. “Since the system is no longer in use and any risks with the system have ceased, we have assessed that we can close the case. Personal data is increasingly being processed with AI, so it is welcome that this use is being recognised and discussed. Both authorities and others need to ensure that AI use complies with the [General Data Protection Regulation] GDPR and now also the AI regulation, which is gradually coming into force.”

IMY added that Försäkringskassan “does not currently plan to resume the current risk profile”.

Under the European Union’s AI Act, which came into force on 1 August 2024, the use of AI systems by public authorities to determine access to essential public services and benefits must meet strict technical, transparency and governance rules, including an obligation by deployers to carry out an assessment of human rights risks and guarantee there are mitigation measures in place before using them. Specific systems that are considered as tools for social scoring are prohibited.

Computer Weekly contacted Försäkringskassan about the suspension of the system, and why it elected to discontinue it before IMY’s inspection had concluded.

“We discontinued the use of the risk assessment profile in order to assess whether it complies with the new European AI regulation,” said a spokesperson. “We have at the moment no plans to put it back into use since we now receive absence data from employers among other data, which is expected to provide a relatively good accuracy.”

Försäkringskassan previously told Computer Weekly in November 2024 that “the system operates in full compliance with Swedish law”, and that applicants entitled to benefits “will receive them regardless of whether their application was flagged”.

In response to Lighthouse and SvB’s claims that the agency had not been fully transparent about the inner workings of the system, Försäkringskassan added that “revealing the specifics of how the system operates could enable individuals to bypass detection”.

Similar systems

Similar AI-based systems used by other countries to distribute benefits or investigate fraud have faced similar problems.

In November 2024, for example, Amnesty International exposed how AI tools used by Denmark’s welfare agency are creating pernicious mass surveillance, risking discrimination against people with disabilities, racialised groups, migrants and refugees.

In the UK, an internal assessment by the Department for Work and Pensions (DWP) – released under Freedom of Information (FoI) rules to the Public Law Project – found that an ML system used to vet thousands of Universal Credit benefit payments was showing “statistically significant” disparities when selecting who to investigate for possible fraud.

Carried out in February 2024, the assessment showed there is a “statistically significant referral … and outcome disparity for all the protected characteristics analysed”, which included people’s age, disability, marital status and nationality.
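The article does not say which statistical method the DWP assessment used. As a rough illustration of what a “statistically significant” referral disparity can mean, the sketch below applies a standard two-proportion z-test to the referral rates of two groups. The function name and all counts are hypothetical and are not taken from the DWP assessment.

```python
import math


def two_proportion_z_test(referred_a, total_a, referred_b, total_b):
    """Compare the referral rates of two groups.

    Returns (z, p_value), where p_value is the two-sided p-value
    under the null hypothesis that both groups share one referral rate.
    """
    rate_a = referred_a / total_a
    rate_b = referred_b / total_b
    # Pooled rate: the best estimate of the common rate if there is no disparity.
    pooled = (referred_a + referred_b) / (total_a + total_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (rate_a - rate_b) / standard_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts for illustration only: 120 of 1,000 claimants referred
# in one group versus 80 of 1,000 in another.
z, p_value = two_proportion_z_test(120, 1000, 80, 1000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value indicates only that the gap in referral rates is unlikely to be chance; it does not by itself explain why the gap exists.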

Civil rights groups later criticised DWP in July 2025 for a “worrying lack of transparency” over how it is embedding AI throughout the UK’s social security system, where the technology is being used to determine people’s eligibility for social security schemes such as Universal Credit or Personal Independence Payment.

In separate reports published around the same time, both Amnesty International and Big Brother Watch highlighted the clear risks of bias associated with the use of AI in this context, and how the technology can exacerbate pre-existing discriminatory outcomes in the UK’s benefits system.

Light-activated gel could impact wearables, soft robotics, and more

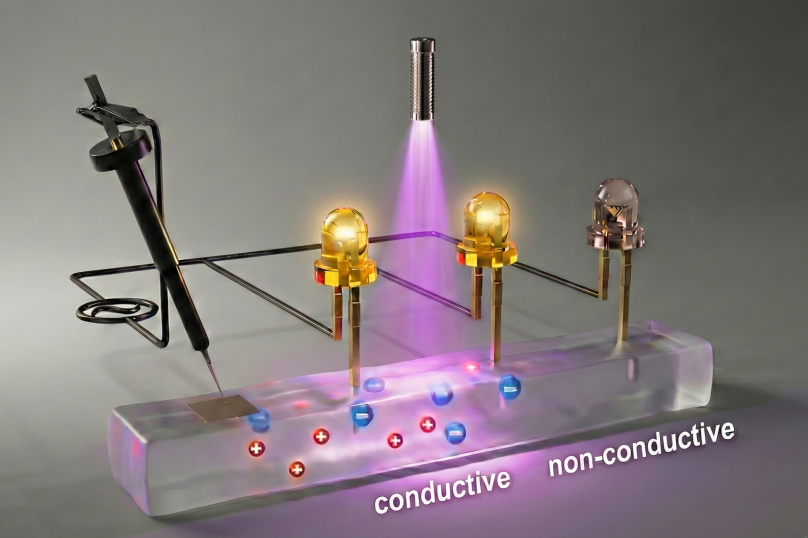

Consider the chief difference between living systems and electronics: The former is generally soft and squishy, while the latter is hard and rigid. Now, in work that could impact human-machine interfaces, biocompatible devices, soft robotics, and more, MIT engineers and colleagues have developed a soft, flexible gel that dramatically changes its conductivity upon the application of light.

Enter the growing field of ionotronics, which involves transferring data through ions, or charged molecules. Electronics does the same, with electrons. But while the latter is well established, ionotronics is still being developed, with one huge exception: living systems. The cells in our bodies communicate with a variety of ions, from potassium to sodium.

Ionotronics, in turn, can provide a bridge between electronics and biological tissues. Potential applications range from soft wearable technology to human-machine interfaces.

“We’ve found a mechanism to dynamically control local ion population in a soft material,” says Thomas J. Wallin, the John F. Elliott Career Development Professor in MIT’s Department of Materials Science and Engineering and leader of the work. “That could allow a system that is self-adaptive to environmental stimuli, in this case light.” In other words, the system could automatically change in response to changes in light, which could allow complex signal processing in soft materials.

An open-access paper about the work was published online recently in Nature Communications.

A growing field

Although others have developed ionotronic materials with high conductivities that allow the quick movement of ions, those conductivities cannot be controlled. “What we’re doing is using light to switch a soft material from insulating to something that is 400 times more conductive,” says Xu Liu, first author of the paper and former MIT postdoc in materials science and engineering who is now an incoming assistant professor at King’s College London.

Key to the work is a class of materials known as photo-ion generators (PIGs). These can become some 1,000 times more conductive upon the application of light. The MIT team optimized a way to incorporate a PIG into polyurethane rubber by first dissolving a PIG powder into a solvent, and then using a swelling method to get it into the rubber.

Much potential

In the material reported in the current work, the change in conductivity is irreversible. But Liu is confident that future versions could switch back and forth between insulating and conducting states.

She notes that the current material was developed using only one kind of PIG, polymer (the polyurethane rubber), and solvent, but there are many other kinds of all three. So there is great potential for creating even better light-responsive soft materials.

Liu also notes the potential for developing soft materials that respond to other environmental stimuli, such as heat or magnetism. “We’re inspired to do more work in this field by changing the driving force from light to other forms of environmental stimuli,” she says.

“Our work has the potential to lead to the creation of a subfield that we call soft photo-ionotronics,” Liu continues. “We are also very excited about the opportunities from our work to create new soft machines impacting soft wearable technology, human-machine interfaces, robotics, biomedicine, and other fields.”

Additional authors of the paper are Steven M. Adelmund, Shahriar Safaee, and Wenyang Pan of Reality Labs at Meta.

Dark Matter May Be Made of Black Holes From Another Universe

A recent cosmological model combines two of the most eccentric ideas in contemporary physics to explain the nature of dark matter, the invisible substance that makes up about 85 percent of all matter in the universe. To understand it, it’s necessary to look beyond the Big Bang we all know and consider two concepts that rarely intersect: cyclic universes and primordial black holes.

A Different Kind of Multiverse

There are different versions of the “multiverse.” The most popular model—that of the Marvel Cinematic Universe—proposes that there are as many universes as there are possibilities and that these versions of reality are parallel. Physics proposes something more sober and mathematically consistent: the cosmic bounce.

In this model, the universe is not born from a singularity, but expands, contracts, and expands again in an endless cycle. Each “universe” is not parallel, but sequential—that is, one arises from the ashes of the previous one.

Is it possible for something to survive the end of its universe and endure into the next? According to a paper published in Physical Review D, yes. Author Enrique Gaztanaga, a research professor at the Institute of Space Sciences in Barcelona, shows that any structure larger than about 90 meters could pass through the final collapse of a universe and survive the rebound. These “relics” would not only persist, but could also seed the formation of giant, unexplained structures observed in the early stages of the present-day universe. Moreover, they could be the key to understanding dark matter.

For decades, the dominant explanation for dark matter has been that it is an unknown particle or particles. But after years of experiments without direct detections, physicists have begun to explore alternatives. One of them proposes that dark matter is not an exotic particle, but an abundant population of small black holes that we overlook.

The idea is appealing, but it has a serious problem. For these black holes to explain dark matter, they would have to exist from the earliest moments of the universe, long before the first stars could collapse. There are indications that these objects could exist, but a convincing physical mechanism to explain their origin is lacking.

A Universe Born With Black Holes

This is where Gaztanaga’s newly proposed model shines. If cosmic bouncing allows compact structures to survive the collapse of the previous universe, then the current universe would have already been born with pre-existing black holes. They would not have to have been generated by extreme fluctuations or finely tuned inflationary processes, but would simply have been there from the first instant.

The assumption has the potential to solve two riddles at once: the origin of black holes and the nature of dark matter. If this model is correct, dark matter would not be a mystery of the early universe but rather a legacy of a cosmos that predates our own.

“Much work remains to be done,” Gaztanaga, also a researcher at the Institute of Cosmology and Gravitation at the University of Portsmouth, said in an article for The Conversation. “These ideas must be tested against data—from gravitational-wave backgrounds to galaxy surveys and precision measurements of the cosmic microwave background.”

“But the possibility is profound,” he added. “The universe may not have begun once, but may have rebounded. And the dark structures shaping galaxies today could be relics from a time before the Big Bang.”

This story originally appeared in WIRED en Español and has been translated from Spanish.

Europe’s Online Age Verification App Is Here

The European online age verification app is ready.

The app works with passports or ID cards, is built to be “completely anonymous” for the people who use it, works on any device (smartphones, tablets, and PCs), and is open source. “Best of all, online platforms can easily rely on our age verification app, so there are no more excuses,” said European Commission president Ursula von der Leyen at a press conference on Wednesday. “Europe offers a free and easy-to-use solution that can protect our children from harmful and illegal content.”

High Expectations

“It is our duty to protect our children in the online world just as we do in the offline world. And to do that effectively, we need a harmonized European approach,” von der Leyen said at Wednesday’s press conference. “And one of the central issues is the question, how can we ensure a technical solution for age verification that is valid throughout Europe? Today, I can announce that we have the answer.”

This answer takes the form of an open source app that any private company can repurpose, as long as it complies with European privacy standards and offers the same technical solution throughout the European Union. The user downloads the app, agrees to the terms and conditions, sets up a pin or biometric access, and proves their age through an electronic identification system, or by showing a passport or ID card (in which case biometric verification is also provided). The app does not store your name, date of birth, ID number, or any other personal information, according to the European Commission—only the fact that you are over a certain age.

After that, when a person using the app wants to access a social network (minimum age: 13), pornographic site (minimum age: 18), or any other age-protected content, if they are logged in from a computer, they need only scan the QR code shown on the site they want to visit. If, on the other hand, the person logs in from a smartphone, the app sends the proof of age directly. The platform does not access the document with which the user proved it in the first place.
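The data-minimisation idea described above, where a platform learns only that a user is over a given age, can be sketched as follows. This is a simplified illustration and not the Commission’s actual protocol: the function names and the shape of the proof are assumptions, and a real system would also need a cryptographic signature so platforms could trust the claim.

```python
from datetime import date


def age_on(birth_date, today):
    """Return completed years of age on the given day."""
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    return today.year - birth_date.year - (0 if had_birthday else 1)


def build_age_proof(birth_date, minimum_age, today):
    """Build a hypothetical proof that carries only a yes/no answer.

    The birth date is used for the calculation and then left out of the
    result, so nothing identifying is passed on to the platform.
    """
    return {
        "minimum_age": minimum_age,
        "satisfied": age_on(birth_date, today) >= minimum_age,
    }


# Example: someone born on 15 June 2010 asking for access on 1 March 2025.
proof = build_age_proof(date(2010, 6, 15), 13, date(2025, 3, 1))
print(proof)
```

The design choice illustrated here is that the check runs where the identity document is held, and only its outcome travels to the site being visited.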

Adoption Event

The need to introduce a common system for the entire European Union has been discussed for some time, and according to commission technicians, the technical work is now complete. Of course, it will still be possible to circumvent the system—all it takes is for an adult to lend their phone to a younger friend—but the technological architecture exists, and it will be up to EU member states to decide whether to integrate it into national digital wallets or develop independent apps.

“No More Excuses”

For the app to really be effective, platforms must be obligated to verify the age of their users—that’s where things get tricky. The Digital Services Act, which went into effect in 2024, requires “very large online platforms”—those with more than 45 million monthly users in the European Union—to take concrete steps to mitigate systemic risks related to child protection, with heavy penalties for noncompliance.

“And that’s why Europe has the DSA: to call online platforms to their responsibilities. Because Europe will not tolerate platforms making money at the expense of our children,” European Commission executive vice president Henna Virkkunen told a press conference. She added that after an investigation into TikTok, the European institutions plan to take similar action against Facebook, Instagram, and Snapchat, as well as four porn sites. “Since the platforms do not have adequate age verification tools, we developed the solution ourselves,” she concluded. In short, as von der Leyen also remarked, “there are no more excuses.”

Bare Minimum

So far, this is the European framework that sets the general rules. On this basis, member states can consider more restrictive measures. Italy was among the first to discuss how to regulate the use of social media by minors but has so far not landed on anything concrete. Elsewhere in the EU, France’s Emmanuel Macron has been a trailblazer on the issue, pushing France to discuss a rule to ban social networks for minors under the age of 15 entirely. So far, this measure has received broad political support—but the outcome depends largely on compatibility with the Digital Services Act and the availability of effective age verification systems like the app the European Commission just released.

This article originally appeared on WIRED Italia and has been translated.
