Tech

Student trust in AI coding tools grows briefly, then levels off with experience

How much do undergraduate computer science students trust tools such as GitHub Copilot and ChatGPT, chatbots powered by large language models? And how should computer science educators modify their teaching based on these levels of trust?

These were the questions that a group of U.S. computer scientists set out to answer in a study that will be presented at the Koli Calling conference Nov. 11 to 16 in Finland. In the course of the study’s few weeks, researchers found that trust in generative AI tools increased in the short run for a majority of students.

But in the long run, students said they realized they needed to be competent programmers without the help of AI tools, because these tools often generated incorrect code or did not help with code comprehension tasks.

The study was motivated by the dramatic change in the skills required from undergraduate computer science students since the advent of generative AI tools that can create code from scratch. The work is published on the arXiv preprint server.

“Computer science and programming is changing immensely,” said Gerald Soosairaj, one of the paper’s senior authors and an associate teaching professor in the Department of Computer Science and Engineering at the University of California San Diego.

Today, students are tempted to overly rely on chatbots to generate code and, as a result, might not learn the basics of programming, researchers said. These tools also might generate code that is incorrect or vulnerable to cybersecurity attacks. Conversely, students who refuse to use chatbots miss out on the opportunity to program faster and be more productive.

But once they graduate, computer science students will most likely use generative AI tools in their day-to-day work and will need to be able to do so effectively. This means they will still need to have a solid understanding of the fundamentals of computing and how programs work, so they can evaluate the AI-generated code they will be working with, researchers said.

“We found that student trust, on average, increased as they used GitHub Copilot throughout the study. But after completing the second part of the study–a more elaborate project–students felt that using Copilot to its full extent requires a competent programmer that can complete some tasks manually,” said Soosairaj.

The study surveyed 71 junior and senior computer science students, half of whom had never used GitHub Copilot. After an 80-minute class where researchers explained how GitHub Copilot works and had students use the tool, half of the students said their trust in the tool had increased, while about 17% said it had decreased. Students then took part in a 10-day-long project where they worked on a large open-source codebase using GitHub Copilot throughout the project to add a small new functionality to the codebase.

At the end of the project, about 39% of students said their trust in Copilot had increased. But about 37% said their trust in Copilot had decreased somewhat while about 24% said it had not changed.
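
One way to read these figures is as a net shift: the share of students reporting more trust minus the share reporting less. The short Python sketch below uses only the percentages quoted above. The `net_shift` helper is a hypothetical name introduced for illustration, and the "unchanged" share after the class is not reported in the article, so it is inferred here as the remainder, which is an assumption.

```python
# Approximate shares of students (in percent) as quoted in the article.
after_class = {"increased": 50, "decreased": 17}
after_project = {"increased": 39, "decreased": 37, "unchanged": 24}

# Assumption: the article does not report the "unchanged" share after the
# class, so it is inferred as whatever is left over from 100%.
after_class["unchanged"] = 100 - sum(after_class.values())


def net_shift(shares):
    """Percentage-point gap between students reporting more and less trust."""
    return shares["increased"] - shares["decreased"]


print(net_shift(after_class))    # 33
print(net_shift(after_project))  # 2
```

On these rounded figures, the gap narrows from roughly 33 percentage points after the class to roughly 2 after the project, which is the leveling-off the headline describes.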

The results of this study have important implications for how computer science educators should approach the introduction of AI assistants in introductory and advanced courses. Researchers make a series of recommendations for computer science educators in an undergraduate setting.

- To help students calibrate their trust and expectations of AI assistants, computer science educators should provide opportunities for students to use AI programming assistants for tasks with a range of difficulty, including tasks within large codebases.

- To help students determine how much they can trust AI assistants’ output, computer science educators should ensure that students can still comprehend, modify, debug, and test code in large codebases without AI assistants.

- Computer science educators should ensure that students are aware of how AI assistants generate output via natural language processing so that students understand the AI assistants’ expected behavior.

- Computer science educators should explicitly tell students about, and demonstrate, key features of AI assistants that are useful for contributing to a large codebase, such as adding files as context while using the “explain code” feature and using keywords such as “/explain,” “/fix,” and “/docs” in GitHub Copilot.

“CS educators should be mindful that how we present and discuss AI assistants can impact how students perceive such assistants,” the researchers write.

Researchers plan to repeat their experiment and survey with a larger pool of 200 students this winter quarter.

More information:

Anshul Shah et al, Evolution of Programmers’ Trust in Generative AI Programming Assistants, arXiv (2025). DOI: 10.48550/arxiv.2509.13253

Conference: www.kolicalling.fi/

Citation: Student trust in AI coding tools grows briefly, then levels off with experience (2025, November 3), retrieved 3 November 2025 from https://techxplore.com/news/2025-11-student-ai-coding-tools-briefly.html


Tech

Anthropic Plots Major London Expansion

Anthropic is moving into a new London office as it seeks to expand its research and commercial footprint in Europe, setting up a scrap between the leading AI labs for talent emerging from British universities.

The company, which opened its first London office in 2023, is moving to the same neighborhood as Google DeepMind, OpenAI, Meta, Wayve, Isomorphic Labs, Synthesia, and various AI research institutions.

Anthropic’s new, 158,000-square-foot office footprint will have space enough for 800 people—four times its current head count—giving it room to potentially outscale OpenAI, which itself recently announced an expansion in London.

“Europe’s largest businesses and fastest-growing startups are choosing Claude, and we’re scaling to match,” says Pip White, head of EMEA North at Anthropic. “The UK combines ambitious enterprises and institutions that understand what’s at stake with AI safety with an exceptional pool of AI talent – we want to be where all of that comes together.”

UK government officials had reportedly attempted to coax Anthropic into expanding its presence in London after the company recently fell out with the US administration. Anthropic refused to allow its models to be used in mass surveillance and autonomous weapon systems, leading to an ongoing legal battle between the AI lab and the Pentagon.

As part of the expansion, Anthropic says it will deepen its work with the UK’s AI Security Institute, a government body that this week published a risk evaluation of its latest model, Claude Mythos Preview. According to Politico, the UK government is one of few across Europe to have been granted access to the model, which Anthropic has released to only select parties, citing concerns over the potential for its abuse by cybercriminals.

The increasing concentration of AI companies in the same London district is an important step in creating a pathway for research to translate into AI products, says Geraint Rees, vice-provost at University College London, whose campus is around the corner from Anthropic’s new office.

“This cluster didn’t emerge from a planning document. It grew because serious researchers and companies understand that proximity isn’t a nice-to-have,” he said last month, speaking at an event attended by WIRED. “That’s how the innovation system actually works. It’s not a clean, linear transfer from lab to market. It’s messier, richer, more human than that.”

Tech

CYBERUK ’26: UK lagging on legal protections for cyber pros | Computer Weekly

The increasingly long-in-the-tooth Computer Misuse Act (CMA) of 1990 remains an albatross around the neck of British cyber security professionals, and even though the UK government committed last December to reforming it, every minute of delay is holding back the nation’s security innovation, resilience, talent, and ability to defend itself against cyber attacks, campaigners have warned.

Ahead of the National Cyber Security Centre’s (NCSC’s) upcoming CYBERUK conference in Glasgow, the CyberUp Campaign for reform of the CMA has published a new report, titled “Protections for Cyber Researchers: How the UK is being left behind”, to maintain pressure on Westminster.

The CMA defines the vague offence of unauthorised access to a computer, which the campaigners want changed because it was written 35 years ago and fails to account for the development of the cyber security profession, and the fact that in the course of their day-to-day work, cyber pros may sometimes need to hack into other systems.

“Cyber attacks are growing in scale, sophistication and severity, with a devastating impact on infrastructure, businesses and charities,” said a CyberUp campaign spokesperson.

“While other countries have moved to refresh their cyber laws in response, the UK’s Computer Misuse Act hasn’t been updated since before the modern internet – hardly the best platform for accelerating our defences into the next decade.”

The group’s report highlights how other nations (Australia, Belgium, France, Germany, Hong Kong, Malta, Portugal, and the USA) have already secured legal protections for cyber professionals that enable them to go about their business without fear of prosecution.

In Portugal – Britain’s oldest formal ally under a treaty dating back to the 14th Century – the government last year published Decreto-Lei 125/2025, implementing the European Union (EU) Network and Information Systems (NIS2) Directive and revising the country’s cyber crime law to ensure that ethical hackers and professional cyber security practitioners working in good faith are both recognised and protected.

Portugal’s laws now accept that some elements of cyber work may have to happen without explicit permission or involve unanticipated technical overreach that has a legitimate purpose.

As such, Portugal says that security work undertaken in good faith won’t be punished as long as the researcher fulfils a set of conditions. For example, they may act only to find vulnerabilities, which must be reported immediately; they must avoid harmful actions, such as conducting DDoS attacks or installing malware; and they must respect the integrity of any data they find or access, deleting it within 10 days once the issue is addressed.

CyberUp said Portugal’s example demonstrates how cyber crime laws can be modernised to legally protect research carried out in the public interest.

“Portugal has demonstrated how to modernise their equivalent law through cyber legislation. We urge the government to follow this example and act swiftly through the Cyber Security and Resilience Bill to achieve meaningful reform, or risk lagging even further behind our peers,” the spokesperson said.

Defence Framework

Working with cyber security experts and legal advisors, the CyberUp campaign has developed its own Defence Framework that would allow cyber professionals to present a statutory defence in court as long as they adhere to the Framework’s four core principles.

- Harm Vs. Benefit: The benefits of the activity must outweigh the potential harms;

- Proportionality: Cyber pros must take all reasonable steps to minimise the risks of their activity;

- Intent: They must act honestly, sincerely, and clearly direct themselves towards improving security;

- Competence: Their qualifications and professional memberships should demonstrate they are suitably equipped to perform cyber security work.

The campaigners say this framework will bring clarity and confidence to the security sector, enabling cyber pros to run essential research tasks without fear of criminal prosecution, helping organisations operate to recognised legal standards, and enabling a more open and collaborative relationship between the cyber sector and the UK government.

Tech

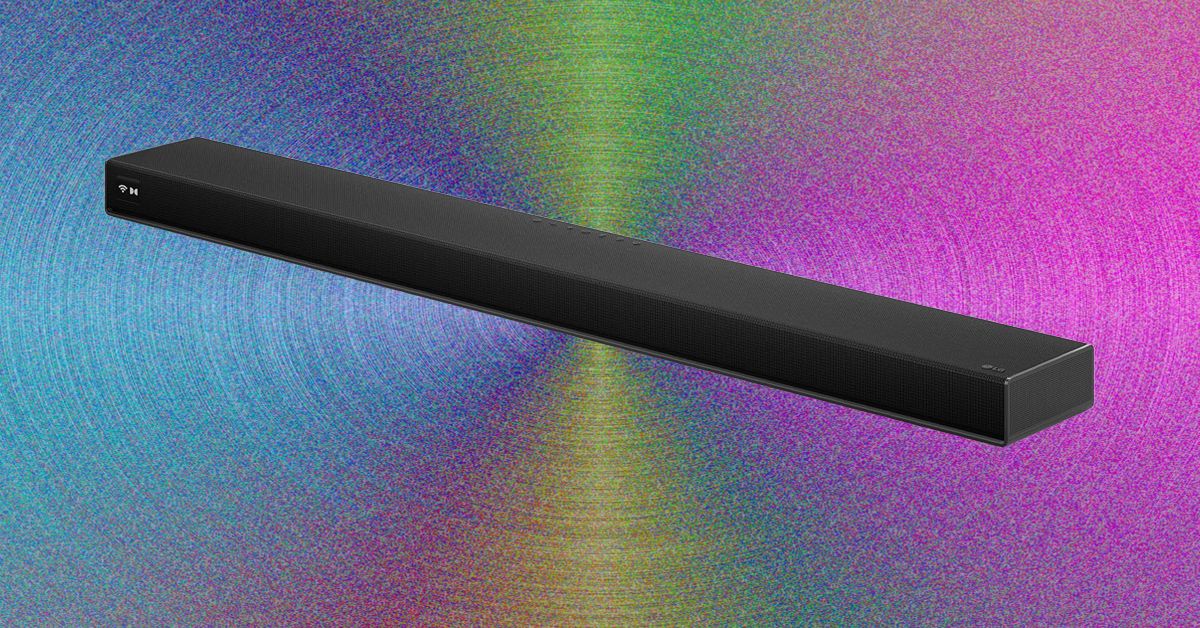

LG’s High-End Soundbar System Makes My Living Room Feel Like a Home Theater

Setup was relatively quick and painless. You just have to unbox four speakers, a soundbar, and a subwoofer, attach their power cables, and plug in everything. Pairing happens through the LG ThinQ app, which allows you to set up the Sound Suite system and tune it to exactly where you’re sitting in the room using your cell phone’s microphone.

You can also set up each speaker to play music and group it with any other LG smart speakers you might have around your home, like the more affordable $250 M5 bookshelf speaker, to create a whole-home system.

Once all the components were synced, I plugged the soundbar into the C5 OLED via HDMI, and was able to easily control everything via the TV remote’s volume and mute buttons. More in-depth settings had to happen in the app, but if you’re anything like me, this won’t become a regular chore. You’ll set it how you like it once and move on. While the pairing functionality with the LG TV was nice, it’s not required: the eARC port lets the Sound Suite work perfectly with any modern TV.

The bar itself runs the show, with a black-and-white display on the far left that shows your mode and volume, among other settings. In the center of the bar and below each speaker is an LED light strip that also shows the volume when you change it, which is a nice touch.

Getting Musical


The sound of the LG Sound Suite is full and cinematic, thanks in no small part to the extra dedicated speakers. Most competitors lack dedicated front left and right speakers, simply using the soundbar for these channels. As such, the soundstage was wider than on most competitors I’ve tried, with only Samsung’s flagship HW-Q990F as a real contender. Even the Samsung lacked the lower-frequency audio quality that these LG speakers provide.
