Tech

Human-machine teaming dives underwater

The electricity to an island goes out. To find the break in the underwater power cable, a ship pulls up the entire line or deploys remotely operated vehicles (ROVs) to traverse the line. But what if an autonomous underwater vehicle (AUV) could map the line and pinpoint the location of the fault for a diver to fix?

Such underwater human-robot teaming is the focus of an MIT Lincoln Laboratory project funded through an internally administered R&D portfolio on autonomous systems and carried out by the Advanced Undersea Systems and Technology Group. The project seeks to leverage the respective strengths of humans and robots to optimize maritime missions for the U.S. military, including critical infrastructure inspection and repair, search and rescue, harbor entry, and countermine operations.

“Divers and AUVs generally don’t team at all underwater,” says principal investigator Madeline Miller. “Underwater missions requiring humans typically do so because they involve some sort of manipulation a robot can’t do, like repairing infrastructure or deactivating a mine. Even ROVs are challenging to work with underwater in very skilled manipulation tasks because the manipulators themselves aren’t agile enough.”

Beyond their superior dexterity, humans excel at recognizing objects underwater. But humans working underwater can’t perform complex computations or move very quickly, especially if they are carrying heavy equipment; robots have an edge over humans in processing power, high-speed mobility, and endurance. To combine these strengths, Miller and her team are developing hardware and algorithms for underwater navigation and perception — two key capabilities for effective human-robot teaming.

As Miller explains, divers may only have a compass and fin-kick counts to guide them. With few landmarks and potentially murky conditions caused by a lack of light at depth or the presence of biological matter in the water column, they can easily become disoriented and lost. For robots to help divers navigate, they need to perceive their environment. However, in the presence of darkness and turbidity, optical sensors (cameras) cannot generate images, while acoustic sensors (sonar) generate images that lack color and only show the shapes and shadows of objects in the scene. The historical lack of large, labeled sonar image datasets has hindered training of underwater perception algorithms. Even if data were available, the dynamic ocean can obscure the true nature of objects, confusing artificial intelligence. For instance, a downed aircraft broken into multiple pieces, or a tire covered in an overgrowth of mussels, may no longer resemble an aircraft or tire, respectively.

“Ultimately, we want to devise solutions for navigation and perception in expeditionary environments,” Miller says. “For the missions we’re thinking about, there is limited or no opportunity to map out the area in advance. For the harbor entry mission, maybe you have a satellite map but no underwater map, for example.”

On the navigation side, Miller’s team picked up on work started by the MIT Marine Robotics Group, led by John Leonard, to develop diver-AUV teaming algorithms. With their navigation algorithms, Leonard’s group ran simulations under optimal conditions and performed field testing in calm waters using human-paddled kayaks as proxies for both divers and AUVs. Miller’s team then integrated these algorithms into a mission-relevant AUV and began testing them under more realistic ocean conditions, initially with a support boat acting as a diver surrogate, and then with actual divers.

“We quickly learned that you need more sensing capabilities on the diver when you factor in ocean currents,” Miller explains. “With the algorithms demonstrated by MIT, the vehicle only needed to calculate the distance, or range, to the diver at regular intervals to solve the optimization problem of estimating the positions of both the vehicle and diver over time. But with the real ocean forces pushing everything around, this optimization problem blows up quickly.”
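A heavily simplified sketch of the range-only idea can make the setup concrete. It assumes a stationary diver, exactly known vehicle positions, noise-free ranges, and no current, none of which hold in the field tests described here. The function name `estimate_position` and all numbers are hypothetical, and the Gauss-Newton solver is a generic choice, not the team's algorithm.

```python
import math

def estimate_position(anchors, ranges, guess, iterations=20):
    """Gauss-Newton least squares for a 2D position from range measurements.

    anchors: (x, y) vehicle positions where each range was measured (assumed known).
    ranges:  measured distances from those positions to the diver.
    guess:   initial (x, y) estimate of the diver position.
    """
    x, y = guess
    for _ in range(iterations):
        # Accumulate the 2x2 normal equations (J^T J) d = J^T r.
        a11 = a12 = a22 = b1 = b2 = 0.0
        for (ax, ay), measured in zip(anchors, ranges):
            dx, dy = x - ax, y - ay
            predicted = math.hypot(dx, dy)
            if predicted == 0.0:
                continue  # gradient undefined exactly at an anchor
            jx, jy = dx / predicted, dy / predicted
            residual = measured - predicted
            a11 += jx * jx
            a12 += jx * jy
            a22 += jy * jy
            b1 += jx * residual
            b2 += jy * residual
        det = a11 * a22 - a12 * a12
        if abs(det) < 1e-12:
            break  # geometry too degenerate to solve
        x += (a22 * b1 - a12 * b2) / det
        y += (a11 * b2 - a12 * b1) / det
    return x, y

# Hypothetical scenario: the vehicle pings from four known positions (meters).
vehicle_positions = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
true_diver = (3.0, 4.0)
ranges = [math.hypot(true_diver[0] - ax, true_diver[1] - ay)
          for ax, ay in vehicle_positions]

estimate = estimate_position(vehicle_positions, ranges, guess=(5.0, 5.0))
print(estimate)
```

Once the diver and the vehicle are both drifting and neither position is known exactly, the number of unknowns grows with every time step, which is one way to read Miller's remark that the problem "blows up."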

On the perception side, Miller’s team has been developing an AI classifier that can process both optical and sonar data mid-mission and solicit human input for any objects classified with uncertainty.

“The idea is for the classifier to pass along some information, say, a bounding box around an image, to the diver and indicate, ‘I think this is a tire, but I’m not sure. What do you think?’ Then, the diver can respond, ‘Yes, you’ve got it right,’ or ‘No, look over here in the image to improve your classification,’” Miller says.
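The exchange Miller describes could be sketched roughly as follows. This is only an illustration: the `Detection` fields, the 0.8 confidence threshold, and the function names are assumptions made for the example, not details of the team's classifier.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    label: str         # classifier's best guess, e.g. "tire"
    confidence: float  # classifier score in [0, 1]
    box: tuple         # bounding box as (x, y, width, height) in pixels

def needs_diver_review(detection, threshold=0.8):
    """Flag detections whose confidence falls below an assumed threshold."""
    return detection.confidence < threshold

def build_query(detection):
    """Compose the short question sent to the diver along with the box."""
    return (f"I think this is a {detection.label}, "
            "but I'm not sure. What do you think?")

# Hypothetical detection from a sonar or optical frame.
uncertain = Detection(label="tire", confidence=0.55, box=(120, 80, 64, 64))

if needs_diver_review(uncertain):
    print(build_query(uncertain), uncertain.box)
```

Sending only a label and a bounding box keeps the message small, which matters given the communication limits discussed next.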

This feedback loop requires an underwater acoustic modem to support diver-AUV communication. At state-of-the-art underwater acoustic data rates, sending an uncompressed image from the AUV to the diver would take tens of minutes. So, one aspect the team is investigating is how to compress information down to the minimum amount that is still useful, working within the constraints of the low bandwidth and high latency of underwater communications and the low size, weight, and power of the commercial off-the-shelf (COTS) hardware they’re using. For their prototype system, the team procured mostly COTS sensors and built a sensor payload that would easily integrate into an AUV routinely employed by the U.S. Navy, with the goal of facilitating technology transition. Beyond sonar and optical sensors, the payload features an acoustic modem for ranging to the diver and several data processing and compute boards.
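A back-of-the-envelope calculation shows why raw images are impractical over an acoustic link. The image size, the 2 kbit/s link rate, and the 16-byte message size below are illustrative assumptions, not figures from the project.

```python
def transfer_time_seconds(width_px, height_px, bytes_per_pixel, link_bits_per_second):
    """Time to send a raw image, ignoring protocol overhead and retransmissions."""
    total_bits = width_px * height_px * bytes_per_pixel * 8
    return total_bits / link_bits_per_second

# Assumed values: a 640x480 8-bit grayscale image over a 2 kbit/s acoustic link.
raw_seconds = transfer_time_seconds(640, 480, 1, 2_000)
print(f"raw image: {raw_seconds / 60:.1f} minutes")

# Assumed compact alternative: a class label plus a bounding box in 16 bytes.
message_seconds = 16 * 8 / 2_000
print(f"label + bounding box: {message_seconds:.3f} seconds")
```

Under these assumptions the raw image takes about 20 minutes, while the compact message takes well under a second.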

Miller’s team has tested the sensor-equipped AUV and algorithms around coastal New England — including in the open ocean near Portsmouth, New Hampshire, with the University of New Hampshire’s (UNH) Gulf Surveyor and Gulf Challenger coastal research vessels as diver surrogates, and on the Boston-area Charles River, with an MIT Sailing Pavilion skiff as the surrogate.

“The UNH boats are well-equipped and can access realistic ocean conditions. But pretending to be a diver with a large boat is hard. With the skiff, we can move more slowly and get the relative motion in tune with how a diver and AUV would navigate together,” Miller says.

Last summer, the team started testing equipment with human divers at Michigan Technological University’s Great Lakes Research Center. Although the divers lacked an interface to feed back information to the AUV, each swam holding the team’s tube-shaped prototype tablet, dubbed a “tube-let.” The tube-let was equipped with a pressure and depth sensor, inertial measurement unit (to track relative motion), and ranging modem — all necessary components for the navigation algorithms to solve the optimization problem.

“A challenge during testing was coordinating the motion of the diver and vehicle, because they don’t yet collaborate,” Miller says. “Once the divers go underwater, there is no communication with the team on the surface. So, you have to plan where to put the diver and vehicle so they don’t collide.”

The team also worked on the perception problem. The water clarity of the Great Lakes at that time of year allowed for underwater imaging with an optical sensor. Caroline Keenan, a Lincoln Scholars Program PhD student jointly working in the laboratory’s Advanced Undersea Systems and Technology Group and Leonard’s research group at MIT, took the opportunity to advance her work on knowledge transfer from optical sensors to sonar sensors. She is exploring whether optical classifiers can train sonar classifiers to recognize objects for which sonar data doesn’t exist. The motivation is to reduce the human operator load associated with labeling sonar data and training sonar classifiers.

With the internally funded research program coming to an end, Miller’s team is now seeking external sponsorship to refine and transition the technology to military or commercial partners.

“The modern world runs on undersea telecommunication and power cables, which are vulnerable to attack by disruptive actors. The undersea domain is becoming increasingly contested as more nations develop and advance the capabilities of autonomous maritime systems. Maintaining global economic security and U.S. strategic advantage in the undersea domain will require leveraging and combining the best of AI and human capabilities,” Miller says.

There’s New Evidence for How Loneliness Affects Memory in Old Age

Neuroscientists know that there is a link between loneliness and cognitive decline in older adults, although the exact magnitude of the link is still difficult to determine. A new longitudinal study provides evidence that a proportion of people who feel lonely end up having more memory impairment, though this doesn’t necessarily mean that their brains age faster.

The report, published in Aging & Mental Health, shows that older adults with higher levels of loneliness scored lower on tests of immediate and delayed recall. Even so, the rate at which their memory declined over six years was virtually identical to that of people who were not lonely.

“It suggests that loneliness may play a more prominent role in the initial state of memory than in its progressive decline,” said Luis Carlos Venegas-Sanabria of the School of Medicine and Health Sciences at Universidad del Rosario, who led the research. “The study underscores the importance of addressing loneliness as a significant factor in the context of cognitive performance in older adults.”

Six-Year Study of Thousands of Older Adults

The team analyzed data from the Survey of Health, Ageing and Retirement in Europe (SHARE), one of the most robust longitudinal databases for studying aging. For six years, the researchers followed 10,217 adults, aged 65 to 94, from 12 European countries. They assessed their level of loneliness and their performance on memory tests.

The results show that age was the most important determinant of memory level and speed of decline. From the age of 75 onwards, scores began to fall more rapidly. After 85 the decline became more pronounced. Depression and chronic diseases such as diabetes also reduced the initial score. Loneliness, while influencing the starting point, did not accelerate the slope of cognitive decline.
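The distinction between a lower starting point and a faster decline can be illustrated with a simple line fit. The scores below are invented purely for illustration and are not data from the SHARE study; the helper `fit_line` is an ordinary least-squares fit written out by hand, not the study's statistical model.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (intercept, slope)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

years = [0, 2, 4, 6]
# Made-up recall scores: same rate of decline, different starting level.
not_lonely = [10.0, 9.4, 8.8, 8.2]
lonely = [9.0, 8.4, 7.8, 7.2]

base_a, slope_a = fit_line(years, not_lonely)
base_b, slope_b = fit_line(years, lonely)
print(f"not lonely: baseline {base_a:.1f}, change per year {slope_a:.2f}")
print(f"lonely:     baseline {base_b:.1f}, change per year {slope_b:.2f}")
```

In this toy example the two lines are parallel: the lonely group starts one point lower but loses ground at the same rate, which is the pattern the study reports.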

The study also found that physical activity was associated with better initial memory scores. People who engaged in moderate or vigorous physical activity at least once a month recalled more words on immediate and delayed recall tests. This effect did not change the speed of decline, but it did raise the baseline level, which functions as a kind of “cognitive buffer.”

Although the study does not explore the causes of the link between loneliness and cognition, previous research has proposed plausible mechanisms. Loneliness is often associated with less social interaction, a factor that influences cognitive performance. It is also associated with an increased risk of depression, which directly affects performance on memory tests. In addition, lonely people tend to have more health problems, such as hypertension or diabetes, which also affect cognitive function.

By 2050, according to United Nations projections, one in six people in the world will be over the age of 65. Societies are entering a stage where old age will no longer be the exception but will become the norm. Dementia, as well as other neurodegenerative diseases that appear with age, will be a major challenge for health care institutions.

Managing traffic in space

Chances are, you’ve already used a satellite today. Satellites make it possible for us to stream our favorite shows, call and text a friend, check weather and navigation apps, and make an online purchase. Satellites also monitor the Earth’s climate, the extent of agricultural crops, wildlife habitats, and impacts from natural disasters.

As we’ve found more uses for them, satellites have exploded in number. Today, there are more than 10,000 satellites operating in low-Earth orbit. Another 5,000 decommissioned satellites drift through this region, along with over 100 million pieces of debris comprising everything from spent rocket stages to flecks of spacecraft paint.

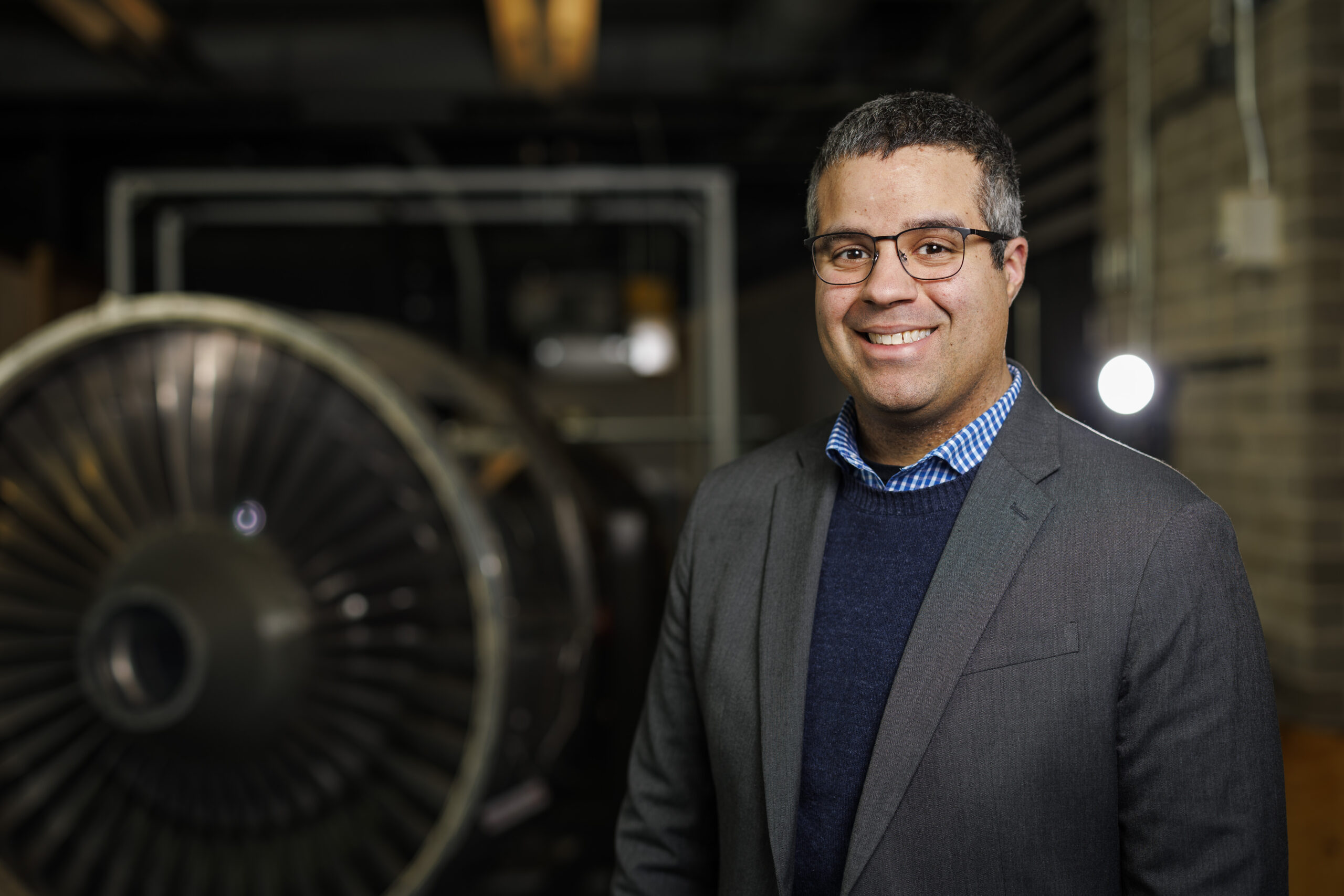

For MIT’s Richard Linares, the rapid ballooning of satellites raises pressing questions: How can we safely manage traffic and growing congestion in space? And at what point will we reach orbital capacity, where adding more satellites is not sustainable, and may in fact compromise spacecraft and the services that we rely on?

“It is a judgement that society has to make, of what value do we derive from launching more satellites,” says Linares, who recently received tenure as an associate professor in MIT’s Department of Aeronautics and Astronautics (AeroAstro). “One of the things we try to do is approach these questions of traffic management and orbital capacity as engineering problems.”

Linares leads the MIT Astrodynamics, Space Robotics, and Controls Lab (ARCLab), a research group that applies astrodynamics (the motion and trajectory of orbiting objects) to help track and manage the millions of objects in orbit around the Earth. The group also develops tools to predict how space traffic and debris will change as operators launch large satellite “mega-constellations” into space.

He is also exploring the effects of space weather on satellites, as well as how climate change on Earth may limit the number of satellites that can safely orbit in space. And, anticipating that satellites will have to be smarter and faster to navigate a more cluttered environment, Linares is looking into artificial intelligence to help satellites autonomously learn and reason to adapt to changing conditions and fix issues onboard.

“Our research is pretty diverse,” Linares says. “But overall, we want to enable all these economic opportunities that satellites give us. And we are figuring out engineering solutions to make that possible.”

Grounding practical problems

Linares was born and raised in Yonkers, New York. His parents both worked as school bus drivers to support their children, Linares being the youngest of six. He was an active kid and loved sports, playing football throughout high school.

“Sports was a way to stay focused and organized, and to develop a work ethic,” Linares says. “It taught me to work hard.”

When applying for colleges, rather than aim for Division I schools like some of his teammates, Linares looked for programs that were strong in science, specifically in aerospace. Growing up, he was fascinated with Carl Sagan’s “Cosmos” docuseries. And being close to Manhattan, he took regular trips to the Hayden Planetarium to take in the center’s immersive projections of space and the technologies used to explore it.

“My interest in science came from the universe and trying to understand our place within it,” Linares recalls.

Choosing to stay close to home, he applied to in-state schools with strong aeronautical engineering departments, and happily landed at the State University of New York at Buffalo (SUNY Buffalo), where he would ultimately earn his bachelor’s, master’s, and doctoral degrees, all in aerospace engineering.

As an undergraduate, Linares took on a research project in astrodynamics, looking to solve the problem of how to determine the relative orientation of satellites flying in formation.

“Formation flying was a big topic in the early 2000s,” Linares says. “I liked the flavor of the math involved, which allowed me to go a layer deeper toward a solution.”

He worked out the math to show that when three satellites fly together, they essentially form a triangle, the angles of which can be calculated to determine where each satellite is in relation to the other two at any moment in time. His work introduced a new controls approach to enable satellites to fly safely together. The research had direct applications for the U.S. Air Force, which helped to sponsor the work.
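The underlying geometry is easy to sketch: given three positions, the interior angles of the triangle follow from dot products. This is only the basic triangle calculation, not a reconstruction of Linares’ controls approach, and the coordinates are arbitrary example values.

```python
import math

def interior_angles(p1, p2, p3):
    """Return the triangle's interior angles in degrees at p1, p2, and p3."""
    def angle_at(vertex, a, b):
        u = [a[i] - vertex[i] for i in range(3)]
        v = [b[i] - vertex[i] for i in range(3)]
        dot = sum(ui * vi for ui, vi in zip(u, v))
        norms = math.sqrt(sum(ui * ui for ui in u)) * math.sqrt(sum(vi * vi for vi in v))
        # Clamp to guard against rounding slightly outside [-1, 1].
        cosine = max(-1.0, min(1.0, dot / norms))
        return math.degrees(math.acos(cosine))
    return (angle_at(p1, p2, p3), angle_at(p2, p1, p3), angle_at(p3, p1, p2))

# Arbitrary example positions (kilometers) forming a 3-4-5 right triangle.
angles = interior_angles((0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (0.0, 4.0, 0.0))
print(angles)
```

The three angles always sum to 180 degrees, so measuring any two of them fixes the third.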

As he expanded the research into a master’s thesis, Linares also took opportunities to work directly with the Air Force on issues of satellite tracking and orientation. He served two internships with the U.S. Air Force Research Lab, one at Kirtland Air Force Base in Albuquerque, New Mexico, and the other in Maui, Hawaii.

“Being able to collaborate with the Air Force back then kind of grounded the research in practical problems,” Linares says.

For his PhD, he turned to another practical problem: “uncorrelated tracks.” At the time, the Air Force operated a network of telescopes to observe more than 20,000 objects in space, which they were working to label and record in a catalog to help them track the objects over time. But while detecting objects was relatively straightforward, the challenge came in correlating a detected object with what was already in the catalog. In other words, was what they were seeing something they had already seen?

Linares developed image analysis techniques to identify key characteristics of objects such as their shape and orientation, which helped the Air Force “fingerprint” satellites and pieces of space debris, and track their activity — and potential for collisions — over time.

After completing his PhD, Linares worked as a postdoc at Los Alamos National Laboratory and the U.S. Naval Observatory. During that time he expanded his aerospace work to other areas including space weather, using satellite measurements to model how Earth’s ionosphere — the upper layer of the atmosphere that is ionized by the sun’s radiation — affects satellite drag.

He then accepted a position as assistant professor of aerospace engineering at the University of Minnesota at Minneapolis. For the next three years, he continued his research in modeling space weather, tracking space objects, and coordinating satellites to fly in swarms.

Making space

In 2018, Linares made the move to MIT.

“I had a lot of respect for the people and for the history of the work that was done here,” says Linares, who was especially inspired by the legendary Charles Stark “Doc” Draper, who developed the first inertial guidance systems in the 1940s that would enable the self-navigation of airplanes, submarines, satellites, and spacecraft for decades to come. “This was essentially my field, and I knew MIT was the best place to continue my career.”

As a junior faculty member in AeroAstro, Linares spent his first years focused on an emerging challenge: space sustainability. Around that time, the first satellite constellations were launching into low-Earth orbit with SpaceX’s Starlink, which aimed to provide global internet coverage via a huge network of several thousand coordinating satellites. The launching of so many satellites, into orbits that already held other active and nonactive satellites, along with millions of pieces of space debris, raised questions about how to safely manage the satellite traffic and how much traffic an orbit can sustain.

“At what level do we reach a tipping point, where we have too many satellites in certain orbital regimes?” Linares says. “It was kind of a known problem at the time, but there weren’t many solutions.”

Linares’ group applied an understanding of astrodynamics, and the physics of how objects move in space, to figure out the best way to pack satellites in orbital “shells,” or lanes that would most likely prevent collisions. They also developed a state-of-the-art model of orbital traffic that was able to simulate the trajectories of more than 10 million individual objects in space. Previous models were much more limited in the number of objects they could accurately simulate. Linares’ open-source model, called the MIT Orbital Capacity Assessment Tool, or MoCAT, could account for the millions of pieces of space debris, in addition to the many intact satellites in orbit.

The tools that his group has developed are used today by satellite operators to plan and predict safe spacecraft trajectories. His team is continuing to work on problems of space traffic management and orbital capacity. They are also branching out into space robotics. The team is testing ways to teleoperate a humanoid robot, which could potentially help to build future infrastructure and carry out long-duration tasks in space.

Linares is also exploring artificial intelligence, including ways that a satellite can autonomously “learn” from its experience and safely adapt to uncertain environments.

“Imagine if each satellite had a virtual Doc Draper onboard that could do the de-bugging that we did from the ground during the Apollo missions,” Linares says. “That way, satellites would become instantaneously more robust. And it’s not taking the human out of the equation. It’s allowing the human to be amplified. I think that’s within reach.”

Meta Glasses Are Comfortable, Functional, and Make My Spouse Recoil in Fear

Every time I’ve written about Meta’s AI-enabled glasses, I invariably get asked these questions: Why do you even want these? Why do you want smart glasses that can play music or misidentify native flora in a weirdly cheery voice? I am a lifelong Ray-Ban Wayfarer wearer, and I’m also WIRED’s resident Meta wearer. I grab a pair of Meta glasses whenever I leave the house because I like being able to use one device instead of two or three on a walk. With Meta glasses, I can wear sunglasses and workout headphones in one!

Meta sold more than 7 million pairs in 2025. Take a look at any major outdoor or sporting event, and you’ll see more than a few people wearing these to record snippets for Instagram or TikTok. Meta’s partnership with EssilorLuxottica has made smart glasses accessible, stylish, and useful and is undoubtedly the reason why Google, and now Apple, are trying to horn in on the market. After the notable flop that is the Apple Vision Pro, Apple is recalibrating its face-wearable strategy, moving away from augmented reality (AR) toward simpler, display-less, and hopefully good-looking glasses.

That’s not to say that you shouldn’t be careful how you use these glasses. Meta doesn’t have the greatest track record on privacy, and the company has continued to push forward with policies that are questionable at best. Even if you’re not concerned that face recognition will allow Meta to target immigrants or enable stalkers to find their victims, at the very least, people really do not like the idea that you could start recording them at any moment.

Probably the biggest hurdle to wearing Meta glasses is that simply doing so seems like a gross violation of the social contract. After all, these are Mark Zuckerberg’s “pervert glasses.” When I pop these on my head, I’ve had friends (and my spouse) recoil and say, “I have apps to warn me away from people like you.” The best part, though, is that Oakley and Ray-Ban already make really great sunglasses. Even if the battery runs out or you don’t use Meta AI at all, these are stellar at shading your eyes from the sun.

Anyway, if you decide to try them, here’s what you should get. If you do chicken out, check out our buying guides to the Best Smart Glasses or the Best Workout Headphones for more.

Best Overall

Last year, Meta upgraded the original Meta Ray-Ban Wayfarers that became a smash hit. These are Meta’s entry-level glasses, and they come in a variety of lens styles. You can order them with clear lenses, prescription lenses, transition lenses, or the OG sunglass lenses, as well as in a variety of fits, including standard, large, or high-bridge frames. Improvements to this generation include an upgrade to a 12-MP camera and up to eight hours of battery life; writer Boone Ashworth’s testing clocked in at five to six hours.
