Tech

BEAST-GB model combines machine learning and behavioral science to predict people’s decisions

A key objective of behavioral science research is to better understand how people make decisions when outcomes are unknown or uncertain and the choice therefore carries some degree of risk.

The ability to predict people’s choices in these situations could be highly advantageous, as it could help to draft effective initiatives aimed at prompting people to make better decisions for themselves and others in their community.

Researchers at Technion (Israel Institute of Technology) and various institutes in the United States recently developed a new computational model called BEAST-GB, which was found to predict people’s decisions under risk and uncertainty with high accuracy.

Their proposed model, outlined in a paper published in Nature Human Behaviour, combines advanced machine learning algorithms with behavioral science theory.

“Human-decision research is rich in competing theories, yet none reliably and accurately predicts human choices across contexts,” Ori Plonsky, first author of the paper, told Tech Xplore.

“To see which ideas really work, we organized CPC18, a ‘choice prediction competition’ in which anyone could submit a computational model to predict people’s decisions under risk and uncertainty. We were especially interested in knowing if data-driven machine learning, theory-driven behavioral models, or, as was our guess, a hybrid that embeds behavioral theory inside ML, would excel.”

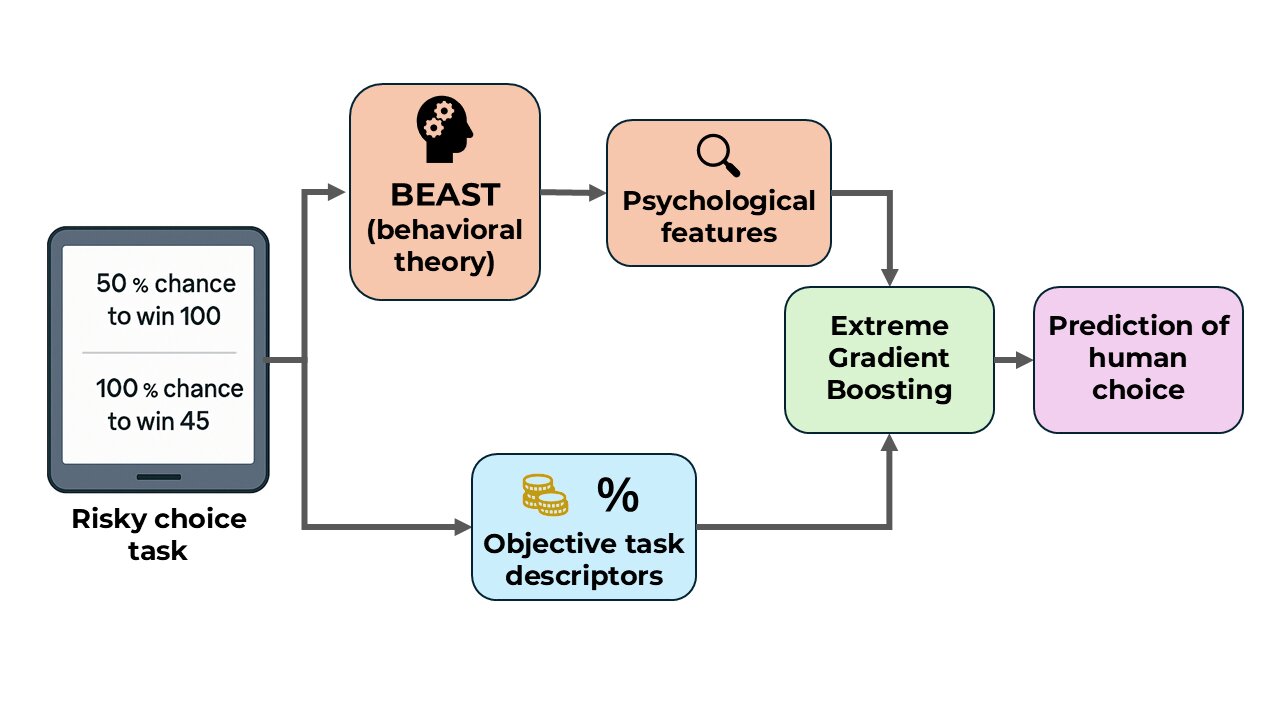

The new machine learning model developed by Plonsky and his colleagues draws from a behavioral science framework known as BEAST (Best Estimate and Sampling Tools). This is a model based on psychological theories that were previously found to predict people’s decisions with good accuracy.

“BEAST assumes that, in choice under risk and uncertainty, people mix several strategies, such as minimizing the chances of immediate regret or hedging against worst outcomes,” explained Plonsky.

“We translated each strategy into a ‘behavioral feature,’ a concise formula that captures how sensitive a decision-maker should be to that consideration in any given choice task. We then fed these theory-based features, plus purely objective task descriptors, into Extreme Gradient Boosting (a machine learning algorithm known to be highly useful in prediction tournaments)—hence the name BEAST-GB.”
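As a rough illustration of the pipeline Plonsky describes, the sketch below turns a two-option choice task into a feature vector that mixes objective task descriptors with theory-inspired "behavioral features." The feature names and formulas here (`regret_sign`, `worst_case_diff`, and the rest) are illustrative assumptions, not the formulas used in the paper, and the final step of passing such vectors to Extreme Gradient Boosting is left out.

```python
from itertools import product

# A gamble is a list of (payoff, probability) pairs whose probabilities sum to 1.
Gamble = list[tuple[float, float]]


def expected_value(gamble: Gamble) -> float:
    """Objective descriptor: the probability-weighted mean payoff."""
    return sum(payoff * prob for payoff, prob in gamble)


def regret_feature(a: Gamble, b: Gamble) -> float:
    """Hypothetical 'minimize immediate regret' feature.

    P(B pays more than A) minus P(A pays more than B),
    assuming the two gambles are drawn independently.
    """
    score = 0.0
    for (pay_a, prob_a), (pay_b, prob_b) in product(a, b):
        if pay_b > pay_a:
            score += prob_a * prob_b
        elif pay_a > pay_b:
            score -= prob_a * prob_b
    return score


def worst_case_feature(a: Gamble, b: Gamble) -> float:
    """Hypothetical 'hedge against worst outcomes' feature:
    difference between the worst payoff of B and the worst payoff of A."""
    return min(pay for pay, _ in b) - min(pay for pay, _ in a)


def build_features(a: Gamble, b: Gamble) -> dict[str, float]:
    """Combine objective descriptors and behavioral features for one task."""
    return {
        # Objective task descriptors
        "ev_diff": expected_value(b) - expected_value(a),
        "n_outcomes_b": float(len(b)),
        # Theory-inspired behavioral features (illustrative only)
        "regret_sign": regret_feature(a, b),
        "worst_case_diff": worst_case_feature(a, b),
    }


# Example task: a sure 3 versus an 80% chance of 4 (otherwise 0).
safe = [(3.0, 1.0)]
risky = [(4.0, 0.8), (0.0, 0.2)]
features = build_features(safe, risky)
print(features)
```

In a setup like the one described, one such vector per choice task would form a row of the training matrix, with the observed choice rate as the target for the gradient-boosting learner.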

With the enhancements implemented by the researchers, the BEAST-GB model could analyze behavioral data and derive the motives driving decisions, as well as the impact of these motives in different decision-making scenarios.

Notably, BEAST-GB won CPC18 in 2018, capturing 93% of the predictable variation in the data it was fed, and it captured 96% in follow-up tests using a dataset that was 40 times larger.

“BEAST-GB outperformed dozens of mainstream behavioral models and purely data-driven machine learning,” said Plonsky.

“With just 2% of the training data, it has already beat a deep neural network trained on all the training data. The model even accurately predicts choices people make in new experiments it has never seen, implying it captures general human choice patterns. Finally, we used it to improve and enhance the underlying interpretable behavioral theory, so it enhances our ability to explain, not only predict, human decision making.”

This recent work highlights the promise of machine learning models that also draw from behavioral science for predicting people’s decisions and responses in real-world scenarios. In the future, BEAST-GB and other similar models could guide the design of new large-scale interventions aimed at improving people’s decisions via nudges, incentives or other behavioral science-based strategies.

Plonsky and his colleagues eventually plan to collaborate with policymakers and other parties involved in the design or implementation of behavioral science initiatives. This would allow them to test their model “in the wild,” validating its potential in real-world settings, while also yielding insight that could inform its further advancement.

“Other recent publications have suggested that human decision-making and other behaviors can be very effectively predicted using advanced data-driven machine learning methods like large language models tuned on large behavioral data,” added Plonsky.

“We now plan to continue investigating when and how BEAST-like theory can enhance such data-driven methods in predicting behavior. Specifically, we plan to extend our domain of research by including natural-language decision problems, more aligned with the real world.”

Written for you by our author Ingrid Fadelli, edited by Sadie Harley, and fact-checked and reviewed by Robert Egan.


More information:

Ori Plonsky et al, Predicting human decisions with behavioural theories and machine learning, Nature Human Behaviour (2025). DOI: 10.1038/s41562-025-02267-6.

© 2025 Science X Network

Citation: BEAST-GB model combines machine learning and behavioral science to predict people’s decisions (2025, August 14), retrieved 14 August 2025 from https://techxplore.com/news/2025-08-beast-gb-combines-machine-behavioral.html



OpenAI Had Banned Military Use. The Pentagon Tested Its Models Through Microsoft Anyway

OpenAI CEO Sam Altman is still in the hot seat this week after his company signed a deal with the US military. OpenAI employees have criticized the move, which came after Anthropic’s roughly $200 million contract with the Pentagon imploded, and asked Altman to release more information about the agreement. Altman admitted it looked “sloppy” in a social media post.

While this incident has become a major news story, it may just be the latest and most public example of OpenAI creating vague policies around how the US military can access its AI.

In 2023, OpenAI’s usage policy explicitly banned the military from accessing its AI models. But some OpenAI employees discovered the Pentagon had already started experimenting with Azure OpenAI, a version of OpenAI’s models offered by Microsoft, two sources familiar with the matter said. At the time, Microsoft had been contracting with the Department of Defense for decades. It was also OpenAI’s largest investor, and had broad license to commercialize the startup’s technology.

That same year, OpenAI employees saw Pentagon officials walking through the company’s San Francisco offices, the sources said. They spoke on the condition of anonymity as they aren’t authorized to comment on private company matters.

Some OpenAI employees were wary about associating with the Pentagon, while others were simply confused about what OpenAI’s usage policies meant. Did the policy apply to Microsoft? While sources tell WIRED it was not clear to most employees at the time, spokespeople from OpenAI and Microsoft say Azure OpenAI products are not, and were not, subject to OpenAI’s policies.

“Microsoft has a product called the Azure OpenAI Service that became available to the US Government in 2023 and is subject to Microsoft terms of service,” said spokesperson Frank Shaw in a statement to WIRED. Microsoft declined to comment specifically on when it made Azure OpenAI available to the Pentagon, but notes the service was not approved for “top secret” government workloads until 2025.

“AI is already playing a significant role in national security and we believe it’s important to have a seat at the table to help ensure it’s deployed safely and responsibly,” OpenAI spokesperson Liz Bourgeois said in a statement. “We’ve been transparent with our employees as we’ve approached this work, providing regular updates and dedicated channels where teams can ask questions and engage directly with our national security team.”

The Department of Defense did not respond to WIRED’s request for comment.

By January 2024, OpenAI updated its policies to remove the blanket ban on military use. Several OpenAI employees found out about the policy update through an article in The Intercept, sources say. Company leaders later addressed the change at an all-hands meeting, explaining how the company would tread carefully in this area moving forward.

In December 2024, OpenAI announced a partnership with Anduril to develop and deploy AI systems for “national security missions.” Ahead of the announcement, OpenAI told employees that the partnership was narrow in scope and would only deal with unclassified workloads, the same sources said. This stood in contrast to a deal Anthropic had signed with Palantir, which would see Anthropic’s AI used for classified military work.

Palantir approached OpenAI in the fall of 2024 to discuss participating in their “FedStart” program, an OpenAI spokesperson confirmed to WIRED. The company ultimately turned it down, and told employees it would’ve been too high-risk, two sources familiar with the matter tell WIRED. However, OpenAI now works with Palantir in other ways.

Around the time the Anduril deal was announced, a few dozen OpenAI employees joined a public Slack channel to discuss their concerns about the company’s military partnerships, sources say and a spokesperson confirmed. Some believed the company’s models were too unreliable to handle a user’s credit card information, let alone assist Americans on the battlefield.


Don’t Risk Birdwatching FOMO—Put Out Your Hummingbird Feeders Now

Though most people associate the beginning of March with the hopefulness of spring and the indignities of daylight saving time, there’s another important event taking place in yards all over the country: hummingbird season.

While many species of hummingbirds can be seen in regions year-round, others are migratory, and this time typically marks their return from wintering grounds in Central and South America. These tiny birds can lose up to 40 percent of their body weight by the time they arrive here after having flown thousands of miles, and since many flowers haven’t bloomed yet, nectar feeders can be a source of essential fuel.

Though I test smart bird feeders year-round, I don’t use hummingbird feeders as often as I should, as it’s imperative that they be cleaned and refilled with new nectar every two or three days (a ratio of 1:4 granulated sugar to water is best, and avoid any dyes or additives) to prevent deadly bacteria and mold, and I don’t always have the time.

But if you are going to invest the energy in maintaining a hummingbird feeder, right now is the best time, as you have a chance to see migratory species you might not otherwise encounter, such as black-chinned hummingbirds. A smart feeder helps you ID them, whether they’re stopping at your feeder on their way north or arriving at their final destination.

Birdbuddy’s Pro is the smart hummingbird feeder I recommend and use myself when I’m not actively testing. The app is easy to navigate and sends cleaning reminders, the built-in solar roof keeps the battery charged, and, unlike other feeders, only the shallow bottom screws off for refilling. No having to pour sticky nectar through a narrow opening, or turn a giant cylinder upside down and risk spilling.

Note that it’s not perfect; the sensor is inconsistent and doesn’t capture every hummingbird that visits, but for the camera quality (5 MP photos, 2K video with slow-motion, 122-degree field of view) and ease of use, it’s a foible I’m willing to put up with. If you already have another Birdbuddy feeder, the hummingbird feeder images and videos will integrate seamlessly into your app feed.

Right now, the feeder is 37 percent off on Birdbuddy’s website—a deal I usually don’t see outside of shopping events like Black Friday or Amazon Prime Day. Note that the feeder only runs on 2.4 GHz Wi-Fi, and while it is fully functional without a subscription, a Birdbuddy Premium subscription will let you add friends and family members to your account so they can see the birds as well. That’s $99 a year through the app.



The Controversies Finally Caught Up to Kristi Noem

After a tenure marked by controversy and a contentious week of Congressional hearings, secretary Kristi Noem is out as head of the Department of Homeland Security.

President Donald Trump announced in a Truth Social post on Thursday that Noem would be replaced by senator Markwayne Mullin of Oklahoma, a staunch Trump ally and immigration hardliner. “The current Secretary, Kristi Noem, who has served us well, and has had numerous and spectacular results (especially on the Border!), will be moving to be Special Envoy for The Shield of the Americas, our new Security Initiative in the Western Hemisphere we are announcing on Saturday in Doral, Florida,” Trump wrote. “I thank Kristi for her service at ‘Homeland.’”

DHS did not immediately respond to a request for comment.

The agencies under DHS include Immigration and Customs Enforcement, US Customs and Border Protection, the Cybersecurity and Infrastructure Security Agency, the Federal Emergency Management Agency, US Citizenship and Immigration Services, the US Coast Guard, and others. It’s a sprawling network whose vast responsibilities and rapidly expanding budget have put it at the center of the Trump administration’s radical overhaul of immigration and border policy.

Speculation has swirled around Noem’s departure for months. Critics have assailed DHS’s aggressive immigration enforcement tactics, while Noem and figures like White House border czar Tom Homan have reportedly been at odds over how to execute the administration’s mass deportation agenda, with Noem and senior adviser Corey Lewandowski said to have emphasized sheer numbers of arrests and deportations above other considerations.

The relationship between Noem and Lewandowski has itself been a subject of controversy, with CNN reporting that a September meeting between the two and president Donald Trump grew “contentious.” Last month, the Wall Street Journal reported that Lewandowski attempted to fire a pilot during a flight for failing to bring Noem’s blanket from one plane to another during a transfer.

The ousted secretary faced mounting scrutiny over the deaths of US citizens during federal operations in Minneapolis, including the killings of Renee Good and Alex Pretti by federal agents under Noem’s employ. In both cases, Noem publicly labeled the deceased “domestic terrorists,” a framing echoed by Trump and other key administration officials. Video evidence, witness testimony, and an independent autopsy contradicted the agency’s claims, including early assertions that Pretti brandished a firearm.

Scrutiny of Noem’s tenure extends beyond the fatal shootings in Minneapolis to a broader pattern of aggressive enforcement tactics, warrantless raids, and mass detention camps. A secretive policy directive issued in May 2025, first reported by the Associated Press, authorized ICE agents to forcibly enter private residences without a judicial warrant. The memo, signed by acting ICE director Todd Lyons, instructed agents to rely solely on an administrative removal document to bypass Fourth Amendment requirements. The policy led to multiple documented instances of federal agents entering the wrong homes, including a January raid in Minnesota where agents removed a US citizen at gunpoint with no legitimate reason.

A record 53 people died in ICE or CBP custody last year, according to House Democrats on the Committee on Homeland Security. Concurrently, Noem has initiated a $38 billion procurement effort to buy and refurbish up to 24 warehouses across the country, aimed at converting them into mass detention camps for people awaiting deportation.

Noem’s tenure has led to controversy at other DHS agencies as well. Her insistence on approving any contracts or grants over $100,000 at the department has caused particular strain at FEMA, which has experienced a massive backlog of funding that has slowed normal processes at the agency. A report issued by Senate Democrats Wednesday found that Noem’s vetting process at FEMA has caused more than 1,000 contracts, grants, and awards to be held up. Multiple FEMA employees have told WIRED that this process has made the agency less ready to respond to disasters and threats.
