Tech

How to ensure high-quality synthetic wireless data when real-world data runs dry

To train artificial intelligence (AI) models, researchers need good data and lots of it. However, most real-world data has already been used, leading scientists to generate synthetic data. While the generated data helps solve the issue of quantity, it may not always have good quality, and assessing its quality has been overlooked.

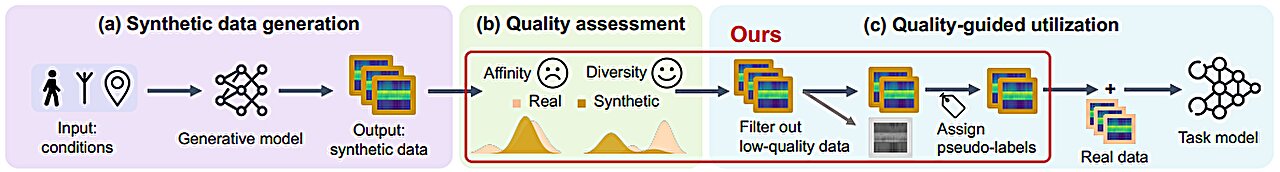

Wei Gao, associate professor of electrical and computer engineering at the University of Pittsburgh Swanson School of Engineering, has collaborated with researchers from Peking University to develop analytical metrics to quantitatively evaluate the quality of synthetic wireless data. The researchers have created a novel framework that significantly improves the task-driven training of AI models using synthetic wireless data.

Their work is detailed on the arXiv preprint server in a study titled “Data Can Speak for Itself: Quality-Guided Utilization of Wireless Synthetic Data,” which received the Best Paper Award in June at the MobiSys 2025 International Conference on Mobile Systems, Applications, and Services.

Assessing affinity and diversity

“Synthetic data is vital for training AI models, but for modalities such as images, video, or sound, and especially wireless signals, generating good data can be difficult,” said Gao, who also directs the Pitt Intelligent Systems Laboratory.

Gao has developed metrics to quantify affinity and diversity, essential qualities for synthetic data to be used for effectively training AI models.

“Generated data shouldn’t be random,” said Gao. “Take human faces. If you’re training an AI model to identify human faces, you need to ensure that the images of faces represent actual faces. They can’t have three eyes or two noses. They must have affinity.”

The images also need diversity. Training an AI model on a million images of an identical face won’t achieve much. While the faces must have affinity, they must also be different, as human faces are. As Gao noted, “AI models learn from variation.”
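To make the two ideas concrete, here is a toy sketch in Python. It is not the metric from the paper: it assumes each sample is a short tuple of numeric features, and the function names `affinity_gap` and `diversity_spread` are made up for illustration. Affinity is approximated by how far synthetic samples sit from the nearest real sample, and diversity by how far apart the synthetic samples are from one another.

```python
import math
from itertools import combinations


def affinity_gap(real, synthetic):
    """Mean distance from each synthetic sample to its nearest real sample.

    Lower values mean the synthetic data stays closer to real data.
    (Illustrative stand-in only, not the paper's affinity metric.)
    """
    return sum(min(math.dist(s, r) for r in real) for s in synthetic) / len(synthetic)


def diversity_spread(samples):
    """Mean pairwise distance between samples; needs at least two samples.

    Returns 0.0 when every sample is identical.
    (Illustrative stand-in only, not the paper's diversity metric.)
    """
    pairs = list(combinations(samples, 2))
    return sum(math.dist(a, b) for a, b in pairs) / len(pairs)


real = [(0.0, 0.0), (1.0, 0.0)]
identical = [(0.0, 0.0)] * 3          # realistic, but no variation at all
varied = [(0.0, 0.0), (3.0, 4.0)]     # two clearly different samples
far_off = [(10.0, 0.0)]               # nothing like the real data

print(diversity_spread(identical))    # identical copies carry no variation
print(diversity_spread(varied))
print(affinity_gap(real, far_off))
```

In this toy setup, a pile of identical samples scores perfectly on closeness to real data yet has zero spread, which is the "million images of an identical face" problem in miniature.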

Different tasks have different requirements for judging affinity and diversity. Recognizing a specific human face is different from distinguishing it from that of a dog or a cat, with each task having unique data requirements. Therefore, in systematically assessing the quality of synthetic data, the team applied a task-specific approach.

“We applied our method to downstream tasks and evaluated the existing work of synthesizing data,” said Gao. “We found that most synthetic data achieved good diversity, but some had problems satisfying affinity, especially wireless signals.”

The challenge of synthetic wireless data

Today, wireless signals are used in technologies such as home and sleep monitoring, interactive gaming, and virtual reality. Cell phone and Wi-Fi signals, as radio waves, hit objects and bounce back toward their source. These signals can be interpreted to indicate everything from sleep patterns to the shape of a person sitting on a couch.

To advance this technology, researchers need more wireless data to train models to recognize human behaviors in the signal patterns. However, as a waveform, the signals are difficult for humans to evaluate.

It’s not like human faces, which can be clearly defined. “Our research found that current synthetic wireless data is limited in its affinity,” said Gao. “This leads to mislabeled data and degraded task performance.”

To improve affinity in wireless signals, the researchers took a semi-supervised learning approach. “We used a small amount of labeled synthetic data, which was verified as legitimate,” Gao said. “We used this data to teach the model what is and isn’t legitimate.”

Gao and his collaborators developed SynCheck, a framework that filters out synthetic wireless samples with low affinity and labels the remaining samples during iterative training of a model.
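The general idea of filtering and labeling can be sketched in a few lines of Python. This is a simplified illustration, not SynCheck itself: it assumes samples are numeric feature tuples, uses a nearest-centroid rule in place of a trained model, and the names `filter_and_label`, `iterative_filter`, and the `max_dist` threshold are all hypothetical.

```python
import math


def centroid(points):
    """Component-wise mean of a list of equal-length feature tuples."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(len(points[0])))


def filter_and_label(pools, candidates, max_dist):
    """Label each candidate with its nearest class centroid, or drop it.

    pools: dict mapping label -> list of trusted feature tuples.
    Candidates farther than max_dist from every centroid are treated as
    low-affinity and returned in the second list.
    """
    centroids = {label: centroid(pts) for label, pts in pools.items()}
    kept, dropped = [], []
    for x in candidates:
        label, dist = min(
            ((lab, math.dist(x, c)) for lab, c in centroids.items()),
            key=lambda pair: pair[1],
        )
        if dist <= max_dist:
            kept.append((x, label))
        else:
            dropped.append(x)
    return kept, dropped


def iterative_filter(verified, synthetic, max_dist, rounds=3):
    """Repeat filtering, folding accepted samples back into the trusted pools."""
    pools = {label: list(pts) for label, pts in verified.items()}
    remaining = list(synthetic)
    accepted = []
    for _ in range(rounds):
        kept, remaining = filter_and_label(pools, remaining, max_dist)
        if not kept:
            break  # nothing new was accepted, so further rounds change nothing
        for x, label in kept:
            pools[label].append(x)
        accepted.extend(kept)
    return accepted, remaining


# Hypothetical two-feature samples for two activities.
verified = {"walk": [(0.0, 0.0), (0.2, 0.0)], "sit": [(5.0, 5.0), (5.0, 5.2)]}
synthetic = [(0.1, 0.1), (5.1, 5.0), (20.0, 20.0)]

accepted, rejected = iterative_filter(verified, synthetic, max_dist=1.0)
print(accepted)
print(rejected)
```

Here the two samples near the verified clusters are kept and labeled, while the outlier is rejected rather than being fed to the model with a wrong label.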

“We found that our system improves performance by 4.3% whereas a nonselective use of synthetic wireless data degrades performance by 13.4%,” Gao noted.

This research takes an important first step toward ensuring not just an endless stream of data, but a stream of quality data that scientists can use to train more sophisticated AI models.

More information:

Chen Gong et al, Data Can Speak for Itself: Quality-guided Utilization of Wireless Synthetic Data, arXiv (2025). DOI: 10.48550/arxiv.2506.23174

Citation:

How to ensure high-quality synthetic wireless data when real-world data runs dry (2025, September 15), retrieved 15 September 2025 from https://techxplore.com/news/2025-09-high-quality-synthetic-wireless-real.html


Tech

Could Contact-Tracing Apps Help With the Hantavirus? Not Really

After three people died on a cruise ship struck by a hantavirus, authorities are actively tracking down 29 people who had left the ship. They’re trying to trace the spread of the virus. It’s a long, arduous, global process to find and notify people who might be at risk of infection.

Hey, wasn’t there supposed to be an app for that?

Contact-tracing apps were a global effort starting in 2020 during the Covid-19 pandemic. Enabled by phone companies like Apple and Google, contact tracing was designed to use Bluetooth connections to detect when people had come in contact with someone who had tested positive for Covid, or later would, and to report as much. It did little to stop the spread of the pandemic, though it did at least make tracking the virus more effective. The same process wouldn’t go well for the hantavirus problem.

“There is no use of apps for this hantavirus outbreak,” Emily Gurley, an epidemiologist at Johns Hopkins University, wrote in an email response to WIRED. “The number of cases are small, and it’s important to trace all contacts exactly to stop transmission.”

On a smaller scale of infection like this, officials have to start at the source (an infected individual), then go person-by-person, confirming where they went and who they might have come into contact with. Data collected by apps from a broad swath of devices would not be anywhere close to accurate enough to give a good idea of where the virus might have hitchhiked to next.

Contact tracing on a wider scale, like, say, a global pandemic, is less about tracking the individual infections and more about understanding what parts of the population might be affected, giving people the opportunity to self-quarantine after exposure. But that depends on how people choose to respond, and how the technology is utilized by public emergency systems. During the Covid pandemic, contact-tracing via apps tended to work better in more carefully managed European countries, but did not slow the spread in the US.

Giving devices access to that kind of proximity information has also raised all sorts of privacy concerns, given that the technology requires always-on access to work properly. Contact tracing also struggled to maintain accuracy, and in some cases produced false negatives or false positives that did nothing to improve the real picture of how the virus was spreading.

Especially in the case of something like the hantavirus, where every person on that cruise ship can theoretically be directly tracked and contacted, it’s better to do that process the hard way.

“During small but highly fatal outbreaks, more precision is required,” Gurley wrote.

Tech

‘Reservation Hijacking’ Scams Target Travelers. Here’s How to Stay Safe

There’s another type of digital scam to be aware of, as per the BBC. It’s called “reservation hijacking.”

The name gives you a clue as to how it works. Essentially, scammers use details about a booking you’ve placed (perhaps with a hotel or airline) to trick you into sending money somewhere you shouldn’t.

While this type of scam isn’t brand new, a recent data breach at Booking.com has raised the risk of people being caught out. With data about you and your reservation, a far more convincing setup can be put in place—why wouldn’t you believe that someone purporting to be an employee from a spa you’ve got a reservation with is telling the truth about who they are, especially if they know the dates of your trip, your phone number, and your email address?

According to Booking.com, no financial information was exposed in the April 2026 hack. However, names, email addresses, phone numbers, and booking details have been leaked. The travel portal says affected customers have been emailed about the heightened risk of scams, so that’s the first thing to check for when it comes to staying safe.

Minimizing the risk of getting scammed by a reservation hijack involves many of the same security precautions you may already be following, and just being aware that this is a way you might be targeted will make a difference.

How Reservation Hijacks Work

We’ve already outlined the basics of a reservation hijack, but it can take several forms. As with other types of scams, it tends to evolve over time. The basic premise is that someone will get in touch with you claiming to be from a place you have a reservation with, whether it’s a car rental company or a hotel.

The scammers will try to pull together as much information as they can on you and your booking. Sometimes they’ll target employees of the place you’ve got the reservation with in order to get access to their systems, and other times they may take advantage of a wider data breach (as with the recent Booking.com hack).

They might also get information through other means. Maybe they’ve somehow got access to your email, or to some of your social media posts (where you’ve shared your next vacation destination and a countdown of how many days are left to go). Don’t be caught out if you find yourself speaking to someone who knows a lot about your travel plans.

Tech

I Tried the Best Captioning Smart Glasses, and Only One Leads the Pack

Unlike the other glasses I tested, Even doesn’t sell a subscription plan; everything’s included out of the box.

The only downside I could find with the G2 is that it is largely devoid of offline features, so the glasses have to be connected to the internet to do much of anything. Considering the G2’s capabilities, it’s a trade-off I am more than happy to make.

Other Captioning Glasses I Tested

There are plenty of capable captioning eyeglasses on the market, but they are surprisingly similar in both looks and features. While many are quite capable, none had the combination of power and affordability that I got with Even’s G2. Here’s a rundown of everything else I tested.

Leion’s Hey 2 is the price leader in this market, and even its prescription lenses ($90 to $299) are pretty affordable. The hardware, however, is heavy: 50 grams without lenses, 60 grams with them. A full charge gets you six to eight hours of operation; the case adds juice for up to 12 recharges.

I like the Leion interface, which lays out caption, translation, “free talk” (two-way translation), and a teleprompter feature on its clean app. You get access to nine languages; using Pro minutes expands that to 143. Leion sells its premium plan by the minute, not the month, so you need to remember to toggle this mode off when you don’t need it. Pricing is $10 for 120 minutes, $50 for 1,200 minutes, and $200 for 6,000 minutes. There’s no offline use supported, and I often struggled to get AI summaries to show up in English instead of Chinese (regardless of the recorded language).
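Because Leion bills by the minute, it helps to work out what each pack costs per minute. The short Python sketch below uses only the three prices quoted above; the names `LEION_PACKS` and `cost_per_minute` are made up for this example.

```python
# Leion Pro minute packs as quoted above: minutes -> price in US dollars.
LEION_PACKS = {120: 10.0, 1200: 50.0, 6000: 200.0}


def cost_per_minute(packs):
    """Return the price per minute, in dollars, for each pack size."""
    return {minutes: price / minutes for minutes, price in packs.items()}


rates = cost_per_minute(LEION_PACKS)
for minutes, rate in rates.items():
    print(f"{minutes:>5} min pack: {rate * 100:.1f} cents per minute")
```

That works out to roughly 8.3 cents per minute for the smallest pack, 4.2 cents for the middle one, and 3.3 cents for the largest, so the per-minute price drops by more than half if you buy in bulk.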

You’re not seeing double: XRAI and Leion use the same manufacturer for their hardware, and the glasses weigh the same. The battery spec is also similar, with up to eight hours on the frames and another 96 hours when recharging with the case. XRAI claims its display is significantly brighter than competitors’, but I didn’t see much of a difference in day-to-day use.

The features and user experience are roughly the same, though Leion’s teleprompter feature isn’t implemented in XRAI’s app, and it doesn’t offer AI summaries of conversations. I also didn’t find XRAI’s app as user-friendly as Leion’s version, particularly when trying to switch among the admittedly exhaustive 300 language options. Only 20 of these are included without ponying up for a Pro subscription, which is sold both by the month and minute: $20/month gets you a max of 600 upgraded transcription minutes and 300 translation minutes; $40/month gets you 1,800 and 1,200 minutes, respectively. On the plus side, XRAI does have a rudimentary offline mode that works better than most. For prescription lenses, add $140 to $170.

AirCaps does not make its own prescription lenses. Instead, you must purchase a pair of $39 “lens holders” and take them to an optician if you want prescription inserts. I was unable to test these with prescription lenses and ultimately had to try them out over my regular glasses, which worked well enough for short-term testing. Frames weigh a hefty 53 grams without add-on lenses; the company couldn’t tell me how much extra weight prescription lenses would add to that, but it’s safe to say these are the bulkiest and heaviest captioning glasses on the market. Despite the weight, they only carry two to four hours of battery life, with 10 or so recharges packed into the comically large case. Another option is to clip one of AirCaps’ rechargeable 13-gram Power Capsules ($79 for two) to one of the arms, which can provide 12 to 18 extra hours of juice.

The AirCaps feature list and interface make it perhaps the simplest of all these devices, with just a single button to start and stop recording. Transcriptions and translations are available for free in nine languages. For $20/month, you can add the Pro package, which offers better accuracy, access to more than 60 languages, and the option to generate AI summaries on demand (though only if recordings are long enough). As a bonus: Five hours of Pro features are free each month. Offline mode works pretty well, too. The only bad news is that these bulky frames just aren’t comfortable enough for long-term wear.

Captify’s glasses, the most expensive option on the market (up to $1,399 with prescription lenses!), weigh a relatively svelte 40 grams (52 grams with lenses) and offer about four hours of battery life. There’s no charging case; the glasses must be charged directly using the included USB-connected dongle.

The glasses are extremely simple, offering transcription and translation features—with support for about 80 languages, which is impressive. I unfortunately found the prescription lenses Captify sent to be the blurriest of the bunch, making the captions comparatively hard to read. And while the device supports offline transcription, performance suffered badly when disconnected from the internet. I couldn’t get translations to work at all when offline. For $15/month, you get better accuracy and speaker differentiation, and access to AI summaries of conversations. Prescription lenses cost between $99 and $600.
