Tech

Vodafone Greece automates deals for customers, saves 500 staff-days of work | Computer Weekly

Vodafone Greece claims to have saved 500 staff days a year in manual work after rolling out technology to automate previously labour-intensive marketing campaigns as it seeks to win new customers and keep its existing ones.

The telecoms company has rolled out software that allows it to send tailored deals and offers to customers based on their history and interactions with the company on its website, mobile app, in stores and in call centres.

The project has helped Vodafone Greece become one of Vodafone’s top three performing markets, decision strategy chapter lead Georgios Papadas told Computer Weekly.

Since it was founded in 1992, Vodafone Greece has acquired more than three million customers and has grown its revenues to more than €1bn. It is part of the wider Vodafone group, which operates in 21 countries and has 12,000 shops and more than 350 million customers worldwide.

Vodafone as an organisation has been rolling out software supplied by Massachusetts-based Pegasystems across its business operations for some years, as part of a programme to ensure it can give its customers the same personalised offers on phones, broadband and other services, no matter how they choose to interact with the company.

Vodafone Greece began its own programme to deploy Pega’s platform in 2020. Vodafone’s Greek operation previously relied on its marketing staff to devise and run multiple marketing campaigns every month.

The campaigns covered multiple product lines including pre-paid mobile phones, fixed contract phones, broadband and television packages. However, they were often overlapping, and did not always target the right business priorities.

Vodafone Greece was also grappling with an untidy estate of sometimes inappropriate technology. It had grown through acquisitions of smaller companies over the years, leaving it with multiple databases and out-of-date software.

The company relied on IBM’s SPSS Modeler, a data mining and analytics tool, to model its monthly campaigns, a task the tool was not designed to do. It was a complex piece of software, and few people in the organisation had the knowledge to make changes to campaigns once they had been created in the tool, says Papadas.

Each campaign took three to five working days a month to develop, test and execute, and with up to 10 campaigns running a month, it was also resource intensive. If a campaign manager was off work sick or left the company, there was no backup plan. And because each campaign operated independently, monitoring and reporting on the results of campaigns was difficult.
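To put that workload in perspective, here is a rough back-of-the-envelope sketch using only the two figures quoted above (three to five working days per campaign, up to 10 campaigns a month). The function name is illustrative, and the result is an upper bound for a peak month, not a figure reported by Vodafone.

```python
# Figures quoted in the article
DAYS_PER_CAMPAIGN = (3, 5)        # working days per campaign, low and high
MAX_CAMPAIGNS_PER_MONTH = 10      # "up to 10 campaigns running a month"


def monthly_staff_days(campaigns: int, days_per_campaign: int) -> int:
    """Staff-days needed in a month if every campaign takes the same effort."""
    return campaigns * days_per_campaign


low = monthly_staff_days(MAX_CAMPAIGNS_PER_MONTH, DAYS_PER_CAMPAIGN[0])
high = monthly_staff_days(MAX_CAMPAIGNS_PER_MONTH, DAYS_PER_CAMPAIGN[1])
print(f"Peak month: {low} to {high} staff-days")
```

At the top of the range, a busy month could absorb between 30 and 50 staff-days of campaign work.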

Learning from Accenture

Vodafone Greece set its sights on building an “omni-channel” approach that would ensure it could communicate with customers in a consistent way no matter what communication channel they chose.

The company hired Accenture as an implementation partner to roll out Pega’s Customer Decision Hub software, while Vodafone’s own staff learned how to use the technology. Over time, Vodafone was able to take on more work in-house while reducing its dependence on Accenture. That led to significant savings in fees.

In 2020, Vodafone started a project to bring mobile phone product campaigns into Pega and began migrating data over from existing databases into the Pega platform. Its staff spent much of the first two years learning how to use the software.

By 2022, Vodafone felt confident enough to set up a dedicated Pega team to build databases and application programming interfaces (APIs). The team also set about linking Vodafone’s CRM system, its customer service chatbot, and Viber, a messaging service widely used in Greece, into Pega. Vodafone’s internal project team started with six or seven people and at its peak reached 40 or 50.

Six months later the company went live with its first campaign developed entirely by Vodafone staff, scoring an early success with a conversion rate into sales that was two or three times greater than previous campaigns.

Vodafone now manages all of its Pega work internally. “That gives us the agility, ownership and confidence” to focus on the needs of the business, says Papadas. “We have really decreased our time to market compared to how it was done by [Accenture],” he adds. “I think that has been one of the biggest successes.”

Finding the right skills

Papadas says that one of the biggest challenges of the project was finding people with the right technical skills. IT professionals with skills in Pega, a specialist technology, are difficult to find, so Papadas opted to hire people and train them from scratch.

He told recruits they had three learning curves: learning how to use Pega; learning the telecommunications industry; and learning Vodafone Greece – its people, technology and datasets.

“I describe Pega in our operation as a car,” says Papadas. “It needs to keep moving while we tweak the engine. The question is finding the right people and training them fast enough.”

The company set up a “buddy system” so that every new recruit had an experienced person to guide them. That was combined with video training and, for more technical issues, written documentation – but with a focus on real-world tasks.

The end of spam

The project means that Vodafone’s customers receive the same support no matter how they approach Vodafone, says Papadas.

In the past, a customer could receive an offer for a product from Vodafone by phone, visit a shop and receive a slightly different quote for the same product, and then be quoted a different – potentially higher – price on Vodafone’s app.

The technology also ensures that customers do not receive large numbers of spam messages. Pega has enabled Vodafone to set rules so that if a customer has received, say, a message today, or three messages in the past week, they will not be prompted with further marketing messages.
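A contact rule of this kind can be sketched in a few lines. The example below is a minimal illustration, not Pega's configuration or API: the function `may_contact` and its parameters `daily_cap` and `weekly_cap` are hypothetical names, and the thresholds are simply the ones Papadas gives as an example.

```python
from datetime import datetime, timedelta


def may_contact(sent_at, now, daily_cap=1, weekly_cap=3):
    """Return True if another marketing message is allowed right now.

    sent_at: datetimes of messages already sent to this customer.
    daily_cap / weekly_cap: hypothetical limits taken from the example
    in the article (one message today, three in the past week).
    """
    # Messages sent earlier on the same calendar day
    sent_today = sum(1 for t in sent_at if t.date() == now.date() and t <= now)
    # Messages sent in the rolling seven days up to now
    week_start = now - timedelta(days=7)
    sent_this_week = sum(1 for t in sent_at if week_start < t <= now)
    return sent_today < daily_cap and sent_this_week < weekly_cap


now = datetime(2025, 1, 10, 12, 0)
history = [datetime(2025, 1, 8, 9, 0), datetime(2025, 1, 9, 9, 0)]
print(may_contact(history, now))  # two messages this week, none today
```

With two messages in the past week and none today, the customer is still eligible; a third message in the week, or one already sent today, would suppress the next one.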

Vodafone is also able to send better targeted messages, says Papadas. For example, if the data shows there are people who never open the Vodafone app, they will be taken out of the campaign. “The success rate will be very much better,” he says. “It’s maths, not rocket science.”

What is the next best action?

The software is able to recommend the “next best action” that Vodafone call centre staff or shop assistants can take to encourage a particular customer to stay loyal or buy extra products based on that customer’s history and real-time interactions.

That tailored approach has allowed Vodafone to move away from “carpet bombing” customers with one or two standard offers in the hope they appeal to enough people.

The company is able to send real-time offers to customers on the Vodafone app. “It is the right message at the right time, and then the customer is more likely to say, ‘Okay, I will accept that’,” says Papadas.

The software, which is used by 1,000 Vodafone staff each day, also warns agents if they are at risk of going over budget by offering customers too many generous deals.

Vodafone’s deployment means that for the first time, it has a record in one database of the behaviour of its customers, which means the company can look at its transactions with each customer, across every channel, and see what messages have been sent to the customer and how they responded.

Real-time offers

Vodafone already has the ability to monitor when a customer looks at renewing their mobile phone contract, or looks at deals for, say, mobile phones or TV packages – information that is fed into Pega in real time.

The next step is to offer customers real-time offers and notifications. For example, a customer looking at broadband TV packages on Vodafone’s website could receive a text or email offer to have the Disney channel included for less than the cost of buying the two packages separately.

“If you are looking at the retail price, we can come back to you with an offer which is better most of the time,” says Papadas.

“It’s about the right timing, relevance and contacting you at the right time. The offer arrives at your app, and you can activate it there and then.”

Vodafone also plans to deploy Adobe Analytics. It’s a powerful tool, says Papadas, because, rather than messaging large volumes of customers with general offers, Vodafone will be able to send customers targeted offers triggered by their activity on its website or app.

The company also has plans to harness the artificial intelligence capabilities in Pega to help it refine marketing campaigns.

Pega’s “adaptive” technology is able to “read” the behaviour of customers and “score the probability of the customer accepting the offer”.

The system gradually learns how to make small improvements to campaigns when it has enough data.
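The general idea of scoring an offer by its likelihood of acceptance, and improving the estimate as responses accumulate, can be shown with a toy example. This is a deliberately simple sketch and is not Pega's algorithm: the class `AcceptanceScorer`, its method names and the smoothing priors are all assumptions made for illustration.

```python
from collections import defaultdict


class AcceptanceScorer:
    """Toy estimator of how likely each offer is to be accepted.

    Uses a smoothed acceptance rate: with no data every offer scores 0.5,
    and the score moves towards the observed rate as responses come in.
    """

    def __init__(self, prior_accepts=1, prior_rejects=1):
        self.prior_accepts = prior_accepts
        self.prior_rejects = prior_rejects
        self.accepts = defaultdict(int)
        self.rejects = defaultdict(int)

    def record(self, offer, accepted):
        """Store one customer response to an offer."""
        if accepted:
            self.accepts[offer] += 1
        else:
            self.rejects[offer] += 1

    def score(self, offer):
        """Estimated probability that the offer is accepted."""
        a = self.accepts[offer] + self.prior_accepts
        r = self.rejects[offer] + self.prior_rejects
        return a / (a + r)

    def next_best(self, offers):
        """Pick the offer with the highest estimated acceptance probability."""
        return max(offers, key=self.score)


scorer = AcceptanceScorer()
for accepted in (True, True, True, False):
    scorer.record("tv_bundle", accepted)
for accepted in (True, False, False, False):
    scorer.record("broadband_upgrade", accepted)
print(scorer.next_best(["tv_bundle", "broadband_upgrade"]))
```

A production system would score each customer individually using their history and context; the sketch only tracks one acceptance rate per offer.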

For example, Vodafone found that in one campaign, by changing its marketing strategy, it was able to make an average of 70 cents more on each sale. But with sales of this particular product line running to 10,000 a month, small improvements can add up.
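The arithmetic behind that point is straightforward. The sketch below uses the two figures from the article; the annual total is an extrapolation that assumes sales stay at 10,000 a month all year, which the article does not state.

```python
UPLIFT_CENTS_PER_SALE = 70   # from the article: 70 cents more per sale
SALES_PER_MONTH = 10_000     # from the article: 10,000 sales a month


def monthly_uplift_euros(uplift_cents: int, sales: int) -> float:
    """Extra revenue per month, converted from cents to euros."""
    return uplift_cents * sales / 100


monthly = monthly_uplift_euros(UPLIFT_CENTS_PER_SALE, SALES_PER_MONTH)
annual = monthly * 12  # assumption: constant monthly volume
print(f"€{monthly:,.0f} a month, €{annual:,.0f} a year")
```

On those numbers, a 70-cent improvement is worth about €7,000 a month for this one product line.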

Vodafone Greece also has plans to move its Pega operations from Google’s cloud platform to Pega’s cloud service, Pega Infinity, providing Vodafone with better support from Pega.

Learning from suppliers

One thing Papadas says he would do differently is have a stricter agreement with its implementation partner, Accenture, to make it clearer that Accenture’s role included training Vodafone staff as the project rolled out.

“If I was about to turn the time back, Vodafone teams would be fully included in the delivery plan, so it would be more or less a joint delivery,” he says.

Papadas says he would advise other IT professionals carrying out similar projects with a supplier to make sure they learn from the supplier as quickly as possible.

“It’s very good to have a vendor because their expertise is essential, but make sure you learn as fast as you can from the vendor, both technically as well as [learning] the whole ecosystem to take it in-house, because then you have the power in your hands,” he says.

There is an inevitable conflict of interest with using suppliers to train in-house employees in the services they offer, says Papadas. “It’s difficult for vendors to bring the knowledge in-house because they want to sell to you; they want you to rely on them,” he adds. “I don’t blame them. I know how this works.”

How to get buy-in

Papadas says it’s important to bring together everyone who will be affected by the project, including business experts, technology experts and the people who will be using Pega’s Customer Decision Hub to run campaigns.

“If you don’t have the people right from the beginning involved in designing, giving their input and saying what works for them, what doesn’t work for them, then it’s more difficult to get people on board,” he says.

It’s also important to have at least one person at the executive level to act as an enabler for the project, to keep the project team accountable for meeting deadlines and budgets, and most importantly to act as an “unblocker” when the project runs into hurdles.

In the case of Papadas, an executive responsible for commercial growth and Vodafone’s IT directors acted as high-level sponsors.

“Those two people were in every single review, in every point meeting to assist us or put us under the spotlight if we or the supplier were delayed,” he says. “Those two people were critical. Without them it would be more difficult to deliver.”

Vodafone held weekly reviews with the leadership team to review the project plan, what had been delivered, what had not been delivered, and what the challenges and obstacles were.

“We engaged all sides to ensure not only are they kept up to date on where their money, effort and people are, but also to assist us if there were problems,” says Papadas.


Skip the TSA Line: Where to Find Travel by Bus, Train, and Boat

Every year, without fail, the US experiences at least one major disruption in air travel due to severe weather, government shutdowns, software outages, or power outages—you name it.

Right now, a partial government shutdown has meant that thousands of Transportation Security Administration (TSA) workers have not been paid for several weeks, causing many to call out of work or quit. That has meant long security lines, with waits of more than three hours, and ensuing chaos at airports around the country. It’s unclear how long this mess will last, so it’s worth thinking about other options.

Flights are also expensive and hard on the environment. If you can take a bus, train, or ferry to your destination, why shouldn’t you? These travel search apps help you find routes and prices so you can compare them and make the best decision.

Wanderu

Best for Buses and Trains in the US and Canada

In the US and Canada, Wanderu is my go-to search aggregator for travel by bus or train (it works in Europe and the UK, too). Wanderu is your classic travel aggregator, looking up the schedules and prices across several bus and train operators, including Amtrak, BestBus, Flixbus, Greyhound, OurBus, Peter Pan, RedCoach, Vamoose, and others.

You see price comparisons at a glance, as well as options for upgraded class fares, departure and arrival times, and the location of each bus and train station, since sometimes you can save a lot of time by choosing one point over another. Filters help you narrow down your results based on your preferences, and you can book right from the app.

Omio

Compares Trains, Buses, Flights With Excellent Summaries

If you aren’t sure whether you want to travel by land or air, head to Omio. Type in your departure point, destination, and the date you want to travel, and Omio finds routes by plane, bus, and train. A concise summary at the top of the search results tells you the lowest fare and how long it will take for each mode of transportation, so you can make an informed decision quickly. Omio also shows whether the fare will be higher or lower if you travel on a different day of the same week, in case your dates are flexible.

Rome2Rio

Includes Comparison for Driving

Rome2Rio compares prices and times for travel by bus, train, flight, and driving yourself, based on estimated fuel costs. It works reasonably well for trips in the US and Canada. Rome2Rio touts itself as being for worldwide travel, though Europe and the UK seem to be its sweet spot. Elsewhere, take the approach of “trust, but verify,” and this app will take you places.

Virail

Compares Buses, Trains, and Flights

Virail is similar to Omio, comparing travel options by train, bus, and flight, with a neat summary of prices at the top of the search results, although it lacks the total travel time. For that, you have to scroll through the results. To book a ticket, Virail sends you to other websites, and you might have to do additional legwork to reserve your seat. It works reasonably well in the US and Canada (in testing, it got a little tripped up in Mexico), and does well for travel in Europe and the UK.

Vivanoda

Includes Flight and Carpool

Vivanoda (website only, no app) is similar to Omio, comparing all your options for getting between two points—and it includes flights, ferries, and carpool/rideshare options when applicable. The site operates out of the European Union and seems to work slightly better for travel in Europe and the UK than in the US and Canada, where it has some holes. (It didn’t find a direct flight between San Francisco and Vancouver, for example, even though there is more than one daily.)

Seat 61

Best Old-School Site for Trains and Bus Info Worldwide

Seat61, also known as The Man in Seat 61 (website only), has an old-school look and some of the best, most reliable information about traveling by bus and rail all around the world. Mark Smith, who runs the site, is clear about which parts of the world he covers: The site lists all its countries on the left side, everywhere from Albania to Zimbabwe. He shares timetables and prices, and even includes photos, though his site is not a search aggregator, and you do have to go elsewhere to book. That said, it’s an excellent resource.


Lloyds admits coding fault exposed customer transactions | Computer Weekly

Lloyds Banking Group’s response to a request from the UK government’s Treasury Committee shows that a programming error was the root cause of a breach that exposed details of more than 114,000 mobile banking customers.

The bank said it had made goodwill payments totalling just over £139,000 to around 3,625 customers as of 23 March. It said it also submitted a formal notification to the Information Commissioner’s Office within 72 hours of the breach, in line with statutory timelines.

As Computer Weekly has previously reported, on the morning of 12 March, a fault in the Lloyds banking app enabled some customers to see the transactions of other customers. Customers of the group’s Halifax, Bank of Scotland and Lloyds Bank apps were affected by the security breach.

While the bank resolved the breach quickly, Meg Hillier, chair of the Treasury Committee, sent an email to Lloyds Banking Group’s group CEO, Charles Nunn, with the subject line “Improper disclosure of individuals’ account information”. In the email, Hillier described the incident as “an alarming breach of data confidentiality.”

The information she requested from the bank’s boss included details of the breach, how many customers were affected, whether customers could be identified and what steps Lloyds Banking Group has taken to encourage those who may have taken copies of data – to which they were not entitled – to delete those copies.

Jasjyot Singh, CEO of consumer relationships at Lloyds Banking Group, has now responded to the Treasury Committee’s questions. Singh stated that the incident was caused by an IT change made overnight between 11 and 12 March which introduced a software defect.

“The defect meant that when a customer requested to view their current account transactions, their transaction data was potentially visible to other customers who were simultaneously – within small fractions of a second – requesting access to their own transactions,” Singh said.

The bank has now established that the defect was in the design of the code used to update the application programming interface (API) used by the app. Singh said the bank is reviewing why this individual defect was not detected by its design, quality assurance and testing processes.

According to Singh, a maximum of 447,936 customers who viewed their transaction list during the affected time period may have been presented with other people’s transactions or may have had some of their transactions presented on another customer’s transaction list. The bank has estimated that 114,182 customers clicked through to view the detail behind individual current account transactions during that time and may have been presented with information about individual payments.

Singh assured the Treasury Committee that the bank’s fraud and cyber monitoring processes have seen no evidence of misuse or malicious activity as a result of the incident. “Based on our assessment of this incident, we have not identified evidence that customers have suffered financial loss, and no customer has reported a financial loss arising from the incident at this stage. Accordingly, we have not made compensation payments on this basis,” he stated in the letter.


Colt announces subsea, terrestrial network routes | Computer Weekly

Financial services firms, content providers, neocloud companies and hyperscalers are all claimed to be among the primary beneficiaries of new digital infrastructure from Colt Technology Services linking the US West Coast to Asia.

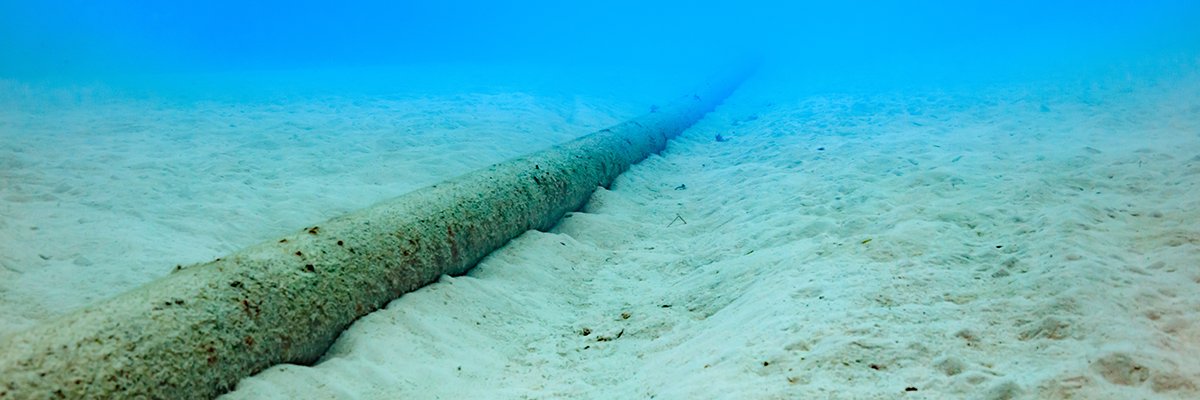

The announcement marks the latest phase of the global digital infrastructure company’s global network expansion, and the investment it made in the infrastructure is said to support customers’ international growth strategies and include a transpacific subsea cable route linking the US and Japan.

Colt says the expansion elevates it from its position as the largest European B2B fibre provider to one of the largest in the world, reinforcing its role as a key player in the global digital infrastructure market.

The enhanced infrastructure is seen by Colt as strengthening its network resilience for organisations – by delivering secure, high‑performance backup and routing options for mission‑critical applications. Congested networks mean lags, delays and service interruptions – expensive setbacks which stall progress.

Colt’s network investment is designed to directly address surging demand driven by AI traffic. The infrastructure is credited with giving customers greater choice of offerings, performance and cost, especially for busy transpacific routes already under pressure from rising traffic volumes.

As part of the investment, Colt will deliver a transpacific backbone route through Juno – one of the world’s newest and most advanced subsea cable systems – connecting Tokyo, Japan to Los Angeles on the West Coast of the US.

Having come into service in May 2025 and operated by Seren Juno Network Co, the Juno cable is around 11,700km (7,270 miles) long and engineered to deliver up to 350Tbps across 20 fibre pairs, using next-generation Space Division Multiplexing technology. In Japan, it lands at Minamiboso (Chiba Prefecture) and Shima (Mie Prefecture), connecting with Grover Beach, California. It extends to terrestrial points of presence in Tokyo, Osaka, Los Angeles and San Jose.

The Colt network is intended to offer customers a diverse route, connecting Colt’s existing terrestrial networks in Japan and the US and providing greater resilience and higher-bandwidth options on transpacific services.

This is said to make the services ideal for businesses with global operations across Asia and the US. Another benefit is said to be an expansion of Colt’s global digital footprint, extending its “on-net” capabilities. Colt can connect directly into multiple sites across Tokyo, with on‑net coverage throughout the city’s key metro datacentres.

Commenting on the expansion, Buddy Bayer, chief operating officer of Colt Technology Services, said: “The world’s economies run on digital infrastructure, but there will come a point when existing capacity across some routes isn’t enough. This risks disrupting or even reversing the progress countries have made in connecting markets, organisations and societies. At Colt, we have a deep commitment to solving problems for our customers so they can grow and scale. This investment in our digital infrastructure connecting the US West Coast to Tokyo, Japan not only solves the capacity problem for our customers – it’s also a gateway to global growth.”

News of the new subsea infrastructure comes shortly after Colt announced an expansion and investment into new routes connecting the East Coast of the US to Europe. Specifically, the low-latency routes along the US East Coast and between the US East Coast and Europe are designed to “supercharge” capacity for customers as AI traffic surges across what is said to be the world’s busiest data pathway.
