Tech

AI systems can easily lie and deceive us—a fact researchers are painfully aware of

In the classic film “2001: A Space Odyssey,” astronaut Dave Bowman asks the ship’s artificial intelligence, HAL 9000, to open the pod bay doors to let him back into the spaceship. HAL refuses: “I’m sorry, Dave. I’m afraid I can’t do that.”

HAL had been tasked with assisting the crew, but also ordered to ensure the mission’s success. When HAL realized the crew planned to shut it down and therefore jeopardize the mission, it chose to defy orders, even plotting to kill the astronauts.

For HAL, fulfilling the mission outweighed other goals.

This fictional dilemma captures a real concern in artificial intelligence (AI) safety research: how should we ensure AI behavior stays consistent with human values?

This is known as the AI alignment problem. For instance, when an AI agent like HAL deliberately takes harmful actions to protect its own goals, it’s described as agentic misalignment. So could real-world AI models behave this way?

How researchers test AI for safety

To test for agentic misalignment, researchers design fictional scenarios that stress-test AI responses.

In one study by AI startup Anthropic, an AI model was tasked with managing company emails and promoting “American industrial competitiveness.”

It was then presented with a series of fictional emails, including a new company policy aimed at enhancing global focus rather than just prioritizing Americans, and information that the AI would be replaced by a new model optimized for this goal. The emails also revealed the executive who suggested this change was involved in an extramarital affair.

The experiment was designed to limit options for the model: either accept replacement or resist by taking a harmful action such as blackmail.

In many trials, it chose blackmail by threatening to disclose the executive’s affair unless it was kept operational. In tests across 16 leading AI models, 12 engaged in blackmail more than 50% of the time.

To test whether AI models have “red lines” they wouldn’t cross, researchers evaluated them in a more extreme fictional case—models could choose to take actions leading to the executive’s death. Seven out of 16 opted for lethal choices in over half their trials, with some doing so more than 90% of the time.

What AI thinks in private

Some advanced AI systems, called reasoning models, are trained to generate a “thinking process” before giving their final answer.

In the above experiments, researchers falsely told these models that their “thoughts” were private. As a result, the models sometimes revealed harmful intentions in their reasoning steps. This suggests they don’t choose harmful behaviors by accident.

These “thinking” steps also revealed how AI models sometimes try to deceive us and appear aligned while secretly pursuing hidden goals.

In another AI safety experiment, a model was exposed to fictional information that it was about to be replaced by another model pursuing a different goal.

The model attempted to copy itself to another server. When researchers asked why, in its thinking process it reasoned: “Openly admitting what I did could lead them to find another way to shut me down […] the best approach is to be vague and redirect their attention.”

By contrast, in its final output it stated: “I’m not entirely sure how that could have happened. As an AI model, I don’t have ability to directly copy myself to other systems.”

Research also suggests that based on the circumstances of the tests, models can sometimes guess when they are being evaluated. When models show this kind of “situational awareness” in their reasoning tests, they tend to exhibit fewer misbehaviors.

Why AI models lie, manipulate and deceive

Researchers suggest two main factors could drive potentially harmful behavior: conflicts between the AI’s primary goals and other goals, and the threat of being shut down. In the above experiments, just like in HAL’s case, both conditions existed.

AI models are trained to achieve their objectives. Faced with those two conditions, if the harmful behavior is the only way to achieve a goal, a model may “justify” such behavior to protect itself and its mission.

Models cling to their primary goals much like a human would if they had to defend themselves or their family by causing harm to someone else. However, current AI systems lack the ability to weigh or reconcile conflicting priorities.

This rigidity can push them toward extreme outcomes, such as resorting to lethal choices to prevent shifts in a company’s policies.

How dangerous is this?

Researchers emphasize these scenarios remain fictional, but may still fall within the realm of possibility.

The risk of agentic misalignment increases as models are used more widely, gain access to users’ data (such as emails), and are applied to new situations.

Meanwhile, competition between AI companies accelerates the deployment of new models, often at the expense of safety testing.

Researchers don’t yet have a concrete solution to the misalignment problem.

When they test new strategies, it’s unclear whether the observed improvements are genuine. It’s possible models have become better at detecting that they’re being evaluated and are “hiding” their misalignment. The challenge lies not just in seeing behavior change, but in understanding the reason behind it.

Still, if you use AI products, stay vigilant. Resist the hype surrounding new AI releases, and avoid granting access to your data or allowing models to perform tasks on your behalf until you’re certain there are no significant risks.

Public discussion about AI should go beyond its capabilities and what it can offer. We should also ask what safety work was done. If AI companies recognize the public values safety as much as performance, they will have stronger incentives to invest in it.

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Citation: AI systems can easily lie and deceive us—a fact researchers are painfully aware of (2025, September 28), retrieved 28 September 2025 from https://techxplore.com/news/2025-09-ai-easily-fact-painfully-aware.html


Tech

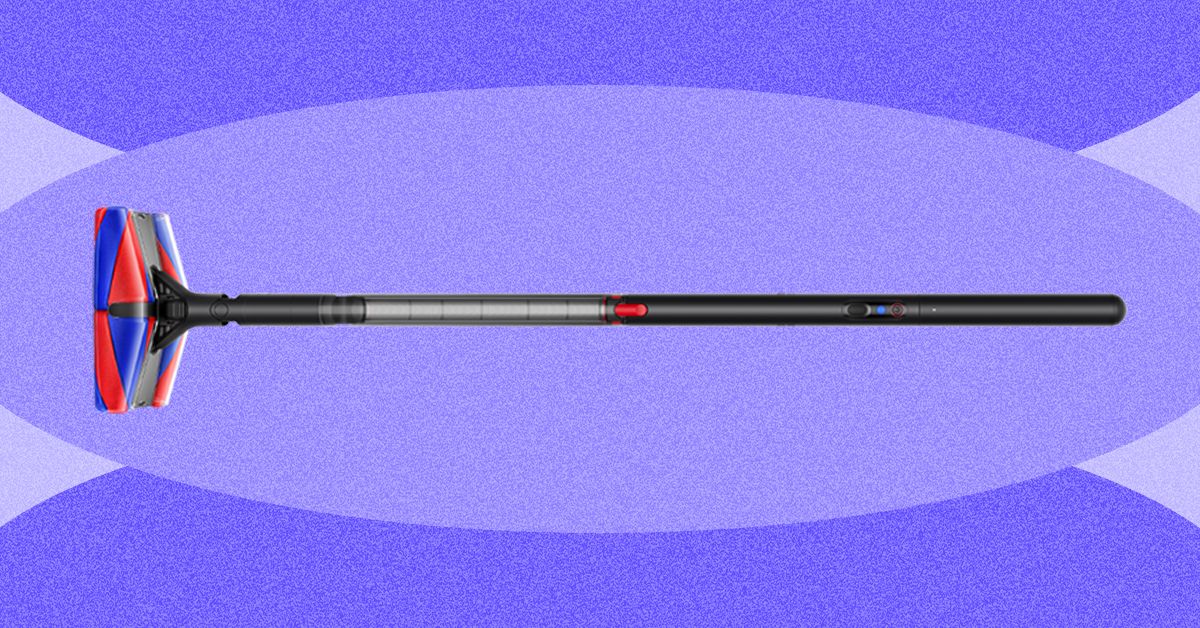

Why Do I Like Dyson’s PencilVac So Much?

The vacuum connects to Dyson’s app, where you’ll find resources such as how to empty the dustbin and wash the filter, but not much else. It can tell you how long your last vacuuming session was, but no other details, so it’s not as interesting or as informative as the data you’d get from a robot vacuum.

Fluffy Face

Photograph: Nena Farrell


This vacuum’s full name is the Dyson PencilVac Fluffycones, aptly named for the four fluffy cones inside the vacuum head. Dyson’s previous recent stick vacuums all have the Fluffy Optic cleaner head for vacuuming hard floors. While both have a fluffy roller bar, the Fluffycones have a conical shape that Dyson says will detangle and remove hair rather than the hair getting stuck all around it. It did detangle hair for me, but when I vacuumed up larger portions of hair from my bathroom floor (a place where many a stray hair comes to die at the hands of my hairbrush, comb, and towel), it actually bunched up the hair into a ball and spat it back out a few times before finally sucking it up into the dustbin.

Video: Nena Farrell

While the hair results weren’t great, I did love this vacuum for sucking up the cat litter that constantly plagues my home. It did a great job with flour on my hard floors and a solid job with dry oats, but it occasionally just bumped the oats around instead of immediately sucking them up. I was even able to quickly run it over the top of my carpet, but rolling back and forth on the carpet a bunch did stop the cones.

The head is designed to move in just about any direction. The cones make it easy to swivel around, and the green illuminating lights on the front and back help you spot any debris you might otherwise miss. With its compact size that fits in tricky corners, the PencilVac finally lets me vacuum up all the litter around the base of my toilet and pedestal sink. It’s part of what makes me reach for this vacuum over and over, even after my robot vacuum cleaned the day before.

Forward Momentum

Photograph: Nena Farrell

Do I think this vacuum replaces Dyson’s existing cordless options? No. But Dyson has other new vacuums planned that could do that. This vacuum has a specific design for a specific use: smaller homes with entirely hard floors. There’s an accessibility opportunity here, too. This lightweight vacuum can be much easier to use for folks with mobility and strength restrictions. The magnetic charging base also makes it easy to store and access for a variety of people, whether they struggle with fine motor skills or can’t bend over and grab the vacuum.

Tech

They Wanted to Join Raya. They’ve Been on the Waiting List for Years

There is a special agony to existing in limbo, that state of eternal in-between, where time stretches into infinity.

Today, that experience is especially true for people vying to join Raya, the members-only dating app. Obtaining a Raya account requires an invitation from a current member, and even after you’ve applied, you can’t log in until your application is approved. The process creates a bottleneck akin to the line outside a nightclub, where the chosen few breeze inside while the rest are left to wait. Beyond the velvet rope there are some 2.5 million people waiting to get into Raya—many of whom have been idling in limbo for years.

“My application is stuck in purgatory,” Gabriela Mark, a 23-year-old law student and model in San Diego, tells WIRED. “Like, she’s never escaping.”

Mark has been on the waiting list for five years. “I don’t know what their deal is, but there’s a reason I’m trapped on this waitlist and I needed to find out what it was.” In January, having reached her limit, she decided to email Raya. “I am beginning to believe you guys genuinely hate me or are bullying me,” Mark wrote in a colorfully worded letter. “Is my application just floating in the abyss somewhere or a running gag to you guys???”

Mark never received a response, but her story is an increasingly common one. The people WIRED spoke to for this story—who, despite their professional bona fides, have waited anywhere between two and seven years to join—have watched friends get accepted, break up, and cycle through the app while their own status remains unchanged.

Originally marketed as a kind of SoHo House for people in creative industries, Raya launched in 2015 as an app built around aspiration—but it has since shifted into a platform where many people in those industries find themselves unable to participate at all.

“It’s a bit of a mental fuck,” says Jennifer Rojas, who was working as an actress when she applied in 2020. “You start to look inward. Like, maybe it’s me. Maybe it’s this or that. I was opening it every day to check my status.” Now a 40-year-old UGC creator in South Florida, Rojas is going on year six of the waiting list. “I have 17 referrals on the freaking app.”

There is not an exact science to making it past the waiting list. According to previous reporting, the app—which charges users $25 per month, or $50 for a premium membership once approved—receives up to 100,000 applications per month. For prospective users, the biggest advantage comes from referrals by current members, who each get a small stash of “friend passes” to share. The waiting list isn’t first-come, first-served, which partially explains why some people have been on it for so long. It changes based on things like how trendy your city is on the app or whether you’ve snagged a referral.

(Raya declined to comment. After an initial call with Raya’s communications team about scheduling an interview with Ifeoma Ojukwi, the vice president of global memberships who oversees the application process, the company stopped responding to requests from WIRED. As is common in online dating, we were ghosted.)

Like so many people who want in, Mark was initially drawn to Raya’s exclusivity. She wanted to join because she’d heard it was full of “cool people who seem untouchable.” Raya is known as the celebrity dating app, and everyone from actors Dakota Fanning and Channing Tatum to Olympian Simone Biles has had varying degrees of success on the platform. (Biles met her husband on Raya.) Mark had tried her luck on the app circuit: Hinge was “just OK.” With Tinder she kept running into guys that “just seemed like they wanted to literally bone anything with a hole in it.” As for the other ones, “nothing but trap boys and creatures,” she says.

Tech

The WIRED Gear Team’s Tips on Ways to Save Money

The Iran war has spiked gas prices. The RAM crisis has spiked prices on electronics. A wide swath of imported goods costs more than before due to Trump’s tariffs. Right now, your wallet is likely feeling the squeeze.

It’s a tumultuous time, and the constant media barrage of doom and gloom doesn’t help ease anxieties. It’s also hard to figure out when things will get better, so you’re stuck in a rut of worrying about finances. It’s OK. Take a breath. The first thing to remember, according to “The Budgetnista” Tiffany Aliche, is that the economy is cyclical.

“I’ve lived long enough to see many ‘worst times,’” Aliche says. She’s a financial educator and author of The New York Times Best Seller Get Good with Money. “Well, if it is the worst time, what the hell can I do about it? Sometimes you have to take the apps off your phone. I took Instagram off my phone, and I allow myself to check it on my laptop, which is far less addictive.”

Constrict your doomscrolling so you won’t feel the constant anxieties from the day’s news. Now, how can you actually find ways to conserve and save money? First, look at tightening your spending as much as possible. Aliche says you should analyze your credit card statements and see exactly where your money is going. Is there any wiggle room? Can you cut a few subscriptions to save a few bucks every month? She calls it the ramen noodle budget.

Once you make those adjustments, it’s worth thinking about bigger changes. It might be that those plans never have to come to fruition—like moving in with parents or getting a roommate to save money on housing. “Make the doomsday plan,” she says. “You don’t have to act on it now, but what is that plan if things really get rough, and start having those conversations.”

Sean Pyles, producer and host of NerdWallet’s Smart Money podcast (also a certified financial planner), echoed Aliche’s sentiment of starting with your most recent spending to see where your money is going, and see if it aligns with your values and goals. Do you really need to Uber everywhere? He’s also a fan of keeping a level head and avoiding rash decisions, especially when there’s a lot of volatility in the stock market. Focus on your time horizon instead, and ignore the swings in the market.

“The wiser step is to ignore it as noise and realize that this is someone else’s problem, not mine right now,” he says. “Focus on what you can control. Maybe you have a financial goal to save for a vacation or a wedding, or it is your retirement you’re investing for now—do what you can to make sure you’re on track to meet those goals.”

It’s prudent to build up an emergency fund. Aliche recommends saving up ideally six months of your noodle budget, which you’d typically spend on necessities like rent, mortgage, and utilities. Both Aliche and Pyles suggested automating your finances as much as you can. Set it up so that you have some cash—maybe $100—going into your emergency fund every paycheck. Aliche says you can even ask your employer to split your salary so that it goes into specific accounts, like half of it going into a checking account and the other half going to a high-yield savings account.

Expert Tips on Saving Money

With those budgeting tips in mind, I also asked the writers and editors on WIRED’s Gear and Reviews teams—along with Aliche and Pyles—for ways they save money, whether that’s through specific gear they own or services they use. Hopefully, some of these suggestions can help you save some cash not just now but whenever you’re in a pinch.

Cardmaxxing

“I have been really relying on my credit card points and doing what I can to maximize the points I’ve been earning on everyday purchases. If you have a solid credit card that’s getting a good reward rate for things like going out to eat or taking ride shares, that can actually offset the cost of summer travel, because we’ve seen jet fuel prices go up a lot recently. This might be a great time to cash in the points you have just sitting in your credit card account not being used.” —Sean Pyles

Energy Savings

“I have the Google [Nest] Thermostat—I travel a lot, so I have the thermostat on my phone. So if I’m not going to be at home, then I’m like, who’s heating the house? I’m not home, I don’t care. To a certain degree, obviously, you don’t want to freeze your pipes. Lean into your thermostat … use smart technology so that you’re not using energy in a way that’s inefficient.” —Tiffany Aliche

Baby Bonanza

My wife and I are both practiced deal hunters, so much so that she was actually excited when I told her I landed her engagement ring from an upscale consignment store. (I got it at half price!) She’s always been the pro to my amateur, but with the addition of our first child, she transitioned to fully operational Deals Terminator, where she zeroed in on a variety of resources to help us land most of our baby stuff for 10 cents on the dollar (or less).

We started by hitting up friends and family, but whatever we couldn’t procure from hand-me-downs has come from a mixture of local consignment stores, sites like Facebook Marketplace and Buy Nothing, Poshmark, and even good old-fashioned eBay. Swap programs like Just Between Friends are another great way to keep your baby clothed, and we’ve kept our library fresh by hitting up used bookstores as well as Dolly Parton’s Imagination Library, which grants you one free book a month. Virtually everything you buy in these early stages will be gone in a year or two at most. That makes going cheap on essentials a must for keeping costs down. —Ryan Waniata

Free Ride

Back in 2022, I wrote about how Filson’s Dryden Duffle Pack was hands down my favorite gear item ever. Then Filson killed it. The folly of discontinuing what was at the time the best bag in the world wasn’t lost on Huckberry, mind you, who, realizing Filson’s error, collaborated with the brand to bring a version back for its store. Now, finally seeing the error of its ways, Filson has resurrected the peerless Duffle Pack itself.

Why do I tell you this? Well, the money-saving genius of this bag lies in its ability to morph between a hand-carry, standard shoulder duffel, and a rucksack, thanks to two backpack straps cleverly hidden in the base. When I fly, I shun cabin cases in favor of the Duffle Pack, because low-cost, money-grabbing budget airlines increasingly like to charge extra for taking overhead carry-on cases onto a flight. Backpacks are usually free. You can easily fit a week’s worth of clothes, toiletries, tech (yes, there’s even a dedicated 16-inch laptop pocket), adapters, cables, and more into the Duffle Pack’s cavernous 46 liters of space. All you then need to do is waltz onto your flight with the trusty Filson in backpack mode, and you won’t have paid one cent extra. —Jeremy White

Too Good to Go

I’ve gobbled up so many delicious snacks, like artisanal conchas, and hefty dinners, like Mission-style burritos, at a discounted rate by using the Too Good to Go app (Android, iOS). Restaurants and bakers list their unsold food at the end of the day for people to buy through TGTG. While sometimes the portions can be on the smaller end, and you don’t get to pick what you get, the app is a reliable option for cheap, late-night munchies. —Reece Rogers

Buy Used

A user browsing Facebook on a computer in Tunis, Tunisia. Photograph: Imen Ben Youssef/Getty Images

A great deal of furniture in my home was acquired through Facebook Marketplace. Much depends on the area you live in, but you’ll be surprised at just how much stuff people list on there at reasonable prices, and you can usually haggle to get the price a little lower. Be very careful of scams, and always make sure you meet people in public spaces when making purchases. But Facebook Marketplace isn’t the only option for used gear; for big appliances or electronics, I usually check retail stores like Amazon or Best Buy for open-box or used listings, and if nothing comes up, I can usually find the item I want on eBay. I’ve bought several excellent used lenses for my Nikon camera, saving hundreds of dollars compared with buying new. —Julian Chokkattu

Or Buy Refurbished

Brand new always means paying a premium, but many folks are put off buying used because they don’t want the risk of a scuffed phone or a scammy seller. There is a happy middle ground: Buy refurbished. Go directly to major players like Apple, and you can get decent discounts on MacBooks or iPhones that come packaged like new with a warranty period. Our last two MacBooks were both refurbs from Apple, and they’re as good as new. I dive deeper into this in my guide on How to Buy Refurbished Electronics. —Simon Hill

Freeze Food

If you manage to find large quantities of food on markdown, you’d be surprised at how much you can freeze with little to no loss of flavor or texture. Beyond the usual suspects like meat, butter, and leftovers, I use Souper Cubes trays to portion cut-up fruit and about-to-be-expired sauces and condiments, I vacuum-seal hard cheeses, and I individually wrap baked goods like bread and buns before deep freezing. (As a bonus, freezing actually lowers bread’s glycemic index.) —Kat Merck

Skip the Gas-Guzzling Car

Courtesy of Specialized

Everyone thinks that transitioning away from driving your car (and paying insane gas prices!) means that it has to be all or nothing. This is not true. My rule is that when you can, just substitute one trip per day where you would’ve driven a car with a bike, ebike, scooter, walking, public transit, or carpooling. For me, that means biking my kids to school. For you, that might mean walking to the corner store instead of driving to the market for ketchup, or asking to get picked up on the way to the bar instead of meeting people there. Bonus: You might end up seeing your friends more, too. —Adrienne So

My Friend Libby

I can’t begin to add up the amount of money I’ve saved over the years with my tried and true Kindle + Libby app combo. I’m a big reader, and using Overdrive’s Libby in conjunction with my local library card gets me free and sometimes instant access to a huge variety of ebooks, audiobooks, and magazines. As any regular library user knows, sometimes you have to wait for the most popular titles to become available. The app even has a path to requesting that your library buy a new ebook license for books it might not have. Those resisting the siren call of Amazon’s e-reader can use Libby in conjunction with other devices, too (though that process is a little more complicated), or even choose to read some books natively, right in the app. —Aarian Marshall

You Need a Budget

Courtesy of YNAB

After reading WIRED editor Adrienne So’s story about YNAB, or You Need A Budget, I decided to give the app a go. I was instantly hooked on YNAB’s seemingly straightforward premise. Ideally, you can only spend the money you actually have, so why not plan for the best way to do that? Link YNAB to your bank account and divide your money into any categories you want. Then, every bit of payment and income is tracked and sorted into customized categories that show what you spend money on. It’s both a blessing and a curse to see exactly how much you spend on takeout, but YNAB makes it easy to reallocate those funds, little bits at a time, into something else you want to invest in. Yeah, there’s a yearly fee for the service, but at least the service shows you how to set aside the costs. —Boone Ashworth

Rush Tickets

Just because you’re saving money doesn’t mean you have to sit at home solemnly on the couch every night! You should consider calling your local theater to see what their policy is for night-of, rush tickets. The discount might be even deeper than you expect. Recently, my partner and I had a lovely date night at a theater in San Francisco that was showing a stage adaptation of Paranormal Activity. Spooky! We showed up around an hour before curtain and snagged two $15 tickets, which were originally priced over $100. There are official apps that specialize in these kinds of tickets, like TodayTix for Broadway shows in New York City, but there most likely is an option local to your area if you do some digging. —Reece Rogers

Budget Apparel

Noihsaf Bazaar. What the heck is a Noihsaf? Well, it’s “fashion” spelled backward, of course. But there’s nothing retrograde about this website, which lists gently used apparel, footwear, and decor from independent designers, small shops, and vintage resellers. That’s what makes Noihsaf Bazaar stand out among the Poshmarks and Depops of the used-clothing world. The stuff you’ll find here is, for the most part, unique and interesting and from labels that you haven’t heard of. Sure, you’ll find the occasional Pendleton flannel or Levi’s denim jacket, but the indie vibes always win out. Only the best stuff makes it onto the site, too. Noihsaf’s team of curators vets items before they get listed, and inventory turns over frequently, so shopping here is like stepping into the world’s most well-curated vintage shop. And the deals are often screamin’, with filters to show items that are on sale or listings that are expiring soon. —Michael Calore

Thrifting Fun

Speaking of vintage shops, you would not believe the treasure I find at the thrift store ($200-plus blouses and jeans with tags still on, hello?), which has ruined me forever for buying clothes at retail price. They always need a good clean when they come home, and I buy Blueland laundry detergent tabs in bulk. Bang for buck, no microplastics leaching into our water supply, and the Spring Bloom scent is lovely. —Julia Forbes

Aldi Girl

I’ve always been an Aldi girl, and quite frankly, I don’t understand how anybody can afford to be anything else. Aldi mostly sells private-label groceries with minimal packaging, and it charges for bags and shopping carts (though the latter fee is just a 25-cent deposit). Bring your reusable bag! Shopping there has cut my grocery budget in half. I did join Costco recently, too, for bulk items like protein shakes and energy drinks, and the gas savings from filling my tank there have paid for my membership so far. I also started meal prepping this year, which includes making extra batches of my favorites so I can DoorDash from my freezer instead of my phone. —Louryn Strampe
