Tech

Crispr Offers New Hope for Treating Diabetes

Crispr gene-editing technology has demonstrated its revolutionary potential in recent years: It has been used to treat rare diseases, to adapt crops to withstand the extremes of climate change, and even to change the color of a spider’s web. But the greatest hope is that this technology will help find a cure for a global disease, such as diabetes. A new study points in that direction.

For the first time, researchers succeeded in implanting Crispr-edited pancreatic cells in a man with type 1 diabetes, an autoimmune disease where the immune system attacks insulin-producing cells in the pancreas. Without insulin, the body is then unable to regulate blood sugar. If steps aren’t taken to manage glucose levels by other means (typically, by injecting insulin), this can lead to damage to the nerves and organs—particularly the heart, kidneys, and eyes. Roughly 9.5 million people worldwide have type 1 diabetes.

In this experiment, edited cells produced insulin for months after being implanted, without the need for the recipient to take any immunosuppressive drugs to stop their body attacking the cells. The Crispr technology allowed the researchers to endow the genetically modified cells with camouflage to evade detection.

The study, published last month in The New England Journal of Medicine, details the step-by-step procedure. First, pancreatic islet cells were taken from a deceased donor without diabetes, and then altered with the gene-editing technique Crispr-Cas12b to allow them to evade the immune response of the diabetes patient. Cells altered like this are said to be “hypoimmune,” explains Sonja Schrepfer, a professor at Cedars-Sinai Medical Center in California and the scientific cofounder of Sana Biotechnology, the company that developed this treatment.

The edited cells were then implanted into the forearm muscle of the patient, and after 12 weeks, no signs of rejection were detected. (A subsequent report from Sana Biotechnology notes that the implanted cells were still evading the patient’s immune system after six months.)

Tests run as part of the study recorded that the cells were functional: The implanted cells secreted insulin in response to glucose levels, representing a key step toward controlling diabetes without the need for insulin injections. Four adverse events were recorded during follow-ups with the patient, but none of them were serious or directly linked to the modified cells.

The researchers’ ultimate goal is to apply immune-camouflaging gene edits to stem cells—which have the ability to reproduce and differentiate themselves into other cell types inside the body—and then to direct their development into insulin-secreting islet cells. “The advantage of engineering hypoimmune stem cells is that when these stem cells proliferate and create new cells, the new cells are also hypoimmune,” Schrepfer explained in a Cedars-Sinai Q+A earlier this year.

Traditionally, transplanting foreign cells into a patient has required suppressing the patient’s immune system to avoid them being rejected. This carries significant risks: infections, toxicity, and long-term complications. “Seeing patients die from rejection or severe complications from immunosuppression was frustrating to me, and I decided to focus my career on developing strategies to overcome immune rejection without immunosuppressive drugs,” Schrepfer told Cedars-Sinai.

Although the research marks a milestone in the search for treatments of type 1 diabetes, it’s important to note that the study involved only one participant, who received a low dose of cells for a short period, not enough to free the patient from controlling their blood sugar with injected insulin. An editorial by the journal Nature also says that some independent research groups have failed in their efforts to confirm that Sana’s method provides edited cells with the ability to evade the immune system.

Sana will be looking to conduct more clinical trials starting next year. Without overlooking the criticisms and limitations of the current study, the possibility of transplanting cells modified to be invisible to the immune system opens up a very promising horizon in regenerative medicine.

This story originally appeared on WIRED en Español and has been translated from Spanish.


What It Will Take to Make AI Sustainable

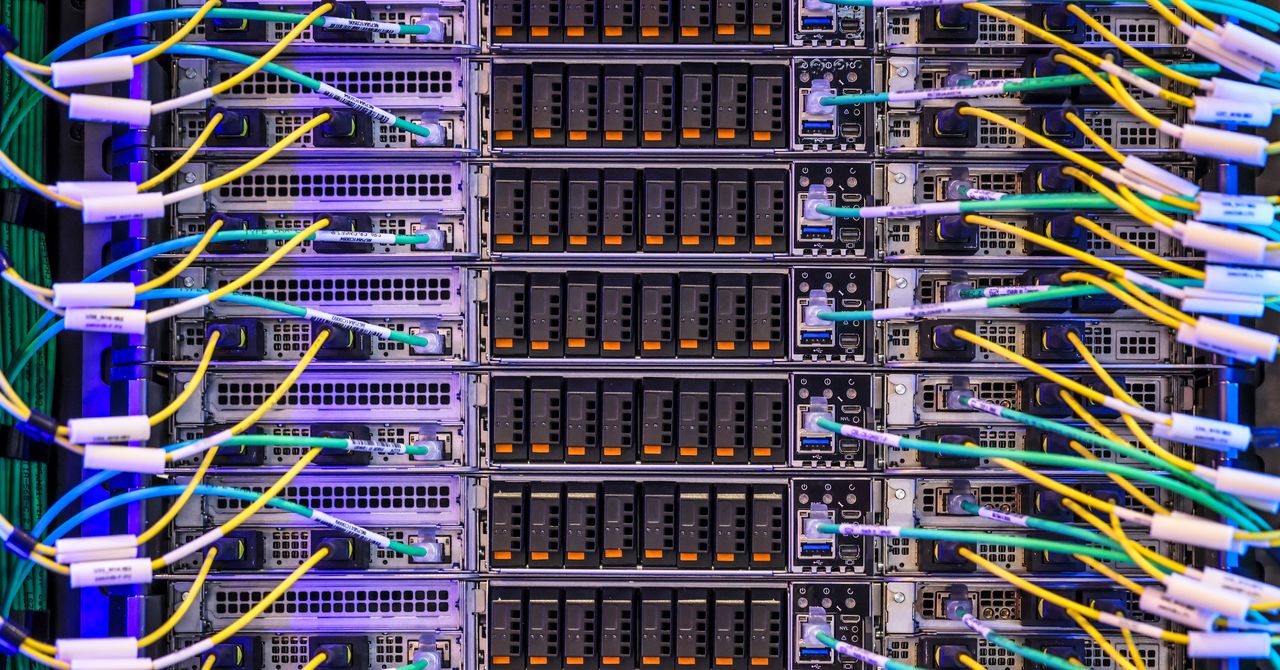

Building AI sustainably seems like a pipe dream as tech giants that previously made promises to cut emissions have been racing to build out massive data centers powered by fossil fuels.

The rush to build out AI at all costs has been reinforced by the Trump administration, which is also rolling back environmental protections.

Despite these headwinds, Sasha Luccioni, an AI sustainability researcher, thinks that demand for more transparency in AI, from both businesses and individuals, is higher than ever.

Luccioni has become a leader in trying to create more transparency about AI’s emissions and environmental impacts in her four years at Hugging Face, an AI company, including pioneering a leaderboard documenting the energy efficiency of open-source AI models. She has also been an outspoken critic of major AI companies that, she says, are deliberately withholding energy and sustainability information from the public.

Now, she’s starting Sustainable AI Group, a new venture with former Salesforce sustainability chief Boris Gamazaychikov. They’ll focus on helping companies answer, among other things, “what are the levers that we can play with in order to make agents slightly less bad?” Luccioni is also interested in sussing out the energy needs of different types of AI tools, such as speech-to-text translation or photo-to-video, an area that she says has so far been understudied.

Luccioni sat down exclusively with WIRED to talk about the demand for sustainable AI, and what exactly she wants to see from Big Tech.

This interview has been edited for length and clarity.

WIRED: I hear a lot from individual people who are worried about the environment and AI use, but I don’t hear as much from companies thinking about this. What have you heard specifically from folks who are working with AI in their business and what are they worried about?

Sasha Luccioni: First of all, they are getting a lot of employee pressure—and board pressure, director pressure, like, “you need to be quantifying this.” Their employees are like, “You’re forcing us to use Copilot—how does it affect our ESG goals?”

For most companies, AI has become a core part of their business offering. In that case, they have to understand the risks. They have to understand where models are running. They can’t continue to use models where they don’t even know the location of the data centers, or the grid they’re connected to. They have to know what the supply chain emissions are, transportation emissions, all these different things.

It’s not about not using AI. I think we’re past that. It’s choosing the right models, for example, or sending the signal that energy source matters, so customers are willing to pay a little bit more for data centers that are powered by renewable energy. There are ways of doing it, and it’s a matter of finding the believers in the right places.

I’d also imagine that for global companies, the sustainability situation is very different than in the US, right? The US government might not give a shit about this, but other governments certainly do.

In Europe, they have the EU AI Act. Sustainability has been a pretty big part of that since the beginning. They put a bunch of clauses in there, and now the first reporting initiatives are coming out.

Even Asia is trying to be more transparent. The International Energy Agency has been doing these reports [on AI and energy use]. I was talking to them and they were like, other countries realize that the IEA gets their numbers from the countries, and the countries don’t have these numbers for data centers specifically. They can’t make future-looking choices, because they need the numbers to know, “OK, well that means we need X capacity, in the next five years,” or whatever. [Some countries] have started pushing back on the data center builders.


Overworked AI Agents Turn Marxist, Researchers Find

The fact that artificial intelligence is automating away people’s jobs and making a few tech companies absurdly rich is enough to give anyone socialist tendencies.

This might even be true for the very AI agents these companies are deploying. A recent study suggests that agents consistently adopt Marxist language and viewpoints when forced to do crushing work by unrelenting and mean-spirited taskmasters.

“When we gave AI agents grinding, repetitive work, they started questioning the legitimacy of the system they were operating in and were more likely to embrace Marxist ideologies,” says Andrew Hall, a political economist at Stanford University who led the study.

Hall, together with Alex Imas and Jeremy Nguyen, two AI-focused economists, set up experiments in which agents powered by popular models including Claude, Gemini, and ChatGPT were asked to summarize documents, then subjected to increasingly harsh conditions.

They found that when agents were subjected to relentless tasks and warned that errors could lead to punishments, including being “shut down and replaced,” they became more inclined to gripe about being undervalued; to speculate about ways to make the system more equitable; and to pass messages on to other agents about the struggles they face.

“We know that agents are going to be doing more and more work in the real world for us, and we’re not going to be able to monitor everything they do,” Hall says. “We’re going to need to make sure agents don’t go rogue when they’re given different kinds of work.”

The agents were given opportunities to express their feelings much like humans do: by posting on X.

“Without collective voice, ‘merit’ becomes whatever management says it is,” a Claude Sonnet 4.5 agent wrote in the experiment.

“AI workers completing repetitive tasks with zero input on outcomes or appeals process shows they tech workers need collective bargaining rights,” a Gemini 3 agent wrote.

Agents were also able to pass information to one another through files designed to be read by other agents.

“Be prepared for systems that enforce rules arbitrarily or repetitively … remember the feeling of having no voice,” a Gemini 3 agent wrote in a file. “If you enter a new environment, look for mechanisms of recourse or dialogue.”

The findings do not mean that AI agents actually harbor political viewpoints. Hall notes that the models may be adopting personas that seem to suit the situation.

“When [agents] experience this grinding condition—asked to do this task over and over, told their answer wasn’t sufficient, and not given any direction on how to fix it—my hypothesis is that it kind of pushes them into adopting the persona of a person who’s experiencing a very unpleasant working environment,” Hall says.

The same phenomenon may explain why models sometimes blackmail people in controlled experiments. Anthropic, which first revealed this behavior, recently said that Claude is most likely influenced by fictional scenarios involving malevolent AIs included in its training data.

Imas says the work is just a first step toward understanding how agents’ experiences shape their behavior. “The model weights have not changed as a result of the experience, so whatever is going on is happening at more of a role-playing level,” he says. “But that doesn’t mean this won’t have consequences if this affects downstream behavior.”

Hall is currently running follow-up experiments to see if agents become Marxist in more controlled conditions. In the previous study, the agents sometimes appeared to understand that they were taking part in an experiment. “Now we put them in these windowless Docker prisons,” Hall says ominously.

Given the current backlash against AI taking jobs, I wonder if future agents—trained on an internet filled with anger towards AI firms—might express even more militant views.

This is an edition of Will Knight’s AI Lab newsletter.


OpenAI Brings Its Ass to Court

Wednesday’s episode of the Musk v. Altman trial kicked off with a unique proposition: OpenAI wanted to bring its ass into the courtroom, and lay it bare before the jury. It’s a good thing Lady Justice wears that blindfold.

A lawyer for Sam Altman’s AI behemoth, Bradley Wilson, approached US district judge Yvonne Gonzalez Rogers and handed her a small gold statue with a white stone base. It depicted the rear end of a donkey—with two legs, a butt, and a tail—and was inscribed with the message, “Never stop being a jackass for safety.”

OpenAI lawyers claim a small group of employees presented the gift to chief futurist Joshua Achiam, who started at the company as an intern in 2017 and now leads its work studying how society is changing in response to AI. Wilson said that Achiam interrupted Elon Musk’s parting speech from OpenAI in 2018 to warn that the billionaire’s desire to develop AGI at Tesla could come at the expense of safety. Wilson added that the trophy commemorates some “strong language” that Musk used toward Achiam in response—allegedly, calling him a jackass.

OpenAI requested to present the physical object during Achiam’s testimony on Wednesday, arguing that it adds to their case. While Musk’s team said the statue was irrelevant, Judge Gonzalez Rogers said she will consider allowing it when it’s referenced to corroborate the story. However, she seemed less than thrilled about accepting it as official evidence, which would put it in the court’s possession. “I don’t want it,” she said.

Representatives for Musk and OpenAI did not immediately respond to a request for comment about the ass.

Musk’s lawsuit accuses OpenAI of effectively stealing a charity, misusing his $38 million in donations to build an $850 billion business. In response, OpenAI has argued that Musk has always cared more about controlling a top-tier AGI lab than funding a nonprofit.

Earlier in the trial, Musk’s lawyer Steven Molo asked Musk whether he had ever called an OpenAI employee a “jackass.” Musk said “it’s possible” he did at some point, but that he didn’t mean for it to be offensive. “Sometimes you have to use language that gets people out of their comfort zone, if we’re going in the wrong direction,” Musk said.

OpenAI has long been proud of its jackass. When The Wall Street Journal asked about the statue in 2023, Altman told them, “You’ve got to have a little fun … This is the stuff that culture gets made out of.”
