Tech

Scammers in China Are Using AI-Generated Images to Get Refunds

I don’t want to admit it, but I did spend a lot of money online this holiday shopping season. And unsurprisingly, some of those purchases didn’t meet my expectations. A photobook I bought was damaged in transit, so I snapped a few pictures, emailed them to the merchant, and got a refund. Online shopping platforms have long depended on photos submitted by customers to confirm that refund requests are legitimate. But generative AI is now starting to break that system.

A Pinch Too Suspicious

On the Chinese social media app RedNote, WIRED found at least a dozen posts from ecommerce sellers and customer service representatives complaining about allegedly AI-generated refund claims they’ve received. In one case, a customer complained that the bed sheet they purchased was torn to pieces, but the Chinese characters on the shipping label looked like gibberish. In another, the buyer sent a picture of a coffee mug with cracks that looked like paper tears. “This is a ceramic cup, not a cardboard cup. Who could tear apart a ceramic cup into layers like this?” the seller wrote.

The merchants reported that there are a few product categories where AI-generated damage photos are being abused the most: fresh groceries, low-cost beauty products, and fragile items like ceramic cups. Sellers often don’t ask customers to return these goods before issuing a refund, making them more prone to return scams.

In November, a merchant who sells live crabs on Douyin, the Chinese version of TikTok, received a photo from a customer that made it look like most of the crabs she bought arrived already dead, while two others had escaped. The buyer even sent videos showing the dead crabs being poked by a human finger. But something was off.

“My family has farmed crabs for over 30 years. We’ve never seen a dead crab whose legs are pointing up,” Gao Jing, the seller, said in a video she later posted on Douyin. But what ultimately gave away the con was the sexes of the crabs. There were two males and four females in the first video, while the second clip had three males and three females. One of them also had nine instead of eight legs.

Gao later reported the fraud to the police, who determined the videos were indeed fabricated and detained the buyer for eight days, according to a police notice Gao shared online. The case drew widespread attention on Chinese social media, in part because it was the first known AI refund scam of its kind to trigger a regulatory response.

Lowering Barriers

This problem isn’t unique to China. Forter, a New York-based fraud detection company, estimates that AI-doctored images used in refund claims have increased by more than 15 percent since the start of the year, and are continuing to rise globally.

“This trend started in mid-2024, but has accelerated over the past year as image-generation tools have become widely accessible and incredibly easy to use,” says Michael Reitblat, CEO and cofounder of Forter. He adds that the AI doesn’t have to get everything right, as frontline retail workers and refund review teams may not have the time to closely scrutinize each picture.

Enjoy up to 60% Off With eBay Coupons in April 2026

Long before we had Amazon, Facebook Marketplace, or thousands of other online retailers, we had eBay. And now, we have an eBay coupon to help you save on basics like vacuums and phones, and even on your most niche needs, because eBay has everything from haunted objects to ironic landline phones to retro gaming consoles. One of the first and most enduring online shopping platforms, eBay has stood the test of time, providing us with the old-school feel of estate sales, complete with bidding wars and gently used items of quite literally every type.

Save up to 60% on Your Next Purchase at eBay

eBay has rotating deals, like 20% off up-and-coming brands, so be sure to check their page often to know which deals are next. They have huge savings on essentials, like Dyson vacuums, an enduring titan in the home cleaning realm. There are also discounts on like-new refurbished Apple MacBooks and iPads, so you can work or study for much less. The deals aren’t limited to office tech, either: kitchen essentials like the forever-popular KitchenAid Stand Mixer are discounted too. eBay has deals on everything from clothing and jewelry to power tools, so check eBay’s deals page often.

How to Use an eBay Coupon (If You Have One Handy)

Once you’ve perused the nearly endless options of items on eBay, here’s how you can redeem the eBay discount code or offer at checkout: first, make sure your code isn’t expired (I know it sounds like a no-brainer, but you don’t want to be disappointed when that dreaded ‘invalid’ pop-up comes on the screen). Enter the code in the ‘Add coupons’ section, or check the box if the coupon is displayed. When you select ‘apply,’ you should see the discounted total, and then you’ll be prompted to pay.

Save More With Free Shipping

Once you find the special item of your dreams, go to the “shipping and pickup” search filter and check the “free shipping” box to get free shipping. eBay offers free shipping on a multitude of items like motor parts, books, golf clubs, Pokemon cards, haunted objects, tech, and virtually anything else you can imagine.

Shop These Rotating eBay Deals

Beyond its rotating deals, eBay also has spotlighted, trending, and featured deals for huge savings on a myriad of products like auto parts, golf clubs, shoes, and more. eBay has a money-back guarantee to ensure you get the item you ordered or you get your money back.

Shop With eBay Mastercard to Get More Rewards

Have you heard of the eBay Mastercard? I hadn’t either, but if you’re a collector or frequent eBay shopper, an eBay Mastercard is a smart way to save on purchases you were already planning to make. You’ll earn three times the points per $1 spent on eBay until you’ve spent $1,000 there in a calendar year. After that, you’ll earn five times the points on eBay for the rest of the year. You can also earn twice the points per $1 spent on gas, restaurants, and groceries, and one point per $1 spent on all other Mastercard purchases.
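To see how those tiers add up over a year, here is a rough sketch in Python. It assumes the base rate is one point per $1, that the $1,000 threshold counts only eBay spending, and that points are not rounded. The function name `estimate_points` and its parameters are made up for illustration, and the card’s actual terms may differ.

```python
def estimate_points(ebay_spend, gas_dining_grocery_spend=0.0, other_spend=0.0):
    """Estimate points earned in one calendar year (illustrative only).

    Assumes 1 point per $1 as the base rate, so "three times the points"
    means 3 points per $1. Real card terms may differ.
    """
    threshold = 1000.0
    # eBay spending up to the $1,000 threshold earns 3 points per $1.
    first_tier = min(ebay_spend, threshold)
    # eBay spending beyond the threshold earns 5 points per $1.
    second_tier = max(ebay_spend - threshold, 0.0)
    return (
        3 * first_tier
        + 5 * second_tier
        + 2 * gas_dining_grocery_spend  # gas, restaurants, groceries
        + 1 * other_spend               # all other Mastercard purchases
    )


if __name__ == "__main__":
    # $1,500 on eBay, $200 on gas/dining/groceries, $300 elsewhere
    print(estimate_points(1500, 200, 300))
```

Under these assumptions, the first $1,000 on eBay contributes 3,000 points and the next $500 contributes 2,500, so most of the benefit goes to shoppers who spend well past the threshold.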

Get Daily Deals With the eBay App

If you’re someone who shops or sells on eBay often, I’d suggest downloading the eBay app for even more perks. The eBay mobile app makes it easy to find the best rotating deals on various items and to access the hottest deals and discounts of the day before they expire. Through the app, you can browse everything from trendy items to power tools to tech gadgets, and then choose whatever price looks best. There are also app-only discounts and special offers exclusively for eBay app users. Plus, eBay will help you figure out the best time to buy, with price notifications that let you know when a price has dropped.

This Windows Laptop Makes the MacBook Neo Look Overpriced

The MacBook Neo made quite a splash when it landed in March. $599 for a MacBook felt groundbreaking, and it was easy for casual onlookers to declare that Windows laptops had no true answer to it.

But what if I told you there was a Windows option that was better in almost every way? That’s the HP OmniBook 5, a laptop you’ve probably never heard of unless you watch the space closely. I’ve been recommending it ever since I tested it last month. The price has been fluctuating, but more often than not, the 14-inch model was selling for $500. You read that right: $500. Today, the cheapest, most consistent price you’ll find it for is $730 over at Walmart, but I’ve seen HP frequently drop the price from $1,050 down to around $500.

And just take a look at what you get for the price, because it’s absolutely stacked. It comes with 16 GB of RAM and 512 GB of storage, double what you get on the $599 MacBook Neo. There’s a 16-inch version as well, if you like the idea of having a bit more screen real estate to work with.

The HP OmniBook 5 is powered by the Qualcomm Snapdragon X, a highly efficient chip that gets great, all-day battery life that’s at least on par with the MacBook Neo. If you haven’t used a Windows laptop in a few years and still think they can’t compete with MacBooks in battery life, you’re sorely mistaken.

The 16 GB of memory on the OmniBook 5 is particularly important to note, as it’s one of the big points of contention with the MacBook Neo. Being stuck at 8 GB in 2026 feels cruel on principle, and while testing it I was able to load up the MacBook Neo and easily find its breaking point. The 16 GB of memory on the HP OmniBook 5 is enough that you’ll never have to worry about how many tabs, applications, installations, or downloads you have going simultaneously. Combined with the better multicore performance of the Snapdragon X, it enables a kind of freedom that lets you forget about the hardware and focus on the task at hand. Don’t get me wrong—the MacBook Neo has its place, but calling it the undisputed king of budget laptops just isn’t right.

The HP OmniBook 5 Is Only $500

Now, I know what you’re thinking. Specs and performance don’t tell the whole story, and Apple has never been known for offering tons of specs for cheap. But the OmniBook 5 14 is also an attractive design in a highly portable package. At 0.5 inches, it’s exactly the same thickness as the MacBook Neo and right around the same weight too. Does the MacBook Neo have a bit more style and personality? Absolutely—especially if you fancy one of the bolder color options. But I’d say the OmniBook 5 is a very pretty laptop in its own right. It’s also made of aluminum, sturdy and well-built in your hands. The hinge is balanced nicely, allowing you to open the lid with one finger. It doesn’t feel cheap.

The 10 Best TV Shows to Stream This Month

After years of suffering in silence with her trauma, Vega eventually called out her attacker in one of the most public forums in existence: Facebook. Within just a few days, she was contacted by eight other women, most of them also American college students studying abroad, with eerily similar stories of their own encounters with Vela, who was known to many as “Manu.” This three-part docuseries traces how Vega found the courage to stand up to her attacker and how the far-reaching power of social media can be used for more than just sharing memes and family photos. Ultimately, Vega’s efforts led authorities to determine that Manu had assaulted between 50 and 100 young women.

Star Wars: Maul—Shadow Lord

From The Mandalorian to Skeleton Crew, Disney+ has produced a dozen Star Wars TV shows since its streaming debut, and fans are always clamoring for more. This month, that means the premiere of Star Wars: Maul—Shadow Lord, a gritty, animated series for adults that is set after the events of the universe’s famous Clone Wars and told from the perspective of Maul, one of the space opera’s most notorious supervillains. But it unfolds more like a crime drama, as it follows Maul’s rogue attempts to use his Sith skills to rebuild his Shadow Collective, a massive crime syndicate composed of Sith leaders, Mandalorian warriors, bounty hunters, and more, all united by the goal of usurping Darth Sidious and destroying his Sith Order. IYKYK.

The Testaments

The Handmaid’s Tale marked a watershed moment for Hulu when, in 2017, it became the first streaming series to nab the Emmy for Outstanding Drama Series, solidifying the streamer’s reputation as a bona fide player. As that groundbreaking series signed off in 2025 after six seasons, it’s hardly surprising that Hulu would want to keep Margaret Atwood’s dystopian world alive, so now we have The Testaments. Set 15 years after the events of the original series, the new show largely takes place at an elite prep school for young women learning to be the dutiful wives of the next wave of Commanders. Aunt Lydia (Ann Dowd) returns to terrify a new generation of young women, including Agnes (One Battle After Another’s breakout star Chase Infiniti), a pious young woman who is beginning to question the rules she has grown up obeying, and Daisy (Lucy Halliday), a Canadian teen and recent Gilead convert, all of whom have secrets they’re keeping.

Kara Swisher Wants to Live Forever

“There’s so much bad information that the good information gets drowned.” That’s the central thesis behind famed tech journalist Kara Swisher’s decision to dive headfirst into the science (and scams) of longevity—a multibillion-dollar industry that shows no signs of slowing—in this six-episode docuseries. Armed with her investigative skills and famously dry wit, Swisher talks to the brains behind brands promising wellness acolytes longer lives with everything from gene editing and AI-driven medical care to bleeding-edge anti-aging treatments. OpenAI CEO Sam Altman, outspoken “biohacker” Bryan Johnson, nepo baby venture capitalist Reed Jobs, and Nobel Prize–winning biochemist Jennifer Doudna are among those who help Swisher separate fact from fiction in the quest to live forever.

Margo’s Got Money Troubles

Margo Millet (Elle Fanning) is a clever, ambitious young woman with her whole life in front of her—until an affair with her English professor leaves her pregnant and suddenly thrust into adulthood. With mounting bills and limited options to gain real income, Margo ultimately turns to OnlyFans, where she quickly gains a large and lucrative following—and the judgment that comes along with that. Based on Rufi Thorpe’s bestselling 2024 novel, this dark dramedy cleverly uses its setup to challenge the many still-existing stigmas surrounding sex work and even single motherhood. While Fanning is the undoubted star, she is ably supported by an A-list team of costars, including Michelle Pfeiffer as her mom and former Hooters waitress Shyanne, and Nick Offerman as her dad Jinx, a former pro wrestler.

This Is a Gardening Show

First he was Between Two Ferns, now he’s got his own DIY gardening series. Emmy-winning actor-comedian Zach Galifianakis brings his absurdist comedy to this hilarious docuseries, which is (mostly) as earnest as it is funny. Each episode introduces viewers to a new group of gardeners. While it’s largely aimed at laughs, there’s also a real exploration of the many reasons why people choose to garden, which often leads to very real and important questions about mental health, sustainability, the disconnection many people feel in the modern world, the many flaws in our current “perverse” (Galifianakis’ word) food production system, and what that might mean for future generations. Appropriately, the series debuts on Earth Day (April 22).

Stranger Things: Tales From ’85

Much like Hulu wasn’t about to say goodbye entirely to The Handmaid’s Tale, just because Stranger Things said goodbye on New Year’s Eve doesn’t mean the gang from Hawkins, Indiana, is totally parting ways with Netflix. In this animated spinoff, the kids—Eleven, Mike, Will, Dustin, Lucas, and Max—are going back in time slightly, to 1985, where the friends are desperately trying to reacquaint themselves with “normal” life after their terrifying dealings with the Upside Down. But they soon realize that something is still amiss in Hawkins, and they quickly find themselves embroiled in yet another paranormal adventure. Much like the nostalgia-fueled live-action series, the animated show is meant to be reminiscent of the Saturday morning cartoons that were a staple of every ’80s kid’s pop culture diet. Notably, the show is also being heavily promoted as a more family-friendly entry in the series—meaning monsters for all. All 10 episodes will drop on April 23.

Buffy the Vampire Slayer

Buffy the Vampire Slayer is officially dead—at least for now. In mid-March, Sarah Michelle Gellar announced via Instagram that Hulu had put a stake through the heart of the long-awaited Buffy reboot, which would see the ’90s icon reprise her role as the vampire world’s biggest headache. But just because there presumably won’t be new episodes to enjoy doesn’t mean you can’t revisit the beloved original series.

-

Fashion1 week ago

Fashion1 week agoIndia’s exports face reset as EU links trade to carbon metrics: EY

-

Entertainment1 week ago

Entertainment1 week agoQueen Elizabeth II emotional message for Archie, Lilibet sparks speculation

-

Entertainment1 week ago

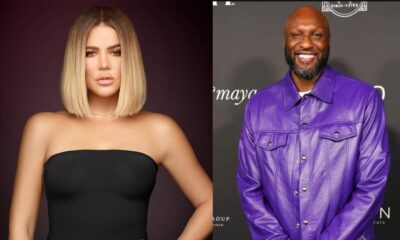

Entertainment1 week agoLamar Odom shocking response to Khloé Kardashian account of his overdose

-

Tech7 days ago

Tech7 days agoAs the Strait of Hormuz Reopens, Global Shipping Will Take Months to Recover

-

Tech7 days ago

Tech7 days agoAzure customers up in arms over ‘full’ UK South region | Computer Weekly

-

Fashion1 week ago

Fashion1 week agoCII submits 20-pt agenda to Indian govt to back firms hit by Iran war

-

Tech6 days ago

Tech6 days agoThis AI Button Wearable From Ex-Apple Engineers Looks Like an iPod Shuffle

-

Tech1 week ago

Tech1 week agoA Single Strike Won’t Shut Off the Gulf’s Desalination System