Tech

The DOGE Subcommittee Hearing on Weather Modification Was a Nest of Conspiracy Theorizing

The popularity of these conspiracies may also be on the rise in right-wing spaces. Some MAHA figureheads, including Nicole Shanahan, have shared geoengineering content promoting conspiracy theories, while Marla Maples, Donald Trump’s ex-wife, told Fox News in July that she helped Florida’s anti-weather modification bill pass. (Bill Gates’ track record of funding solar geoengineering research has undoubtedly helped fan some of these flames.)

Doricko, the Rainmaker CEO, has spent much of the past year testifying in state legislatures that were considering vague anti-geoengineering bills that would have also banned cloud seeding. In May, he told WIRED that he and his team had spoken in front of 31 state legislatures. Education, he says, is key to getting people on board with the technology.

“I think there’s some cohort of people that believe that, you know, Joe Biden is actually a lizard person,” he says. “I think that a lot of people aren’t quite that far along, but are very concerned about chemtrails, probably. Showing them farms that are greener than they otherwise would have been with testimonies from those farmers—that’s probably the way that we’re gonna win hearts and minds.” (Doricko told WIRED last week that in recent months, his company has had “interest, curiosity, and excitement” from various state governments, both Democratic and Republican, in using cloud seeding to enhance water supply. “The education that we had the opportunity to do ultimately I think assuaged a lot of reasonable people’s concerns.”)

There is one additional type of human-caused shift in the world’s weather that played an outsize role in the hearing: climate change. Greene and other Republican lawmakers repeated many climate denial talking points and bad framing around climate science, including the idea that carbon dioxide is good for the planet because it is plant food. There were multiple mentions of beach houses owned by Barack Obama and Al Gore as a way of illustrating supposed hypocrisy about sea level rise. One of the witnesses called by the House majority works at an organization with a long history of questioning established climate science; he claimed in his testimony that there is “uncertainty as to exactly how much influence humans have exerted” over the global rise in temperature—a take that is out of line with mainstream science.

“My view is that this is mainly a way of saying there are secret forces at work that are making your life miserable, and everything bad is due to these secret forces,” says Dessler. “When in reality, it’s not secret forces, it’s climate change and it’s these other things that are hurting people.”

But even a whole hearing dedicated to a conspiracy theory grab bag may not be enough for some. On X, a popular anti-geoengineering community was alight with posts about the hearing—including many critical of the experts and their findings. “This was a scripted show to protect the government’s weather control agenda,” one moderator’s post reads. “Why no independent voices?”

Tech

Amazon’s Spring Sale Is So-So, but Cadence Capsules Are a Bright Spot

The WIRED Reviews Team has been covering Amazon’s Big Spring Sale since it began on Wednesday, and the overall deals have been … not great, honestly. So far, we’ve found decent markdowns on vacuums, smart bird feeders, and even an air fryer we love, but I just saw that Cadence Capsules, those colorful magnetic containers you may have seen on your social media pages, are 20 percent off. (For reference, the last time I saw them on sale, they were a measly 9 percent off.)

If you’re not familiar, they let you decant the full-sized personal care products you use at home (anything from shampoo and sunscreen to serums and pills) into a labeled, modular system of hexagonal containers that are leak-proof, dishwasher safe, and stick together magnetically in your bag or on a countertop. No more jumbled, travel-sized toiletries and leaky, mismatched bottles and tubes.

Cadence Capsules have garnered some grumbling online for being overly heavy or leaking, but I’ve been using them regularly for about a year—I discuss decanting your daily-use products in my guide to How to Pack Your Beauty Routine for Travel—and haven’t experienced any leaks. They do add weight if you’re trying to travel super-light, and because they’re magnetic, they will also stick to other metal items in your toiletry bag, like bobby pins or other hair accessories. This can be annoying, especially if you’re already feeling chaotic or in a hurry.

Otherwise, Capsules are modular, convenient, and make you feel supremely organized—magnetic, interchangeable inserts for the lids come with permanent labels like “shampoo,” “conditioner,” “cleanser,” and “moisturizer.” Maybe you love this; maybe you don’t. But at least if you buy on Amazon, you can choose which label genre you get (Haircare, Bodycare, Skincare, Daily Routine). If this just isn’t your jam, the Cadence website offers a set of seven that allows you to customize the color and lid label of each Capsule, but that set is not currently on sale.

Tech

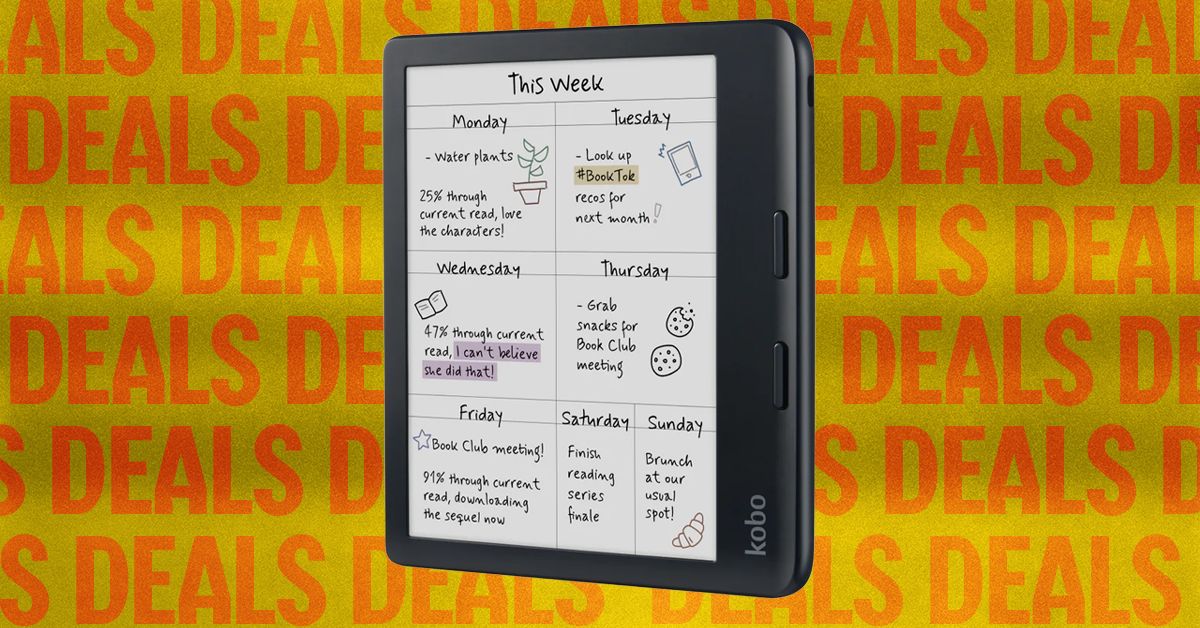

Fellow Readers, Don’t Miss These E-Reader Sales

This is the older Kindle Scribe, but the price and features are the best you’ll get, especially when it’s on sale like this. I still reach for this model even though I have the newer third generation, and keep in mind the second generation will also get some of the newer software and experiences over time. With the sale, it’s half the price of the newer model.

If you’re already a Kindle reader and looking to upgrade, it’s likely because you want a new feature like a color screen. While the Kobo above is the better buy, if you want to stay in the Kindle ecosystem but add some color to your books, both the Colorsoft and Colorsoft Signature are on sale.

If you’re looking to spend as little as possible, the basic Kindle (11th generation) is still a great e-reader and is currently under $100. It can do almost everything the other Kindles can (except the Scribe) on a snappy black-and-white screen. It doesn’t have a warm front light either, but it’s still a great purchase for the price.


Tech

This Speaker I Tried From Soundboks Can Handle a Real Party

In addition to the rubber balls, there’s a nice physical interface on the side for adjusting volume and pairing multiple Mix speakers together if you have multiple on hand (I was only sent the single mono speaker). Setup involves installing the Soundboks app, pairing to the speaker via Bluetooth on your phone, and picking whatever you want to play. It’s all quick and painless, especially for my first-time pairing with a Samsung Galaxy S24 Ultra.

Otherwise, it’s all very pro audio. Everything reminds me very much of the Peavey PA system I have in my music rehearsal space. The top of the speaker features a built-in carrying handle and a place for a strap (an accessory you have to buy aftermarket, or you can fasten it with any strap you have that fits through the hole). There are also top-hat mounts for the speakers to slide onto traditional PA pole stands, if you wanted to use them in that way at a party or event.

The grill is replaceable, as is the massive internal battery, which means that these things are pretty much indestructible as long as the amp and speakers themselves still work—the battery is the weak point of most portable speakers in 2026.

I bounced it around my yard, dropped it off my patio, and generally beat the crap out of it during my two-week testing period, and the thing just needed a little wipe down and a charge when it ran out of juice. The claimed 40 hours of battery at reasonable volume is accurate, but you’ll get about eight hours at max volume (which is very good for the category). If you need to bring some walk-out music to your kid’s all-day Little League tournament, this is a great way to go.

Big Sound

Photograph: Parker Hall

Soundboks calls this speaker midsize, but at 21.4 pounds and the size of a medium-size cooler, I’d still call it a large speaker. That said, the size doesn’t make it any less portable than competitors from JBL and others; you still need a car or cargo ebike to take one of these with you, so what’s a couple inches here or there? The fact that this is a rectangle actually makes it easier to strap down than many others, especially with the holes for the strap and the built-in handle to tie down through.
