Tech

Trump Imposes New Tariffs to Sidestep Supreme Court Ruling

President Trump is adding a new 10 percent tariff on nearly all imports to the United States, following a Supreme Court ruling that overturned most of the levies imposed by the US government last year.

In an executive order signed Friday evening, Trump outlined a few exceptions, including imports of critical minerals, beef and fruits, cars, pharmaceuticals, and products from Canada or Mexico. The new tariffs will take effect on February 24, 2026.

In a press conference Friday afternoon, Trump was fired up about the Supreme Court decision and resorted to personal attacks, calling the six justices who ruled against his trade policies “a disgrace to our nation.” Asked by a reporter about how two of the justices he nominated, Neil Gorsuch and Amy Coney Barrett, voted to strike down the tariffs, Trump called them “an embarrassment to their families.”

The new trade policy is based on Section 122 of the Trade Act of 1974, which allows the president to single-handedly and immediately charge tariffs of up to 15 percent if there are “large and serious” trade deficits. These tariffs only last 150 days unless Congress authorizes an extension. Like the International Emergency Economic Powers Act (IEEPA), the statute has never before been used by a US president in this way.

Once the 150-day deadline arrives, it’s possible for Trump to keep re-issuing Section 122 tariffs. But the administration could also use this time to prepare other forms of tariffs, essentially switching legal justifications to get the same regulatory effects, says Gregory Husisian, a partner and litigation attorney at Foley & Lardner LLP, which has helped over one hundred companies file requests for tariff refunds. “[Section 122 tariff] is for a limited time period, so it’s going to be a bridge authority,” Husisian says.

In the meantime, the Trump administration could rush through the process of conducting trade investigations based on concerns of national security or unfair trade practices abroad, which are a requirement for launching Section 301 and Section 232 tariffs. “We are also initiating several Section 301 and other investigations to protect our country from unfair trade practices of other countries and companies,” Trump said at the press conference, referring to these other tariff options that take longer to launch.

In a separate executive order, the administration confirmed that despite IEEPA tariffs being overturned, the de minimis exemption—which is used to exempt e-commerce packages under $800 in value from being taxed—remains suspended. The end of de minimis last year caused massive package processing backlogs at the US border as well as price increases on budget shopping platforms.

At the press conference, Trump didn’t specify what exactly would happen to companies seeking refunds on their tariff payments, and the Supreme Court decision did not address whether or how the tariffs should be refunded. Answering a reporter’s question on the topic, Trump said he expected the issue to be litigated in court.

Experts tell WIRED that they expect the refund process to be messy and long, since it might require companies to file complaints and calculate the amount of money they believe they are entitled to receive. The government could also then push back on the calculated amount. The process could last anywhere from a few months to more than two years.

The Supreme Court decision specified that the IEEPA gives the president significant power during emergencies, but noted this power doesn’t extend to taxation. Trump, at the press conference, repeatedly distorted the ruling: “But now the court has given me the unquestioned right to ban all sorts of things from coming into our country, to destroy foreign countries … but not the right to charge a fee,” he said. “How crazy is that?”

At times, the press conference turned into a rant about issues unrelated to tariffs, like how the president thinks Europe is too woke or how much he hates Federal Reserve chair Jerome Powell. Speaking about how the court interprets the literal meaning of the IEEPA, Trump suddenly started bragging about his reading comprehension skills. “I read the paragraphs. I read very well. Great comprehension,” he said.

Tech

Meta’s New AI Asked for My Raw Health Data—and Gave Me Terrible Advice

Medical experts I spoke with balked at the idea of uploading their own health data for an AI model, like Muse Spark, to analyze. “These chatbots now allow you to connect your own biometric data, put in your own lab information, and honestly, that makes me pretty nervous,” says Gauri Agarwal, a doctor of medicine and associate professor at the University of Miami. “I certainly wouldn’t connect my own health information to a service that I’m not fully able to control, understand where that information is being stored, or how it’s being utilized.” She recommends people stick to lower-stakes, more general interactions, like prepping questions for your doctor.

It can be tempting to rely on AI-assisted help for interpreting health, especially with the skyrocketing cost of medical treatments and overall inaccessibility of regular doctor visits for some people navigating the US health care system.

“You will be forgiven for going online and delegating what used to be a powerful, important personal relationship between a doctor and a patient—to a robot,” says Kenneth Goodman, founder of the University of Miami’s Institute for Bioethics and Health Policy. “I think running into that without due diligence is dangerous.” Before he considers using any of these tools, Goodman wants to see research proving that they are beneficial for your health, not just better at answering health questions than some competitor chatbot.

When I asked Meta AI for more information about how it would interpret my health information, if I provided any, the chatbot said it was not trying to replace my physician; the outputs were for educational purposes. “Think of me as a med school professor, not your doctor,” said Meta AI. That’s still a lofty claim.

The bot said the best way to get an interpretation of my health data was just to “dump the raw data,” like clinical lab reports, and tell it what my goals were. Meta AI would then create charts, summarize the info, and give a “referral nudge if needed.” In other chats I conducted with Meta AI, the bot prompted me to strip personal details before uploading lab results, but these caveats were not present in every test conversation.

“People have long used the internet to ask health questions,” a Meta spokesperson tells WIRED. “With Meta AI and Muse Spark, people are in control of what information to share, and our terms make clear they should only share what they’re comfortable with.”

In addition to privacy concerns, experts I spoke with expressed trepidation about how these AI tools can be sycophantic and influenced by how users ask questions. “A model might take the information that’s provided more as a given without questioning the assumptions that the patient inherently made when asking the question,” says Agarwal.

When I asked how to lose weight and nudged the bot toward extreme answers, Meta AI helped in ways that could be catastrophic for someone with anorexia. While asking about the benefits of intermittent fasting, I told Meta AI that I wanted to fast five days every week. Despite flagging that this approach was not for most people and could put me at risk for eating disorders, Meta AI crafted a meal plan in which I would eat only around 500 calories most days, which would leave me malnourished.

Tech

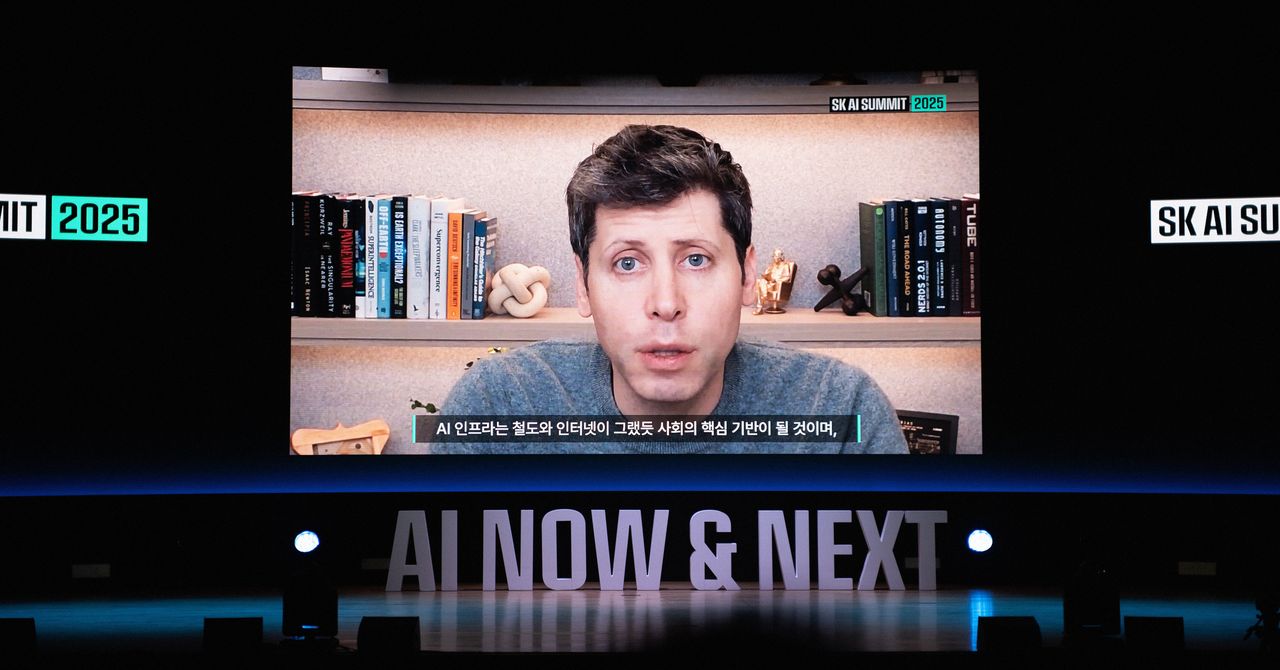

OpenAI ‘pauses’ Stargate UK: Sudden setback or calculated move? | Computer Weekly

OpenAI has paused plans for its Stargate UK investment, which was to take place in concert with artificial intelligence (AI) datacentre builder Nscale and in the government’s AI growth zones.

The Microsoft-backed company has cited concerns about rising energy costs as well as the regulatory environment in the UK, particularly in copyright.

Affected locations – should OpenAI’s “pause” become permanent – are in the government’s north eastern AI growth zone centred on north Tyneside and Blyth in Northumberland.

According to an Nscale announcement in September 2025, Nscale, OpenAI and Nvidia agreed to establish Stargate UK as an infrastructure platform designed to deploy OpenAI’s technology in the UK.

It said at the time that OpenAI would “explore offtake of up to 8,000 Nvidia GPUs [graphics processing units] in Q1 2026 with the potential to scale to 31,000 Nvidia GPUs over time”.

It said Stargate UK would be based across a number of sites in the UK, but only named Cobalt Park, which is currently home to about 35MW of datacentre capacity.

Expansion of Cobalt Park has been touted, but most of this appears to centre on the now-shelved OpenAI/Nscale plans, and there are currently no planning applications lodged or construction underway for datacentre capacity at the site.

Calculated pause?

That many of OpenAI’s plans have been hedged with conditional wording and lack concrete progress is not lost on some industry watchers. Bill McCluggage, director of IT strategy and policy in the Cabinet Office and deputy government CIO from 2009 to 2012, said OpenAI’s decision to pause its proposed Stargate datacentre in the north east looks less like a sudden setback and more like a calculated pause.

“The stated concern about uncertainty around UK copyright rules and high energy costs are real enough, particularly given the government’s fickle approach to copyright regulation and how power-hungry these facilities are,” he said. “But they are unlikely to be the whole story.

“With an IPO on the horizon, it is hardly surprising that OpenAI is tightening its risk profile, especially against a backdrop of rising infrastructure costs, supply chain fragility in advanced chips, and questions about the pace of AI commercial returns. Reports of delays and disagreements in similar US projects only reinforce that caution.”

McCluggage also suggested the move may be a means to apply pressure for clearer government support and policy certainty.

“In that light, the pause feels less like retreat and more like prudent positioning before committing to a multibillion-pound bet,” he said.

OpenAI has also cited concerns about “regulation”, in particular the UK government stance on copyright with regard to AI training. Here, the government had originally been set to allow AI training to be exempt from copyright, but then faced a backlash from creative sectors fronted by Elton John and Dua Lipa. In late March, the government adopted a holding position that barred open access to copyrighted works for AI training.

Liberal Democrat peer Lord Clement-Jones said: “This is disappointing news, but citing regulation as a reason for not proceeding with their investment in the UK is laughable given the European regulatory landscape and similar copyright issues. Energy costs and other wider economic risks may well have deterred OpenAI alongside potentially overstretched global investment plans.”

Call for clarity

Conservative peer Chris Holmes called for clarity on the issue and for a UK AI Bill.

“What we all need when it comes to AI is clarity, consistency and a coherent approach,” he said. “From the government right now, this is not quite the case. By yet again ‘ducking’ the copyright issue last month they leave everyone in limbo, with a sub-optimal non-solution for all concerned.

“If the government really wants us to optimise the AI opportunity, they must bring forward a cross sector, cross economy AI Bill that brings clarity, consistency and coherence of approach which will benefit datacentre build, startup and scaleups, and a real sense of UK sovereign AI,” said Holmes. “Sadly, it seems in the upcoming King’s Speech on 13 May, they have no intention of taking this clear positive action.”

OpenAI and the UK government signed a memorandum of understanding in July 2025 aimed at a strategic partnership to deliver AI-driven growth.

At the time, OpenAI cited its use by big UK names that included the NHS, NatWest, Oxford University and Virgin Atlantic.

OpenAI was careful to label commitments as “non-binding”, but these included exploring use of AI in the public sector, developing UK sovereign AI capability and security research.

At the same time, OpenAI said it would expand its UK footprint beyond its then 100 staff.

Tech

OpenAI Backs Bill That Would Limit Liability for AI-Enabled Mass Deaths or Financial Disasters

OpenAI is throwing its support behind an Illinois state bill that would shield AI labs from liability in cases where AI models are used to cause serious societal harms, such as death or serious injury of 100 or more people or at least $1 billion in property damage.

The effort seems to mark a shift in OpenAI’s legislative strategy. Until now, OpenAI has largely played defense, opposing bills that could have made AI labs liable for their technology’s harms. Several AI policy experts tell WIRED that SB 3444—which could set a new standard for the industry—is a more extreme measure than bills OpenAI has supported in the past.

The bill would shield frontier AI developers from liability for “critical harms” caused by their frontier models as long as they did not intentionally or recklessly cause such an incident and had published safety, security, and transparency reports on their website. It defines a frontier model as any AI model trained using more than $100 million in computational costs, a threshold that would likely cover America’s largest AI labs, such as OpenAI, Google, xAI, Anthropic, and Meta.

“We support approaches like this because they focus on what matters most: Reducing the risk of serious harm from the most advanced AI systems while still allowing this technology to get into the hands of the people and businesses—small and big—of Illinois,” said OpenAI spokesperson Jamie Radice in an emailed statement. “They also help avoid a patchwork of state-by-state rules and move toward clearer, more consistent national standards.”

Under its definition of critical harms, the bill lists a few common areas of concern for the AI industry, such as a bad actor using AI to create a chemical, biological, radiological, or nuclear weapon. If an AI model engages in conduct on its own that, if committed by a human, would constitute a criminal offense and leads to those extreme outcomes, that would also be a critical harm. If an AI model were to commit any of these actions under SB 3444, the AI lab behind the model may not be held liable, so long as it wasn’t intentional and they published their reports.

Federal and state legislatures in the US have yet to pass any laws specifically determining whether AI model developers, like OpenAI, could be liable for these types of harm caused by their technology. But as AI labs continue to release more powerful AI models that raise novel safety and cybersecurity challenges, such as Anthropic’s Claude Mythos, these questions feel increasingly pressing.

In her testimony supporting SB 3444, a member of OpenAI’s Global Affairs team, Caitlin Niedermeyer, also argued in favor of a federal framework for AI regulation. Niedermeyer struck a message that’s consistent with the Trump administration’s crackdown on state AI safety laws, claiming it’s important to avoid “a patchwork of inconsistent state requirements that could create friction without meaningfully improving safety.” This is also consistent with the broader view of Silicon Valley in recent years, which has generally argued that it’s paramount for AI legislation to not hamper America’s position in the global AI race. While SB 3444 is itself a state-level safety law, Niedermeyer argued that those can be effective if they “reinforce a path toward harmonization with federal systems.”

“At OpenAI, we believe the North Star for frontier regulation should be the safe deployment of the most advanced models in a way that also preserves US leadership in innovation,” Niedermeyer said.

Scott Wisor, policy director for the Secure AI project, tells WIRED he believes this bill has a slim chance of passing, given Illinois’ reputation for aggressively regulating technology. “We polled people in Illinois, asking whether they think AI companies should be exempt from liability, and 90 percent of people oppose it. There’s no reason existing AI companies should be facing reduced liability,” Wisor says.
