Tech

Using generative AI to diversify virtual training grounds for robots

Chatbots like ChatGPT and Claude have experienced a meteoric rise in usage over the past three years because they can help you with a wide range of tasks. Whether you’re writing Shakespearean sonnets, debugging code, or need an answer to an obscure trivia question, artificial intelligence systems seem to have you covered. The source of this versatility? Billions, or even trillions, of textual data points across the internet.

Those data aren’t enough to teach a robot to be a helpful household or factory assistant, though. To understand how to handle, stack, and place various arrangements of objects across diverse environments, robots need demonstrations. You can think of robot training data as a collection of how-to videos that walk the systems through each motion of a task. Collecting these demonstrations on real robots is time-consuming and not perfectly repeatable, so engineers have created training data by generating simulations with AI (which don’t often reflect real-world physics), or tediously handcrafting each digital environment from scratch.

Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and the Toyota Research Institute may have found a way to create the diverse, realistic training grounds robots need. Their “steerable scene generation” approach creates digital scenes of things like kitchens, living rooms, and restaurants that engineers can use to simulate lots of real-world interactions and scenarios. Trained on over 44 million 3D rooms filled with models of objects such as tables and plates, the tool places existing assets in new scenes, then refines each one into a physically accurate, lifelike environment.

Steerable scene generation creates these 3D worlds by “steering” a diffusion model — an AI system that generates a visual from random noise — toward a scene you’d find in everyday life. The researchers used this generative system to “in-paint” an environment, filling in particular elements throughout the scene. You can imagine a blank canvas suddenly turning into a kitchen scattered with 3D objects, which are gradually rearranged into a scene that imitates real-world physics. For example, the system ensures that a fork doesn’t pass through a bowl on a table — a common glitch in 3D graphics known as “clipping,” where models overlap or intersect.
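To make the "clipping" glitch concrete, here is a minimal sketch of how overlapping objects could be detected. It is not the researchers' method: it assumes every object is approximated by an axis-aligned bounding box, and the names `Box`, `boxes_clip`, and `clipping_pairs` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box (hypothetical stand-in for a 3D object)."""
    lo: tuple[float, float, float]  # minimum corner (x, y, z)
    hi: tuple[float, float, float]  # maximum corner (x, y, z)


def boxes_clip(a: Box, b: Box) -> bool:
    """Return True if the boxes overlap with positive volume.

    Boxes that merely touch (a bowl resting on a table) do not count.
    """
    return all(a.lo[i] < b.hi[i] and b.lo[i] < a.hi[i] for i in range(3))


def clipping_pairs(scene: dict[str, Box]) -> list[tuple[str, str]]:
    """List every pair of object names whose boxes intersect."""
    names = sorted(scene)
    return [
        (p, q)
        for i, p in enumerate(names)
        for q in names[i + 1:]
        if boxes_clip(scene[p], scene[q])
    ]


# Toy scene: the bowl rests on the table, but the fork passes through the bowl.
scene = {
    "table": Box((0.0, 0.0, 0.0), (1.0, 1.0, 0.8)),
    "bowl": Box((0.4, 0.4, 0.8), (0.6, 0.6, 0.9)),
    "fork": Box((0.5, 0.5, 0.85), (0.7, 0.52, 0.86)),
}
print(clipping_pairs(scene))  # [('bowl', 'fork')]
```

A real physics-based check would use the objects' actual geometry rather than boxes; the sketch only illustrates what "models overlap or intersect" means.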

How exactly steerable scene generation guides its creation toward realism, however, depends on the strategy you choose. Its main strategy is “Monte Carlo tree search” (MCTS), where the model creates a series of alternative scenes, filling them out in different ways toward a particular objective (like making a scene more physically realistic, or including as many edible items as possible). The same search technique is used by the AI program AlphaGo to beat human opponents in Go (a game similar to chess), where the system considers potential sequences of moves before choosing the most advantageous one.

“We are the first to apply MCTS to scene generation by framing the scene generation task as a sequential decision-making process,” says MIT Department of Electrical Engineering and Computer Science (EECS) PhD student Nicholas Pfaff, who is a CSAIL researcher and a lead author on a paper presenting the work. “We keep building on top of partial scenes to produce better or more desired scenes over time. As a result, MCTS creates scenes that are more complex than what the diffusion model was trained on.”

In one particularly telling experiment, MCTS added the maximum number of objects to a simple restaurant scene. It featured as many as 34 items on a table, including massive stacks of dim sum dishes, after training on scenes with only 17 objects on average.
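The idea of treating scene generation as a sequence of decisions can be sketched with a toy MCTS. This is an illustration under loud assumptions, not the paper's implementation: the "scene" is a one-dimensional table of width `CAPACITY`, the `CATALOG` of object widths is invented, the objective is simply "fit as many objects as possible," and random rollouts stand in for the diffusion model that completes partial scenes.

```python
import math
import random

CATALOG = {"plate": 3, "bowl": 2, "cup": 1}  # hypothetical object widths
CAPACITY = 6                                 # hypothetical table width


def legal_actions(scene):
    """Objects that still fit on the table given what is already placed."""
    used = sum(CATALOG[name] for name in scene)
    return [name for name, width in CATALOG.items() if used + width <= CAPACITY]


def reward(scene):
    """Toy objective: more objects is better, scaled to [0, 1]."""
    return len(scene) / CAPACITY


class Node:
    def __init__(self, scene, parent=None):
        self.scene = scene                    # tuple of placed object names
        self.parent = parent
        self.children = {}                    # action -> Node
        self.untried = legal_actions(scene)   # actions not yet expanded
        self.visits = 0
        self.total = 0.0


def select_child(node, c=1.4):
    """UCB1: balance a child's average reward against how rarely it was tried."""
    return max(
        node.children.values(),
        key=lambda ch: ch.total / ch.visits
        + c * math.sqrt(math.log(node.visits) / ch.visits),
    )


def rollout(scene, rng):
    """Finish a partial scene with random placements and score the result."""
    scene = list(scene)
    while (actions := legal_actions(scene)):
        scene.append(rng.choice(actions))
    return reward(scene)


def mcts_step(root_scene, rng, iterations=200):
    """Pick the next object to place by searching from the partial scene."""
    root = Node(tuple(root_scene))
    for _ in range(iterations):
        node = root
        while not node.untried and node.children:   # selection
            node = select_child(node)
        if node.untried:                            # expansion
            action = node.untried.pop()
            child = Node(node.scene + (action,), parent=node)
            node.children[action] = child
            node = child
        value = rollout(node.scene, rng)            # simulation
        while node is not None:                     # backpropagation
            node.visits += 1
            node.total += value
            node = node.parent
    return max(root.children, key=lambda a: root.children[a].visits)


def build_scene(seed=0):
    """Keep building on the partial scene until nothing else fits."""
    rng = random.Random(seed)
    scene = []
    while legal_actions(scene):
        scene.append(mcts_step(scene, rng))
    return scene


print(build_scene())
```

Because the reward counts objects, the search tends to favor small items that leave room for more, loosely mirroring how the restaurant scene ended up with more items than the training scenes contained.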

Steerable scene generation also allows you to generate diverse training scenarios via reinforcement learning — essentially, teaching a diffusion model to fulfill an objective by trial-and-error. After you train on the initial data, your system undergoes a second training stage, where you outline a reward (basically, a desired outcome with a score indicating how close you are to that goal). The model automatically learns to create scenes with higher scores, often producing scenarios that are quite different from those it was trained on.
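The second training stage can be illustrated with a deliberately tiny stand-in. The sketch below is an assumption-heavy toy, not the researchers' training code: instead of a diffusion model, the "generator" is a categorical distribution over how many objects a scene contains, the reward (hypothetical) grows with the object count, and the update is a basic REINFORCE policy-gradient step with a running-average baseline.

```python
import math
import random

MAX_OBJECTS = 4  # hypothetical: scenes hold 0..4 objects


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reward(num_objects):
    """Toy reward: score in [0, 1], higher for fuller scenes."""
    return num_objects / MAX_OBJECTS


def expected_objects(logits):
    """Average object count of scenes sampled from the current generator."""
    return sum(k * p for k, p in enumerate(softmax(logits)))


def post_train(logits, steps=3000, lr=0.05, seed=0):
    """Trial and error: sample a scene, score it, nudge the generator."""
    rng = random.Random(seed)
    logits = list(logits)
    baseline = 0.0  # running mean of rewards seen so far
    for t in range(1, steps + 1):
        probs = softmax(logits)
        k = rng.choices(range(len(logits)), weights=probs)[0]
        r = reward(k)
        advantage = r - baseline
        for j in range(len(logits)):
            # Gradient of log-probability of the sampled count w.r.t. logit j.
            grad = (1.0 if j == k else 0.0) - probs[j]
            logits[j] += lr * advantage * grad
        baseline += (r - baseline) / t
    return logits


initial = [0.0] * (MAX_OBJECTS + 1)  # "pre-trained" generator: uniform counts
tuned = post_train(initial)
print(expected_objects(initial), expected_objects(tuned))
```

After post-training, the generator's average object count should sit above the uniform starting point, which is the toy analogue of a model learning to produce scenes with higher scores than those it was first trained on.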

Users can also prompt the system directly by typing in specific visual descriptions (like “a kitchen with four apples and a bowl on the table”). Then, steerable scene generation can bring your requests to life with precision. For example, the tool accurately followed users’ prompts at rates of 98 percent when building scenes of pantry shelves, and 86 percent for messy breakfast tables. Both marks are at least a 10 percent improvement over comparable methods like “MiDiffusion” and “DiffuScene.”

The system can also complete specific scenes via prompting or light directions (like “come up with a different scene arrangement using the same objects”). You could ask it to place apples on several plates on a kitchen table, for instance, or put board games and books on a shelf. It’s essentially “filling in the blank” by slotting items in empty spaces, but preserving the rest of a scene.

According to the researchers, the strength of their project lies in its ability to create many scenes that roboticists can actually use. “A key insight from our findings is that it’s OK for the scenes we pre-trained on to not exactly resemble the scenes that we actually want,” says Pfaff. “Using our steering methods, we can move beyond that broad distribution and sample from a ‘better’ one. In other words, generating the diverse, realistic, and task-aligned scenes that we actually want to train our robots in.”

Such vast scenes became the testing grounds where they could record a virtual robot interacting with different items. The machine carefully placed forks and knives into a cutlery holder, for instance, and rearranged bread onto plates in various 3D settings. Each simulation appeared fluid and realistic, resembling the real-world, adaptable robots steerable scene generation could help train, one day.

While the system could be an encouraging path forward in generating lots of diverse training data for robots, the researchers say their work is more of a proof of concept. In the future, they’d like to use generative AI to create entirely new objects and scenes, instead of using a fixed library of assets. They also plan to incorporate articulated objects that the robot could open or twist (like cabinets or jars filled with food) to make the scenes even more interactive.

To make their virtual environments even more realistic, Pfaff and his colleagues may incorporate real-world objects by using a library of objects and scenes pulled from images on the internet and using their previous work on “Scalable Real2Sim.” By expanding how diverse and lifelike AI-constructed robot testing grounds can be, the team hopes to build a community of users that’ll create lots of data, which could then be used as a massive dataset to teach dexterous robots different skills.

“Today, creating realistic scenes for simulation can be quite a challenging endeavor; procedural generation can readily produce a large number of scenes, but they likely won’t be representative of the environments the robot would encounter in the real world. Manually creating bespoke scenes is both time-consuming and expensive,” says Jeremy Binagia, an applied scientist at Amazon Robotics who wasn’t involved in the paper. “Steerable scene generation offers a better approach: train a generative model on a large collection of pre-existing scenes and adapt it (using a strategy such as reinforcement learning) to specific downstream applications. Compared to previous works that leverage an off-the-shelf vision-language model or focus just on arranging objects in a 2D grid, this approach guarantees physical feasibility and considers full 3D translation and rotation, enabling the generation of much more interesting scenes.”

“Steerable scene generation with post training and inference-time search provides a novel and efficient framework for automating scene generation at scale,” says Toyota Research Institute roboticist Rick Cory SM ’08, PhD ’10, who also wasn’t involved in the paper. “Moreover, it can generate ‘never-before-seen’ scenes that are deemed important for downstream tasks. In the future, combining this framework with vast internet data could unlock an important milestone towards efficient training of robots for deployment in the real world.”

Pfaff wrote the paper with senior author Russ Tedrake, the Toyota Professor of Electrical Engineering and Computer Science, Aeronautics and Astronautics, and Mechanical Engineering at MIT; a senior vice president of large behavior models at the Toyota Research Institute; and CSAIL principal investigator. Other authors were Toyota Research Institute robotics researcher Hongkai Dai SM ’12, PhD ’16; team lead and Senior Research Scientist Sergey Zakharov; and Carnegie Mellon University PhD student Shun Iwase. Their work was supported, in part, by Amazon and the Toyota Research Institute. The researchers presented their work at the Conference on Robot Learning (CoRL) in September.

AI-Designed Drugs by a DeepMind Spinoff Are Headed to Human Trials

Google DeepMind’s AlphaFold has already revolutionized scientists’ understanding of proteins. Now, the ability of the platform to design safe and effective drugs is about to be put to the test.

Isomorphic Labs, the UK-based biotech spinoff of Google DeepMind, will soon begin human trials of drugs designed by its Nobel Prize–winning AI technology. “We’re gearing up to go into the clinic,” Isomorphic Labs president Max Jaderberg said on April 16 at WIRED Health in London. “It’s going to be a very exciting moment as we go into clinical trials and start seeing the efficacy of these molecules.”

Jaderberg did not elaborate on the timeline, but it’s later than the company had planned to initiate human studies. Last year, CEO Demis Hassabis said it would have AI-designed drugs in clinical trials by the end of 2025.

Isomorphic Labs was founded in 2021 as a spinoff from Alphabet’s AI research subsidiary, Google DeepMind. The company uses DeepMind’s AlphaFold, a groundbreaking AI platform that predicts protein structures, for drug discovery.

Built from 20 different amino acids, proteins are essential for all living organisms. Long strings of amino acids link together and fold up to make a protein’s three-dimensional structure, which dictates the protein’s function. Researchers had tried to predict protein structures since the 1970s, but this was a painstaking process given the astronomically high number of possible shapes a protein chain can take.

That changed in 2020, when DeepMind’s Hassabis and John Jumper presented stunning results from AlphaFold 2, which uses deep-learning techniques. A year later, the company released an open-source version of AlphaFold available to anyone.

In 2024, DeepMind and Isomorphic Labs released AlphaFold 3, which advanced scientists’ understanding of proteins even further. It moved beyond modeling proteins in isolation to predicting other important molecules, such as DNA and RNA, and their interactions with proteins.

“This is exactly what you need for drug discovery: You need to see how a small molecule is going to bind to a drug, how strongly, and also what else it might bind to,” Hassabis told WIRED at the time.

Since its release, the AlphaFold platform has been able to predict the structure of virtually all the 200 million proteins known to researchers and has been used by more than 2 million people from 190 countries. The breakthrough earned Hassabis and Jumper the Nobel Prize for chemistry in 2024, with the Nobel committee noting that AlphaFold has enabled a number of scientific applications, including a better understanding of antibiotic resistance and the creation of images of enzymes that can decompose plastic.

Earlier this year, Isomorphic Labs announced an even more powerful tool, what it calls IsoDDE, its proprietary drug-design engine. In a technical paper, the company touts that the platform more than doubles the accuracy of AlphaFold 3.

The startup has formed partnerships with Eli Lilly and Novartis to work together on AI drug discovery and is also advancing its own “broad and exciting pipeline of new medicines” in oncology and immunology, Jaderberg said.

“The exciting thing about the molecules that we’re designing is because we have so much more of an understanding about how these molecules work, we’ve engineered them to be very, very potent,” Jaderberg told the audience at WIRED Health. “You can take them at a much lower dose, and they’ll have lower side effects, off target effects.”

Last year, Isomorphic appointed a chief medical officer and announced it had raised $600 million in its first funding round to gear up for clinical trials. Meanwhile, the company has been building a clinical development team. Its mission is to “solve all disease.”

“It’s a crazy mission,” Jaderberg said. “But we really mean it. We say it with a straight face, because we believe this should be possible.”

Tech

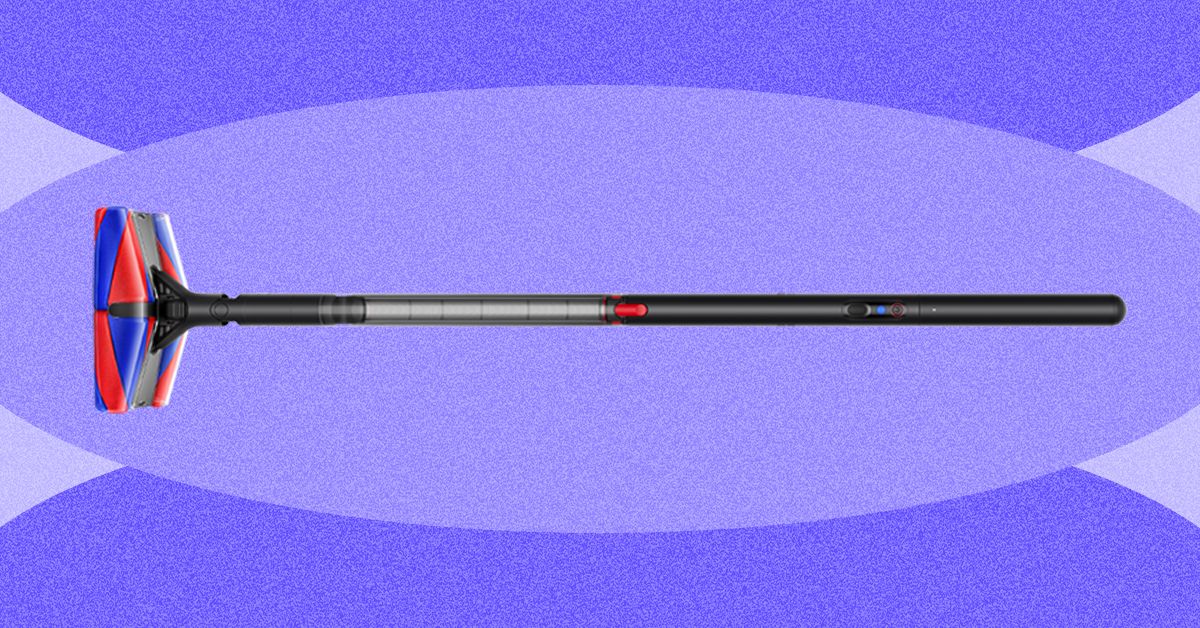

Why Do I Like Dyson’s PencilVac So Much?

The vacuum connects to Dyson’s app, where you’ll find resources such as how to empty the dustbin and wash the filter, but not much else. It can tell you how long your last vacuuming session was, but no other details, so it’s not as interesting or as informative as the data you’d get from a robot vacuum.

Fluffy Face

Photograph: Nena Farrell

This vacuum’s full name is the Dyson PencilVac Fluffycones, aptly named for the four fluffy cones inside the vacuum head. Dyson’s other recent stick vacuums all have the Fluffy Optic cleaner head for vacuuming hard floors. While both heads use fluffy rollers, the Fluffycones have a conical shape that Dyson says will detangle and remove hair rather than letting it wrap around the roller. It did detangle hair for me, but when I vacuumed up larger portions of hair from my bathroom floor (a place where many a stray hair comes to die at the hands of my hairbrush, comb, and towel), it bunched up the hair into a ball and spat it back out a few times before finally sucking it up into the dustbin.

Video: Nena Farrell

While the hair results weren’t great, I did love this vacuum for sucking up the cat litter that constantly plagues my home. It did a great job with flour on my hard floors and a solid job with dry oats, but it occasionally just bumped the oats around instead of immediately sucking them up. I was even able to quickly run it over the top of my carpet, but rolling back and forth on the carpet a bunch did stop the cones.

The head is designed to move in just about any direction. The cones make it easy to swivel around, and the green illuminating lights on the front and back help you spot any debris you might otherwise miss. With its compact size that fits in tricky corners, the PencilVac finally lets me vacuum up all the litter around the base of my toilet and pedestal sink. It’s part of what makes me reach for this vacuum over and over, even after my robot vacuum cleaned the day before.

Forward Momentum

Photograph: Nena Farrell

Do I think this vacuum replaces Dyson’s existing cordless options? No. But Dyson has other new vacuums planned that could do that. This vacuum has a specific design for a specific use: smaller homes with entirely hard floors. There’s an accessibility opportunity here, too. This lightweight vacuum can be much easier to use for folks with mobility and strength restrictions. The magnetic charging base also makes it easy to store and access for a variety of people, whether they struggle with fine motor skills or can’t bend over and grab the vacuum.

They Wanted to Join Raya. They’ve Been on the Waiting List for Years

There is a special agony to existing in limbo, that state of eternal in-between, where time stretches into infinity.

Today, that experience is especially true for people vying to join Raya, the members-only dating app. Obtaining a Raya account requires an invitation from a current member, and even after you’ve applied, you can’t log in until your application is approved. The process creates a bottleneck akin to the line outside a nightclub, where the chosen few breeze inside while the rest are left to wait. Beyond the velvet rope there are some 2.5 million people waiting to get into Raya—many of whom have been idling in limbo for years.

“My application is stuck in purgatory,” Gabriela Mark, a 23-year-old law student and model in San Diego, tells WIRED. “Like, she’s never escaping.”

Mark has been on the waiting list for five years. “I don’t know what their deal is, but there’s a reason I’m trapped on this waitlist and I needed to find out what it was.” In January, having reached her limit, she decided to email Raya. “I am beginning to believe you guys genuinely hate me or are bullying me,” Mark wrote in a colorfully worded letter. “Is my application just floating in the abyss somewhere or a running gag to you guys???”

Mark never received a response, but her story is an increasingly common one. The people WIRED spoke to for this story—who, despite their professional bona fides, have waited anywhere between two and seven years to join—have watched friends get accepted, break up, and cycle through the app while their own status remains unchanged.

Originally marketed as a kind of SoHo House for people in creative industries, Raya launched in 2015 as an app built around aspiration—but it has since shifted into a platform where many people in those industries find themselves unable to participate at all.

“It’s a bit of a mental fuck,” says Jennifer Rojas, who was working as an actress when she applied in 2020. “You start to look inward. Like, maybe it’s me. Maybe it’s this or that. I was opening it every day to check my status.” Now a 40-year-old UGC creator in South Florida, Rojas is going on year six of the waiting list. “I have 17 referrals on the freaking app.”

There is no exact science to making it past the waiting list. According to previous reporting, the app (which charges users $25 per month, or $50 for a premium membership once approved) receives up to 100,000 applications per month. For prospective users, the biggest advantage comes from referrals by current members, who each get a small stash of “friend passes” to share. The waiting list isn’t first-come, first-served, which partially explains why some people have been on it for so long. A person's position changes based on things like how trendy their city is on the app or whether they’ve snagged a referral.

(Raya declined to comment. After an initial call with Raya’s communications team about scheduling an interview with Ifeoma Ojukwi, the vice president of global memberships who oversees the application process, the company stopped responding to requests from WIRED. As is common in online dating, we were ghosted.)

Like so many people who want in, Mark was initially drawn to Raya’s exclusivity. She wanted to join because she’d heard it was full of “cool people who seem untouchable.” Raya is known as the celebrity dating app, and everyone from actors Dakota Fanning and Channing Tatum to Olympian Simone Biles has had varying degrees of success on the platform. (Biles met her husband on Raya.) Mark had tried her luck on the app circuit: Hinge was “just OK.” With Tinder she kept running into guys that “just seemed like they wanted to literally bone anything with a hole in it.” As for the other ones, “nothing but trap boys and creatures,” she says.
