Tech

What are the storage requirements for AI training and inference? | Computer Weekly

Despite ongoing speculation around an investment bubble that may be set to burst, artificial intelligence (AI) technology is here to stay. And while an over-inflated market may exist at the level of the suppliers, AI is well-developed and has a firm foothold among organisations of all sizes.

But AI workloads place specific demands on IT infrastructure, and on storage in particular. Data volumes can start big and then balloon, especially during training as data is vectorised and checkpoints are created. Meanwhile, data must be gathered, curated and managed throughout its lifecycle.

In this article, we look at the key characteristics of AI workloads, the particular demands of training and inference on storage I/O, throughput and capacity, whether to choose object or file storage, and the storage requirements of agentic AI.

What are the key characteristics of AI workloads?

AI workloads can be broadly categorised into two key stages – training and inference.

During training, processing focuses on what is effectively pattern recognition. Large volumes of data are examined by an algorithm – likely part of a deep learning framework like TensorFlow or PyTorch – that aims to recognise features within the data.

This could be visual elements in an image or particular words or patterns of words within documents. These features, which might fall under the broad categories of “a cat” or “litigation”, for example, are given values and stored in a vector database.

The assigned values allow for finer detail. So, for example, “a tortoiseshell cat” would comprise discrete values for “cat” and “tortoiseshell” that together make up the whole concept and allow comparison and calculation between images.
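
The idea of comparing images by their assigned values can be sketched in a few lines of Python. This is an illustration only: the three-element vectors and their meanings are made up for the example, whereas real feature vectors are learned by the model and have hundreds or thousands of dimensions. Cosine similarity is one common way to compare such vectors, and is an assumption here rather than something the article specifies.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical feature vectors: [cat-ness, tortoiseshell-ness, dog-ness].
tortoiseshell_cat = [0.9, 0.8, 0.1]
tabby_cat = [0.9, 0.1, 0.1]
dog = [0.1, 0.0, 0.9]

# Two cats should score as more alike than a cat and a dog.
sim_cats = cosine_similarity(tortoiseshell_cat, tabby_cat)
sim_cat_dog = cosine_similarity(tortoiseshell_cat, dog)
print(sim_cats > sim_cat_dog)
```

A vector database performs this kind of comparison at scale, typically with indexing so that it does not have to scan every stored vector.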

Once the AI system is trained on its data, it can then be used for inference – literally, to infer a result from production data that can be put to use for the organisation.

So, for example, we may have an animal tracking camera and we want it to alert us when a tortoiseshell cat crosses our garden. To do that it would infer the presence or not of a cat and whether it is tortoiseshell by reference to the dataset built during the training described above.

But while AI processing falls into these two broad categories, the split is not so clear-cut in practice. Training always starts with an initial dataset. After that, inference runs continuously, and training is likely to become ongoing too, as new data is ingested and yields new inference results.

So, to labour the example, our cat-garden-camera system may record new cats of unknown types and begin to categorise their features and add them to the model.

What are the key impacts on data storage of AI processing?

At the heart of AI hardware are specialised chips called graphics processing units (GPUs). These do the grunt processing work of training and are incredibly powerful, costly and often difficult to procure. For these reasons their utilisation rates are a major operational IT consideration – storage must be able to handle their I/O demands so they are optimally used.

Therefore, data storage that feeds GPUs during training must be fast, so it’s almost certainly going to be built with flash storage arrays.
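
As a rough back-of-envelope sketch of why storage speed affects GPU utilisation, the following Python estimates the aggregate read throughput needed to keep a set of GPUs fed. The function names and all the numbers are hypothetical, and the model assumes storage is the only bottleneck, which will rarely be exactly true.

```python
def required_read_throughput_gbps(num_gpus, samples_per_sec_per_gpu, avg_sample_mb):
    """Aggregate read throughput (GB/s) storage must sustain to keep every GPU fed."""
    mb_per_sec = num_gpus * samples_per_sec_per_gpu * avg_sample_mb
    return mb_per_sec / 1000  # decimal units: 1 GB = 1000 MB

def gpu_utilisation(storage_gbps, required_gbps):
    """Upper bound on GPU utilisation when storage is the only bottleneck."""
    return min(1.0, storage_gbps / required_gbps)

# Illustrative numbers only: 64 GPUs, each consuming 2,000 samples/s of 0.15 MB.
needed = required_read_throughput_gbps(64, 2000, 0.15)
util = gpu_utilisation(storage_gbps=12.0, required_gbps=needed)
print(f"need {needed:.1f} GB/s; 12 GB/s of storage caps utilisation at {util:.0%}")
```

In this made-up case the cluster needs about 19 GB/s, so storage that delivers 12 GB/s would leave expensive GPUs idle for more than a third of the time.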

Another key consideration is capacity. That’s because AI datasets can start big and get much bigger. As datasets undergo training, the conversion of raw information into vector data can see data volumes expand by up to 10 times.

Also, during training, checkpointing is carried out at regular intervals, often after every “epoch” or pass through the training data, or after changes are made to parameters.

Checkpoints are similar to snapshots, and allow training to be rolled back to a point in time if something goes wrong so that existing processing does not go to waste. Checkpointing can add significant data volume to storage requirements.
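
The checkpoint-and-roll-back pattern can be sketched with the Python standard library alone. This is a toy: the `state` dictionary stands in for model weights, and the file naming is an assumption. Deep learning frameworks provide their own checkpoint mechanisms, which should be used in practice.

```python
import os
import pickle
import tempfile

def save_checkpoint(directory, epoch, state):
    """Write the training state for one epoch to its own file and return the path."""
    path = os.path.join(directory, f"checkpoint_epoch_{epoch:04d}.pkl")
    with open(path, "wb") as f:
        pickle.dump({"epoch": epoch, "state": state}, f)
    return path

def load_checkpoint(path):
    """Read a checkpoint back so training can resume from that epoch."""
    with open(path, "rb") as f:
        return pickle.load(f)

# Toy "training loop": the state is a dict standing in for model weights.
checkpoint_dir = tempfile.mkdtemp()
state = {"weights": [0.0, 0.0], "loss": None}
paths = []
for epoch in range(1, 4):
    state["weights"] = [w + 0.1 * epoch for w in state["weights"]]
    state["loss"] = 1.0 / epoch
    paths.append(save_checkpoint(checkpoint_dir, epoch, state))

# Roll back to the end of epoch 2, for example after a failure during epoch 3.
restored = load_checkpoint(paths[1])
print(restored["epoch"], len(os.listdir(checkpoint_dir)))
```

Every epoch leaves another full copy of the state on disk, which is why checkpointing adds so much to capacity requirements unless older checkpoints are pruned.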

So, sufficient storage capacity must be available, and will often need to scale rapidly.
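
A simple capacity estimate, combining the expansion of data during training with retained checkpoints, might look like the sketch below. The function and its inputs are hypothetical, and the expansion factor is treated as a multiplier on total data volume, which is one reading of the "up to 10 times" figure.

```python
def estimate_capacity_tb(raw_tb, expansion_factor, checkpoint_tb, checkpoints_kept):
    """Rough capacity need: expanded training data plus retained checkpoints."""
    return raw_tb * expansion_factor + checkpoint_tb * checkpoints_kept

# Illustrative inputs: 50 TB raw, worst-case 10x expansion, 2 TB per checkpoint, 20 kept.
total_tb = estimate_capacity_tb(50, 10, 2, 20)
print(total_tb)
```

With these made-up inputs, 50 TB of raw data turns into a requirement of more than half a petabyte, before any allowance for growth or data protection overhead.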

What are the key impacts of AI processing on I/O and capacity in data storage?

The I/O demands of AI processing on storage are huge. Model data in use often will not fit into the memory of a single GPU, so it is parallelised across many of them.

Also, AI workloads and I/O differ significantly between training and inference. As we’ve seen, the massive parallel processing involved in training requires low latency and high throughput.

While low latency is a universal requirement during training, throughput demands may differ depending on the deep learning framework used. PyTorch, for example, stores model data as a large number of small files while TensorFlow uses a smaller number of large model files.
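
Why file size matters for throughput can be shown with a simplified model in which every file read pays a fixed latency before any data transfers. The function name, the 1 ms latency and the 2,000 MB/s bandwidth are assumptions for illustration, and the model ignores parallel reads and caching.

```python
def effective_throughput_mb_per_s(file_size_mb, per_file_latency_ms, bandwidth_mb_per_s):
    """Throughput of reading files one at a time, each paying a fixed latency first."""
    transfer_s = file_size_mb / bandwidth_mb_per_s
    latency_s = per_file_latency_ms / 1000
    return file_size_mb / (latency_s + transfer_s)

# Same storage, same total data, different file sizes.
small = effective_throughput_mb_per_s(0.1, 1.0, 2000)    # 100 KB files
large = effective_throughput_mb_per_s(1000, 1.0, 2000)   # 1 GB files
print(f"small files: {small:.0f} MB/s, large files: {large:.0f} MB/s")
```

Under these assumptions, many small files make the workload latency-bound, while a few large files let it approach the raw bandwidth of the storage.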

The framework used can also affect capacity requirements. TensorFlow checkpointing tends towards larger file sizes, plus dependent data states and metadata, while PyTorch checkpointing can be more lightweight. TensorFlow deployments tend to have a larger storage footprint generally.

If the model is parallelised across numerous GPUs, this affects checkpoint writes and restores, which means storage I/O must be up to the job.
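
To give a feel for the effect on checkpoint writes, the sketch below estimates how long a checkpoint takes when each GPU writes its own shard in parallel. It assumes shards are equal in size and that the storage system has a single aggregate write limit. All names and figures are hypothetical.

```python
def checkpoint_write_seconds(checkpoint_gb, num_gpus, per_writer_gbps, storage_limit_gbps):
    """Time to write a checkpoint sharded evenly across GPUs writing in parallel."""
    aggregate_gbps = min(num_gpus * per_writer_gbps, storage_limit_gbps)
    return checkpoint_gb / aggregate_gbps

# Illustrative: a 2,000 GB checkpoint from 64 GPUs, each able to write 1 GB/s.
storage_bound = checkpoint_write_seconds(2000, 64, 1.0, storage_limit_gbps=40)
writer_bound = checkpoint_write_seconds(2000, 64, 1.0, storage_limit_gbps=100)
print(storage_bound, writer_bound)
```

If training pauses while the checkpoint is written, that time is GPU time lost at every checkpoint interval, so faster storage translates directly into more useful processing.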

Does AI processing prefer file or object storage?

While AI infrastructure isn’t necessarily tied to one or other storage access method, object storage has a lot going for it.

Most enterprise data is unstructured data and exists at scale, and it is often what AI has to work with. Object storage is supremely well suited to unstructured data because of its ability to scale. It also comes with rich metadata capabilities that can help data discovery and classification before AI processing begins in earnest.

File storage stores data in a tree-like hierarchy of files and folders. That can become unwieldy to access at scale. Object storage, by contrast, stores data in a “flat” structure, by unique identifier, with rich metadata. It can mimic file and folder-like structures by addition of metadata labels, which many will be familiar with in cloud-based systems such as Google Drive, Microsoft OneDrive and so on.
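
The difference between a hierarchy and a flat namespace can be illustrated with a toy in-memory store. The class and method names are invented for this sketch and do not correspond to any real object storage API. It shows two ways a flat store can feel structured: filtering on a shared key prefix, which is how many object stores emulate folders, and querying metadata labels.

```python
class FlatObjectStore:
    """Toy in-memory object store: one flat namespace of keys, each with metadata."""

    def __init__(self):
        self._objects = {}

    def put(self, key, data, metadata=None):
        """Store an object under a unique key, with optional metadata labels."""
        self._objects[key] = {"data": data, "metadata": dict(metadata or {})}

    def list_prefix(self, prefix):
        """Emulate 'opening a folder' by filtering keys on a shared prefix."""
        return sorted(k for k in self._objects if k.startswith(prefix))

    def find_by_metadata(self, field, value):
        """Discover objects by metadata rather than by location."""
        return sorted(
            k for k, obj in self._objects.items() if obj["metadata"].get(field) == value
        )

store = FlatObjectStore()
store.put("images/cats/001.jpg", b"...", {"label": "cat", "coat": "tortoiseshell"})
store.put("images/cats/002.jpg", b"...", {"label": "cat", "coat": "tabby"})
store.put("docs/contracts/a.pdf", b"...", {"label": "litigation"})

cats = store.list_prefix("images/cats/")
torties = store.find_by_metadata("coat", "tortoiseshell")
print(cats, torties)
```

There are no real directories here: the slashes are just characters in the key, and the metadata query works regardless of where an object appears to sit.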

Object storage can, however, be slow to access and lacks file-locking capability, though this is likely to be of less concern for AI workloads.

What impact will agentic AI have on storage infrastructure?

Agentic AI uses autonomous AI agents that can carry out specific tasks without human oversight. They are tasked with autonomous decision-making within specific, predetermined boundaries.

Examples would include the use of agents in IT security to scan for threats and take action without human involvement, to spot and initiate actions in a supply chain, or in a call centre to analyse customer sentiment, review order history and respond to customer needs.

Agentic AI is largely an inference-phase phenomenon, so compute infrastructure will not need to handle training-type workloads. Having said that, AI agents will potentially access multiple data sources across on-premises systems and the cloud. Those sources will span the full range of storage types in terms of performance.

But, to work at its best, agentic AI will need high-performance, enterprise-class storage that can handle a wide variety of data types with low latency and with the ability to scale rapidly. That’s not to say datasets in less performant storage cannot form part of the agentic infrastructure. But if you want your agents to work at their best you’ll need to provide the best storage you can.


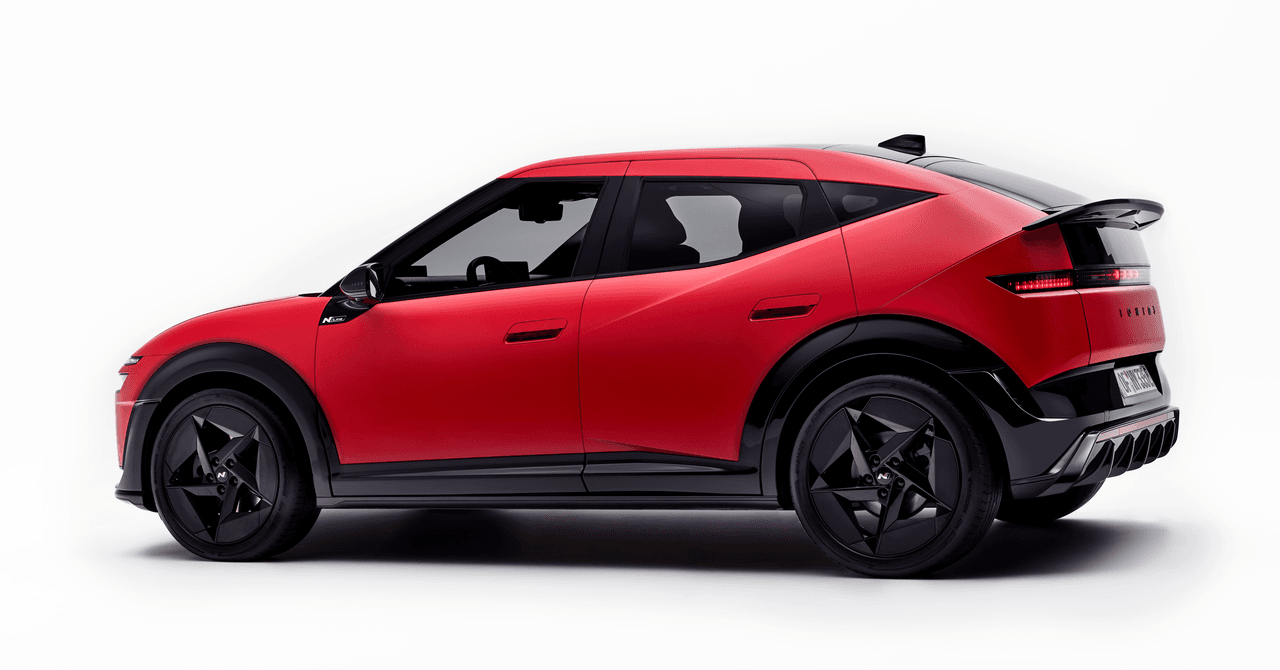

Hyundai’s New Ioniq 3 Has Hot-Hatch Looks, but Can It Beat BYD?

Hyundai has unveiled its Ioniq 3, a fully electric compact hatchback for urban driving designed to be as aerodynamically efficient as possible yet still offer up a surprisingly spacious interior—a trick the carmaker is loftily calling Aero Hatch. The 3 is intended to fill the gap between Hyundai’s Inster supermini and Ioniq 5 crossover.

In profile, the Ioniq 3 has a sleek front end that transitions into a roofline that stays straight over both front and rear occupants before dropping to merge with the rear spoiler. It’s this roofline that maximizes interior headroom for the rear passengers, but it also offers a supposed class-leading drag coefficient of 0.263.

The car shares its underpinnings with the EV2 from sibling brand Kia. Two battery options will deliver a projected WLTP distance of 344 km (around 214 miles) for the Standard Range Ioniq 3; the Long Range version is supposedly good for a competitive 308-mile range. Built on the group’s Electric-Global Modular Platform (E-GMP), the car has a 400-volt architecture to lower costs rather than the 800-volt system of the Ioniq 5 N, 6, or 9 SUV. Still, this means that if you can find sufficiently fast DC charging, you can, in theory, top up from 10 to 80 percent in approximately 29 minutes (AC charging capability is up to 22 kW).

This is fine, but it is not a match for BYD’s new Blade 2.0 battery tech that WIRED tried, astonishingly allowing the Denza Z9 GT to charge its battery in just over nine minutes from 10 percent. True, that battery tech was in a $100,000 “premium” EV, but it’s coming to BYD’s wider models. And if BYD makes good on its plans to deliver a charging network to rival Tesla’s Supercharger, then very soon buyers will be expecting comparable charge times, and 30 minutes will quickly feel awfully long.

I asked José Muñoz, Hyundai Motor Company president and CEO, whether this new battery technology from BYD concerns him, whether Hyundai—leading the EV pack with 800-volt architectures for so long—needs to match the Blade 2.0’s performance. “We welcome the challenge,” Muñoz tells me. “Every challenge is an opportunity to do better. And I can tell you that, lately, we have a lot of opportunities to do better.”

“We are also working on fast charging,” Muñoz says, adding that Hyundai’s success will be built on not merely one leading technology but many. “There are not more elements that may be offered by the Chinese that we can offer. It’s only a matter of how you mix them. A lot of times, you get stuck into one indicator. I’m an engineer. And we always have the example of the airplanes: What is more important in an airplane, altitude or speed? There is only one answer. You need to achieve both.”


Prego Has a Dinner-Conversation-Recording Device, Capisce?

Prego, the pasta sauce company, is getting into hardware with a device that sits on your table and records dinner conversations. No, this isn’t April Fools’.

The Connection Keeper is a round puck that houses two microphones for recording around the table. The recorder was developed in partnership with StoryCorps, the 20-year-old nonprofit that has recorded conversations with more than 720,000 people about their lives.

The Connection Keeper is more of a publicity stunt than a readily available product. Fewer than 100 will be made. The pucks look more like a tuna can than what you’d associate with the pasta sauce brand—small and meant to be tucked aside so as not to attract attention. The whole goal here, Prego and StoryCorps say, is to advocate for keeping people off their phones during dinner.

“Everything now is AI, and everyone has their phones on the table,” says Elyce Henkin, a managing director of StoryCorps studios and brand partnerships. “It interrupts the conversation and the flow. We wanted to get rid of that and go back to the basics and have everyone talking to each other.”

The pucks come packaged with cards inspired by StoryCorps, designed to prompt conversations between family members. Some are aimed at kids; some are aimed at parents or other family members.

The device doesn’t record automatically. Press a button, and the device begins recording CD-quality audio. Push the button again to stop. It records all the audio on a 16-GB microSD card that can hold up to eight hours of audio at a time. Those recordings can then be saved on a StoryCorps microsite or the family’s own storage. There is no cloud connection, no Wi-Fi, and no artificial intelligence features whatsoever.

The more communal element of the project is that StoryCorps will allow users to share their recordings on its website (or keep them private). Anything that has been voluntarily shared will also be physically preserved as a recording along with the larger StoryCorps collection within the US Library of Congress.

Prego is a US company, named after the Italian word for “you’re welcome.” I’ll tell you this from experience growing up in an Italian-American extended family: The Connection Keeper is going to have a hell of a time keeping track of a conversation at a table full of loud uncles and your wine-drunk grandma, who all talk at the same time.

“I think it’s how a lot of families are,” Henkin says. “What StoryCorps does is that it reminds us of our similarities and the humanity that’s in us all, even though we are all different. I imagine that if someone were to go through and listen to the collection, there would be rowdy moments, and there would be kids laughing and moms saying, ‘Don’t eat with your mouth full.’ That’s all part of the truth of it.”


These Earbuds Drown Out Your Mouth-Breathing Roommates at $50 Off

Bose’s QuietComfort Ultra 2 earbuds are the best noise-canceling earbuds you can buy. Right now, they’re $50 off, which matches the best price we tend to see outside of special events like Black Friday and Cyber Monday. If you want to wait until November, they might hit $200 again, but otherwise $250 is a very fair deal—especially since they pop back up to $300 regularly. The discounted price applies to all five color options, including Black, Deep Plum, Desert Gold, Midnight Violet, and White Smoke (another rarity, as usually only the vivid colors go on sale).

Sometimes you just need to quiet the world. Whether it’s to play 10 hours of Coconut Mall on a loop to help you lock in and meet your Friday deadlines (thanks to my colleague Julia Forbes for that suggestion); muffle the crying babies, sniffling neighbors, and mysterious, potentially concerning clunking noises on an airplane; or to help you better appreciate the mix on Space Laces’ Vaultage 004 EP, active noise cancellation makes a huge difference to your listening experience.

The Bose QuietComfort Ultra 2 earbuds also have some of the best active noise cancellation you can find. They sound great out of the box, thanks to a custom sound profile based on the shape of your ears, but you can customize the EQ by using the app. The app also allows you to tweak touch controls and spatial audio.

The battery lasts about six hours, or 24 with the charging case. And while the noise cancellation can’t be beaten, these also have a pass-through feature called Aware mode, which filters in outside noise but smooths the loudest bits. That means you’ll be able to hear what’s going on, but you won’t be startled. True-crime podcast listeners, this one’s for you.

In fact, just about the only drawback we can find is that these might not be ideal for folks with super-small ears. Otherwise, they’re great all around, with solid call quality, excellent sound overall, and a sleek aesthetic. We think they offer good value at full price, so an extra $50 off is especially nice.

If you’re in the market for new headphones, but these don’t exactly fit what you’re looking for, we have plenty of other recommendations. Check out our guides to the Best Wireless Earbuds, Best Headphones for Working Out, Best Noise-Canceling Headphones, and Best Open Earbuds for additional hand-tested picks.
