Tech

What does the future hold for generative AI?

When OpenAI introduced ChatGPT to the world in 2022, it brought generative artificial intelligence into the mainstream and started a snowball effect that led to its rapid integration into industry, scientific research, health care, and the everyday lives of people who use the technology.

What comes next for this powerful but imperfect tool?

With that question in mind, hundreds of researchers, business leaders, educators, and students gathered at MIT’s Kresge Auditorium for the inaugural MIT Generative AI Impact Consortium (MGAIC) Symposium on Sept. 17 to share insights and discuss the potential future of generative AI.

“This is a pivotal moment — generative AI is moving fast. It is our job to make sure that, as the technology keeps advancing, our collective wisdom keeps pace,” said MIT Provost Anantha Chandrakasan to kick off this first symposium of the MGAIC, a consortium of industry leaders and MIT researchers launched in February to harness the power of generative AI for the good of society.

Underscoring the critical need for this collaborative effort, MIT President Sally Kornbluth said that the world is counting on faculty, researchers, and business leaders like those in MGAIC to tackle the technological and ethical challenges of generative AI as the technology advances.

“Part of MIT’s responsibility is to keep these advances coming for the world. … How can we manage the magic [of generative AI] so that all of us can confidently rely on it for critical applications in the real world?” Kornbluth said.

To keynote speaker Yann LeCun, chief AI scientist at Meta, the most exciting and significant advances in generative AI will most likely not come from continued improvements or expansions of large language models like Llama, GPT, and Claude. Through training, these enormous generative models learn patterns in huge datasets to produce new outputs.

Instead, LeCun and others are working on the development of “world models” that learn the same way an infant does: by seeing and interacting with the world around them through sensory input.

“A 4-year-old has seen as much data through vision as the largest LLM. … The world model is going to become the key component of future AI systems,” he said.

A robot with this type of world model could learn to complete a new task on its own with no training. LeCun sees world models as the best approach for companies to make robots smart enough to be generally useful in the real world.

But even if future generative AI systems do get smarter and more human-like through the incorporation of world models, LeCun doesn’t worry about robots escaping from human control.

Scientists and engineers will need to design guardrails to keep future AI systems on track, but as a society, we have already been doing this for millennia by designing rules to align human behavior with the common good, he said.

“We are going to have to design these guardrails, but by construction, the system will not be able to escape those guardrails,” LeCun said.

Keynote speaker Tye Brady, chief technologist at Amazon Robotics, also discussed how generative AI could impact the future of robotics.

For instance, Amazon has already incorporated generative AI technology into many of its warehouses to optimize how robots travel and move material to streamline order processing.

He expects many future innovations will focus on the use of generative AI in collaborative robotics by building machines that allow humans to become more efficient.

“GenAI is probably the most impactful technology I have witnessed throughout my whole robotics career,” he said.

Other presenters and panelists discussed the impacts of generative AI in businesses, from large-scale enterprises like Coca-Cola and Analog Devices to startups like health care AI company Abridge.

Several MIT faculty members also spoke about their latest research projects, including the use of AI to reduce noise in ecological image data, designing new AI systems that mitigate bias and hallucinations, and enabling LLMs to learn more about the visual world.

After a day spent exploring new generative AI technology and discussing its implications for the future, MGAIC faculty co-lead Vivek Farias, the Patrick J. McGovern Professor at MIT Sloan School of Management, said he hoped attendees left with “a sense of possibility, and urgency to make that possibility real.”


Could Contact-Tracing Apps Help With the Hantavirus? Not Really

After three people died on a cruise ship struck by a hantavirus, authorities are actively tracking down 29 people who had left the ship. They’re trying to trace the spread of the virus. It’s a long, arduous, global process to find and notify people who might be at risk of infection.

Hey, wasn’t there supposed to be an app for that?

Contact-tracing apps were a global effort starting in 2020 during the Covid-19 pandemic. Enabled by phone companies like Apple and Google, contact tracing was designed to use Bluetooth connections to detect when people had come in contact with someone who had or would later test positive for Covid and report as much. It did little to stop the spread of the pandemic, though it did at least make tracking the virus more effective. The same approach wouldn’t work well for the hantavirus outbreak.

“There is no use of apps for this hantavirus outbreak,” Emily Gurley, an epidemiologist at Johns Hopkins University, wrote in an email response to WIRED. “The number of cases are small, and it’s important to trace all contacts exactly to stop transmission.”

On a smaller scale of infection like this, officials have to start at the source (an infected individual), then go person-by-person, confirming where they went and who they might have come into contact with. Data collected by apps from a broad swath of devices would not be anywhere close to accurate enough to give a good idea of where the virus might have hitchhiked to next.

Contact tracing on a wider scale, like, say, a global pandemic, is less about tracking the individual infections and more about understanding what parts of the population might be affected, giving people the opportunity to self-quarantine after exposure. But that depends on how people choose to respond, and how the technology is utilized by public emergency systems. During the Covid pandemic, contact-tracing via apps tended to work better in more carefully managed European countries, but did not slow the spread in the US.

Giving devices access to that kind of proximity information has also raised all sorts of privacy concerns, given that the technology requires always-on access to work properly. Contact tracing also struggled to maintain accuracy, and in some cases produced false negatives or positives that did nothing to clarify how the virus was actually spreading.

Especially in the case of something like the hantavirus, where every person on that cruise ship can theoretically be directly tracked and contacted, it’s better to do that process the hard way.

“During small but highly fatal outbreaks, more precision is required,” Gurley wrote.


‘Reservation Hijacking’ Scams Target Travelers. Here’s How to Stay Safe

There’s another type of digital scam to be aware of, as per the BBC. It’s called “reservation hijacking.”

The name gives you a clue as to how it works. Essentially, scammers use details about a booking you’ve placed (perhaps with a hotel or airline) to trick you into sending money somewhere you shouldn’t.

While this type of scam isn’t brand new, a recent data breach at Booking.com has raised the risk of people being caught out. With data about you and your reservation, a far more convincing setup can be put in place—why wouldn’t you believe that someone purporting to be an employee from a spa you’ve got a reservation with is telling the truth about who they are, especially if they know the dates of your trip, your phone number, and your email address?

According to Booking.com, no financial information was exposed in the April 2026 hack. However, names, email addresses, phone numbers, and booking details have been leaked. The travel portal says affected customers have been emailed about the heightened risk of scams, so that’s the first thing to check for when it comes to staying safe.

Minimizing the risk of getting scammed by a reservation hijack involves many of the same security precautions you may already be following, and just being aware that this is a way you might be targeted will make a difference.

How Reservation Hijacks Work

We’ve already outlined the basics of a reservation hijack, but it can take several forms. As with other types of scams, it tends to evolve over time. The basic premise is that someone will get in touch with you claiming to be from a place you have a reservation with, whether it’s a car rental company or a hotel.

The scammers will try to pull together as much information as they can on you and your booking. Sometimes they’ll target employees of the place you’ve got the reservation with in order to get access to their systems, and other times they may take advantage of a wider data breach (as with the recent Booking.com hack).

They might also get information through other means. Maybe they’ve somehow got access to your email, or to some of your social media posts (where you’ve shared your next vacation destination and a countdown of how many days are left to go). Don’t be caught out if you find yourself speaking to someone who knows a lot about your travel plans.


I Tried the Best Captioning Smart Glasses, and Only One Leads the Pack

Unlike the other glasses I tested, Even doesn’t sell a subscription plan; everything’s included out of the box.

The only downside I could find with the G2 is that it is largely devoid of offline features, so the glasses have to be connected to the internet to do much of anything. Considering the G2’s capabilities, it’s a trade-off I am more than happy to make.

Other Captioning Glasses I Tested

There are plenty of capable captioning eyeglasses on the market, but they are surprisingly similar in both looks and features. While many are quite capable, none had the combination of power and affordability that I got with Even’s G2. Here’s a rundown of everything else I tested.

Leion’s Hey 2 is the price leader in this market, and even its prescription lenses ($90 to $299) are pretty affordable. The hardware, however, is heavy: 50 grams without lenses, 60 grams with them. A full charge gets you six to eight hours of operation; the case adds juice for up to 12 recharges.

I like the Leion interface, which lays out caption, translation, “free talk” (two-way translation), and a teleprompter feature on its clean app. You get access to nine languages; using Pro minutes expands that to 143. Leion sells its premium plan by the minute, not the month, so you need to remember to toggle this mode off when you don’t need it. Pricing is $10 for 120 minutes, $50 for 1,200 minutes, and $200 for 6,000 minutes. There’s no offline use supported, and I often struggled to get AI summaries to show up in English instead of Chinese (regardless of the recorded language).
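Because Leion bills by the minute, it can help to work out what each bundle costs per minute before buying. The short Python sketch below does that arithmetic using only the three prices quoted above; the function and variable names are illustrative and do not come from Leion.

```python
# Leion Pro minute bundles as quoted in the review: (price in USD, minutes).
BUNDLES = [(10, 120), (50, 1200), (200, 6000)]


def cents_per_minute(price_usd: float, minutes: int) -> float:
    """Return the effective cost of one Pro minute, in cents."""
    return price_usd * 100 / minutes


for price, minutes in BUNDLES:
    rate = cents_per_minute(price, minutes)
    print(f"${price} for {minutes:,} minutes = {rate:.2f} cents per minute")
```

By this math, the smallest bundle works out to roughly 8 cents per minute and the largest to roughly 3 cents, so heavy users pay well under half the per-minute rate of occasional ones.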

You’re not seeing double: XRAI and Leion use the same manufacturer for their hardware, and the glasses weigh the same. The battery spec is also similar, with up to eight hours on the frames and another 96 hours when recharging with the case. XRAI claims its display is significantly brighter than competitors’, but I didn’t see much of a difference in day-to-day use.

The features and user experience are roughly the same, though Leion’s teleprompter feature isn’t implemented in XRAI’s app, and it doesn’t offer AI summaries of conversations. I also didn’t find XRAI’s app as user-friendly as Leion’s version, particularly when trying to switch among the admittedly exhaustive 300 language options. Only 20 of these are included without ponying up for a Pro subscription, which is sold both by the month and minute: $20/month gets you a max of 600 upgraded transcription minutes and 300 translation minutes; $40/month gets you 1,800 and 1,200 minutes, respectively. On the plus side, XRAI does have a rudimentary offline mode that works better than most. For prescription lenses, add $140 to $170.

AirCaps does not make its own prescription lenses. Instead, you must purchase a pair of $39 “lens holders” and take them to an optician if you want prescription inserts. I was unable to test these with prescription lenses and ultimately had to try them out over my regular glasses, which worked well enough for short-term testing. Frames weigh a hefty 53 grams without add-on lenses; the company couldn’t tell me how much extra weight prescription lenses would add to that, but it’s safe to say these are the bulkiest and heaviest captioning glasses on the market. Despite the weight, they only carry two to four hours of battery life, with 10 or so recharges packed into the comically large case. Another option is to clip one of AirCaps’ rechargeable 13-gram Power Capsules ($79 for two) to one of the arms, which can provide 12 to 18 extra hours of juice.

The AirCaps feature list and interface make it perhaps the simplest of all these devices, with just a single button to start and stop recording. Transcriptions and translations are available for free in nine languages. For $20/month, you can add the Pro package, which offers better accuracy, access to more than 60 languages, and the option to generate AI summaries on demand (though only if recordings are long enough). As a bonus: Five hours of Pro features are free each month. Offline mode works pretty well, too. The only bad news is that these bulky frames just aren’t comfortable enough for long-term wear.

Captify’s glasses, the most expensive option on the market (up to $1,399 with prescription lenses!), weigh a relatively svelte 40 grams (52 grams with lenses) and offer about four hours of battery life. There’s no charging case; the glasses must be charged directly using the included USB-connected dongle.

The glasses are extremely simple, offering transcription and translation features—with support for about 80 languages, which is impressive. I unfortunately found the prescription lenses Captify sent to be the blurriest of the bunch, making the captions comparatively hard to read. And while the device supports offline transcription, performance suffered badly when disconnected from the internet. I couldn’t get translations to work at all when offline. For $15/month, you get better accuracy and speaker differentiation, and access to AI summaries of conversations. Prescription lenses cost between $99 and $600.

-

Politics7 days ago

Politics7 days agoIran weighs US reply delivered via Pakistan as Trump signals opposition to deal terms

-

Fashion1 week ago

Fashion1 week agoUS’ J.Jill, Inc. appoints Kimberly Wallengren as CMO

-

Fashion1 week ago

Fashion1 week agoAAFA pushes for swift US House passage of key anti-counterfeiting law

-

Fashion1 week ago

Fashion1 week agoUS cotton export sales show strong recovery, Upland rise 36%

-

Sports1 week ago

Sports1 week agoSajid Ali Sadpara summits world’s fifth-highest peak

-

Tech6 days ago

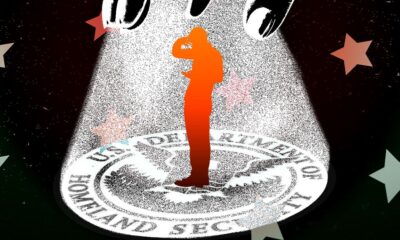

Tech6 days agoDHS Demanded Google Surrender Data on Canadian’s Activity, Location Over Anti-ICE Posts

-

Fashion1 week ago

Fashion1 week agoICE cotton witnesses sharp rise on weaker dollar, strong exports

-

Business1 week ago

Business1 week agoUK airlines to be allowed to cancel flights in advance over fuel shortages