Tech

Method teaches generative AI models to locate personalized objects

Say a person takes their French Bulldog, Bowser, to the dog park. Identifying Bowser as he plays among the other canines is easy for the dog owner to do while onsite.

But if someone wants to use a generative AI model like GPT-5 to monitor their pet while they are at work, the model could fail at this basic task. Vision-language models like GPT-5 often excel at recognizing general objects, like a dog, but they perform poorly at locating personalized objects, like Bowser the French Bulldog.

To address this shortcoming, researchers from MIT and the MIT-IBM Watson AI Lab have introduced a new training method that teaches vision-language models to localize personalized objects in a scene.

Their method uses carefully prepared video-tracking data in which the same object is tracked across multiple frames. They designed the dataset so the model must focus on contextual clues to identify the personalized object, rather than relying on knowledge it previously memorized.

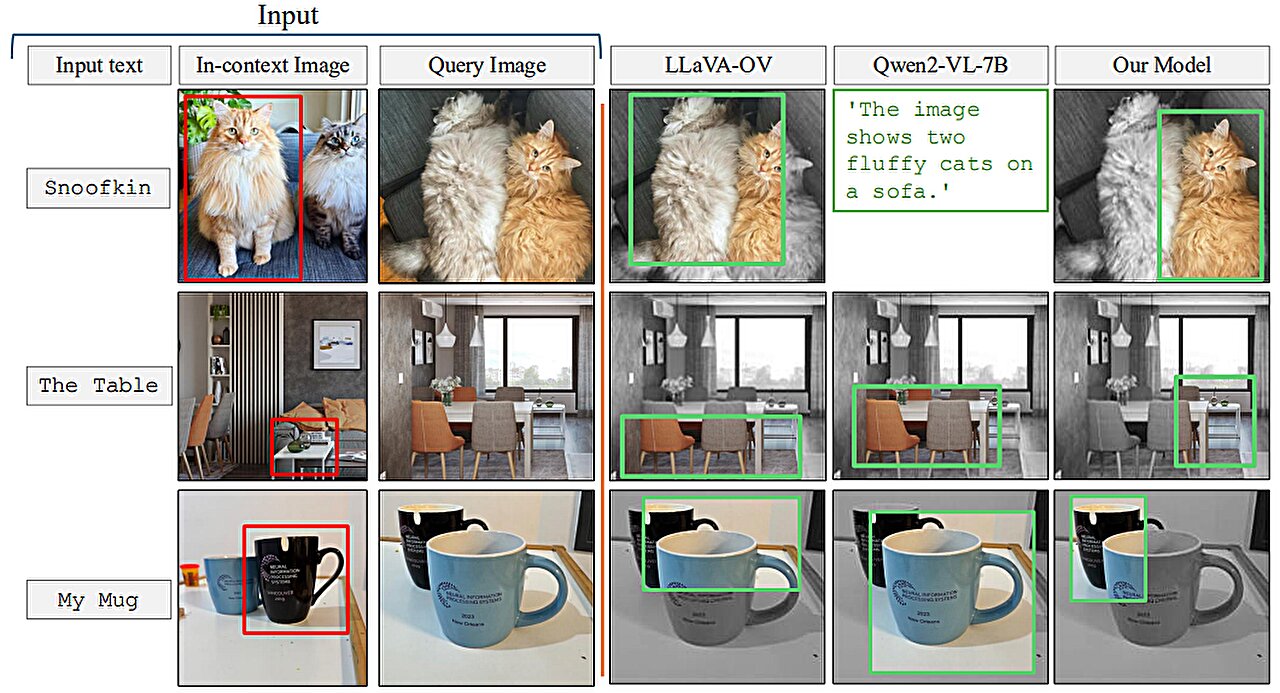

When given a few example images showing a personalized object, like someone’s pet, the retrained model is better able to identify the location of that same pet in a new image.

Models retrained with their method outperformed state-of-the-art systems at this task. Importantly, their technique leaves the rest of the model’s general abilities intact.

This new approach could help future AI systems track specific objects across time, like a child’s backpack, or localize objects of interest, such as a species of animal in ecological monitoring. It could also aid in the development of AI-driven assistive technologies that help visually impaired users find certain items in a room.

“Ultimately, we want these models to be able to learn from context, just like humans do. If a model can do this well, rather than retraining it for each new task, we could just provide a few examples and it would infer how to perform the task from that context. This is a very powerful ability,” says Jehanzeb Mirza, an MIT postdoc and senior author of a paper on this technique posted to the arXiv preprint server.

Mirza is joined on the paper by co-lead authors Sivan Doveh, a graduate student at the Weizmann Institute of Science, and Nimrod Shabtay, a researcher at IBM Research; James Glass, a senior research scientist and the head of the Spoken Language Systems Group in the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL); and others. The work will be presented at the International Conference on Computer Vision (ICCV 2025), held Oct. 19–23 in Honolulu, Hawai’i.

An unexpected shortcoming

Researchers have found that large language models (LLMs) can excel at learning from context. If researchers feed an LLM a few examples of a task, such as addition problems, it can learn to answer new addition problems based on the context provided.
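To make the idea concrete, the sketch below assembles a few-shot text prompt from worked addition examples and leaves the final answer blank for the model to complete. The function name `build_few_shot_prompt` and the `Q:`/`A:` layout are illustrative assumptions, not a prompt format used by the researchers.

```python
def build_few_shot_prompt(examples, query):
    """Assemble a few-shot prompt: solved examples first, then the unsolved query.

    examples: list of (question, answer) pairs shown to the model as context.
    query: the new question the model should answer by analogy.
    """
    lines = []
    for question, answer in examples:
        lines.append(f"Q: {question}")
        lines.append(f"A: {answer}")
    # The final question has no answer; the model is expected to infer
    # the task (here, addition) from the solved examples above it.
    lines.append(f"Q: {query}")
    lines.append("A:")
    return "\n".join(lines)


examples = [("2 + 3", "5"), ("10 + 7", "17"), ("41 + 1", "42")]
prompt = build_few_shot_prompt(examples, "8 + 6")
print(prompt)
```

Nothing in the prompt states that the task is addition; a model with in-context learning ability has to work that out from the examples alone.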

A vision-language model (VLM) is essentially an LLM with a visual component connected to it, so the MIT researchers thought it would inherit the LLM’s in-context learning capabilities. But this is not the case.

“The research community has not been able to find a black-and-white answer to this particular problem yet. The bottleneck could arise from the fact that some visual information is lost in the process of merging the two components together, but we just don’t know,” Mirza says.

The researchers set out to improve VLMs’ ability to perform in-context localization, which involves finding a specific object in a new image. They focused on the data used to retrain existing VLMs for a new task, a process called fine-tuning.

Typical fine-tuning data are gathered from random sources and depict collections of everyday objects. One image might contain cars parked on a street, while another includes a bouquet of flowers.

“There is no real coherence in these data, so the model never learns to recognize the same object in multiple images,” he says.

To fix this problem, the researchers developed a new dataset by curating samples from existing video-tracking data. These data are video clips showing the same object moving through a scene, like a tiger walking across a grassland.

They cut frames from these videos and structured the dataset so each input would consist of multiple images showing the same object in different contexts, with example questions and answers about its location.
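One way to picture that structure is the sketch below. It turns frames of a single tracked object into context examples (image, question, answer) plus one held-out query frame. The class and field names, the question template, and the `(x_min, y_min, x_max, y_max)` box format are all assumptions for illustration. The article does not describe the researchers’ actual data schema.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Bounding box as (x_min, y_min, x_max, y_max) in pixels (an assumed convention).
Box = Tuple[int, int, int, int]


@dataclass
class Frame:
    image_path: str
    box: Box  # location of the tracked object in this frame


@dataclass
class InContextSample:
    context: List[Tuple[str, str, str]]  # (image, question, answer) examples
    query_image: str
    query_question: str
    target_answer: str


def build_sample(frames: List[Frame], object_name: str) -> InContextSample:
    """Turn frames of one tracked object into a localization sample.

    Every frame except the last becomes a context example with its answer;
    the last frame becomes the query the model must answer.
    """
    if len(frames) < 2:
        raise ValueError("need at least one context frame and one query frame")
    question = f"Where is {object_name} in this image?"
    context = [(f.image_path, question, str(f.box)) for f in frames[:-1]]
    query = frames[-1]
    return InContextSample(context, query.image_path, question, str(query.box))


# Hypothetical frames cut from one clip of the same tiger.
frames = [
    Frame("clip/000.jpg", (10, 20, 110, 90)),
    Frame("clip/030.jpg", (60, 25, 160, 95)),
    Frame("clip/060.jpg", (120, 30, 220, 100)),
]
sample = build_sample(frames, "the tiger")
print(sample.query_question, sample.target_answer)
```

Because every frame shows the same object, the only consistent way to answer the query is to match it against the context examples.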

“By using multiple images of the same object in different contexts, we encourage the model to consistently localize that object of interest by focusing on the context,” Mirza explains.

Forcing the focus

But the researchers found that VLMs tend to cheat. Instead of answering based on context clues, they will identify the object using knowledge gained during pretraining.

For instance, since the model already learned that an image of a tiger and the label “tiger” are correlated, it could identify the tiger crossing the grassland based on this pretrained knowledge, instead of inferring from context.

To solve this problem, the researchers used pseudo-names rather than actual object category names in the dataset. In this case, they changed the name of the tiger to “Charlie.”
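The sketch below shows the substitution idea in its simplest form. Every mention of the real category name in a sample’s questions and answers is swapped for a single pseudo-name chosen for that sample. The name pool and the regex-based replacement are assumptions. The article says only that category names such as “tiger” were replaced with names such as “Charlie.”

```python
import random
import re

# Hypothetical pool of neutral names; the article mentions only "Charlie".
PSEUDO_NAMES = ["Charlie", "Milo", "Juno", "Pixel", "Ziggy"]


def anonymize(texts, category, rng):
    """Replace each whole-word mention of `category` (with an optional
    leading "the") by one pseudo-name, used consistently across all texts."""
    name = rng.choice(PSEUDO_NAMES)
    pattern = re.compile(rf"\b(?:the\s+)?{re.escape(category)}\b", flags=re.IGNORECASE)
    return name, [pattern.sub(name, t) for t in texts]


rng = random.Random(0)
name, qa = anonymize(["Where is the tiger?", "The tiger is at the left edge."], "tiger", rng)
print(name, qa)
```

Using the same pseudo-name throughout a sample keeps the context examples and the query consistent, while the name itself carries no category information the model could have memorized.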

“It took us a while to figure out how to prevent the model from cheating. But we changed the game for the model. The model does not know that ‘Charlie’ can be a tiger, so it is forced to look at the context,” he says.

The researchers also faced challenges in finding the best way to prepare the data. If the frames are too close together, the background does not change enough to provide diverse data.
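A simple way to act on that observation is to enforce a minimum gap between the frames drawn from each clip, as in the sketch below. The even-spacing strategy, the function name, and the `min_gap` threshold are hypothetical. The article does not say how far apart the researchers’ frames were or how they were chosen.

```python
def sample_frame_indices(num_frames, k, min_gap):
    """Pick k frame indices spread evenly across a clip, at least min_gap apart.

    Returns None if the clip is too short to satisfy the spacing constraint,
    so the caller can skip that clip instead of using near-duplicate frames.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    if k == 1:
        return [0] if num_frames > 0 else None
    span = num_frames - 1
    step = span // (k - 1)  # largest even spacing that fits inside the clip
    if step < min_gap:
        return None
    return [i * step for i in range(k)]


print(sample_frame_indices(100, 4, 20))
print(sample_frame_indices(30, 4, 20))
```

Spacing frames as widely as the clip allows gives the background the most room to change between the context images and the query.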

In the end, fine-tuning VLMs with this new dataset improved accuracy at personalized localization by about 12% on average. When they included the dataset with pseudo-names, the performance gains reached 21%.

As model size increases, their technique leads to greater performance gains.

In the future, the researchers want to study possible reasons VLMs don’t inherit in-context learning capabilities from their base LLMs. In addition, they plan to explore additional mechanisms to improve the performance of a VLM without the need to retrain it with new data.

“This work reframes few-shot personalized object localization—adapting on the fly to the same object across new scenes—as an instruction-tuning problem and uses video-tracking sequences to teach VLMs to localize based on visual context rather than class priors. It also introduces the first benchmark for this setting with solid gains across open and proprietary VLMs.

“Given the immense significance of quick, instance-specific grounding—often without finetuning—for users of real-world workflows (such as robotics, augmented reality assistants, creative tools, etc.), the practical, data-centric recipe offered by this work can help enhance the widespread adoption of vision-language foundation models,” says Saurav Jha, a postdoc at the Mila-Quebec Artificial Intelligence Institute, who was not involved with this work.

More information:

Sivan Doveh et al, Teaching VLMs to Localize Specific Objects from In-context Examples, arXiv (2025). DOI: 10.48550/arxiv.2411.13317

This story is republished courtesy of MIT News (web.mit.edu/newsoffice/), a popular site that covers news about MIT research, innovation and teaching.

Citation:

Method teaches generative AI models to locate personalized objects (2025, October 16), retrieved 16 October 2025 from https://techxplore.com/news/2025-10-method-generative-ai-personalized.html



Port of Tyne advances connected mobility, autonomous logistics | Computer Weekly

The North East Automotive Alliance (NEAA), alongside the Port of Tyne, autonomous driving technology provider Oxa and a consortium of leading industry and academic partners, has delivered the Port‑Connected and Automated Logistics (P-CAL) project.

The Port of Tyne is one of the UK’s major deep-sea ports handling specialised bulk and containerised products, alongside delivery logistics, and assisting growing passenger numbers via its International Passenger Terminal.

Overall, the Port of Tyne, one of the UK’s largest trust ports, adds £658m to the local economy and supports 10,400 jobs directly and indirectly. Fully self-financing, it runs on a commercial basis, reinvesting all of its profits back into facilities along the River Tyne for the benefit of the North East and its stakeholders.

Delivered and funded through the UK government’s CAM (Connected and Automated Mobility) Pathfinder programme, P-CAL was delivered by NEAA – a collaborative, industry-led cluster dedicated to fostering a competitive and sustainable environment for businesses – and its partners to demonstrate autonomous container transport at the Port of Tyne. The initiative deployed a fully autonomous terminal tractor and a secure mesh communication network to move containers between the dockside and the container compound, creating a UK first in waterside port automation.

P-CAL was designed to push the boundaries of autonomous logistics by deploying and validating a fully autonomous terminal tractor in a live port environment. Building on the North East’s earlier 5G CAL and V‑CAL initiatives – which looked to assess the commercial viability of deploying autonomous yard tractors on the Vantec-Nissan route in Sunderland – the project worked to move autonomous technology from proof‑of‑concept trials into a complex, safety‑critical, real‑world operational setting.

Over the course of the project, the consortium is said to have successfully designed, integrated and tested an autonomous container transport service capable of operating on a busy quayside. The scope of work included the deployment of a fully autonomous terminal tractor; a resilient mesh communication network; the capability to integrate with terminal operating systems; real‑time coordination with live crane movements; and the implementation of a cyber security framework to enable safe, remote and automated operations.

The system was developed and tested in a newly defined and highly complex operational design domain. This is said to reflect the realities of a working port environment where traffic density, variable conditions and human interaction present unique challenges.

The regional and national partnership delivering the project combined expertise across autonomous systems, logistics, cyber security, academia, legal compliance and industrial operations. The consortium believes its project has generated valuable technical, operational and regulatory insight that will inform the future deployment of CAM services across ports, logistics hubs and industrial sites nationwide.

By augmenting the capability of the existing workforce, the consortium says, the project has shown that autonomous systems can take on repetitive or more hazardous tasks, allowing skilled workers to focus on higher-value roles. This is seen as particularly vital for the North East, helping the region remain at the forefront of industrial evolution while creating a more resilient and tech-enabled labour market.

“Delivering autonomous logistics in a live port environment has been a major step forward for the sector,” said Graeme Hardie, operations director at the Port of Tyne. “P-CAL has shown what’s possible when innovation is applied to real operational challenges, improving safety, efficiency and sustainability. The Port of Tyne is proud to have played a leading role in a project that will influence how ports across the UK and beyond approach automation.”

Oxa founder and CEO Paul Newman added: “The success of P-CAL proves how autonomy will enable the future of resilient logistics operations. Through the project, we’ve demonstrated that existing work vehicles can be turned into a digital workforce – successfully completing autonomous container movements in a dynamic quayside environment, while providing worksite intelligence necessary for real-time industrial optimisation. P-CAL provides a blueprint for how ports and industrial hubs worldwide can deploy autonomous technology to drive productivity, efficiency and safety.”

CAM Pathfinder is funded by the UK government, delivered by the Department for Business and Trade in partnership with automated mobility firm Zenzic and Innovate UK, the UK’s national innovation agency.

Zenzic programme director Mark Cracknell said: “P-CAL is a strong example of how government and industry can work together to accelerate the commercial readiness of CAM technologies. Projects like this are vital in turning innovation into deployment, creating high‑value jobs and ensuring the UK remains globally competitive in connected and automated mobility. As the project closes, the outcomes and learning from P-CAL will continue to shape future CAM initiatives, investment opportunities and policy development, both regionally and nationally.”

The next phase of the project will examine how the system performs across broader port operations, including the added pressures of multiple vehicles working alongside people, equipment and live commercial activity.


‘STAGED’: Conspiracy Theories Are Everywhere Following White House Correspondents’ Dinner Shooting

In the immediate aftermath of the attack on the White House Correspondents’ Dinner on Saturday night, influencers, pundits, and random posters lit up social media platforms like X, Bluesky, and Instagram with conspiracy theories about the attack and the alleged shooter.

Both left- and right-wing accounts claimed, without evidence, that the attack was staged.

President Donald Trump, Vice President JD Vance, and dozens of other high-profile administration officials and journalists were attending the dinner at the Hilton hotel in Washington, DC, when a suspect, later identified by media reports as Cole Tomas Allen from California, allegedly ran past security towards the event. He was detained by law enforcement while the president and vice president were evacuated. Police said that they believe Allen acted alone, but did not expand on who his intended target was or what his motive may have been. “We believe the suspect was targeting administration officials,” acting attorney general Todd Blanche told NBC’s Meet the Press on Sunday morning.

On Bluesky, which has a predominantly left-leaning user base, many people simply wrote the word “STAGED” over and over again, echoing the response to the Trump assassination attempt in Butler, Pennsylvania in 2024.

On X, many claimed the shooting was staged as a way to bolster support for Trump’s plan to build a new ballroom in the White House. The president referenced the ballroom in a press conference after the incident and a Truth Social post on Sunday morning. Many prominent online Trump boosters echoed the need for the ballroom, including far-right podcaster Jack Posobiec, Libs of TikTok creator Chaya Raichik, and Tom Fitton, the right-wing activist who runs Judicial Watch.

Their quick response, conspiracy theorists claimed, was evidence of a coordinated campaign following the shooting. “Is this another staged event,” one X user asked in a post that has been viewed more than 5 million times.

Other social media users who claimed the incident was staged pointed to a Fox News clip that featured the station’s White House correspondent Aishah Hasnie speaking from the Hilton hotel. Hasnie told viewers that prior to the shooting, press secretary Karoline Leavitt’s husband allegedly told her “you need to be very safe,” before the call was cut off.

“Fox News just cut one of their reporters off as they seemed to indicate the shooting was a pre-planned false flag,” one X user wrote in a post that has been viewed more than 2 million times. Hasnie later clarified in an X post that her cell service had cut out in a location with notoriously bad service, adding: “He was telling me to be careful with my own safety because the world is crazy. He was expressing his concern for my safety.”

“I don’t want to be fomenting conspiracies,” wrote Angelo Carusone, the chair and president of Media Matters, on Bluesky about the Fox News interview. “But I mean…this was super weird. Super weird.”

Leavitt herself was also the focus of conspiracy theories after she said “shots will be fired” in an interview ahead of the dinner, referring to the jokes Trump was scheduled to deliver. Following the attack, X users claimed the comment was “strange,” “sus,” or a “curious choice of words,” while sharing memes that suggested the shooting was staged. At least one mainstream outlet appeared to amplify the conspiracy theory as well, describing Leavitt’s comment as “eerie” and “bizarre.”


Your Kindle Is Better With Accessories. Here’s Where to Start

Kindle Holders

Hate holding up your Kindle? Or struggle with chronic pain that makes holding it feel terrible? These holders will literally take the weight out of your hands.

A Freestanding Charger

Looking to keep your Kindle charged without adding another cord to the floor of your desk or bedside table? Same. Here’s a more stylish solution if you have one of the Signature editions.

A Kindle Page Turner

The hottest new item for a Kindle lover is a page turner. They’re especially handy with holders like the ones above, where your hands aren’t already on the device, and they can be a great accessibility aid for readers with different needs.

My biggest irritation with these devices so far is that you have to charge them both individually, and if one runs out of battery, the whole thing is useless. I also don’t love that the turner does tend to block at least one letter while I read, and you can’t place it on the lower or upper margins since it’ll activate the menus instead of turning the page. Still, it makes reading ultra comfortable, especially for my strained wrists.

Here’s my favorite one so far, which has been solid at holding a charge. Next, I’m testing this $15 model, which uses a wearable ring clicker instead of a remote.
