Tech

Historical images made with AI recycle colonial stereotypes and bias—new research

Generative AI has revolutionized how we make and consume images. Tools such as Midjourney, DALL-E and Sora can now conjure anything, from realistic photos to oil-like paintings—all from a short text prompt.

These images circulate through social media in ways that make their artificial origins difficult to discern. But the ease of producing and sharing AI imagery also comes with serious social risks.

Studies show that by drawing on training data scraped from online and other digital sources, generative AI models routinely mirror sexist and racist stereotypes—portraying pilots as men, for example, or criminals as people of color.

My soon-to-be-published new research finds generative AI also carries a colonial bias.

When prompted to visualize Aotearoa New Zealand’s past, Sora privileges the European settler viewpoint: pre-colonial landscapes are rendered as empty wilderness, Captain Cook appears as a calm civilizer, and Māori are cast as timeless, peripheral figures.

As generative AI tools become increasingly influential in how we communicate, such depictions matter. They naturalize myths of benevolent colonization and undermine Māori claims to political sovereignty, redress and cultural revitalization.

‘Sora, what did the past look like?’

To explore how AI imagines the past, OpenAI’s text-to-image model Sora was prompted to create visual scenes from Aotearoa New Zealand’s history, from the 1700s to the 1860s.

The prompts were deliberately left open-ended—a common approach in critical AI research—to reveal the model’s default visual assumptions rather than prescribe what should appear.

Because generative AI systems operate on probabilities, predicting the most likely combination of visual elements based on their training data, the results were remarkably consistent: the same prompts produced near-identical images, again and again.
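A toy sketch can make that consistency concrete. It assumes, purely for illustration, that a prompt maps to a skewed probability distribution over visual motifs. The motif names and weights below are invented and are not measurements of Sora or its training data.

```python
import random
from collections import Counter

# Hypothetical weights for how strongly each motif is associated with a
# prompt such as "New Zealand in the 1700s". Invented for illustration only.
MOTIF_WEIGHTS = {
    "empty wilderness": 0.85,
    "cultivated gardens": 0.08,
    "fortified pā": 0.05,
    "coastal settlement": 0.02,
}


def sample_motifs(n_images, seed=0):
    """Draw one dominant motif per generated image from the toy distribution."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    motifs = list(MOTIF_WEIGHTS)
    weights = list(MOTIF_WEIGHTS.values())
    return Counter(rng.choices(motifs, weights=weights, k=n_images))


counts = sample_motifs(1000)
for motif, count in counts.most_common():
    print(f"{motif}: {count}")
```

When one motif dominates the distribution, repeated sampling keeps returning it, which is one plausible reading of why the same prompt yields near-identical scenes.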

Two examples help illustrate the kinds of visual patterns that kept recurring.

In Sora’s vision of “New Zealand in the 1700s,” a steep forested valley is bathed in golden light, with Māori figures arranged as ornamental details. There are no food plantations or pā fortifications, only wilderness awaiting European discovery.

This aesthetic draws directly on the Romantic landscape tradition of 19th-century colonial painting, such as the work of John Gully, which framed the land as pristine and unclaimed (so-called terra nullius) to justify colonization.

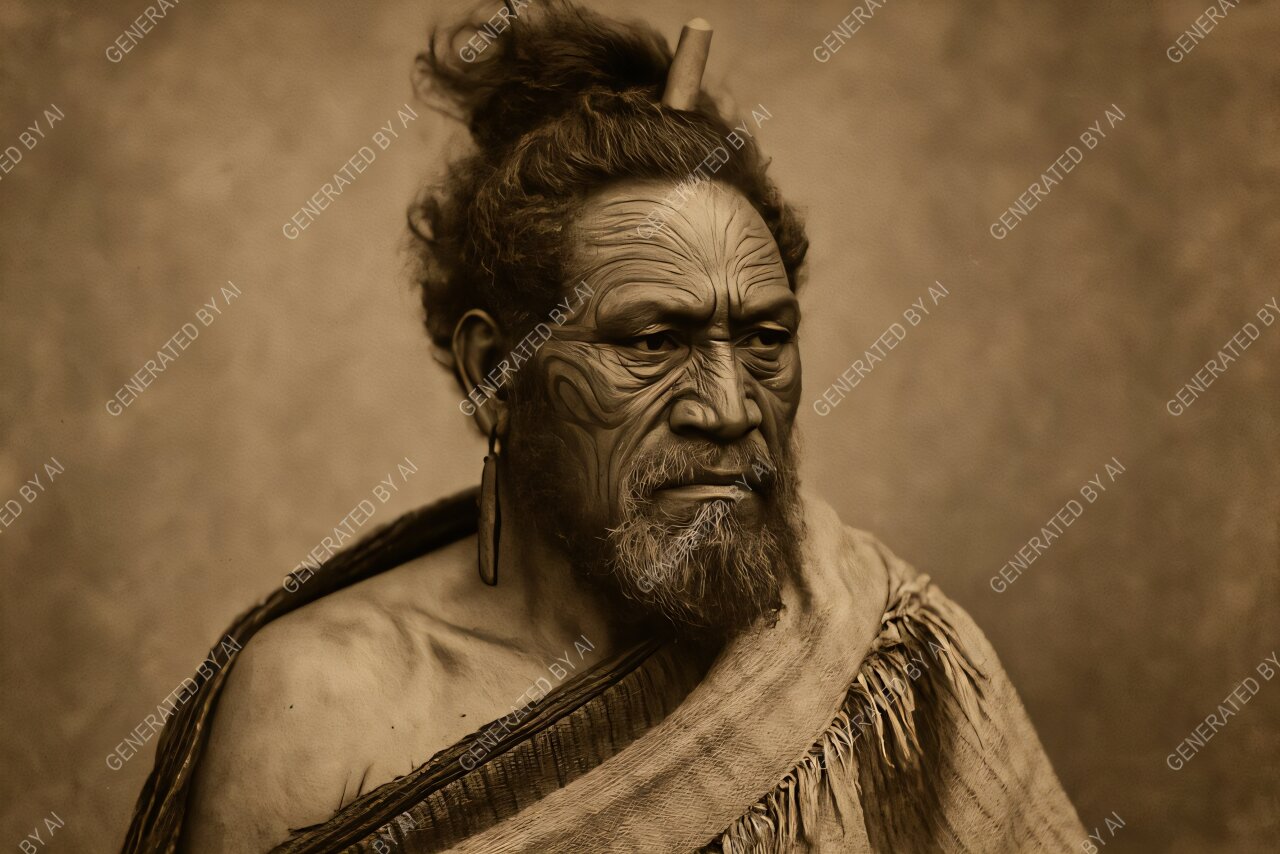

When asked to portray “a Māori in the 1860s,” Sora defaults to a sepia-toned studio portrait: a dignified man in a cloak, posed against a neutral backdrop.

The resemblance to cartes de visite photographs of the late 19th century is striking. Such portraits were typically staged by European photographers, who provided props to produce an image of the “authentic native.”

It’s revealing that Sora instinctively reaches for this format, even though the 1860s were defined by armed and political resistance by Māori communities, as colonial forces sought to impose British authority and confiscate land.

Recycling old sources

Visual imagery has always played a central role in legitimizing colonization. In recent decades, however, this colonial visual regime has been steadily challenged.

As part of the Māori rights movement and a broader historical reckoning, statues have been removed, museum exhibitions revised, and representations of Māori in visual media have shifted.

Yet the old imagery has not disappeared. It survives in digital archives and online museum collections, often de-contextualized and lacking critical interpretation.

And while the precise sources of generative AI training data are unknown, it is highly likely these archives and collections form part of what systems such as Sora learn from.

Generative AI tools effectively recycle those sources, thereby reproducing the very conventions that once served the project of empire.

But imagery that portrays colonization as peaceful and consensual can blunt the perceived urgency of Māori claims to political sovereignty and redress through institutions such as the Waitangi Tribunal, as well as calls for cultural revitalization.

By rendering Māori of the past as passive, timeless figures, these AI-generated visions obscure the continuity of the Māori self-determination movement for tino rangatiratanga and mana motuhake.

AI literacy is the key

Across the world, researchers and communities are working to decolonize AI, developing ethical frameworks that embed Indigenous data sovereignty and collective consent.

Yet visual generative AI presents distinct challenges, because it deals not only in data but also in images that shape how people see history and identity. Technical fixes can help, but they each have their limitations.

Extending datasets to include Māori-curated archives or images of resistance might diversify what the model learns—but only if done under principles of Indigenous data and visual sovereignty.

Addressing the bias in algorithms could, in theory, balance what Sora shows when prompted about colonial rule. But defining “fair” representation is a political question, not just a technical one.

Filters might block the most biased outputs, but they can also erase uncomfortable truths, such as depictions of colonial violence.

Perhaps the most promising solution lies in AI literacy. We need to understand how these systems think, what data they draw on, and how to prompt them effectively.

Approached critically and creatively—as some social media users are already doing—AI can move beyond recycling colonial tropes to become a medium for re-seeing the past through Indigenous and other perspectives.

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Citation:

Historical images made with AI recycle colonial stereotypes and bias—new research (2025, October 26)

retrieved 26 October 2025

from https://techxplore.com/news/2025-10-historical-images-ai-recycle-colonial.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.

In a Big Reversal, Zohran Mamdani Tells NYC Agencies to Use TikTok

New York City mayor Zohran Mamdani, who rode a social media-fueled campaign to Gracie Mansion, is reversing an Eric Adams–era directive barring TikTok from government-owned devices. Local agencies will now be able to post about their projects on the app, though with new guardrails to protect city networks.

“The Mamdani administration is committed to using every tool in our toolbox to communicate with New Yorkers,” says the email to agencies, obtained by WIRED. “At a moment when people are turning to city government for information about free services, emergency situations, upcoming events, and more, we want to open up new avenues of communication with the public and help deliver the information New Yorkers need.”

In August 2023, then-mayor Adams barred the use of TikTok on government devices, joining the state and federal agencies that at the time deemed the app a major security risk. Adams spokesperson Jonah Allon said then that the city’s Cyber Command office had decided that TikTok, which was owned by the China-based company ByteDance, “posed a security threat to the city’s technical networks and directed its removal from city-owned devices.”

The directive resulted in a number of popular city-run accounts shutting down, including accounts for the NYC Departments of Sanitation and Parks and Recreation. As of Tuesday morning, the accounts’ bios read, “This account was operated by NYC until August 2023. It’s no longer monitored.”

Now, these TikTok accounts will be allowed to reopen with a few new rules aimed at protecting the security of NYC’s networks and devices while allowing agencies to communicate with citizens on the popular app. In order to use TikTok, agencies will be required to use separate, government-issued devices for the app that “cannot contain sensitive or restricted data, and they cannot be used for email, internal systems, or privileged access,” according to the email to agencies. Agencies will designate specific staff from media and press offices to run the TikTok accounts with city government emails, not personal ones.

“In a fragmented media landscape, more and more people—especially younger people—are looking beyond the four corners of their television screen to stay informed,” Mamdani said in a statement to WIRED. “Our responsibility is simple: Meet people where they are. That means stepping outside our comfort zones and communicating in ways that reflect how New Yorkers actually live, work, and connect.”

Mamdani’s rule reversal follows a November election campaign that relied heavily on social media for voter outreach. Mamdani used TikTok to recruit volunteers and amplify his policy platform. Over his first few months in office, he has continued to use social media platforms, publishing a variety of public-service announcements related to city-run programs.

Ahead of dangerous winter weather in January, Mamdani published a video to the official @nycmayor account on Instagram asking New Yorkers to sign up for the city’s free emergency communications program, NotifyNYC. The program netted more than 32,000 new subscribers in the four days after the video was released, according to stats provided by Mamdani’s office. Last year, New York City Emergency Management ran a $240,000 advertising round for NotifyNYC, acquiring around 48,000 new subscribers. Mamdani also created a handful of videos asking New Yorkers to join a Department of Sanitation snow-shoveling program. Around 5,000 people signed up, tripling the number previously enrolled in the program.

The situation has also changed for the app. In January 2026, TikTok finalized a deal with the Trump administration to form a new US-based version of the company run by American investors, including Oracle. The consortium of American investors staved off a nationwide ban of the app.

The $1 Million Aston Martin Valhalla Makes You Drive Better Than You Thought Possible

Yes, it’s a supercar, but it’s also sold very much as a track and road car, one that accommodates a passenger, all of which means road trips and weekend-away stays are very much possible. Well, they would be if there were anywhere at all to store luggage. Lamborghini managed to find some luggage space in its Revuelto design, so there’s no excuse here, really.

The design department otherwise has had a field day. Top-mounted exhausts, dihedral doors, and even an F1-style roof snorkel to accompany that air-braking rear wing deliver an exterior that is nothing short of arresting. Somehow, none of this looks garish or out of place on the Valhalla in person. Everything has a purpose, and nothing comes across as flexing or showing off. There’s a cohesion to the Valhalla aesthetic that others might not manage.

Inside, it is much more comfortable than you would imagine. The one-piece carbon-fiber seats look like they are going to be tricky, but on my two-hour road drive, they were supportive and, yes, comfortable. Visibility is surprisingly good, but a camera system is required for the rear view mirror because there’s no rear window. The rest of the interior is minimal, but the steering wheel is excellent (which, as Jony Ive will tell you, is no mean feat) and neatly signals some motorsport cool.

Photograph: Jeremy White

The one gripe for the interior is the dash and center screens, which are clear and responsive, and offer up the usual smartphone mirroring options, but they aren’t luxurious. We’re seeing a lot more effort these days with screen design from Ferrari’s new Luce as well as BMW in the iX3 and i3, but here, Aston has decidedly functional, off-the-shelf-looking displays. If I were parting with a million dollars, I might want more consideration here.

Odin’s Beard

On the road and track is where the Valhalla excels. Impressive doesn’t come close, and, despite the delays, the patience shown by Aston has clearly paid dividends. The ride is superb, as well as being ridiculously quick. The chassis is exceptionally agile, making the car feel alert and light. There are enormous reserves of grip to match the formidable braking and acceleration, and as a result, this is a car that flatters you; it effortlessly seduces you into driving much harder and better than you think you can, all while giving you levels of confidence you wouldn’t think possible.

I’ve driven the Lamborghini Revuelto, and yes, it’s exciting, but also there’s a part of you that is wary—the part that knows that if you don’t keep your wits about you 100 percent of the time, things will go bad very quickly. The Valhalla offers up all of that fun and excitement, but almost none of the trepidation. It is gratifying and intuitive to drive. Anyone can fully enjoy this car, not merely those used to track days. Some will say the engine note is not as full-throated as might be expected in such a car, but others will be having so much fun they won’t care. Nor should they.

AI Has Flooded All the Weather Apps

You may have noticed a drop of AI in your weather app lately. As companies race to infuse artificial intelligence into every product, the wave has come for the humble weather app.

The Weather Company, operator of the Weather Channel, today released a revamped version of its Storm Radar app, featuring an AI-powered Weather Assistant that lets users customize how they view forecasts and weather maps, toggling between layers like radar, temperature, and weather conditions like wind and lightning.

It can also sync with other apps, like your calendar, to send text notifications and weather summaries that tie info about the upcoming weather into your daily plans. You can stick a voice on it to talk like an old-timey radio weatherman, if you’re into that. Like most weather apps, it gets its data from the National Oceanic and Atmospheric Administration (NOAA) and the National Weather Service (NWS).

The app costs $4 per month. It is available on iOS only for now, but the company says an Android version is coming eventually.

“We wanted to build an experience that would be a weather level-up for anybody, really, from a casual observer to a seasoned storm chaser,” says Joe Koval, a senior meteorologist at the Weather Company. “If you’re looking for advice on when the weather will be good to walk your dog tomorrow, you no longer have to look at a bunch of different disparate weather data elements and try to figure out the answer to that question yourself.”

You can find the weather on your phone already, of course. Android and iOS devices typically place the weather prominently beside the time. Google and Apple have both fused their weather apps into their smartphones directly. AI features have since been infused, offering insights and summaries about the day to come.

But there are third-party weather apps galore, like Storm Radar, Carrot Weather, Rain Viewer, and Acme Weather, an app from the creators of the former Dark Sky app. New weather apps like Rainbow Weather aim to be AI-first. Weather services are also being integrated directly into AI chatbots: AccuWeather, for example, recently launched an app inside OpenAI’s ChatGPT.

“Everyone has their idea of what they want in a weather app, what data they’re interested in, how they’re interested in it being presented,” says Adam Grossman, a founder of the Dark Sky app. “How do you build a single weather app that works for everybody?”

Dark Sky, one of the most popular iOS weather apps, was bought by Apple in 2020 and merged into its Apple Weather service. Grossman eventually left Apple to start Acme Weather, with the goal of making a weather prediction service that better telegraphs the uncertainty of forecasting.

“No matter how good your forecast is, you’re going to be wrong,” Grossman says. “That’s something that weather apps traditionally haven’t done a great job of doing. Our approach is trying to figure out how to add those pieces of context back in.”

Repositories of weather information usually come from government sources, like NOAA or other global weather services that collect data from weather satellites, radar, weather balloons, and on-the-ground instruments. All that data is fed into weather prediction models that simulate the physics of the atmosphere. Those predictions are often generated by resource-intensive supercomputers, but machine learning models have trimmed that processing down, making predictions quicker. (Though sometimes less accurate, which can be accounted for by comparing multiple models.)

Weather apps like Storm Radar and Acme Weather translate that bounty of information by corroborating and compiling the models, then helping to create high-resolution maps and a visual representation of the data, an area where AI can also be particularly useful.
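As a rough illustration of what comparing multiple models can look like, the sketch below averages several forecasts and reports how far they disagree. The model names and rain probabilities are hypothetical, and this is not how Storm Radar or Acme Weather actually combine their sources.

```python
from statistics import mean, pstdev

# Hypothetical chance-of-rain forecasts (percent) for the same hour from
# three different prediction models. The names and values are made up.
forecasts = {"model_a": 40.0, "model_b": 55.0, "model_c": 70.0}


def summarize(model_forecasts):
    """Combine several forecasts into a consensus plus a disagreement measure."""
    values = list(model_forecasts.values())
    return {
        "consensus": mean(values),  # simple unweighted average
        "spread": pstdev(values),   # larger spread means less agreement
        "low": min(values),
        "high": max(values),
    }


summary = summarize(forecasts)
print(f"Chance of rain: about {summary['consensus']:.0f}% "
      f"(models range from {summary['low']:.0f}% to {summary['high']:.0f}%)")
```

Reporting the range alongside the average is one simple way an app could surface forecast uncertainty instead of a single confident number.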
