Tech

How do ‘AI detection’ tools actually work? And are they effective?

As nearly half of all Australians say they have recently used artificial intelligence (AI) tools, knowing when and how they’re being used is becoming more important.

Consultancy firm Deloitte recently partially refunded the Australian government after a report they published had AI-generated errors in it.

A lawyer also recently faced disciplinary action after false AI-generated citations were discovered in a formal court document. And many universities are concerned about how their students use AI.

Amid these examples, a range of “AI detection” tools have emerged to try to address people’s need for identifying accurate, trustworthy and verified content.

But how do these tools actually work? And are they effective at spotting AI-generated material?

How do AI detectors work?

Several approaches exist, and their effectiveness can depend on which types of content are involved.

Detectors for text often try to infer AI involvement by looking for “signature” patterns in sentence structure, writing style, and the predictability of certain words or phrases being used. For example, the use of “delves” and “showcasing” has skyrocketed since AI writing tools became more available.

However, the difference between AI and human patterns is getting smaller and smaller. This means signature-based tools can be highly unreliable.
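As a toy illustration of the signature idea, the sketch below counts how often a few marker words appear per 1,000 words of a text. The word list (seeded with the two examples above), the function name `marker_rate`, and the sample sentences are all assumptions made for illustration. Real detectors rely on much richer statistical models, and a high rate here proves nothing about who wrote a text.

```python
import re

# Hypothetical marker list, seeded with the two words mentioned above.
MARKER_WORDS = {"delves", "showcasing"}


def marker_rate(text: str, markers: set[str] = MARKER_WORDS) -> float:
    """Return marker-word occurrences per 1,000 words (0.0 for empty text)."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word in markers)
    return 1000 * hits / len(words)


if __name__ == "__main__":
    sample_a = "This report delves into the data, showcasing three key trends."
    sample_b = "We looked at the data and found three trends worth noting."
    print(round(marker_rate(sample_a), 1))
    print(round(marker_rate(sample_b), 1))
```

A human writer who happens to like these words would score just as high as a machine, which is one reason this kind of signal is weak on its own.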

Detectors for images sometimes work by analyzing embedded metadata which some AI tools add to the image file.

For example, the Content Credentials inspect tool allows people to view how a user has edited a piece of content, provided it was created and edited with compatible software. Like text, images can also be compared against verified datasets of AI-generated content (such as deepfakes).
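To make the metadata approach concrete, here is a minimal sketch that lists the text fields stored in a PNG file's `tEXt` chunks, one place where a generator could leave a provenance note. It is not the Content Credentials format, which is more elaborate. The `Software` value and the helper names are made up for the example.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def make_chunk(chunk_type: bytes, data: bytes) -> bytes:
    """Build one PNG chunk: length, type, data, CRC-32 of type + data."""
    crc = zlib.crc32(chunk_type + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + chunk_type + data + struct.pack(">I", crc)


def read_text_chunks(png_bytes: bytes) -> dict[str, str]:
    """Return the keyword/value pairs stored in a PNG's tEXt chunks."""
    if not png_bytes.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    fields = {}
    offset = len(PNG_SIGNATURE)
    while offset + 8 <= len(png_bytes):
        (length,) = struct.unpack(">I", png_bytes[offset:offset + 4])
        chunk_type = png_bytes[offset + 4:offset + 8]
        data = png_bytes[offset + 8:offset + 8 + length]
        if chunk_type == b"tEXt":
            keyword, _, value = data.partition(b"\x00")
            fields[keyword.decode("latin-1")] = value.decode("latin-1")
        if chunk_type == b"IEND":
            break
        offset += 12 + length  # 4 length + 4 type + data + 4 CRC
    return fields


if __name__ == "__main__":
    # Stand-in file: signature, one text chunk, end marker (no pixel data).
    demo = (
        PNG_SIGNATURE
        + make_chunk(b"tEXt", b"Software\x00ExampleImageGenerator 1.0")
        + make_chunk(b"IEND", b"")
    )
    print(read_text_chunks(demo))
```

Metadata like this is easy to strip or rewrite, so a missing tag says little about how an image was made.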

Finally, some AI developers have started adding watermarks to the outputs of their AI systems. These are hidden patterns in any kind of content which are imperceptible to humans but can be detected by the AI developer. None of the large developers have shared their detection tools with the public yet, though.

Each of these methods has its drawbacks and limitations.

How effective are AI detectors?

The effectiveness of AI detectors can depend on several factors. These include which tools were used to make the content and whether the content was edited or modified after generation.

The tools’ training data can also affect results.

For example, key datasets used to detect AI-generated pictures do not have enough full-body pictures of people or images from people of certain cultures. This means successful detection is already limited in many ways.

Watermark-based detection can be quite good at detecting content made by AI tools from the same company. For example, if you use one of Google’s AI models such as Imagen, Google’s SynthID watermark tool claims to be able to spot the resulting outputs.

But SynthID is not publicly available yet. It also doesn’t work if, for example, you generate content using ChatGPT, which isn’t made by Google. Interoperability across AI developers is a major issue.

AI detectors can also be fooled when the output is edited. For example, if you use a voice cloning app and then add noise or reduce the quality (by making the file smaller), this can trip up voice AI detectors. The same is true of AI image detectors.

Explainability is another major issue. Many AI detectors will give the user a “confidence estimate” of how certain they are that something is AI-generated. But they usually don’t explain their reasoning or why they think something is AI-generated.

It is important to realize that it is still early days for AI detection, especially when it comes to automatic detection.

A good example of this can be seen in recent attempts to detect deepfakes. The winner of Meta’s Deepfake Detection Challenge identified four out of five deepfakes. However, the model was trained on the same data it was tested on—a bit like having seen the answers before it took the quiz.

When tested against new content, the model’s success rate dropped. It only correctly identified three out of five deepfakes in the new dataset.

All this means AI detectors can and do get things wrong. They can result in false positives (claiming something is AI-generated when it’s not) and false negatives (claiming something is human-generated when it’s not).
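The two error types can be counted directly once a detector's confidence scores are turned into yes/no calls. The sketch below does that for six invented items. The scores, the labels, and the 0.5 cut-off are assumptions chosen for illustration, not measurements from any real detector.

```python
def count_errors(scores, is_ai, threshold=0.5):
    """Count false positives and false negatives for a given cut-off.

    scores: detector confidence (0 to 1) that each item is AI-generated.
    is_ai:  the true answer for each item (True means AI-generated).
    """
    false_positives = 0  # human work flagged as AI
    false_negatives = 0  # AI work passed as human
    for score, truth in zip(scores, is_ai):
        flagged = score >= threshold
        if flagged and not truth:
            false_positives += 1
        elif truth and not flagged:
            false_negatives += 1
    return false_positives, false_negatives


if __name__ == "__main__":
    # Invented example data: six items, three of them AI-generated.
    scores = [0.92, 0.40, 0.75, 0.65, 0.10, 0.30]
    is_ai = [True, True, True, False, False, False]
    print(count_errors(scores, is_ai))                 # default cut-off
    print(count_errors(scores, is_ai, threshold=0.7))  # stricter cut-off
```

With this made-up data, raising the cut-off removes the one false accusation but still misses one AI-generated item, which is the trade-off any threshold has to make.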

For the users involved, these mistakes can be devastating—such as a student whose essay is dismissed as AI-generated when they wrote it themselves, or someone who mistakenly believes an AI-written email came from a real human.

It’s an arms race as new technologies are developed or refined, and detectors are struggling to keep up.

Where to from here?

Relying on a single tool is problematic and risky. It’s generally safer and better to use a variety of methods to assess the authenticity of a piece of content.

You can do so by cross-referencing sources and double-checking facts in written content. Or for visual content, you might compare suspect images to other images purported to be taken during the same time or place. You might also ask for additional evidence or explanation if something looks or sounds dodgy.

But ultimately, trusted relationships with individuals and institutions will remain one of the most important factors when detection tools fall short or other options aren’t available.

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Citation: How do ‘AI detection’ tools actually work? And are they effective? (2025, November 16), retrieved 16 November 2025 from https://techxplore.com/news/2025-11-ai-tools-effective.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.

Tech

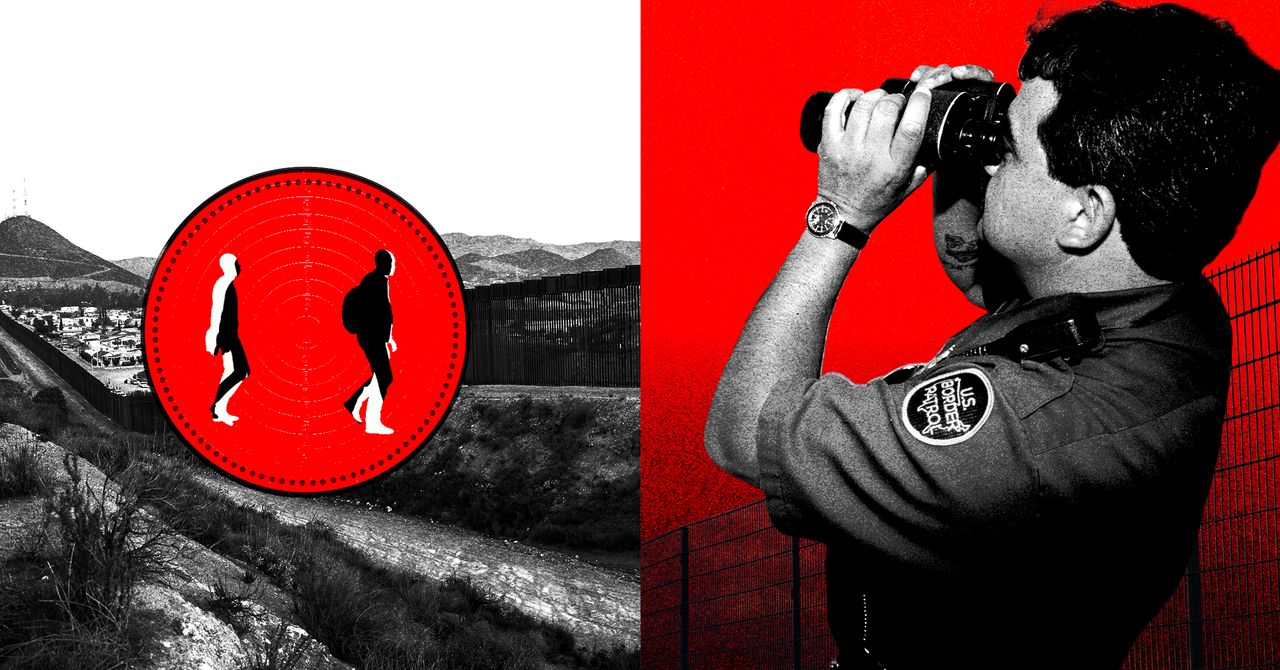

Border Patrol Agents Sold Challenge Coins With ‘Charlotte’s Web’ Characters in Riot Gear

US Border Patrol agents are raising money by selling coins that commemorate last year’s wave of immigration enforcement “operations” across the country, along with other merchandise. The funds are for nonprofit organizations that list Border Patrol buildings as their address in IRS paperwork. At least two of the organizations have dedicated US Customs and Border Protection email addresses.

The front side of one coin for sale reads, “NORTH AMERICAN TOUR 2025,” along with the acronym for US Border Patrol and the acronym for “fuck around and find out,” a phrase that was initially popularized by the far-right group the Proud Boys and has been used by various Trump officials. In the center, the coin depicts a gas mask, a riot control smoke grenade, and a pepper ball launcher. On the other side, the coin appears to have a portrait of Border Patrol’s now retired commander-at-large, Gregory Bovino, with his arm raised in a salute, along with the text “COMING TO A CITY NEAR YOU!” It lists seven cities, many of which actually saw federal enforcement surges in 2025: Chicago, Los Angeles, Memphis, Phoenix, Portland, Charlotte, and Atlanta.

The coin is for sale by Willcox Morale Welfare and Recreation, a nonprofit that the IRS most recently declared tax-exempt during the Biden administration and whose address on IRS paperwork matches that of the Willcox Border Patrol Station in Arizona. A request for comment sent to Willcox MWR’s dedicated CBP email address went unanswered.

Employees of the Department of Homeland Security, the parent agency for Border Patrol, are allowed to start private, not-for-profit employee associations within DHS, so long as they get formally recognized by the agency and follow certain rules. According to DHS policies, officially recognized groups can fundraise using government property and create merchandise with the agency’s name and logos, but they have to receive advance approval from the agency.

Willcox MWR is just one of several groups across the country that cater to Border Patrol agents and refer to themselves as MWRs, a reference to the US military’s “morale, welfare and recreation” programs. The groups tend to throw holiday events and retirement parties, and sometimes raise money for the families of agents going through hard times, including those not getting paid during the current shutdown.

Many MWRs also sell customized medallions known as “challenge coins” that commemorate specific teams or events. While anyone, including CBP alumni, can design and sell coins, current DHS employees are not supposed to use government resources to sell ones that use the agency’s seals or logos without permission, or ones that the agency considers inappropriate or unprofessional.

CBP did not provide comment about its relationship to Willcox MWR or any other nonprofit mentioned in this story, nor whether the agency had green-lit the “North American Tour” coin design, ahead of publication.

Under Willcox MWR’s Facebook post about the “North American Tour” coin, someone named Juan Diego commented, “Sign up SDC BK5 MWR for 10.”

“Shoot us an email,” someone managing the Willcox MWR account replied, giving out what appeared to be a dedicated cbp.dhs.gov email address for the group.

SDC BK5 MWR, also a registered nonprofit, lists an address on its website that matches that of a government facility in Chula Vista, California. It says on its site that it was started by San Diego Sector Border Patrol agents and sells custom merchandise “designed to raise funds for morale and relief efforts.”

Diego did not respond to a request for comment.

The SDC BK5 MWR website has listings for over 200 different products in addition to the North American Tour coin. One of those listings was a “Chicago Midway Blitz” challenge coin in the shape of a gas mask that doubles as a bottle opener. Embossed around the edges of the coin are the names of several municipalities and neighborhoods caught up in DHS’s immigration enforcement surge of the same name last fall. Like the North American Tour coin, it features the US Border Patrol logo and the acronym for “fuck around and find out.” Opponents of the Trump administration’s immigration enforcement activity in Illinois are unamused.

Tech

One of Our Favorite 360 Cams Is 35 Percent Off

Tired of taking your action camera on an adventure, only to get home and find out you missed the action with a bad angle? One option is to switch to a 360-degree action cam, so you can capture all of the action and then edit down to just the good stuff later. One of our favorite options, the DJI Osmo 360, is currently available for just $390 on Amazon, a $209 discount from its usual price, and it comes with a selfie stick and an extra battery.

The DJI Osmo 360 achieves its impressive all-around video quality by leveraging a pair of 1/1.1-inch sensors, larger than some other offerings, and by supporting 10-bit color. You can really see that in the camera’s output, with colors that are vivid and bold, to the point that you may need to dial them back a bit in post if you want something more natural. With support for up to 50 frames per second at 8K when recording in 360 degrees, or 120 fps at 4K when shooting with only one sensor, you’ll have plenty of material to work with. In our testing, it ran for just shy of two hours at 30 fps, which is also around the time the internal storage had filled up anyway.

If you plan on catching any serious discussions with your Osmo 360, you’ll be pleased to know it connects directly to DJI’s line of wireless lavalier microphones, including the excellent and frequently discounted DJI Mic 2 and Mic Mini. If you want to mount it to something other than the included 1.2-meter selfie stick, it has both DJI’s magnetic attachment system and a more traditional ¼”-20 tripod mount. The DJI Mimo app lets you control the camera and adjust any settings, and there’s even a simple editor for on-the-fly production. For desktop users, DJI Studio has even more in-depth settings and editing options, in case you don’t want to pay for Premiere.

The DJI Osmo 360 is one of our favorite action cameras, and is particularly appealing at the discounted price point, but make sure to check out our full review for more info, or head over to our full roundup to see what else is available.

Tech

Artemis II: Everything We Know as Its Crew Approaches the Far Side of the Moon

On day six of its mission, Artemis II is closing in on the far side of the moon. Meanwhile, the historic journey has not been without fascinating and curious stories, from the images and videos that its four crew members have shared with the world to the inevitable unforeseen events—including a tricky toilet situation.

A few hours before the crew begins its lunar flyby, here’s how things are going on Artemis II.

When Will They Reach the Far Side of the Moon?

While Artemis II won’t actually land on the moon (that won’t happen until Artemis IV), that does not make this mission any less compelling. Once the Artemis II astronauts finish flying over the far side of the moon, they will have the historic distinction of being the humans who have traveled the farthest from Earth.

They will also test all the systems needed for future lunar missions, validating life support, navigation, spacesuits, communications, and other human operations in deep space.

But when are they supposed to reach this far-off point? First, the Orion capsule reached what is known as the moon’s “sphere of influence” on Sunday night. This is the point where the moon’s gravitational pull on the capsule becomes stronger than Earth’s.
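For a sense of scale, the distance at which the moon's pull on a spacecraft equals Earth's can be estimated from Newton's inverse-square law. The sketch below uses rounded textbook values for the two masses and the average Earth-moon distance, and considers only points on the straight line between the two bodies. The formal sphere-of-influence definition used in trajectory planning is a different calculation and gives a larger radius.

```python
import math

EARTH_MASS_KG = 5.972e24          # rounded textbook value
MOON_MASS_KG = 7.342e22           # rounded textbook value
EARTH_MOON_DISTANCE_KM = 384_400  # average centre-to-centre distance


def equal_pull_distance_from_moon_km(
    distance_km: float = EARTH_MOON_DISTANCE_KM,
    big_mass: float = EARTH_MASS_KG,
    small_mass: float = MOON_MASS_KG,
) -> float:
    """Distance from the moon's centre where both pulls are equal.

    Setting big_mass / (D - r)**2 == small_mass / r**2 and solving for r
    gives r = D * s / (1 + s), where s = sqrt(small_mass / big_mass).
    """
    s = math.sqrt(small_mass / big_mass)
    return distance_km * s / (1 + s)


if __name__ == "__main__":
    r = equal_pull_distance_from_moon_km()
    print(f"Moon's pull wins within about {r:,.0f} km of its centre")
```

With these inputs the balance point falls roughly a tenth of the way from the moon to Earth.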

At present, Orion is circling the moon. Once the capsule is on the far side of the moon, approximately 7,000 kilometers from the surface, communications with Earth will be interrupted. For six hours, the crew will be able to view the far side of the moon, something no human being has ever seen with their own eyes. Not even the astronauts of the Apollo program saw it, as this region of the moon was always too dark or difficult for them to reach.

That six-hour flyby of the far side of the moon is expected to begin Monday, April 6, at 2:45 pm EDT (7:45 pm London time).

After that, the capsule will use the moon’s gravity to propel itself back to Earth. Splashdown, when the astronauts reach Earth, is scheduled for April 10, the tenth day of the mission, in the Pacific Ocean not far from the coast of California.

Remember that you can follow the live broadcast of the Artemis II mission from NASA’s official channels.

What Has Happened so Far?

Since its successful launch on April 1 from Kennedy Space Center, the Artemis II crew has shared several spectacular photos, such as the featured image in this post, which shows mission specialist Christina Koch looking down at Earth through one of Orion’s main cabin windows.

This incredible photo of Earth, taken on April 2, went viral on social media, referencing the famous “Blue Marble” image captured by the Apollo 17 astronauts in 1972.

View of Earth taken by astronaut Reid Wiseman from the window of the Orion spacecraft after completing the translunar injection maneuver on April 2, 2026. Photograph: Reid Wiseman/NASA/Getty Images
