Tech

How do ‘AI detection’ tools actually work? And are they effective?

As nearly half of all Australians say they have recently used artificial intelligence (AI) tools, knowing when and how they’re being used is becoming more important.

Consultancy firm Deloitte recently gave the Australian government a partial refund after a report it published was found to contain AI-generated errors.

A lawyer also recently faced disciplinary action after false AI-generated citations were discovered in a formal court document. And many universities are concerned about how their students use AI.

Amid these examples, a range of “AI detection” tools have emerged to try to address people’s need for identifying accurate, trustworthy and verified content.

But how do these tools actually work? And are they effective at spotting AI-generated material?

How do AI detectors work?

Several approaches exist, and their effectiveness can depend on which types of content are involved.

Detectors for text often try to infer AI involvement by looking for “signature” patterns in sentence structure, writing style, and the predictability of certain words or phrases being used. For example, the use of “delves” and “showcasing” has skyrocketed since AI writing tools became more available.

However, the difference between AI and human patterns keeps shrinking. This means signature-based tools can be highly unreliable.
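
As a rough illustration of the word-frequency signal described above, here is a minimal sketch that measures how often "signature" words appear in a passage. The word list reuses the two examples from this article; the function name and the sample sentence are hypothetical, and real detectors rely on far richer statistical models than this.

```python
import re

# Hypothetical signature vocabulary: the two words are the article's examples.
SIGNATURE_WORDS = {"delves", "showcasing"}


def signature_rate(text: str) -> float:
    """Return the share of words in `text` that appear in SIGNATURE_WORDS."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word in SIGNATURE_WORDS)
    return hits / len(words)


# Illustrative sentence, not taken from any real dataset.
sample = "This report delves into the data, showcasing three key trends."
print(f"{signature_rate(sample):.2f}")  # prints 0.20 (2 of 10 words)
```

A human writer can easily produce the same sentence, which is one reason a score like this cannot settle who wrote a text.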

Detectors for images sometimes work by analyzing embedded metadata which some AI tools add to the image file.

For example, the Content Credentials inspect tool allows people to view how a user has edited a piece of content, provided it was created and edited with compatible software. Like text, images can also be compared against verified datasets of AI-generated content (such as deepfakes).

Finally, some AI developers have started adding watermarks to the outputs of their AI systems. These are hidden patterns in any kind of content which are imperceptible to humans but can be detected by the AI developer. None of the large developers have shared their detection tools with the public yet, though.

Each of these methods has its drawbacks and limitations.

How effective are AI detectors?

The effectiveness of AI detectors can depend on several factors. These include which tools were used to make the content and whether the content was edited or modified after generation.

The tools’ training data can also affect results.

For example, key datasets used to detect AI-generated pictures do not have enough full-body pictures of people or images from people of certain cultures. This means successful detection is already limited in many ways.

Watermark-based detection can be quite good at detecting content made by AI tools from the same company. For example, if you use one of Google’s AI models such as Imagen, Google’s SynthID watermark tool claims to be able to spot the resulting outputs.

But SynthID is not publicly available yet. It also doesn’t work if, for example, you generate content using ChatGPT, which isn’t made by Google. Interoperability across AI developers is a major issue.

AI detectors can also be fooled when the output is edited. For example, if you use a voice cloning app and then add noise or reduce the audio quality (by making the file smaller), this can trip up voice AI detectors. The same is true with AI image detectors.

Explainability is another major issue. Many AI detectors will give the user a “confidence estimate” of how certain they are that something is AI-generated. But they usually don’t explain the reasoning behind that estimate.

It is important to realize that it is still early days for AI detection, especially when it comes to automatic detection.

A good example of this can be seen in recent attempts to detect deepfakes. The winner of Meta’s Deepfake Detection Challenge identified four out of five deepfakes. However, the model was trained on the same data it was tested on—a bit like having seen the answers before it took the quiz.

When tested against new content, the model’s success rate dropped. It only correctly identified three out of five deepfakes in the new dataset.
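
To see why testing on already-seen data inflates a score, consider this deliberately exaggerated sketch: a toy "detector" that only memorises the labels of clips it was trained on. All clip names and labels are made up, and the sketch is not a description of the challenge winner's model.

```python
# Toy data: True means "deepfake". Every entry here is hypothetical.
train = {"clip_a": True, "clip_b": True, "clip_c": False, "clip_d": True}
unseen = {"clip_e": True, "clip_f": True, "clip_g": False, "clip_h": True}


def predict(clip_id: str) -> bool:
    """Return the memorised label, or guess "not a deepfake" for unknown clips."""
    return train.get(clip_id, False)


def accuracy(labelled: dict) -> float:
    """Share of clips in `labelled` whose label the toy detector gets right."""
    correct = sum(1 for clip, label in labelled.items() if predict(clip) == label)
    return correct / len(labelled)


print(accuracy(train))   # 1.0 on the data it has already seen
print(accuracy(unseen))  # 0.25 on new clips
```

The gap between the two numbers is why detectors are usually judged on content held back from training.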

All this means AI detectors can and do get things wrong. They can result in false positives (claiming something is AI generated when it’s not) and false negatives (claiming something is human-generated when it’s not).
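
The two kinds of error can be counted separately. The sketch below assumes a small hand-labelled sample in which `True` means AI-generated; the labels, the predictions and the function name are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def error_rates(labels, predictions):
    """Return (false_positive_rate, false_negative_rate).

    `labels` and `predictions` are equal-length lists of bools,
    where True means "AI-generated".
    """
    pairs = list(zip(labels, predictions))
    false_pos = sum(1 for truth, guess in pairs if not truth and guess)
    false_neg = sum(1 for truth, guess in pairs if truth and not guess)
    human_total = sum(1 for truth in labels if not truth)
    ai_total = sum(1 for truth in labels if truth)
    fpr = false_pos / human_total if human_total else 0.0
    fnr = false_neg / ai_total if ai_total else 0.0
    return fpr, fnr


# Five AI-generated items followed by five human-written ones (made up).
labels = [True] * 5 + [False] * 5
predictions = [True, True, True, False, False,
               False, False, False, False, True]

fpr, fnr = error_rates(labels, predictions)
print(fpr, fnr)  # 0.2 0.4: one human item flagged, two AI items missed
```

Here the detector catches three of the five AI-generated items, yet it also wrongly flags one of the five human-written ones.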

For the users involved, these mistakes can be devastating—such as a student whose essay is dismissed as AI-generated when they wrote it themselves, or someone who mistakenly believes an AI-written email came from a real human.

It’s an arms race as new technologies are developed or refined, and detectors are struggling to keep up.

Where to from here?

Relying on a single tool is problematic and risky. It’s generally safer and better to use a variety of methods to assess the authenticity of a piece of content.

You can do so by cross-referencing sources and double-checking facts in written content. Or for visual content, you might compare suspect images to other images purported to be taken during the same time or place. You might also ask for additional evidence or explanation if something looks or sounds dodgy.

But ultimately, trusted relationships with individuals and institutions will remain one of the most important factors when detection tools fall short or other options aren’t available.

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Citation:

How do ‘AI detection’ tools actually work? And are they effective? (2025, November 16), retrieved 16 November 2025 from https://techxplore.com/news/2025-11-ai-tools-effective.html



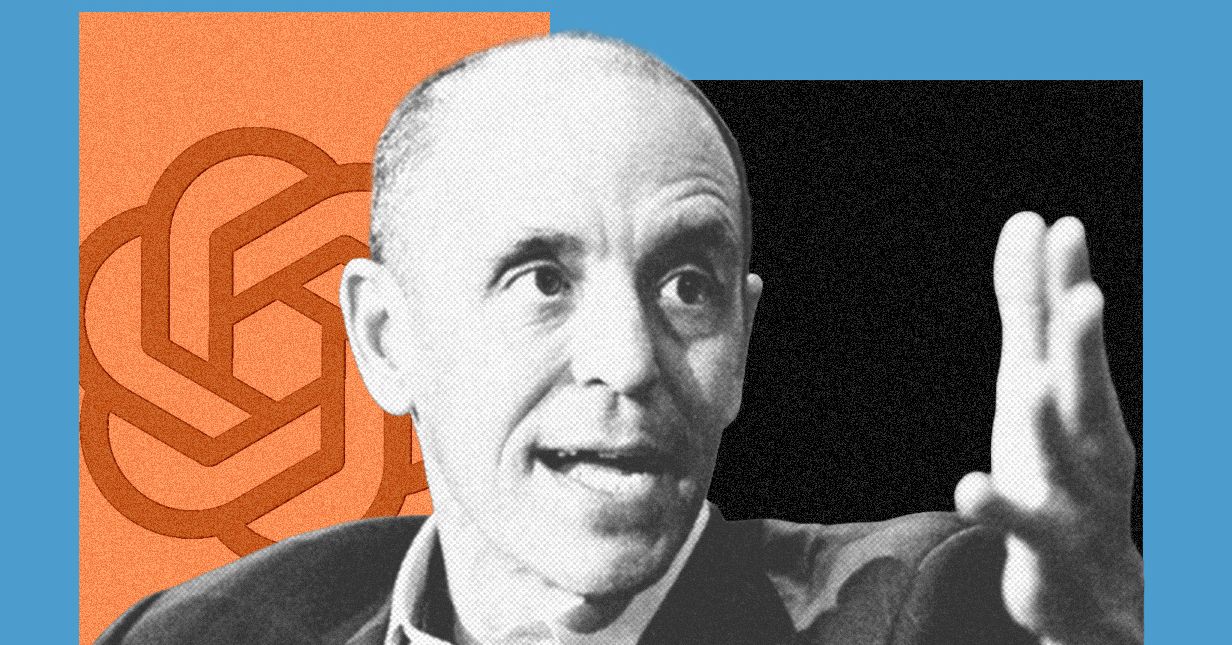

Can OpenAI’s ‘Master of Disaster’ Fix AI’s Reputation Crisis?

Three months ago, OpenAI cofounder Greg Brockman told me his concerns about a mounting public relations crisis facing artificial intelligence companies: Despite the popularity of tools like ChatGPT, an increasingly large share of the population said they viewed AI negatively. Since then, the backlash has only intensified.

College commencement speakers are now getting booed for talking about AI in optimistic terms. Last month, someone threw a Molotov cocktail at OpenAI CEO Sam Altman’s San Francisco home and wrote a manifesto advocating for crimes against AI executives. No one has more to lose from this reputation crisis than OpenAI.

The person tasked with trying to fix it is Chris Lehane, OpenAI’s chief of global affairs and a veteran political operative. I sat down with him this week to discuss what I’d argue are his two biggest challenges yet: convincing the world to embrace OpenAI’s technology, while at the same time persuading lawmakers to adopt regulations that won’t hamper the company’s growth. Lehane views these goals as one and the same.

“When I was in the White House, we always used to talk about how good policy equals good politics,” says Lehane. “You have to think about both of these things moving in concert.”

After working on crisis communications in Bill Clinton’s White House, Lehane gave himself the nickname “master of disaster.” He later helped Airbnb fend off regulators in cities that viewed short-term home rentals as existing in a legal gray area, or as he puts it, “ahead of the law.” Lehane also played an instrumental role in the formation of Fairshake, a powerful crypto industry super PAC that worked to legitimize digital currencies in Washington. Since joining OpenAI in 2024, he’s quickly become one of the company’s most influential executives and now oversees its communications and policy teams.

Lehane tells me public narratives about how AI will change society are often “artificially binary.” On one side is the “Bob Ross view of the world” that predicts a future where nobody has to work anymore and everyone lives in “beachside homes painting in watercolors all day.” On the other is a dystopian future in which AI has become so powerful that only a small group of elites have the ability to control it. Neither scenario, in Lehane’s opinion, is very realistic.

OpenAI has itself been guilty of promoting this kind of polarizing speech in the past. CEO Sam Altman warned last year that “whole classes of jobs” will go away when the singularity arrives. More recently he has softened his tone, declaring that “jobs doomerism is likely long-term wrong.”

Lehane wants OpenAI to start conveying a more “calibrated” message about the promises of AI that avoids either of these extremes. He says the company needs to put forward real solutions to the problems people are worried about, such as potential widespread job loss and the negative impacts of chatbots on children. As an example of this work, Lehane pointed to a list of policy proposals that OpenAI recently published, which include creating a four-day work week, expanding access to health care, and passing a tax on AI-powered labor.

“If you’re going to go out and say that there are challenges here, you also then have an obligation—particularly if you’re building this stuff—to actually come up with the ideas to solve those things,” Lehane says.

Some former OpenAI employees, however, have accused the company of downplaying the potential downsides of AI adoption. WIRED previously reported that members of OpenAI’s economic research unit quit after they became concerned that it was morphing into an advocacy arm for the company. The former employees argued that their warnings about AI’s economic impacts may have been inconvenient for OpenAI, but they honestly reflected what the company’s research found.

Packing Punches

With public skepticism toward AI growing, politicians are under pressure to prove to voters they can rein in tech companies. To combat this, the AI industry has stood up a new group of super PACs that are boosting pro-AI political candidates and trying to influence public opinion about the technology. Critics say the move backfired, and some candidates have started campaigning on the fact that AI super PACs are opposing them.

Lehane helped set up one of the biggest pro-AI super PACs, Leading the Future, which launched last summer with more than $100 million in funding commitments from tech industry figures, including Brockman. The group has opposed Alex Bores, the author of New York’s strongest AI safety law, who is running for Congress in the state’s 12th district.


Meta Is in Crisis, Google Search’s Makeover, and AI Gets Booed by Graduates

Leah Feiger: Let’s invest.

Zoë Schiffer: They have that going for a while.

Leah Feiger: It wasn’t full Google, but it—

Zoë Schiffer: Somewhat there.

Leah Feiger: —had that vibe. To me, someone so on the outside of this in every single way, I know about these layoffs because they’ve been, A) so chaotic, but B) in some ways, needlessly so. Not to say that other tech companies aren’t firing scores of workers all the time. That feels like something we discuss on this podcast frequently, but this is happening with such a large runway and in a way that’s making employees feel so terrible about themselves.

Brian Barrett: Well, because it’s not just the layoffs, right? It’s also, even if you stay there, if you’re not culled from the herd, you are going to have to deal with this world in which you’ve got spyware on your laptops training AI to probably take your job at some point, right?

Zoë Schiffer: Explain that a little bit.

Brian Barrett: Meta announced, and this was more public, that they were going to put software on employee laptops that would monitor their keystrokes and how they move their cursors and basically how they do their job as Meta engineers and use that as training data for their own internal models to try to make their AI models better because they’re running out of other sources.

Zoë Schiffer: And could you opt out of that, Brian?

Brian Barrett: That’s a great question. I’m so glad you asked. You could not opt out.

Zoë Schiffer: I felt you didn’t know the answer to that one.

Brian Barrett: In fact, when an employee asked in a very public forum within Meta, “Hey, could we not do this?” Zoë, the response was?

Zoë Schiffer: Oh, absolutely you’re going to do this and shame on you for asking. And some of the employees who are staying, actually thousands of the employees who are staying, are getting drafted into the AI ranks. We published a piece today that was kind of about the morale inside the company, but also how there’s been this mad dash to use up perks and stipends that employees have. But one of the things that’s said at the end was that remaining employees are being asked to join AI teams. So whatever your job was previously, they’re internally getting drafted. You’re getting drafted into the AI ranks, now your job is going to look quite different.

Brian Barrett: That’s like 7,000 people.

Zoë Schiffer: Yes.

Leah Feiger: I’ve actually heard people use the word raptured.

Zoë Schiffer: Oh, my gosh.

Leah Feiger: Isn’t that—

Zoë Schiffer: And I wish we had that in the story.

Leah Feiger: I’m so sorry, but raptured into other teams. All of a sudden one day they’ve just disappeared. After this layoff, has Zuckerberg and co proposed a sort of coherent leadership plan or proposal? What happens after this?


Why the 2026 Hurricane Season Might Not Be That Bad

Atlantic hurricane season is almost upon us, and the early signs indicate it might be less active than usual. But that’s no reason to delete your weather app and ignore the forecast.

The National Oceanic and Atmospheric Administration is predicting eight to 14 named tropical systems, of which three to six will become hurricanes and one to three will be Category 3 or higher.

“What’s driving this forecast is largely an El Niño event,” said NOAA administrator Neil Jacobs.

Characterized by a tongue of hot water stretching across the Pacific, El Niño is likely to emerge this summer. That stretch of warm ocean rearranges weather patterns around the world. In the case of the tropical Atlantic, El Niño stirs up winds that make it hard for hurricanes to spin up. Those that do can sometimes be torn apart by what’s going on in the upper atmosphere. (The opposite is true in the Pacific, and NOAA is predicting a very active season in that ocean basin.)

During the three past super El Niños, accumulated cyclone energy—a metric that factors in storms’ strength and longevity—was well below normal.
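
For readers curious how that metric combines strength and longevity, here is a minimal sketch of the commonly cited definition of accumulated cyclone energy: the squares of a storm's maximum sustained wind speed (in knots), sampled every six hours while it is at least tropical-storm strength, are summed and divided by 10,000. The 34-knot threshold, the function name and the wind readings below are assumptions for illustration, not NOAA code or real storm data.

```python
TROPICAL_STORM_KT = 34  # assumed threshold for tropical-storm strength


def accumulated_cyclone_energy(six_hourly_winds_kt):
    """Return ACE in units of 10^4 kt^2 for one storm.

    `six_hourly_winds_kt` holds maximum sustained winds in knots,
    one reading per six-hour interval.
    """
    total = sum(v ** 2 for v in six_hourly_winds_kt if v >= TROPICAL_STORM_KT)
    return total / 10_000


# Made-up readings for a single storm that strengthens and then weakens.
winds = [30, 35, 50, 65, 100, 60, 30]
print(round(accumulated_cyclone_energy(winds), 3))  # 2.155
```

Because wind speed is squared, a single six-hour reading at 100 knots adds more to the total than the 35, 50 and 65 knot readings combined, and a long-lived storm keeps adding terms. A season's figure is the sum over all of its storms.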

That said, El Niño, even an extremely strong one, is only one of many factors that impact hurricane season. Hot local ocean temperatures can help storms form and gain strength, and the Atlantic is currently warmer than normal.

At the same time, Saharan dust can gum up the atmosphere and inhibit storms from forming, and it’s notoriously hard to predict when plumes of it will kick up. That’s what happened last year, when a below-average number of named storms formed despite an active forecast. Even with that lower-than-expected activity, last year still spawned Hurricane Melissa, one of the strongest storms to ever make landfall in the Atlantic basin.

All of which is to say that the seasonal forecast is a handy guide for what to expect, and it’s great for federal and state agencies to preposition supplies and resources. But it’s what happens with individual storms that ultimately matters.

“Even though we’re expecting a below average season in the Atlantic, it’s important to understand it only takes one,” Jacobs said, noting that even in quiet years, Category 5 storms have still made landfall.

The Trump administration has slashed staffing at NOAA and reduced the collection of some data, such as weather balloon observations, that can impact forecasts. Jacobs touted the value of new observations, including aerial drones that will be deployed operationally for the first time.

NOAA has also ramped up the use of artificial intelligence weather models trained on historical data. During the 2025 hurricane season, the agency tested an experimental hurricane model developed with Google DeepMind. Late last year, it also rolled out a suite of AI weather models to use in operational forecasting, in addition to traditional weather models that use equations to forecast the weather.

The agency says that the AI version of its flagship model provides better prediction of the tracks of tropical cyclones—the generic name for hurricanes—though it lags traditional weather models in predicting their intensity.
