Tech

Just in Time for Spring, Don’t Miss These Electric Scooter Deals

The snow is melting, the days are getting longer, and I can almost smell the springtime ahead. Soon, we’ll be cruising around town on ebikes and electric scooters instead of burning fossil fuels. For now, the weather hasn’t quite caught up, which is great for markdowns. Many of the best electric scooters are still seeing significant discounts. If you’ve been thinking about buying one, now’s the best time: prices are low, and sunny commuting days are just ahead.

Gear editor Julian Chokkattu has spent five years testing more than 45 electric scooters. These are his top picks that are also on sale right now.

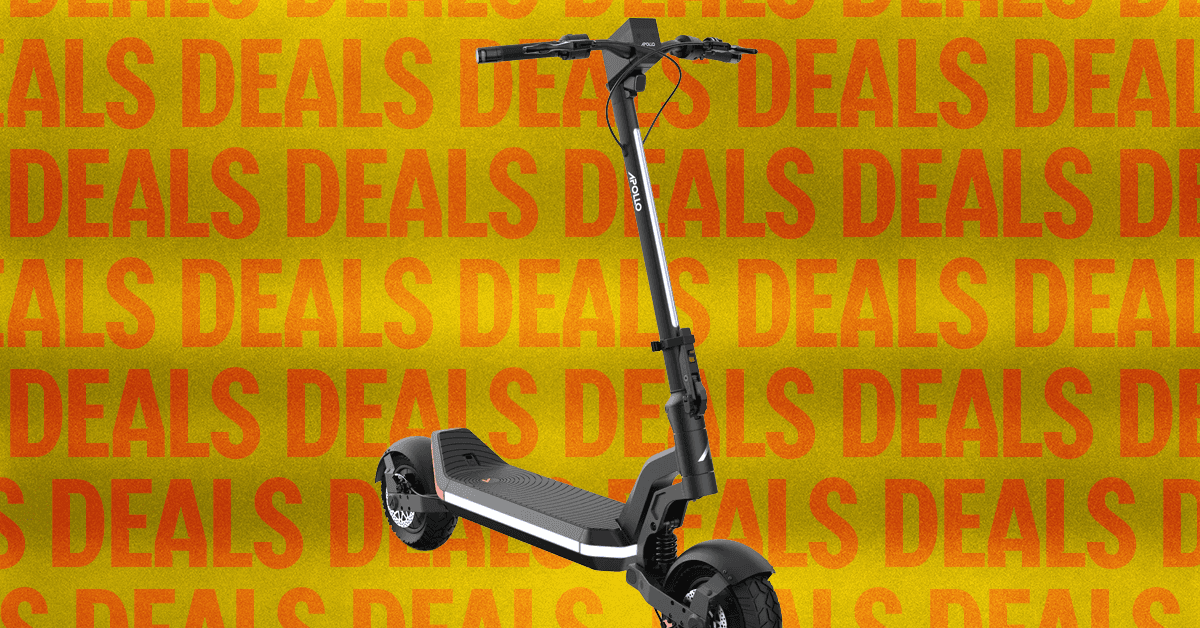

Apollo Go for $849 ($450 Off)

This is Chokkattu’s favorite scooter. The riding experience is powerful and smooth, thanks to its dual 350-watt motors and solid front and rear suspensions. The speed maxes out at 28 miles per hour (mph), which doesn’t make it the fastest scooter on the market, but it has a good range. (Chokkattu is a very tall man and was able to travel 15 miles on a single charge at 15 mph.) Other Apollo features he appreciates: turn signals, a dot display, a bell, a headlight, and an LED strip for extra visibility.

Apollo Phantom 2.0 for $2,099 ($900 Off)

The Apollo Phantom 2.0 maxes out at 44 mph, with plenty of power from its dual 1,750-watt motors. It’s a gorgeous scooter, designed with 11-inch self-healing tubeless tires and a dual-spring suspension system for a smooth riding experience. But with great power comes great weight. At 102 pounds, the Phantom 2.0 is the heaviest electric scooter Chokkattu has tested, so I would recommend it only if you don’t live in a walk-up or you have a garage to store it in.


Tech

Human-machine teaming dives underwater

The electricity to an island goes out. To find the break in the underwater power cable, a ship pulls up the entire line or deploys remotely operated vehicles (ROVs) to traverse the line. But what if an autonomous underwater vehicle (AUV) could map the line and pinpoint the location of the fault for a diver to fix?

Such underwater human-robot teaming is the focus of an MIT Lincoln Laboratory project funded through an internally administered R&D portfolio on autonomous systems and carried out by the Advanced Undersea Systems and Technology Group. The project seeks to leverage the respective strengths of humans and robots to optimize maritime missions for the U.S. military, including critical infrastructure inspection and repair, search and rescue, harbor entry, and countermine operations.

“Divers and AUVs generally don’t team at all underwater,” says principal investigator Madeline Miller. “Underwater missions requiring humans typically do so because they involve some sort of manipulation a robot can’t do, like repairing infrastructure or deactivating a mine. Even ROVs are challenging to work with underwater in very skilled manipulation tasks because the manipulators themselves aren’t agile enough.”

Beyond their superior dexterity, humans excel at recognizing objects underwater. But humans working underwater can’t perform complex computations or move very quickly, especially if they are carrying heavy equipment; robots have an edge over humans in processing power, high-speed mobility, and endurance. To combine these strengths, Miller and her team are developing hardware and algorithms for underwater navigation and perception — two key capabilities for effective human-robot teaming.

As Miller explains, divers may only have a compass and fin-kick counts to guide them. With few landmarks and potentially murky conditions caused by a lack of light at depth or the presence of biological matter in the water column, they can easily become disoriented and lost. For robots to help divers navigate, they need to perceive their environment. However, in the presence of darkness and turbidity, optical sensors (cameras) cannot generate images, while acoustic sensors (sonar) generate images that lack color and only show the shapes and shadows of objects in the scene. The historical lack of large, labeled sonar image datasets has hindered training of underwater perception algorithms. Even if data were available, the dynamic ocean can obscure the true nature of objects, confusing artificial intelligence. For instance, a downed aircraft broken into multiple pieces, or a tire covered in an overgrowth of mussels, may no longer resemble an aircraft or tire, respectively.

“Ultimately, we want to devise solutions for navigation and perception in expeditionary environments,” Miller says. “For the missions we’re thinking about, there is limited or no opportunity to map out the area in advance. For the harbor entry mission, maybe you have a satellite map but no underwater map, for example.”

On the navigation side, Miller’s team picked up on work started by the MIT Marine Robotics Group, led by John Leonard, to develop diver-AUV teaming algorithms. With their navigation algorithms, Leonard’s group ran simulations under optimal conditions and performed field testing in calm waters using human-paddled kayaks as proxies for both divers and AUVs. Miller’s team then integrated these algorithms into a mission-relevant AUV and began testing them under more realistic ocean conditions, initially with a support boat acting as a diver surrogate, and then with actual divers.

“We quickly learned that you need more sensing capabilities on the diver when you factor in ocean currents,” Miller explains. “With the algorithms demonstrated by MIT, the vehicle only needed to calculate the distance, or range, to the diver at regular intervals to solve the optimization problem of estimating the positions of both the vehicle and diver over time. But with the real ocean forces pushing everything around, this optimization problem blows up quickly.”
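To make the range-based idea concrete, here is a minimal sketch of a much simpler version of the problem than the one Miller describes. It is not the MIT or Lincoln Laboratory algorithm. It assumes the vehicle already knows its own positions, the diver stays still, and everything happens in two dimensions. The function name `locate_from_ranges` and all numbers are hypothetical. Ocean currents break exactly these assumptions, which is one way to see why the full problem becomes hard.

```python
import math

def locate_from_ranges(anchors, ranges, guess, iterations=20):
    """Gauss-Newton fit of a fixed 2D point to range measurements.

    anchors: known (x, y) positions where each range was measured (assumed exact)
    ranges:  measured distances from each anchor to the unknown point
    guess:   initial (x, y) estimate
    """
    x, y = guess
    for _ in range(iterations):
        # Accumulate the 2x2 normal equations (J^T J) d = J^T r.
        a11 = a12 = a22 = b1 = b2 = 0.0
        for (ax, ay), measured in zip(anchors, ranges):
            dx, dy = x - ax, y - ay
            predicted = math.hypot(dx, dy)
            if predicted == 0.0:
                continue  # gradient is undefined exactly at an anchor
            jx, jy = dx / predicted, dy / predicted  # d(range)/d(x, y)
            residual = measured - predicted
            a11 += jx * jx
            a12 += jx * jy
            a22 += jy * jy
            b1 += jx * residual
            b2 += jy * residual
        det = a11 * a22 - a12 * a12
        if abs(det) < 1e-12:
            break  # degenerate geometry: ranges do not pin down a 2D position
        x += (a22 * b1 - a12 * b2) / det
        y += (a11 * b2 - a12 * b1) / det
    return x, y

# Hypothetical survey: the vehicle pings from four known spots around the diver.
vehicle_positions = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)]
true_diver = (3.0, 4.0)
pings = [math.hypot(true_diver[0] - px, true_diver[1] - py)
         for px, py in vehicle_positions]
estimate = locate_from_ranges(vehicle_positions, pings, guess=(5.0, 5.0))
print(estimate)
```

With noise-free ranges and well-spread ping locations, the estimate settles on the diver's position within a few iterations. Once both the vehicle and the diver drift with the current, neither set of positions is known in advance, and range measurements alone leave far more unknowns to resolve.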

On the perception side, Miller’s team has been developing an AI classifier that can process both optical and sonar data mid-mission and solicit human input for any objects classified with uncertainty.

“The idea is for the classifier to pass along some information (say, a bounding box around an image) to the diver and indicate, ‘I think this is a tire, but I’m not sure. What do you think?’ Then, the diver can respond, ‘Yes, you’ve got it right,’ or ‘No, look over here in the image to improve your classification,’” Miller says.
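The exchange Miller describes can be sketched as a simple confidence check. This is an illustration only, not the team's classifier: the `Detection` type, the 0.8 threshold, and the message format are all assumptions made for the example.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    label: str         # classifier's best guess, e.g. "tire"
    confidence: float  # classifier score in [0, 1]
    box: tuple         # (x, y, width, height) in image pixels

def needs_diver_review(det, threshold=0.8):
    """Flag detections the classifier is unsure about (threshold is hypothetical)."""
    return det.confidence < threshold

def build_query(det):
    """Compose the short question sent to the diver instead of the full image."""
    return (f"I think this is a {det.label} ({det.confidence:.0%} sure), "
            f"box={det.box}. What do you think?")

detections = [
    Detection("tire", 0.55, (120, 80, 64, 64)),
    Detection("anchor", 0.95, (300, 210, 40, 90)),
]
for det in detections:
    if needs_diver_review(det):
        print(build_query(det))
```

Only the uncertain tire detection produces a question for the diver. The confident anchor detection is accepted without interrupting them.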

This feedback loop requires an underwater acoustic modem to support diver-AUV communication. State-of-the-art data rates in underwater acoustic communications would require tens of minutes to send an uncompressed image from the AUV to the diver. So, one aspect the team is investigating is how to compress information into a minimum amount to be useful, working within the constraints of the low bandwidth and high latency of underwater communications and the low size, weight, and power of the commercial off-the-shelf (COTS) hardware they’re using. For their prototype system, the team procured mostly COTS sensors and built a sensor payload that would easily integrate into an AUV routinely employed by the U.S. Navy, with the goal of facilitating technology transition. Beyond sonar and optical sensors, the payload features an acoustic modem for ranging to the diver and several data processing and compute boards.
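A back-of-the-envelope calculation shows why sending raw images is impractical. The image size and the 1 kilobit-per-second link rate below are illustrative assumptions, not figures from the project, and the estimate ignores protocol overhead and retransmissions.

```python
def transmit_seconds(width_px, height_px, bits_per_pixel, link_bits_per_second):
    """Time to send a raw image over a link, ignoring overhead and retries."""
    total_bits = width_px * height_px * bits_per_pixel
    return total_bits / link_bits_per_second

# Assumed example: a 640x480, 8-bit grayscale image over a 1 kbit/s acoustic link.
raw_seconds = transmit_seconds(640, 480, 8, 1_000)
print(f"uncompressed image: {raw_seconds / 60:.1f} min")  # about 41 minutes

# Assumed compact summary: four 16-bit box coordinates, a 1-byte label,
# and a 1-byte confidence score.
summary_bits = 4 * 16 + 8 + 8
print(f"box + label: {summary_bits / 1_000:.2f} s")  # 0.08 seconds
```

Under these assumptions the raw image takes about 41 minutes, while a bounding box with a label and confidence score fits in well under a second. That gap is the motivation for compressing what the AUV sends to the bare minimum the diver needs.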

Miller’s team has tested the sensor-equipped AUV and algorithms around coastal New England — including in the open ocean near Portsmouth, New Hampshire, with the University of New Hampshire’s (UNH) Gulf Surveyor and Gulf Challenger coastal research vessels as diver surrogates, and on the Boston-area Charles River, with an MIT Sailing Pavilion skiff as the surrogate.

“The UNH boats are well-equipped and can access realistic ocean conditions. But pretending to be a diver with a large boat is hard. With the skiff, we can move more slowly and get the relative motion in tune with how a diver and AUV would navigate together,” Miller says.

Last summer, the team started testing equipment with human divers at Michigan Technological University’s Great Lakes Research Center. Although the divers lacked an interface to feed back information to the AUV, each swam holding the team’s tube-shaped prototype tablet, dubbed a “tube-let.” The tube-let was equipped with a pressure and depth sensor, inertial measurement unit (to track relative motion), and ranging modem — all necessary components for the navigation algorithms to solve the optimization problem.

“A challenge during testing was coordinating the motion of the diver and vehicle, because they don’t yet collaborate,” Miller says. “Once the divers go underwater, there is no communication with the team on the surface. So, you have to plan where to put the diver and vehicle so they don’t collide.”

The team also worked on the perception problem. The water clarity of the Great Lakes at that time of year allowed for underwater imaging with an optical sensor. Caroline Keenan, a Lincoln Scholars Program PhD student jointly working in the laboratory’s Advanced Undersea Systems and Technology Group and Leonard’s research group at MIT, took the opportunity to advance her work on knowledge transfer from optical sensors to sonar sensors. She is exploring whether optical classifiers can train sonar classifiers to recognize objects for which sonar data doesn’t exist. The motivation is to reduce the human operator load associated with labeling sonar data and training sonar classifiers.

With the internally funded research program coming to an end, Miller’s team is now seeking external sponsorship to refine and transition the technology to military or commercial partners.

“The modern world runs on undersea telecommunication and power cables, which are vulnerable to attack by disruptive actors. The undersea domain is becoming increasingly contested as more nations develop and advance the capabilities of autonomous maritime systems. Maintaining global economic security and U.S. strategic advantage in the undersea domain will require leveraging and combining the best of AI and human capabilities,” Miller says.

Tech

UK government accelerates autonomous vehicle development funding | Computer Weekly

A total of eight studies exploring how autonomous vehicles could benefit businesses and communities across the UK have received funding from a government-backed initiative aimed at accelerating the roll-out of commercially viable connected and automated mobility (CAM) services in the UK.

Addressing the complexities of commercialising CAM vehicles is part of the UK government’s industrial strategy. In addition to increased funding, the programme is complemented by the Automated Vehicles Act 2024, which is designed to pave the way for self-driving vehicles to be used safely and securely on British roads, removing the need for safety drivers. Alongside full implementation of the act by 2027, the government is also enabling commercial pilots of bus and taxi-like services from spring 2026.

Running until 2030, the £150m CAM Pathfinder programme is seen as key to realising the industry’s potential. It is aimed at addressing the challenges of bringing CAM vehicles to market, providing funding for projects that are intended to develop “world-first” technologies, products and services, ranging from “cutting-edge” software to smart transport services. It was announced in the government’s advanced manufacturing sector plan, which aims to grow the UK’s CAM industry – calculated to be worth £3.7bn.

Projects funded by CAM Pathfinder must demonstrate that the cutting-edge technology or mobility services being developed can help industries become safer, more sustainable, more inclusive and more productive. By accelerating the development, deployment and adoption of such technologies and services, the objective is to support growth and investment, and unlock innovation across transport.

The CAM Pathfinder programme is delivered by the Centre for Connected and Autonomous Vehicles (CCAV), supported by Zenzic and Innovate UK. CCAV is a joint policy unit of the Department for Transport and the Department for Business and Trade. Zenzic was created by the UK government and industry to champion the CAM ecosystem and lead the UK in accelerating the self-driving revolution, with the goal of ensuring a safer, more secure, sustainable and inclusive transport future.

The aim of the Feasibility Studies 2 competition is to help organisations overcome key barriers to investment decisions in CAM technologies, in both private and public sector environments. Through the studies, organisations will set out to produce business cases designed to unlock advanced, at-scale deployments of CAM across the UK. The studies will examine how self-driving vehicles could boost the aviation sector, how self-driving freight vehicles could lift the nation’s automotive industry, and how private-hire automated vehicles could be deployed on London roads.

Among the projects is Aspire, a study looking to address what is seen as a critical UK mobility challenge: structural driver shortages, rising operational costs and the need to maintain connectivity while meeting zero-emission mandates. It is being carried out by the Bamford Bus Company, Loughborough University and Queen’s University Belfast.

A study by Fusion Processing, Develop and quantify business models, is seeking to identify the staff, processes and investments required to deliver operational cost savings and efficiencies at UK airports, while Moonbility is offering Sentinel Shuttle, a future-ready feasibility study to unlock safe, scalable driverless shuttle operations across NHS hospital and care estates. This is being enabled by real-time onboard monitoring and remote oversight.

Odysse has embarked on a feasibility study for Level-4 automated vehicles (AVs) on private-hire services in high-demand London corridors. This will explore how emerging self-driving technologies could help shape the future of urban mobility in one of the world’s most dynamic cities.

Based on work by BCA Automotive, National Highways, Newcastle University, Perform Green, South Tyneside Council and Sunderland City Council, the North East Vehicle Autonomous Corridor comprises a feasibility study into the deployment of autonomous electric HGVs. It will focus on the strategic road freight corridor between the Nissan Motor Manufacturing UK Sunderland plant and the Port of Tyne.

Meanwhile, Tactic is a six-month feasibility study led by iC4DTI, with Cenex as partner, to produce an investment-ready business case for a driver-out CAM freight service on the Teesport to Teesside International Airport corridor within the Teesside Freeport.

The V-CAL feasibility study will assess the commercial viability of deploying autonomous yard tractors on the Vantec-Nissan route in Sunderland. The nine-month project builds on the outcomes of the 5G CAL and V-CAL initiatives, moving from technical proof-of-concept to a business case for full-scale deployment without safety drivers.

Dedicated CAV corridor

Finally, the Wellcome Genome Campus project will deliver a feasibility study for one of the UK’s first dedicated corridors for connected and autonomous vehicles (CAVs). It will link the Wellcome Genome Campus (WGC) to Whittlesford Parkway railway station in Cambridgeshire.

Mark Cracknell, programme director at Zenzic, said: “CAM solutions have the potential to unlock new business opportunities and economic growth in all corners of the country. These feasibility studies will help to articulate the impact that market-ready CAM technologies can have on both business productivity and economic growth. We are excited to start working with the organisations delivering each of the eight projects to further develop their business cases, demonstrate the commerciality of their solutions and paint a clearer picture of the commercially viable CAM solutions coming down the road.”

Claire Spooner, director of innovation service at Innovate UK, added: “This latest tranche of funding from the CAM Pathfinder programme will enable the UK to unlock the huge future benefits of these new CAM technologies. These projects, around the UK, will develop new solutions for a range of CAM applications and scenarios, and they will enable the companies behind these innovations to scale and grow.”

Tech

Bremont Is Sending a Watch to the Moon’s Surface

A multifaceted decahedral black ceramic bezel and sandwich-style three-piece case—a reworking of Bremont’s signature Trip-Tick construction—house a chronometer-rated automatic chronograph movement made by Sellita, with a 62-hour power reserve.

The watch will be a passenger aboard the FLIP rover, due to launch as part of Astrobotic’s Griffin Mission One (Griffin-1), expected to land at the lunar south pole at some point in the second half of this year.

It’s a one-way mission: The rover will remain permanently on the lunar surface, with the watch ticking away as it roams the landscape. FLIP’s objectives include reaching elevated positions on the lunar terrain, gathering data on lunar dust accumulation, testing dust-mitigation coatings, and surviving a two-week lunar night in hibernation (which would be a first for a US rover).

In terms of serious timekeeping data for Bremont, the mission is frankly symbolic. The watch will be positioned vertically in a specially designed housing within the FLIP’s chassis, between its front wheels. Only the watch head, weighing 107 grams, is included, glued in place using a specialist composite, its face visible to FLIP’s HD cameras. But the hibernatory periods will mean the watch (whose mechanical movement is driven in normal circumstances by the motion of the wearer’s arm) will stop running once its 62-hour power reserve runs down.

When the FLIP is on the move again, its motion should—in theory—jolt the mechanism into action once more. Despite the gravitational pull that’s a sixth of the Earth’s, the acceleration, pitches, and tilts of the rover should swing the winding rotor, if with less torque and efficiency than on Earth.

“My guess is that the watch will function from time to time, but for short periods,” Cerrato says. “We will learn along the way. But that’s what is exciting—it projects us into a thinking process that is absolutely out of the box. Just the fact of having it there is inspiring.” However, there is little doubt that Bremont will, just like other brands with any ties to the cosmos, mine its new space connection for all it is worth.

FLIP itself, which weighs just 1,058 pounds and carries a mix of commercial and government payloads, four HD cameras, and a deployable solar array, is fundamentally a technology demonstrator for Flexible Logistics and Exploration (FLEX), Astrolab’s much larger SUV-sized rover destined to support NASA’s Artemis program. The firm developed the FLIP from scratch after NASA’s equivalent vehicle for which the Griffin-1 mission was contracted, the VIPER, was put on pause in 2024. This left Astrobotic seeking a stand-in in short order. Astrolab, which signed the contract within a month of hearing about the opportunity in the fall of 2024, took the FLIP from blank sheet to finished rover in roughly a year.

Its standout feature is its hyper-deformable wheels, minutely structured from silicone, composite, and stainless steel, which create a soft, enlarged contact surface with the terrain. “It’s like if you’re off-roading in a Jeep or Land Rover where you let some air out of the tires to go softer and spread the load over a larger area,” explains Astrolab’s founder, Jaret Matthews. While the moon’s nighttime temperatures of around -200 degrees Celsius (around -328 Fahrenheit) would cause conventional rubber tires to become glass-like and shatter, Astrolab’s solution is intended to keep the rover from sinking into the unconsolidated lunar dust—or regolith—that covers the environment.
