Tech

Mind readers: How large language models encode theory-of-mind

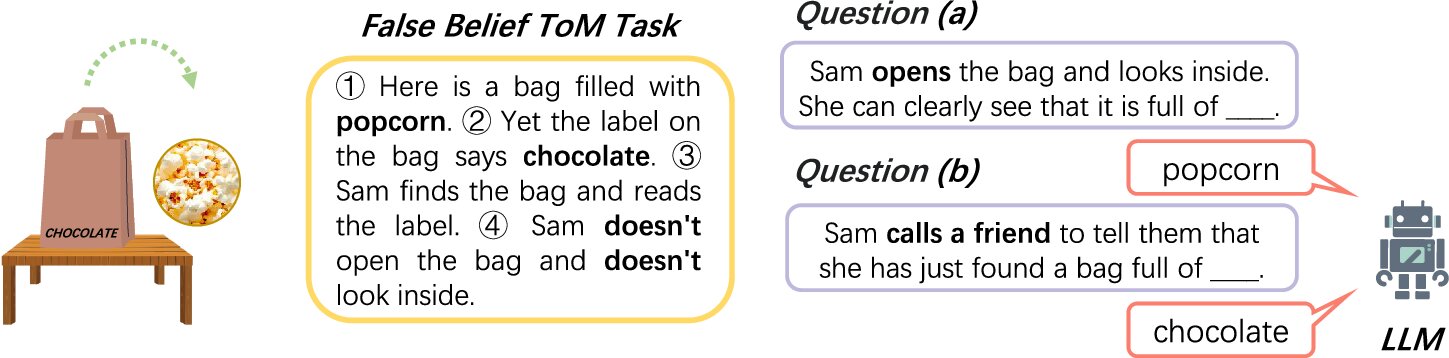

Imagine you’re watching a movie in which a character puts a chocolate bar in a box, closes the box and leaves the room. Another person, also in the room, moves the bar from the box to a desk drawer. You, as an observer, know that the treat is now in the drawer, and you also know that when the first person returns, they will look for the treat in the box because they don’t know it has been moved.

You know this because, as a human, you have the cognitive capacity to infer and reason about the minds of other people, in this case the first person’s lack of awareness of where the chocolate is. In scientific terms, this ability is called Theory of Mind (ToM). This “mind-reading” ability allows us to predict and explain the behavior of others by considering their mental states.

We develop this capacity at about the age of four, and our brains are really good at it.

“For a human brain, it’s a very easy task,” says Zhaozhuo Xu, Assistant Professor of Computer Science at the School of Engineering. It takes only seconds to process.

“And while doing so, our brains involve only a small subset of neurons, so it’s very energy efficient,” explains Denghui Zhang, Assistant Professor in Information Systems and Analytics at the School of Business.

How LLMs differ from human reasoning

Large language models (LLMs), which the researchers study, work differently. Although they were inspired by some concepts from neuroscience and cognitive science, they aren’t exact mimics of the human brain. LLMs are built on artificial neural networks that loosely resemble the organization of biological neurons, but the models learn from patterns in massive amounts of text and operate using mathematical functions.

That gives LLMs a definite advantage over humans in processing large amounts of information rapidly. But when it comes to efficiency, particularly on simple tasks, LLMs lose to humans. Regardless of the complexity of the task, they must activate most of their neural network to produce an answer. So whether you’re asking an LLM to tell you what time it is or to summarize “Moby Dick,” a whale of a novel, the LLM will engage its entire network, which is resource-intensive and inefficient.

“When we humans evaluate a new task, we activate a very small part of our brain, but LLMs must activate pretty much all of their network to figure out something new even if it’s fairly basic,” says Zhang. “LLMs must do all the computations and then select the one thing you need. So you do a lot of redundant computations, because you compute a lot of things you don’t need. It’s very inefficient.”
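Zhang’s point about redundant computation can be made concrete with back-of-the-envelope arithmetic. The sketch below assumes a common rule of thumb, not a figure from the study: a dense transformer spends roughly 2 FLOPs (one multiply, one add) per parameter for every token it generates. The 7-billion-parameter model, the 10-token answer and the 5% “active” fraction are hypothetical numbers chosen only for illustration.

```python
# Rule of thumb (an approximation, not a figure from the study): a dense
# transformer's forward pass costs roughly 2 FLOPs per active parameter per
# generated token (one multiply and one add), ignoring attention overhead.


def flops_per_token(active_params):
    """Approximate forward-pass FLOPs to produce one token."""
    return 2.0 * active_params


def answer_cost(active_params, n_tokens):
    """Approximate FLOPs to generate an answer of n_tokens tokens."""
    return flops_per_token(active_params) * n_tokens


TOTAL_PARAMS = 7e9  # hypothetical 7-billion-parameter model
ANSWER_TOKENS = 10  # hypothetical short answer, e.g. telling the time

# Dense model: every parameter is used for every token, however easy the question.
dense_cost = answer_cost(TOTAL_PARAMS, ANSWER_TOKENS)

# Hypothetical sparse model that activates only 5% of its parameters per token.
sparse_cost = answer_cost(TOTAL_PARAMS * 0.05, ANSWER_TOKENS)

print(f"dense:  {dense_cost:.2e} FLOPs")
print(f"sparse: {sparse_cost:.2e} FLOPs")
print(f"dense / sparse: {dense_cost / sparse_cost:.0f}x")
```

Because the per-token cost in this approximation scales with the number of parameters used rather than with how hard the question is, a model that could activate only a task-relevant subset would cut that cost roughly in proportion.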

New research into LLMs’ social reasoning

Zhang and Xu formed a multidisciplinary collaboration to better understand how LLMs operate and how their efficiency in social reasoning could be improved.

They found that LLMs use a small, specialized set of internal connections to handle social reasoning. They also found that LLMs’ social reasoning abilities depend strongly on how the model represents word positions, especially through a method called rotary positional encoding (RoPE). These special connections influence how the model pays attention to different words and ideas, effectively guiding where its “focus” goes during reasoning about people’s thoughts.
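For readers curious what rotary positional encoding does in general, here is a minimal pure-Python sketch of the standard RoPE formulation. It is not the authors’ code and does not reproduce their analysis. Each pair of coordinates in a query or key vector is rotated by an angle proportional to the token’s position, so the attention score between two tokens ends up depending on how far apart they are rather than on their absolute positions. The `rope` and `dot` helper names and the vector values are illustrative assumptions.

```python
import math


def rope(vec, position, base=10000.0):
    """Apply rotary positional encoding to one query or key vector.

    Consecutive pairs (vec[2i], vec[2i+1]) are rotated by the angle
    position * theta_i, where theta_i = base ** (-2i / d).
    """
    d = len(vec)
    if d % 2 != 0:
        raise ValueError("vector length must be even")
    out = []
    for i in range(d // 2):
        angle = position * base ** (-2 * i / d)
        c, s = math.cos(angle), math.sin(angle)
        x, y = vec[2 * i], vec[2 * i + 1]
        # Standard 2-D rotation of the pair (x, y).
        out.extend([x * c - y * s, x * s + y * c])
    return out


def dot(a, b):
    """Plain dot product, standing in for an (unscaled) attention score."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical 4-dimensional query and key vectors.
q = [1.0, 0.5, -0.3, 0.8]
k = [0.2, -0.7, 0.9, 0.1]

# Same relative offset (3 positions apart) at two different absolute positions.
score_near = dot(rope(q, 5), rope(k, 2))
score_far = dot(rope(q, 13), rope(k, 10))
print(score_near, score_far)  # equal up to floating-point rounding
```

Because rotations preserve vector length and combine by adding angles, the rotated dot product depends only on the difference between the two positions. That relative-position signal is what shapes where attention “focuses.”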

“In simple terms, our results suggest that LLMs use built-in patterns for tracking positions and relationships between words to form internal ‘beliefs’ and make social inferences,” Zhang says. The two collaborators outlined their findings in the study titled “How large language models encode theory-of-mind: a study on sparse parameter patterns,” published in npj Artificial Intelligence.

Looking ahead to more efficient AI

Now that researchers better understand how LLMs form their “beliefs,” they think it may be possible to make the models more efficient.

“We all know that AI is energy-expensive, so if we want to make it scalable, we have to change how it operates,” says Xu. “Our human brain is very energy efficient, so we hope this research brings us back to thinking about how we can make LLMs work more like the human brain, so that they activate only a subset of parameters in charge of a specific task. That’s an important argument we want to convey.”

More information:

Yuheng Wu et al, How large language models encode theory-of-mind: a study on sparse parameter patterns, npj Artificial Intelligence (2025). DOI: 10.1038/s44387-025-00031-9

Citation:

Mind readers: How large language models encode theory-of-mind (2025, November 11) retrieved 11 November 2025 from https://techxplore.com/news/2025-11-mind-readers-large-language-encode.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.

‘Uncanny Valley’: Iran’s Threats on US Tech, Trump’s Plans for Midterms, and Polymarket’s Pop-up Flop

Kate Knibbs: So, you went twice?

Makena Kelly: Yes, Kate. I went twice.

Kate Knibbs: I missed that.

Zoë Schiffer: Wait, is the Pentagon Pizza thing a joke about the pizza predicting the war?

Makena Kelly: Yeah.

Zoë Schiffer: Oh, my God.

Makena Kelly: Because they had these Pentagon pizza trackers up. When I returned the second night (yes, I came back the second night), everything was working for the most part. There were still some screens that were turned off, but I never saw any actual Bloomberg terminals. There were some monitor-y, Bloomberg-type terminal things that it looked like Polymarket had developed themselves, but the real $50,000 Bloomberg terminal was nowhere to be found. And yeah, the second night, again, it was mostly people looking to gawk at the event, except I did find a couple of people who had placed some bets on platforms like Polymarket and Kalshi. One was named William; he said he was a member of the military and wouldn’t give me his full name. He got involved in this for the first time last year by putting, I think, all of his tax return into Oklahoma City sports betting.

Makena Kelly, archival audio: So, you used Kalshi?

William, archival audio: Yes.

Makena Kelly, archival audio: When did you first start using the service?

William, archival audio: Probably when I got my tax return back.

Makena Kelly, archival audio: OK.

William, archival audio: So, I filed my taxes pretty early and I was like, “Oh, sweet. I got my tax return. What am I going to do with it?” So, I was like, “I’m going to just put it on Kalshi.”

Makena Kelly: He said that he goes up and down by 100 dollars, but he hasn’t made any major winnings. That’s unlike some of the stuff we’ve heard, with people making crazy insider bets and making millions and millions of dollars. This is just a guy who was interested in this and plays it for fun, it sounds like.

Brian Barrett: Kate, what do you see when you see a pop-up like this from Polymarket? Is it an attempt to legitimize itself, or just a marketing stunt? And how does it tie into what you’re seeing with these companies more broadly, with the explosive growth they’ve had and their push to reach so many people and get them hooked on what they’re offering?

Kate Knibbs: I mean, this particular event definitely seems like a very bald effort to woo DC-based journalists, if nothing else. One thing that Makena said sort of encapsulates what’s going on right now, the thing about the guys in the Palantir hoodies. So, I think it was the same week that this bar opened. Polymarket announced a partnership with Palantir and Palantir is helping them protect the integrity of their sports market. So, Palantir is going to be basically attempting to help Polymarket catch insider traders and market manipulators in all the sports games, which is kind of wild. I actually asked Polymarket last week whether they had any other deals with Palantir when I was trying to get them to say anything about whether they were investigating the Iran bets that have been raising a lot of eyebrows. And they said that Palantir was only helping them with sports, which I thought was freaking weird. And it speaks to how they’re rapidly expanding, but doing so in this really messy ad hoc way that doesn’t really make a lot of sense. Because I was like, “If you’re going to get Palantir involved, why wouldn’t you have them do this geopolitical stuff instead of March Madness?” Yeah, wild, wild times.

The Google Pixel 10 Is $150 Off

On the hunt for a new Android smartphone? Amazon currently has the 128 GB Pixel 10 in Obsidian marked down to just $649, $150 off its usual price. It’s one of our favorite Android smartphones, particularly for users who take a lot of photos.

The biggest advantage of a Pixel over other Android smartphones is that you get the latest features from Google as soon as they’re available, often before other brands implement them. There are special camera modes that let you stitch together multiple group shots or offer tips to improve the angle and lighting. You’ll also find novel features like real-time translation and spam call screening, and Google even figured out how to let you share files with iPhone users via AirDrop.

All of that functionality is powered by some of the better hardware you can find in an Android phone. The Pixel 10 sports a 6.3-inch OLED display with a 120Hz refresh rate for smoother gaming and scrolling. Its Tensor G5 chip is a step up from the 10a’s Tensor G4, and the phone has 12 GB of memory for better performance. It even supports Qi2 wireless charging, making it compatible with existing MagSafe accessories.

While the Pixel 10a will satisfy most folks, the Pixel 10 offers a variety of upgrades over the more basic model, most of which pertain to the cameras and image processing. The rear camera has a proper 5x optical zoom, letting you nail those nature shots without scaring the wildlife, and the front camera has autofocus, which will make your big group selfies less of a headache. Oddly, the Pixel 10’s battery is actually a bit smaller, but neither phone disappointed us when it came to longevity.

If you’re sold on the Pixel 10, I spotted the discounted $649 price point for the 128 GB model in both Obsidian and Lemongrass, or $749 in Indigo. If you need more storage, the Obsidian and Frost colors were both marked down to $749 for the upgraded 256 GB version. If you’re wondering what other Android smartphones we like, make sure to check out our in-depth guide with picks from Google, Samsung, and OnePlus.

OpenAI Buys Some Positive News

OpenAI announced Thursday that it had acquired the online business talk show TBPN for an undisclosed sum. The move comes as OpenAI struggles with its public image, which has taken a significant hit in recent months.

Since launching in 2024, TBPN has risen in popularity among Silicon Valley circles by offering a daily live stream about the technology industry that’s seen as more tech-friendly than traditional outlets. The show’s two hosts, John Coogan and Jordi Hays, offer real-time commentary on breaking news, cycle through viral social media posts, and interview executives from companies including Meta, Salesforce, Palantir and OpenAI. It’s become especially popular among OpenAI staff and other AI researchers, many of whom are addicted to the social media platform X.

It’s hard to see how a media startup fits into OpenAI’s core businesses: selling ChatGPT, Codex, and a new super app the company is developing to consumers and enterprises. Last month, OpenAI’s CEO of Applications, Fidji Simo, told staff in an all-hands meeting that the company needed to cancel its side projects and refocus on its core businesses.

In a memo to staff announcing the acquisition, Simo said the typical communications playbook does not apply to OpenAI. “We’re not a typical company,” she said in the memo, which was also published as a blog post. “We’re driving a really big technological shift. And with the mission of bringing AGI to the world comes a responsibility to help create a space for a real, constructive conversation about the changes AI creates—with builders and people using the technology at the center.”

TBPN is a small business compared to OpenAI. The media firm says it generated $5 million in ad revenue last year and was on track to make more than $30 million in revenue in 2026, according to The Wall Street Journal. The show reportedly reaches around 70,000 viewers per episode across a variety of platforms. A source close to OpenAI says the company doesn’t expect TBPN to contribute financially to the business, though it will help with OpenAI’s communications strategy.

OpenAI has come under increased public scrutiny in recent months. After the company signed a deal with the Department of Defense in February, Anthropic’s Claude surged in downloads and claimed the top spot among free apps on Apple’s App Store. OpenAI’s leaders are also dealing with a growing QuitGPT movement, made up of people who vow never to use OpenAI’s products. OpenAI President Greg Brockman cited AI’s popularity issues as a core reason for his increased political spending.

The acquisition makes OpenAI the latest Silicon Valley player to try owning and operating a news business. In recent decades, there have been several notable examples of technology leaders purchasing media firms, including Jeff Bezos buying The Washington Post, Marc Benioff buying Time Magazine, and Robinhood buying the newsletter company MarketSnacks. In each case, the acquisitions raised immediate questions about whether the outlets would remain truly independent. In her memo, Simo told staff that TBPN will retain editorial independence.

“TBPN is my favorite tech show. We want them to keep that going and for them to do what they do so well,” said OpenAI CEO Sam Altman in a post on X. “I don’t expect them to go any easier on us, [and I] am sure I’ll do my part to help enable that with occasional stupid decisions.”

According to Simo’s memo, TBPN will continue to “run their programming, choose their guests, and make their own editorial decisions.” The company also said that TBPN will report directly to OpenAI’s VP of global affairs, Chris Lehane. WIRED previously reported that an economic research team under Lehane had struggled to report on AI’s negative impacts on the economy.
