Tech

The Race to Build the DeepSeek of Europe Is On

Against that backdrop, Europe’s reliance on American-made AI begins to look more and more like a liability. In a worst-case scenario, though experts consider the possibility remote, the US could choose to withhold access to AI services and crucial digital infrastructure. More plausibly, the Trump administration could use Europe’s dependence as leverage as the two sides continue to iron out a trade deal. “That dependency is a liability in any negotiation, and we are going to be negotiating increasingly with the US,” says Taddeo.

The European Commission, White House, and UK Department for Science, Innovation and Technology did not respond to requests for comment.

To hedge against those risks, European nations have attempted to bring the production of AI onshore through funding programs, targeted deregulation, and partnerships with academic institutions. Some efforts, like Apertus and GPT-NL, have focused on building competitive large language models for native European languages.

For as long as ChatGPT or Claude continues to outperform Europe-made chatbots, though, America’s lead in AI will only grow. “These domains are very often winner-takes-all. When you have a very good platform, everybody goes there,” says Nejdl. “Not being able to produce state-of-the-art technology in this field means you will not catch up. You will always just feed the bigger players with your input, so they will get even better and you will be more behind.”

Mind the Gap

It is unclear precisely how far the UK or EU intends to take the push for “digital sovereignty,” lobbyists claim. Does sovereignty require total self-sufficiency across the sprawling AI supply chain, or only an improved capability in a narrow set of disciplines? Does it demand the exclusion of US-based providers, or only the availability of domestic alternatives? “It’s quite vague,” says Boniface de Champris, senior policy manager at the Computer & Communications Industry Association, a membership organization for technology companies. “It seems to be more of a narrative at this stage.”

Neither is there broad agreement as to which policy levers to pull to create the conditions for Europe to become self-sufficient. Some European suppliers advocate for a strategy whereby European businesses would be required, or at least incentivized, to buy from homegrown AI firms, similar to China’s reported approach to its domestic processor market. Unlike grants and subsidies, such an approach would help to seed demand, argues Ying Cao, CTO at Magics Technologies, a Belgium-based outfit developing AI-specific processors for use in space. “That’s more important than simply access to capital,” says Cao. “The most important thing is that you can sell your products.” But those who advocate for open markets and deregulation claim that trying to cut out US-based AI companies risks putting domestic businesses at a disadvantage to global peers, who remain free to choose whichever AI products suit them best. “From our perspective, sovereignty means having choice,” says de Champris.

But for all the disagreement over policy minutiae, there is a broad belief that bridging the performance gap to the American leaders remains eminently possible for even budget- and resource-constrained labs, as DeepSeek illustrated. “If I would already think we will not catch up, I would not [try],” says Nejdl. SOOFI, the open source model development project in which Nejdl is involved, intends to put out a competitive general purpose language model with roughly 100 billion parameters within the next year.

“Progress in this field will not to the larger part depend anymore on the biggest GPU clusters,” claims Nejdl. “We will be the European DeepSeek.”

Tech

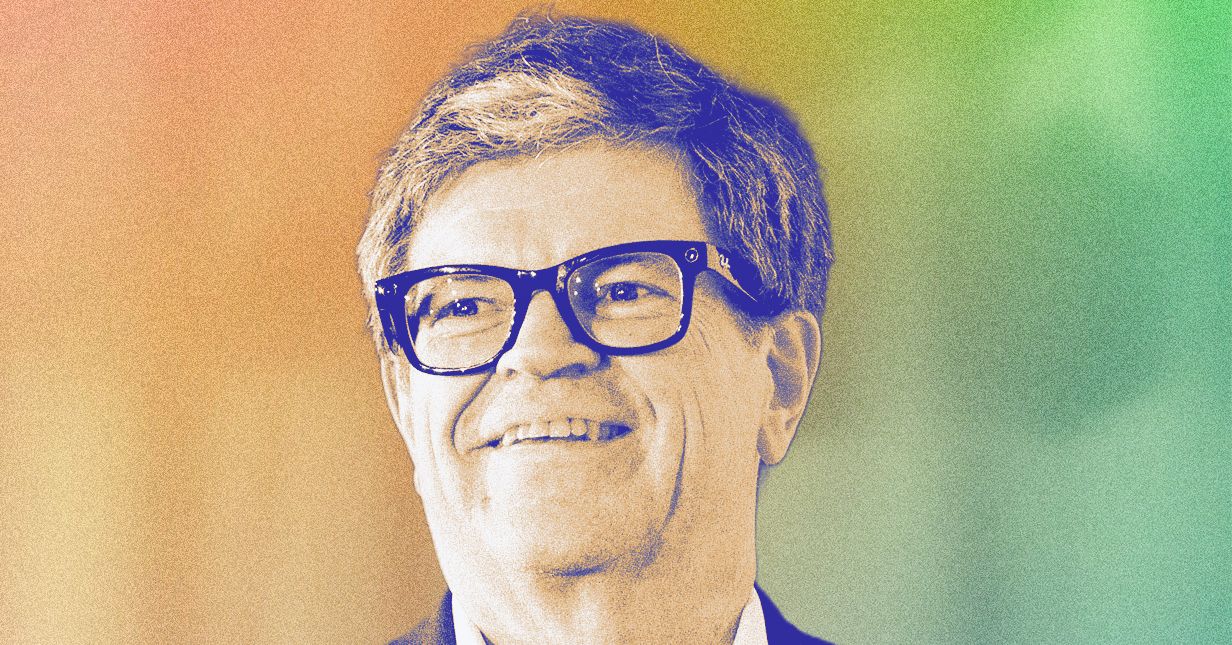

Yann LeCun Raises $1 Billion to Build AI That Understands the Physical World

Advanced Machine Intelligence (AMI), a new Paris-based startup cofounded by Meta’s former chief AI scientist Yann LeCun, announced Monday it has raised more than $1 billion to develop AI world models.

LeCun argues that most human reasoning is grounded in the physical world, not language, and that AI world models are necessary to develop true human-level intelligence. “The idea that you’re going to extend the capabilities of LLMs [large language models] to the point that they’re going to have human-level intelligence is complete nonsense,” he said in an interview with WIRED.

The financing, which values the startup at $3.5 billion, was co-led by investors such as Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions. Other notable backers include Mark Cuban, former Google CEO Eric Schmidt, and French billionaire and telecommunications executive Xavier Niel.

AMI (pronounced like the French word for friend) aims to build “a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe,” the company says in a press release. The startup says it will be global from day one, with offices in Paris, Montreal, Singapore, and New York, where LeCun will continue working as a New York University professor in addition to leading the startup. AMI will be the first commercial endeavor for LeCun since his departure from Meta in November 2025.

LeCun’s startup represents a bet against many of the world’s biggest AI labs, like OpenAI, Anthropic, and even his former workplace, Meta, which believe that scaling up LLMs will eventually deliver AI systems with human-level intelligence or even superintelligence. LLMs have powered viral products such as ChatGPT and Claude Code, but LeCun has been one of the AI industry’s most prominent researchers speaking out about the limitations of these AI models. LeCun is well known for being outspoken, but as a pioneer of modern AI who won a Turing Award in 2018, his skepticism carries weight.

LeCun says AMI aims to work with companies in manufacturing, biomedical, robotics, and other industries that have lots of data. For example, he says AMI could build a realistic world model of an aircraft engine and work with the manufacturer to help them optimize for efficiency, minimize emissions, or ensure reliability.

AMI was cofounded by LeCun and several leaders he worked with at Meta, including the company’s former director of research science, Michael Rabbat; former vice president of Europe, Laurent Solly; and former senior director of AI research, Pascale Fung. Other cofounders include Alexandre LeBrun, former CEO of the AI health care startup Nabla, who will serve as AMI’s CEO, and Saining Xie, a former Google DeepMind researcher who will be the startup’s chief science officer.

The Case for World Models

LeCun does not dismiss the overall utility of LLMs. Rather, in his view, these AI models are simply the tech industry’s latest promising trend, and their success has created a “kind of delusion” among the people who build them. “It’s true that [LLMs] are becoming really good at generating code, and it’s true that they are probably going to become even more useful in a wide area of applications where code generation can help,” says LeCun. “That’s a lot of applications, but it’s not going to lead to human-level intelligence at all.”

LeCun has been working on world models for years inside of Meta, where he founded the company’s Fundamental AI Research lab, FAIR. But he’s now convinced his research is best done outside the social media giant. He says it’s become clear to him that the strongest applications of world models will be selling them to other enterprises, which doesn’t fit neatly into Meta’s core consumer business.

As AI world models like Meta’s Joint-Embedding Predictive Architecture (JEPA) became more sophisticated, “there was a reorientation of Meta’s strategy where it had to basically catch up with the industry on LLMs and kind of do the same thing that other LLM companies are doing, which is not my interest,” says LeCun. “So sometime in November, I went to see Mark Zuckerberg and told him. He’s always been very supportive of [world model research], but I told him I can do this faster, cheaper, and better outside of Meta. I can share the cost of development with other companies … His answer was, OK, we can work together.”

Nvidia Is Planning to Launch an Open-Source AI Agent Platform

Nvidia is planning to launch an open-source platform for AI agents, people familiar with the company’s plans tell WIRED.

The chipmaker has been pitching the product, referred to as NemoClaw, to enterprise software companies. The platform will allow these companies to dispatch AI agents to perform tasks for their own workforces. Companies will be able to access the platform regardless of whether their products run on Nvidia’s chips, sources say.

The move comes as Nvidia prepares for its annual developer conference in San Jose next week. Ahead of the conference, Nvidia has reached out to companies including Salesforce, Cisco, Google, Adobe, and CrowdStrike to forge partnerships for the agent platform. It’s unclear whether these conversations have resulted in official partnerships. Since the platform is open source, it’s likely that partners would get free, early access in exchange for contributing to the project, sources say. Nvidia plans to offer security and privacy tools as part of this new open-source agent platform.

Nvidia did not respond to a request for comment. Representatives from Cisco, Google, Adobe, and CrowdStrike also did not respond to requests for comment. Salesforce did not provide a statement prior to publication.

Nvidia’s interest in agents comes as people are embracing “claws,” or open-source AI tools that run locally on a user’s machine and perform sequential tasks. Claws are often described as self-learning, in that they’re supposed to automatically improve over time. Earlier this year, an AI agent known as OpenClaw—which was first called Clawdbot, then Moltbot—captivated Silicon Valley due to its ability to run autonomously on personal computers and complete work tasks for users. OpenAI ended up acquiring the project and hiring the creator behind it.

OpenAI and Anthropic have made significant improvements in model reliability in recent years, but their chatbots still require hand-holding. Purpose-built AI agents or claws, on the other hand, are designed to execute multiple steps without as much human supervision.

The use of claws within enterprise environments is controversial. WIRED previously reported that some tech companies, including Meta, have asked employees to refrain from using OpenClaw on their work computers, due to the unpredictability of the agents and potential security risks. Last month a Meta employee who oversees safety and alignment for the company’s AI lab publicly shared a story about an AI agent going rogue on her machine and mass-deleting her emails.

For Nvidia, NemoClaw appears to be part of an effort to court enterprise software companies by offering additional layers of security for AI agents. It’s also another step in the company’s embrace of open-source AI models, part of a broader strategy to maintain its dominance in AI infrastructure at a time when leading AI labs are building their own custom chips. Nvidia’s software strategy until now has been heavily reliant on its CUDA platform, a famously proprietary system that locks developers into building software for Nvidia’s GPUs and has created a crucial “moat” for the company.

Last month The Wall Street Journal reported that Nvidia also plans to reveal a new chip system for inference computing at its developer conference. The system will incorporate a chip designed by the startup Groq, with which Nvidia entered into a multibillion-dollar licensing agreement late last year.

Paresh Dave and Maxwell Zeff contributed to this report.

Anthropic Claims Pentagon Feud Could Cost It Billions

Anthropic executives allege that current customers and prospective ones have been demanding new terms and even backing out of negotiations since the US Department of Defense labeled the AI startup a supply-chain risk late last month, according to court papers that also revealed new financial details about the company.

Hundreds of millions of dollars in expected revenue this year from work tied to the Pentagon is already at risk for Anthropic, the company’s chief financial officer, Krishna Rao, wrote in a court filing on Monday. But if the government has its way and pressures a broad range of companies to stop doing business with the AI startup, regardless of any ties to the military, Anthropic could ultimately lose billions of dollars in sales, he stated. The company’s all-time sales, since it began commercializing its technology in 2023, exceed $5 billion, according to Rao.

Anthropic’s revenue exploded as its Claude models began outperforming rivals and showing advanced capabilities in areas such as generating software code. But the company spends heavily on computing infrastructure and remains deeply unprofitable. Rao specified that Anthropic has spent over $10 billion to train and deploy its models.

Anthropic chief commercial officer Paul Smith provided several examples of partners who have privately raised concerns to the AI startup in recent days. He said a financial services customer paused negotiations over a $15 million deal because of the supply-chain label, and two leading financial services companies have refused to close deals valued together at $80 million unless they gain the right to unilaterally cancel their contracts for any reason. A grocery store chain canceled a sales meeting, citing the supply-chain-risk designation, Smith added.

“All have taken steps that reflect deep distrust and a growing fear of associating with Anthropic,” Smith wrote.

The executives’ comments are part of statements from six Anthropic leaders in support of a preliminary order that would allow the San Francisco company to continue doing business with the Department of Defense until lawsuits about the supply-chain-risk issue are resolved.

Anthropic has sued the Trump administration in two courts. A lawsuit filed in San Francisco federal court on Monday alleges the government violated the company’s free speech rights. A separate case filed Monday in the federal appeals court in Washington, DC, accuses the Defense Department of unfairly discriminating and retaliating against Anthropic.

The company is seeking a hearing as soon as Friday in San Francisco for a temporary reprieve. The legal battle and sales fallout follow a weeks-long dispute between Anthropic and the Pentagon over the potential use of AI technologies for mass domestic surveillance and autonomous lethal weapons. Anthropic contends AI is not yet capable of safely undertaking the tasks, while the Pentagon wants the right to make that judgment on its own.

By law, the supply-chain designation prevents a narrow set of companies that do business with the Pentagon from incorporating Anthropic into their systems. But Defense secretary Pete Hegseth has cast a wider net. He posted on X late last month that “effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.”

Rao wrote that the Pentagon reinforced the message by reaching out to several startups about their use of Claude. He said he learned of the outreach from an investor that Anthropic and the smaller companies share. The startups “have grown worried and uncertain about their ability to use Claude,” Rao wrote.

The Pentagon declined to comment on the lawsuits and did not immediately respond to a request for comment about Rao’s allegation about the outreach.
