Tech

A firewall for science: AI tool identifies 1,000 ‘questionable’ journals

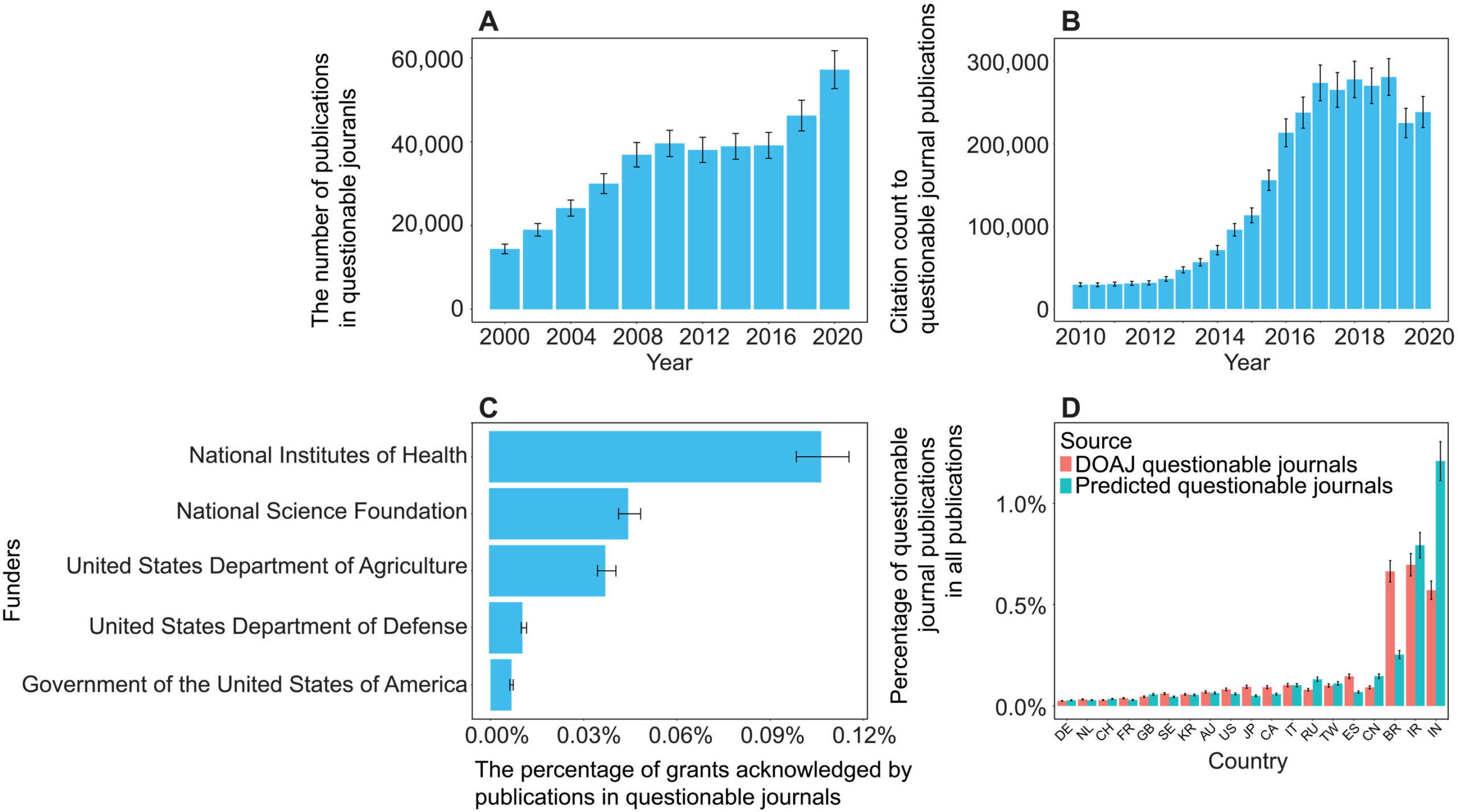

A team of computer scientists led by the University of Colorado Boulder has developed a new artificial intelligence platform that automatically seeks out “questionable” scientific journals.

The study, published Aug. 27 in the journal Science Advances, tackles an alarming trend in the world of research.

Daniel Acuña, lead author of the study and associate professor in the Department of Computer Science, gets a reminder of that trend several times a week in his email inbox. The spam messages come from people who purport to be editors at scientific journals, usually ones Acuña has never heard of, and who offer to publish his papers for a hefty fee.

Such publications are sometimes referred to as “predatory” journals. They target scientists, convincing them to pay hundreds or even thousands of dollars to publish their research without proper vetting.

“There has been a growing effort among scientists and organizations to vet these journals,” Acuña said. “But it’s like whack-a-mole. You catch one, and then another appears, usually from the same company. They just create a new website and come up with a new name.”

His group’s new AI tool automatically screens scientific journals, evaluating their websites and other online data for certain criteria: Do the journals have an editorial board featuring established researchers? Do their websites contain a lot of grammatical errors?

Acuña emphasizes that the tool isn’t perfect. Ultimately, he thinks human experts, not machines, should make the final call on whether a journal is reputable.

But in an era when prominent figures are questioning the legitimacy of science, stopping the spread of questionable publications has become more important than ever before, he said.

“In science, you don’t start from scratch. You build on top of the research of others,” Acuña said. “So if the foundation of that tower crumbles, then the entire thing collapses.”

The shakedown

When scientists submit a new study to a reputable publication, that study usually undergoes a practice called peer review. Outside experts read the study and evaluate it for quality—or, at least, that’s the goal.

A growing number of companies have sought to circumvent that process to turn a profit. In 2009, Jeffrey Beall, a librarian at CU Denver, coined the term “predatory journals” to describe these publications.

Often, they target researchers outside of the United States and Europe, such as in China, India and Iran—countries where scientific institutions may be young, and the pressure and incentives for researchers to publish are high.

“They will say, ‘If you pay $500 or $1,000, we will review your paper,’” Acuña said. “In reality, they don’t provide any service. They just take the PDF and post it on their website.”

A few different groups have sought to curb the practice. Among them is a nonprofit organization called the Directory of Open Access Journals (DOAJ). Since 2003, volunteers at the DOAJ have flagged thousands of journals as suspicious based on six criteria. (Reputable publications, for example, tend to include a detailed description of their peer review policies on their websites.)

But keeping pace with the spread of those publications has been daunting for humans.

To speed up the process, Acuña and his colleagues turned to AI. The team trained its system using the DOAJ’s data, then asked the AI to sift through a list of nearly 15,200 open-access journals on the internet.

Among those journals, the AI initially flagged more than 1,400 as potentially problematic.

Acuña and his colleagues asked human experts to review a subset of the suspicious journals. The AI made mistakes, according to the humans, flagging an estimated 350 publications as questionable when they were likely legitimate. That still left more than 1,000 journals that the researchers identified as questionable.
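As a rough check on those figures, the arithmetic can be sketched in a few lines of Python. The counts are the article's rounded numbers ("more than 1,400", "an estimated 350", "nearly 15,200"), so the results are approximations rather than the study's exact values, and the variable names are illustrative only.

```python
# Rounded figures quoted in the article; the study's exact counts may differ.
flagged = 1_400          # journals the AI initially flagged ("more than 1,400")
false_positives = 350    # flags judged likely legitimate ("an estimated 350")
screened = 15_200        # open-access journals screened ("nearly 15,200")

# Journals that remain questionable after the human review estimate.
likely_questionable = flagged - false_positives

# Share of the AI's flags that held up, and share of all screened journals.
precision = likely_questionable / flagged
share_of_screened = likely_questionable / screened

print(likely_questionable)
print(f"{precision:.0%} of flags held up")
print(f"{share_of_screened:.1%} of screened journals")
```

On these rounded inputs, roughly three in four flags held up, which is consistent with Acuña's framing of the tool as a prescreening aid rather than a final judge.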

“I think this should be used as a helper to prescreen large numbers of journals,” he said. “But human professionals should do the final analysis.”

Acuña added that the researchers didn’t want their system to be a “black box” like some other AI platforms.

“With ChatGPT, for example, you often don’t understand why it’s suggesting something,” Acuña said. “We tried to make ours as interpretable as possible.”

The team discovered, for example, that questionable journals published an unusually high number of articles. They also included authors with a larger number of affiliations than more legitimate journals, and authors who cited their own research, rather than the research of other scientists, to an unusually high level.
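To make one of those signals concrete, here is a minimal sketch of how a self-citation rate could be computed for a single article. This is not the study's method: the `Article` class, its fields, and the definition used (a reference counts as a self-citation if it shares at least one author with the citing article) are assumptions chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Article:
    """Hypothetical record: an article's authors and its references' authors."""
    authors: set[str]
    cited_authors: list[set[str]]  # one author set per cited work


def self_citation_rate(article: Article) -> float:
    """Fraction of references that share at least one author with the article."""
    if not article.cited_authors:
        return 0.0
    self_cites = sum(
        1 for cited in article.cited_authors if article.authors & cited
    )
    return self_cites / len(article.cited_authors)


# Example: two of the four references share an author with the article.
example = Article(
    authors={"A. Author", "B. Author"},
    cited_authors=[{"A. Author"}, {"C. Other"}, {"B. Author", "D. Other"}, {"E. Other"}],
)
print(self_citation_rate(example))
```

A journal-level signal could then be an average of this rate across the journal's articles, though how the researchers actually aggregated their features is not described in the article.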

The new AI system isn’t publicly accessible, but the researchers hope to make it available to universities and publishing companies soon. Acuña sees the tool as one way that researchers can protect their fields from bad data—what he calls a “firewall for science.”

“As a computer scientist, I often give the example of when a new smartphone comes out,” he said. “We know the phone’s software will have flaws, and we expect bug fixes to come in the future. We should probably do the same with science.”

More information:

Han Zhuang et al, Estimating the predictability of questionable open-access journals, Science Advances (2025). DOI: 10.1126/sciadv.adt2792

Citation:

A firewall for science: AI tool identifies 1,000 ‘questionable’ journals (2025, August 30), retrieved 30 August 2025 from https://techxplore.com/news/2025-08-firewall-science-ai-tool-journals.html


Tech

‘The Last Airbender’ Leaked Online. Some Fans Say Paramount Deserves the Fallout

The online leak of a full version of Avatar: Aang, The Last Airbender—a highly anticipated animated film in a multimedia fantasy franchise—has divided passionate fans while upsetting those who spent years working on the film.

The leaks began on X late on Saturday night, about six months before Aang was scheduled to premiere on Paramount+. User @ImStillDissin posted two short clips from the film. “Nickelodeon accidentally emailed me the entire Avatar aang movie,” he claimed. He also threatened to stream the entire movie if Paramount didn’t release an official trailer, and he posted a still from the movie’s end credits, revealing previously undisclosed voice-over cast and roles. The media from @ImStillDissin’s posts were later hit with copyright strikes and removed.

But within 48 hours, links to download the full movie appeared on 4chan and X, where some users also directly streamed the film. Across the web, fans said they had successfully pirated and watched what appeared to be a nearly finished and “beautiful” animated film.

While some argued that Paramount deserved to be punished because of certain creative and marketing decisions around the movie, others noted what a blow the leak was to the animators and production crew. A number of those team members took to social media to convey their sadness and frustration.

“We worked on the aang movie for years with the expectation that’d [sic] we’d get to celebrate all of our hard work in theaters. Just to see people unceremoniously leak the film and pass our shots around on twitter like candy,” animator Julia Schoel wrote Tuesday on X.

The user behind @ImStillDissin, who would not reveal his real name due to fear of legal repercussions, tells WIRED that he obtained the movie almost by chance and did not expect his posts to set off such a crisis in the entertainment world. “When I posted those clips I was purely trolling,” he says. “I was expecting a day of clout farming at best, not for the whole thing to blow up like this.”

(While WIRED has done its due diligence in verifying that the person speaking to us was behind the @ImStillDissin X account, we acknowledge that the hacking community is known to troll.)

According to @ImStillDissin, a screen-grabbed version of Avatar: Aang, The Last Airbender was circulating among people he knew from his days in the hacking community, one of whom shared it with him. “Broadly speaking, the supply chain for movies and TV is rife with insecure companies and vendors and lax checks,” he claims. He notes that two different SpongeBob SquarePants movies leaked months before their release dates in 2024. “Someone on 4chan who wasn’t happy at me drip-feeding stuff posted a copy of a draft script [of the new Avatar film] from like two years back,” says @ImStillDissin.

Neither Nickelodeon nor its parent company, Paramount, has confirmed that a hack took place or issued a statement on the matter. They also did not respond to requests for comment.

Originally announced in 2021, Avatar: Aang, The Last Airbender marked the first production for Avatar Studios, a division of Nickelodeon’s animation department.

Some people felt justified in pirating and sharing the movie due to the recasting of voice actors. Last year, during a Reddit AMA, casting director Jenny Jue wrote that the voice cast from the Avatar TV show that aired on Nickelodeon in the 2000s was not returning due to efforts to “match actors’ ethnic/racial background to the characters they’re portraying.”

Tech

NASA Wants to Put Nuclear Reactors on the Moon

Having demonstrated that it has the operational capability to transport humans safely to the moon and back, the United States is moving on to its next major aim: It wants nuclear reactors in orbit and on the lunar surface by 2030. For such a feat, the National Aeronautics and Space Administration will have to work in conjunction with the Department of Defense and the Department of Energy.

In a post on X, the White House Office of Science and Technology Policy (OSTP) unveiled a document with new guidelines for federal agencies to establish the space nuclear technology road map for the coming years. This, they say, will ensure “US space superiority.”

At present, space instruments use solar power to operate. However, this is considered impractical for more complex purposes. Although technically there is always sunlight, the power is intermittent and almost always requires bulky batteries to store it.

Reactors produce fairly continuous energy for years through nuclear fission. They can also be used for so-called nuclear electric propulsion. Continuous output makes them the most viable option for lunar base subsistence, but they can also allow spacecraft to undertake long or complex missions without worrying about depleting a limited supply of chemical fuel.

Nuclear technology, in short, makes it possible to go farther, with more payload, for longer, and with fewer constraints.

According to the memorandum, the US goal is to put a medium-power reactor in orbit by 2028, with a variant designed for nuclear electric propulsion, and a first functional large reactor on the surface of the moon by 2030. To achieve this, both NASA and the Pentagon will develop energy technologies in parallel, using the current strategy of competition among contractors.

The reactors will have to be modular and scalable, and will have to include applications for both future life on the moon and space propulsion. For its part, the DOE will have to ensure that these projects have the fuel, infrastructure, and safety features necessary to achieve their objectives. In addition, the agency will evaluate whether the industry has the capacity to produce up to four reactors in five years.

The plan contemplates technologies that produce at least 20 kilowatts of electricity (kWe) for three years in orbit and at least five years on the lunar surface. In the meantime, they should have a design capable of raising power to 100 kWe. The first designs should arrive within a year.
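To give a sense of scale, the targets above can be converted into total electrical energy with a short calculation. This is a back-of-the-envelope sketch that assumes continuous operation at the stated power and a year of 365.25 days. Neither assumption comes from the memorandum, and the function name is illustrative.

```python
HOURS_PER_YEAR = 365.25 * 24  # assumption: average year length, continuous operation


def lifetime_energy_kwh(power_kwe: float, years: float) -> float:
    """Total electrical energy in kWh at constant output over the given period."""
    return power_kwe * years * HOURS_PER_YEAR


orbit_kwh = lifetime_energy_kwh(20, 3)       # 20 kWe for three years in orbit
surface_kwh = lifetime_energy_kwh(20, 5)     # 20 kWe for five years on the moon
upgraded_kwh = lifetime_energy_kwh(100, 5)   # 100 kWe design over five years

print(f"{orbit_kwh:,.0f} kWh")
print(f"{surface_kwh:,.0f} kWh")
print(f"{upgraded_kwh:,.0f} kWh")
```

Under these assumptions the minimum orbital target works out to a little over half a million kilowatt-hours, and the 100 kWe design would deliver five times the surface figure over the same period.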

Finally, the order tasks the OSTP with creating a road map for the initiative, noting obstacles and recommendations for addressing them.

“Nuclear power in space will give us the sustained electricity, heating, and propulsion essential to a permanent presence on the moon, Mars, and beyond,” OSTP posted. For his part, NASA administrator Jared Isaacman posted, “The time has come for America to get underway on nuclear power in space.” The message was followed by an emoji of a US flag.

The plan provides a common framework for each agency to work within. In the background, the race for space infrastructure is evidence of technological competition with China, which is also seeking advanced energy capabilities for the moon.

This story originally appeared in WIRED en Español and has been translated from Spanish.

Tech

AI Could Democratize One of Tech’s Most Valuable Resources

Nvidia is the undisputed king of AI chips. But thanks to the AI it helped build, the champ could soon face growing competition.

Modern AI runs on Nvidia designs, a dynamic that has propelled the company to a market cap of well over $4 trillion. Each new generation of Nvidia chip allows companies to train more powerful AI models using hundreds or thousands of processors networked together inside vast data centers. One reason for Nvidia’s success is that it provides software to help program each new generation of chip. That may soon not be such a differentiated skill.

A startup called Wafer is training AI models to do one of the most difficult and important jobs in AI—optimizing code so that it runs as efficiently as possible on a particular silicon chip.

Emilio Andere, cofounder and CEO of Wafer, says the company performs reinforcement learning on open source models to teach them to write kernel code, or software that interacts directly with hardware in an operating system. Andere says Wafer also adds “agentic harnesses” to existing coding models like Anthropic’s Claude and OpenAI’s GPT to soup up their ability to write code that runs directly on chips.

Many prominent tech companies now have their own chips. Apple and others have for years used custom silicon to improve the performance and the efficiency of software running on laptops, tablets, and smartphones. At the other end of the scale, companies like Google and Amazon mint their own silicon to improve the performance of their cloud-computing platforms. Meta recently said it would deploy 1 gigawatt of compute capacity with a new chip developed with Broadcom. Deploying custom silicon also involves writing a lot of code that must run smoothly and efficiently on the new processor.

Wafer is working with companies including AMD and Amazon to help optimize software to run efficiently on their hardware. The startup has so far raised $4 million in seed funding from Google’s Jeff Dean, Wojciech Zaremba of OpenAI, and others.

Andere believes that his company’s AI-led approach has the potential to challenge Nvidia’s dominance. A number of high-end chips now offer similar raw floating point performance—a key industry benchmark of a chip’s ability to perform simple calculations—to Nvidia’s best silicon.

“The best AMD hardware, the best [Amazon] Trainium hardware, the best [Google] TPUs, give you the same theoretical flops to Nvidia GPUs,” Andere told me recently. “We want to maximize intelligence per watt.”

Performance engineers with the skill needed to optimize code to run reliably and efficiently on these chips are expensive and in high demand, Andere says, while Nvidia’s software ecosystem makes it easier to write and maintain code for its chips. That makes it hard for even the biggest tech companies to go it alone.

When Anthropic partnered with Amazon to build its AI models on Trainium, for instance, it had to rewrite its model’s code from scratch to make it run as efficiently as possible on the hardware, Andere says.

Of course, Anthropic’s Claude is one of many AI models that are now superhuman at writing code. So Andere reckons it may not be long before AI starts eroding Nvidia’s software advantage.

“The moat lives in the programmability of the chip,” Andere says in reference to the libraries and software tools that make it easier to optimize code for Nvidia hardware. “I think it’s time to start rethinking whether that’s actually a strong moat.”

Besides making it easier to optimize code for different silicon, AI may soon make it easier to design chips themselves. Ricursive Intelligence, a startup founded by two ex-Google engineers, Azalia Mirhoseini and Anna Goldie, is developing new ways to design computer chips with artificial intelligence. If its technology takes off, a lot more companies could branch into chip design, creating custom silicon that runs their software more efficiently.
