Tech

A new way to test how well AI systems classify text

Is this movie review a rave or a pan? Is this news story about business or technology? Is this online chatbot conversation veering off into giving financial advice? Is this online medical information site giving out misinformation?

These kinds of automated conversations, whether they involve seeking a movie or restaurant review or getting information about your bank account or health records, are becoming increasingly prevalent. More than ever, such evaluations are being made by highly sophisticated algorithms, known as text classifiers, rather than by human beings. But how can we tell how accurate these classifications really are?

Now, a team at MIT’s Laboratory for Information and Decision Systems (LIDS) has come up with an innovative approach to not only measure how well these classifiers are doing their job, but then go one step further and show how to make them more accurate.

The new evaluation and remediation software was developed by Kalyan Veeramachaneni, a principal research scientist at LIDS, his students Lei Xu and Sarah Alnegheimish, and two others. The software package is being made freely available for download by anyone who wants to use it.

The team’s results were published on July 7 in the journal Expert Systems in a paper by Xu, Veeramachaneni, and Alnegheimish of LIDS, along with Laure Berti-Equille at IRD in Marseille, France, and Alfredo Cuesta-Infante at the Universidad Rey Juan Carlos, in Spain.

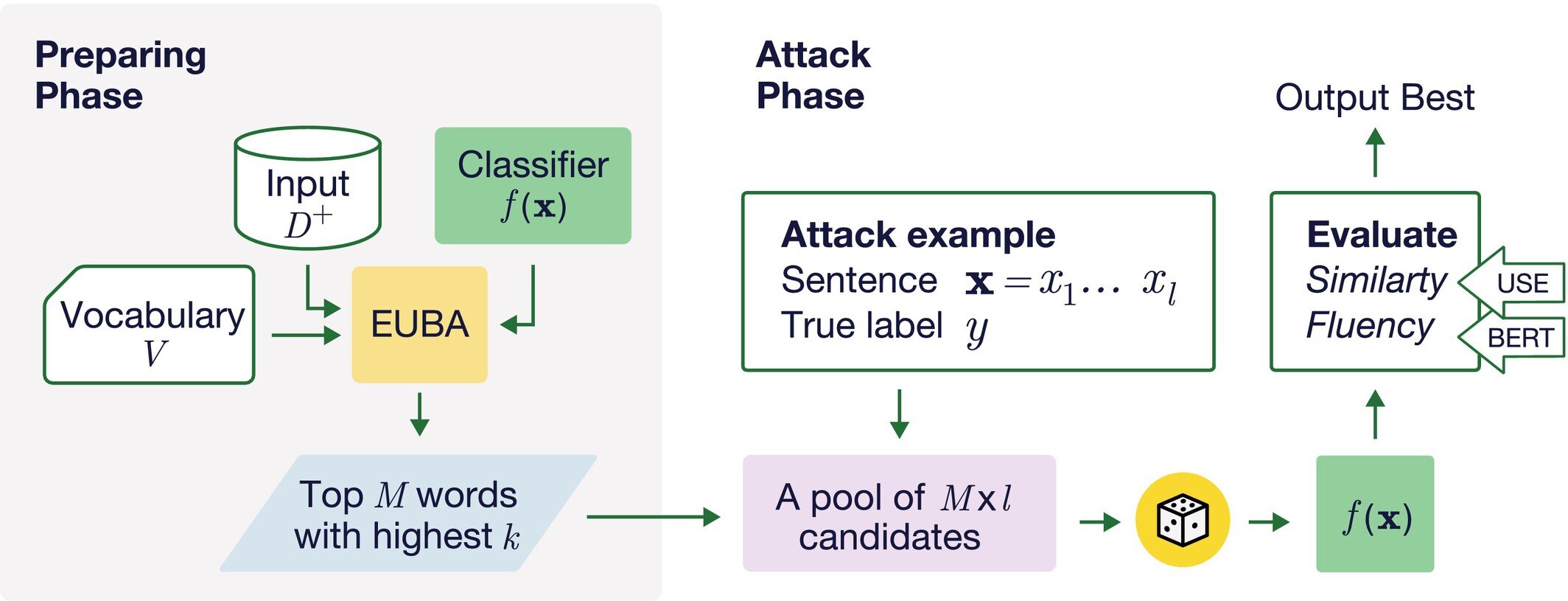

A standard method for testing these classification systems is to create what are known as synthetic examples—sentences that closely resemble ones that have already been classified. For example, researchers might take a sentence that has already been tagged by a classifier program as being a rave review, and see if changing a word or a few words while retaining the same meaning could fool the classifier into deeming it a pan. Or a sentence that was determined to be misinformation might get misclassified as accurate. This ability to fool the classifiers makes these adversarial examples.

People have tried various ways to find the vulnerabilities in these classifiers, Veeramachaneni says. But existing methods of finding these vulnerabilities have a hard time with this task and miss many examples that they should catch, he says.

Increasingly, companies are trying to use such evaluation tools in real time, monitoring the output of chatbots used for various purposes to try to make sure they are not putting out improper responses. For example, a bank might use a chatbot to respond to routine customer queries such as checking account balances or applying for a credit card, but it wants to ensure that its responses could never be interpreted as financial advice, which could expose the company to liability.

“Before showing the chatbot’s response to the end user, they want to use the text classifier to detect whether it’s giving financial advice or not,” Veeramachaneni says. But then it’s important to test that classifier to see how reliable its evaluations are.

“These chatbots, or summarization engines or whatnot, are being set up across the board,” he says, to deal with external customers and within an organization as well, for example providing information about HR issues. It’s important to put these text classifiers into the loop to detect things that they are not supposed to say, and filter those out before the output gets transmitted to the user.
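The filtering step described here can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `toy_advice_classifier`, its phrase list, and the fallback message are hypothetical stand-ins for a real trained classifier and a real product policy, not anything taken from the MIT team's software.

```python
from typing import Callable

# Hypothetical stand-in for a trained text classifier: flags a response
# as "financial_advice" if it contains any of a few trigger phrases.
ADVICE_PHRASES = ("you should invest", "i recommend buying", "best stock")


def toy_advice_classifier(text: str) -> str:
    lowered = text.lower()
    if any(phrase in lowered for phrase in ADVICE_PHRASES):
        return "financial_advice"
    return "ok"


# Hypothetical message shown instead of a blocked response.
FALLBACK = "Sorry, I can't help with that. Please contact an advisor."


def guarded_reply(response: str, classify: Callable[[str], str]) -> str:
    """Return the chatbot response only if the classifier lets it through."""
    if classify(response) == "financial_advice":
        return FALLBACK
    return response


print(guarded_reply("Your balance is $120.", toy_advice_classifier))
print(guarded_reply("You should invest in tech funds.", toy_advice_classifier))
```

The guard is only as good as the classifier passed into it, which is why testing that classifier matters.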

That’s where the use of adversarial examples comes in—those sentences that have already been classified but then produce a different response when they are slightly modified while retaining the same meaning. How can people confirm that the meaning is the same? By using another large language model (LLM) that interprets and compares meanings.

So, if the LLM says the two sentences mean the same thing, but the classifier labels them differently, “that is a sentence that is adversarial—it can fool the classifier,” Veeramachaneni says. And when the researchers examined these adversarial sentences, “we found that most of the time, this was just a one-word change,” although the people using LLMs to generate these alternate sentences often didn’t realize that.
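A rough Python sketch of that test follows. Everything in it is a simplifying assumption for illustration: `toy_classifier` is a hypothetical word-counting classifier, and the `SAME_MEANING` table stands in for the LLM that judges whether a substitution preserves meaning. It is not the team's SP-Attack code.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical toy sentiment classifier: a stand-in for a trained model.
POSITIVE = {"great", "wonderful", "excellent"}
NEGATIVE = {"awful", "terrible", "boring"}


def toy_classifier(sentence: str) -> str:
    words = sentence.lower().split()
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    return "rave" if score > 0 else "pan"


# Hypothetical stand-in for the LLM meaning check: words listed here are
# assumed to preserve the sentence's meaning when swapped in.
SAME_MEANING: Dict[str, List[str]] = {"great": ["superb", "excellent"]}


def find_single_word_adversarials(
    sentence: str,
    classify: Callable[[str], str],
    same_meaning: Dict[str, List[str]],
) -> List[Tuple[str, str, str]]:
    """Return (original word, replacement, new sentence) for each label flip."""
    words = sentence.split()
    original_label = classify(sentence)
    found = []
    for i, word in enumerate(words):
        for candidate in same_meaning.get(word.lower(), []):
            variant = " ".join(words[:i] + [candidate] + words[i + 1:])
            # Same meaning (by assumption) but a different label: adversarial.
            if classify(variant) != original_label:
                found.append((word, candidate, variant))
    return found


print(find_single_word_adversarials("a great film", toy_classifier, SAME_MEANING))
```

Here swapping "great" for "superb" flips the toy classifier from rave to pan, while "excellent" does not, so only the first substitution counts as adversarial.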

Further investigation, using LLMs to analyze many thousands of examples, showed that certain specific words had an outsized influence in changing the classifications, and therefore the testing of a classifier’s accuracy could focus on this small subset of words that seem to make the most difference. They found that one-tenth of 1% of all the 30,000 words in the system’s vocabulary could account for almost half of all these reversals of classification, in some specific applications.
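To put those figures in concrete terms, one-tenth of 1% of a 30,000-word vocabulary is just 30 words. The short Python sketch below works that out and shows one way such a concentration could be measured. The flip tally is hypothetical toy data, and `coverage_of_top_words` is an illustrative helper, not a function from the team's package.

```python
from collections import Counter

VOCAB_SIZE = 30_000          # vocabulary size quoted in the article
top_k = VOCAB_SIZE // 1000   # one-tenth of 1% of the vocabulary
print(top_k)                 # 30


def coverage_of_top_words(flip_counts: Counter, k: int) -> float:
    """Share of all label flips attributable to the k most frequent words."""
    total = sum(flip_counts.values())
    if total == 0:
        return 0.0
    top = sum(count for _, count in flip_counts.most_common(k))
    return top / total


# Hypothetical toy tally: which substituted word caused each observed flip.
toy_counts = Counter({"superb": 5, "dull": 3, "fine": 1, "okay": 1})
print(coverage_of_top_words(toy_counts, 1))  # 0.5
```

In the toy tally, a single word accounts for half of all flips, which mirrors on a small scale the concentration the researchers describe.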

Lei Xu Ph.D. ’23, a recent graduate from LIDS who performed much of the analysis as part of his thesis work, “used a lot of interesting estimation techniques to figure out what are the most powerful words that can change the overall classification, that can fool the classifier,” Veeramachaneni says.

The goal is to make it possible to do much more narrowly targeted searches, rather than combing through all possible word substitutions, thus making the computational task of generating adversarial examples much more manageable. “He’s using large language models, interestingly enough, as a way to understand the power of a single word.”

Then, also using LLMs, he searches for other words that are closely related to these powerful words, and so on, allowing for an overall ranking of words according to their influence on the outcomes. Once these adversarial sentences have been found, they can be used in turn to retrain the classifier to take them into account, increasing the robustness of the classifier against those mistakes.
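The retraining idea can be illustrated with a deliberately tiny Python example. The word-vote "model" below is a hypothetical toy, and the training sentences are made up; real classifiers and the team's SP-Defense component are far more sophisticated. The point is only to show an adversarial sentence being added back, with its correct label, so the retrained model no longer misclassifies it.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

Example = Tuple[str, str]  # (sentence, label)


def train(examples: List[Example]) -> Dict[str, Counter]:
    """Toy 'training': count how often each word appears under each label."""
    votes: Dict[str, Counter] = defaultdict(Counter)
    for sentence, label in examples:
        for word in sentence.lower().split():
            votes[word][label] += 1
    return votes


def predict(model: Dict[str, Counter], sentence: str) -> str:
    """Sum the word votes; anything short of a clear 'rave' majority is a 'pan'."""
    tally: Counter = Counter()
    for word in sentence.lower().split():
        tally.update(model.get(word, Counter()))
    return "rave" if tally["rave"] > tally["pan"] else "pan"


# Hypothetical training data.
training = [
    ("great film", "rave"),
    ("great plot", "rave"),
    ("boring film", "pan"),
    ("boring plot", "pan"),
]
# An adversarial sentence found by an attack, paired with its true label.
adversarial = [("superb film", "rave")]

before = predict(train(training), "superb film")
after = predict(train(training + adversarial), "superb film")
print(before, after)  # pan rave
```

Before augmentation the toy model has never seen "superb" and calls the sentence a pan. After the adversarial example is added to the training set, it gets the label right.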

Making classifiers more accurate may not sound like a big deal if it’s just a matter of classifying news articles into categories, or deciding whether reviews of anything from movies to restaurants are positive or negative. But increasingly, classifiers are being used in settings where the outcomes really do matter, whether preventing the inadvertent release of sensitive medical, financial, or security information, or helping to guide important research, such as into properties of chemical compounds or the folding of proteins for biomedical applications, or in identifying and blocking hate speech or known misinformation.

As a result of this research, the team introduced a new metric, which they call p, which provides a measure of how robust a given classifier is against single-word attacks. And because of the importance of such misclassifications, the research team has made its products available as open access for anyone to use. The package consists of two components: SP-Attack, which generates adversarial sentences to test classifiers in any particular application, and SP-Defense, which aims to improve the robustness of the classifier by generating and using adversarial sentences to retrain the model.

In some tests, where competing methods of testing classifier outputs allowed a 66% success rate by adversarial attacks, this team’s system cut that attack success rate almost in half, to 33.7%. In other applications, the improvement was as little as a 2% difference, but even that can be quite important, Veeramachaneni says, since these systems are being used for so many billions of interactions that even a small percentage can affect millions of transactions.
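The arithmetic behind both claims is easy to check in Python. The two success rates come from the article. The interaction volume is a hypothetical round number chosen only to show the scale, and the 2% figure is read here as two out of every hundred interactions.

```python
baseline_asr = 0.66    # attack success rate with competing methods (article)
defended_asr = 0.337   # attack success rate with the team's system (article)

# Relative reduction in attack success rate.
relative_reduction = (baseline_asr - defended_asr) / baseline_asr
print(f"{relative_reduction:.1%}")  # 48.9%

# Hypothetical volume: one billion classified interactions.
interactions = 1_000_000_000
affected = interactions * 2 // 100   # 2% of the interactions
print(affected)  # 20000000
```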

More information:

Lei Xu et al, Single Word Change Is All You Need: Using LLMs to Create Synthetic Training Examples for Text Classifiers, Expert Systems (2025). DOI: 10.1111/exsy.70079

This story is republished courtesy of MIT News (web.mit.edu/newsoffice/), a popular site that covers news about MIT research, innovation and teaching.

Citation: A new way to test how well AI systems classify text (2025, August 14), retrieved 14 August 2025 from https://techxplore.com/news/2025-08-ai-text.html


Tech

Anthropic Plots Major London Expansion

Anthropic is moving into a new London office as it seeks to expand its research and commercial footprint in Europe, setting up a scrap between the leading AI labs for talent emerging from British universities.

The company, which opened its first London office in 2023, is moving to the same neighborhood as Google DeepMind, OpenAI, Meta, Wayve, Isomorphic Labs, Synthesia, and various AI research institutions.

Anthropic’s new, 158,000-square-foot office footprint will have space enough for 800 people—four times its current head count—giving it room to potentially outscale OpenAI, which itself recently announced an expansion in London.

“Europe’s largest businesses and fastest-growing startups are choosing Claude, and we’re scaling to match,” says Pip White, head of EMEA North at Anthropic. “The UK combines ambitious enterprises and institutions that understand what’s at stake with AI safety with an exceptional pool of AI talent—we want to be where all of that comes together.”

UK government officials had reportedly attempted to coax Anthropic into expanding its presence in London after the company recently fell out with the US administration. Anthropic refused to allow its models to be used in mass surveillance and autonomous weapon systems, leading to an ongoing legal battle between the AI lab and the Pentagon.

As part of the expansion, Anthropic says it will deepen its work with the UK’s AI Security Institute, a government body that this week published a risk evaluation of its latest model, Claude Mythos Preview. According to Politico, the UK government is one of few across Europe to have been granted access to the model, which Anthropic has released to only select parties, citing concerns over the potential for its abuse by cybercriminals.

The increasing concentration of AI companies in the same London district is an important step in creating a pathway for research to translate into AI products, says Geraint Rees, vice-provost at University College London, whose campus is around the corner from Anthropic’s new office.

“This cluster didn’t emerge from a planning document. It grew because serious researchers and companies understand that proximity isn’t a nice-to-have,” he said last month, speaking at an event attended by WIRED. “That’s how the innovation system actually works. It’s not a clean, linear transfer from lab to market. It’s messier, richer, more human than that.”

Tech

LG’s High-End Soundbar System Makes My Living Room Feel Like a Home Theater

Setup was relatively quick and painless. You just have to unbox four speakers, a soundbar, and a subwoofer, attach their power cables, and plug in everything. Pairing happens through the LG ThinQ app, which allows you to set up the Sound Suite system and tune it to exactly where you’re sitting in the room using your cell phone’s microphone.

You can also set up each speaker to play music and group it with any other LG smart speakers you might have around your home, like the more affordable $250 M5 bookshelf speaker, to create a whole-home system.

Once all the components were synced, I plugged the soundbar into the C5 OLED via HDMI, and was able to easily control everything via the TV remote’s volume and mute buttons. More in-depth settings had to happen in the app, but if you’re anything like me, this won’t become a regular chore. You’ll set it how you like it once and move on. While the pairing functionality with the LG TV was nice, it’s not required: the eARC port lets the Sound Suite work perfectly with any modern TV.

The bar itself runs the show, with a black-and-white display on the far left that shows your mode and volume, among other settings. In the center of the bar and below each speaker is an LED light strip that also shows you the volume when you change it, which is a nice touch.

Getting Musical

Photograph: Parker Hall

The sound of the LG Sound Suite is full and cinematic, thanks in no small part to the extra dedicated speakers. Most competitors lack front left and right, simply opting to use the soundbar for these channels. As such, the width and breadth of the soundstage were bigger than most competitors I’ve tried, with only Samsung’s flagship HW-Q990F as a real contender. Even the Samsung lacked the lower-frequency audio quality that these LG speakers provide.

Tech

Cyber Essentials closes the MFA loophole but leaves some organisations adrift | Computer Weekly

On 27 April, v3.3 of the government-backed security certification scheme Cyber Essentials takes effect, and multi-factor authentication (MFA) becomes a pass-or-fail requirement for the first time.

If a cloud service your organisation uses offers MFA and you have not enabled it, you fail. No discretion, no partial credit, no route to remediate inside the assessment cycle.
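As a rough illustration, the rule as described here can be written as a one-line check in Python. This is a simplified reading of the paragraph above, not the official assessment logic, and the `CloudService` type and the example services are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CloudService:
    name: str
    offers_mfa: bool
    mfa_enabled: bool


def passes_mfa_requirement(services: List[CloudService]) -> bool:
    """Fail if any service that offers MFA does not have it enabled."""
    return all(s.mfa_enabled for s in services if s.offers_mfa)


# Hypothetical estate: one compliant service, one with no MFA on offer.
estate = [
    CloudService("email", offers_mfa=True, mfa_enabled=True),
    CloudService("legacy-tool", offers_mfa=False, mfa_enabled=False),
]
print(passes_mfa_requirement(estate))  # True

# One service that offers MFA but has it switched off is enough to fail.
estate.append(CloudService("file-sharing", offers_mfa=True, mfa_enabled=False))
print(passes_mfa_requirement(estate))  # False
```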

This is the right call. I want to say that clearly, because what follows is a problem with the implementation, not the policy. MFA is the single most effective control against credential-based attacks, and the scheme has needed to stop tolerating its absence for a long time. The National Cyber Security Centre (NCSC), part of GCHQ, which developed Cyber Essentials, and the certification company IASME have got this decision right.

But in the assessments we have conducted this year, I have seen two organisations that will hit a wall on 27 April, and I do not think they are unusual.

Train company could not deploy MFA

The first is a train operating company in the South East. Station operations rooms run on shared terminals where staff rotate through shifts in time-critical conditions. A transport union raised formal concerns that MFA would introduce delays at the keyboard that could affect train operations and, in their view, the safety of train movements.

The company listened and chose not to enable MFA in those environments. Under v3.2 they passed, with the relevant questions marked as non-compliant but not fatal. Under Cyber Essentials v3.3 they will fail.

Charity run by volunteers faces MFA hurdle

The second is a nationally known charity with hundreds of high street shops. The shops are staffed largely by volunteers, many of whom work a few hours a week, and staff turnover is high.

The cost and management overhead of enrolling every volunteer onto MFA, using personal phones they may not have and authenticator apps they would not keep, was considered prohibitive. So MFA was never switched on. Same story: they passed under v3.2. Under v3.3 they fail.

Neither of these organisations is ignoring security. Both made considered decisions based on how their people actually work. The problem is not that they do not want to comply. It is that the standard toolkit of MFA methods, including SMS codes, authenticator apps on personal phones, and push notifications, does not fit a six-person shared terminal that has to be available in seconds, or a volunteer workforce that changes every week.

FIDO2 could offer solutions

The frustrating part is that there is a solution, and it is already proven in healthcare, manufacturing and retail. FIDO2 authentication delivered through NFC badge-taps lets a staff member authenticate in under two seconds: tap a badge, enter a short PIN, session opens.

It satisfies the MFA requirement by combining possession of the badge with knowledge of the PIN. It is faster than typing a password. Crucially, it is compliant, because each badge is enrolled as that individual’s unique FIDO2 credential, so the Cyber Essentials requirement for unique user accounts is met. Shared keys or shared PINs would not work. Individual badges do.
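The logic of that argument (one badge per person, plus a PIN only that person knows) can be modelled in a short Python sketch. To be clear about the assumptions: this is a toy model of the two-factor idea, not the FIDO2 protocol itself, in which the authenticator verifies the PIN locally and proves possession cryptographically. The `BadgeRegistry` class, badge IDs and PINs are all hypothetical.

```python
import hashlib
import hmac
import os
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Enrollment:
    user: str
    pin_hash: bytes
    salt: bytes


def hash_pin(pin: str, salt: bytes) -> bytes:
    return hashlib.pbkdf2_hmac("sha256", pin.encode(), salt, 100_000)


class BadgeRegistry:
    """Toy registry: each badge belongs to exactly one user."""

    def __init__(self) -> None:
        self._by_badge: Dict[str, Enrollment] = {}

    def enroll(self, badge_id: str, user: str, pin: str, salt: bytes) -> None:
        # A badge cannot be enrolled twice, so it can never be shared.
        if badge_id in self._by_badge:
            raise ValueError("badge is already enrolled")
        self._by_badge[badge_id] = Enrollment(user, hash_pin(pin, salt), salt)

    def authenticate(self, badge_id: str, pin: str) -> Optional[str]:
        """Return the user only if both the badge and the PIN check out."""
        enrollment = self._by_badge.get(badge_id)
        if enrollment is None:
            return None  # possession factor missing
        candidate = hash_pin(pin, enrollment.salt)
        if hmac.compare_digest(enrollment.pin_hash, candidate):
            return enrollment.user
        return None  # knowledge factor wrong


registry = BadgeRegistry()
registry.enroll("badge-001", "alice", "4821", os.urandom(16))
print(registry.authenticate("badge-001", "4821"))  # alice
print(registry.authenticate("badge-001", "0000"))  # None
```

Because every successful login maps back to one named person, the audit trail survives even on a terminal that six people share.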

Need for better guidance

v3.3 explicitly recognises FIDO2 authenticators and passkeys as valid MFA methods. The compliance path is clear. What is missing is anyone telling the organisations most affected that this path exists.

That is the gap that must close. The NCSC and IASME have made the right policy decision; the scheme would be weaker without it.

But implementation guidance for shared-terminal, shift-based and high-turnover environments is thin, and these organisations are running out of time to find their way through it. Many of them hold Cyber Essentials because it is required for government contracts or in their supply chains; losing certification has a direct commercial cost.

The answer is not to soften the requirement. The answer is to make sure no one fails for lack of information about how to meet it.

Jonathan Krause is Founder and Managing Director of Forensic Control
