Tech

Agentic AI to make data uplink the next mobile bottleneck | Computer Weekly

One of the consequences of artificial intelligence (AI) systems becoming capable of reasoning, planning and executing tasks autonomously is that mobile traffic patterns are changing noticeably, with uplink being of growing importance.

A study from InterDigital has shown how the emergence of agentic AI will redefine the demands placed on devices, networks and cloud infrastructure. Among the findings of the report, The distributed network shift enabling AI on device, conducted by ABI Research for InterDigital, was that the rapid adoption of agentic systems – which is expected to increase across enterprise and consumer markets over the next three years – is increasing uplink traffic from AI devices and changing the way modern networks operate. The result will be a reimagining of network design.

The study noted that modern mobile networks have historically been optimised for downlink throughput and video delivery. However, unlike traditional mobile applications that primarily consume data via downlink, agentic AI systems continuously generate and exchange contextual information to enable real-time reasoning and decision-making. Therefore, as AI devices generate increasing volumes of upstream data, networks risk becoming overloaded, leading to higher latency and costs.

The study identified four main device categories driving uplink traffic: smart glasses, wearables, smartphones, and IoT sensors and devices. Smart glasses continuously capture video, images and environmental context, sending data upstream for real-time AI inference and assistance. ABI Research predicts 70 million smart glasses shipments by 2030, with cellular-enabled devices representing more than 12% of shipments.

Wearables – including next-generation tech that collects voice, biometric and contextual signals – support persistent agentic AI interactions. Smartphones increasingly transmit multimodal inputs such as voice, photos, video and sensor data to cloud and edge AI systems. IoT sensors and devices, meanwhile, continuously stream operational or environmental data to AI models for analysis, automation and decision-making.

The study also found uplink pressures are already visible in video-heavy applications such as livestreaming and real-time video collaboration, where many users uploading simultaneously can create localised mobile cell congestion. It added that unlike these temporary spikes, agentic AI systems will generate continuous upstream data exchanges from connected devices, potentially creating sustained pressure on uplink capacity.

The report suggested that to meet the AI demands of modern devices, the industry must transition toward distributed intelligence architectures, where AI workloads are orchestrated across on-device processors and cloud platforms according to their complexity. It said that embedding intelligence deeper into network infrastructure will ensure AI-enabled applications can operate efficiently without compromising on performance.

The study observed that as the entire mobile ecosystem continues to innovate and integrate the latest AI technology at pace, ensuring a coherent and complementary direction of travel is essential to enabling future AI applications and their associated experiences.

This is seen as particularly true of 6G networks, which will be designed to make smartphones better at mobile broadband (MBB) access by improving network speeds, reducing latency and extending the battery life of devices.

However, InterDigital cautioned this is just the foundation on which additional services will be built. Integrating AI in the network will allow smartphones to offload demanding applications to the edge of the network – as well as into centralised locations – to ensure optimal resource utilisation, enabling a distributed intelligence fabric.

“Agentic AI introduces a new set of requirements for both networks and devices,” said Larbi Belkhit and Paul Schell, senior analysts at ABI Research and co-authors of the report. “Supporting autonomous AI systems will demand far more distributed computing architectures and significantly more intelligent networks. Operators will need to manage increasingly symmetrical traffic patterns while enabling real-time AI workloads across device, edge and cloud.”

“Agentic AI marks the next phase in the evolution of intelligent connectivity,” said InterDigital chief technology officer Rajesh Pankaj. “Intelligence must be distributed across devices, networks and the cloud, and delivering these AI-enhanced services efficiently will require a new computing architecture that balances performance, latency and energy efficiency.”

Top Western Digital Promo Code 2026

Founded more than 50 years ago, data storage company Western Digital is one of the world’s largest computer hard disk drive manufacturers, and it also produces solid-state drives (SSDs) and flash memory devices. Whether you want to back up via cloud storage, take your presentation on a USB flash drive to your next important meeting, or upgrade the storage on your home security surveillance system, Western Digital makes the essentials for home office and business digital storage, and we have promo codes to help you save.

Recycle and Save 15% Off With Western Digital Promo

One of the biggest issues of our modern life is how to responsibly recycle e-waste. That’s why Western Digital makes it easier to recycle your old, broken, or defunct electronics. With Western Digital’s Easy Recycle program, you can safely dispose of NAS systems and internal or external HDDs and SSDs. Plus, they recycle devices from any manufacturer—not just Western Digital products. And when you go green and recycle through their program, you’ll get a 15% off Western Digital promo code that counts towards your next purchase of $50 or more when you shop online at Western Digital.

Get 10% Off With a Western Digital Coupon Code

Right now, you can save 10% on your first order when you sign up to receive emails from Western Digital. All you need to do is head to Western Digital’s promo page, where you’ll input your email to sign up for special offers, promotions, and that Western Digital promo code for 10% off. The code will be sent to your inbox where you can use it to save on tech essentials.

Does Western Digital Have Free Shipping?

Western Digital has even more ways to save, with free standard shipping on eligible orders of $50 or more for non-members. Western Digital members receive free standard shipping on all eligible orders in the lower 48.

Additional Western Digital Deals

Western Digital has education discounts, where students and teachers can get up to 15% off purchases after verifying their status with Youth Discount. Once their identity is verified, they’ll get a voucher code sent to their inbox to use at checkout. Western Digital also has a 15% discount for seniors 55 years or older. Seniors just need to verify their status with Senior Discount. Once age is verified, folks will get a Western Digital promo code sent to their email to save.

In a commitment to sustainability, Western Digital has a program with Easy Recycle, where you can safely dispose of NAS systems and internal or external HDDs and SSDs. (They’ll also recycle devices from any manufacturer, not just Western Digital). As a token of appreciation for participating in their initiative for a greener future, participants can get 15% off their next purchase of $50 or more.

Choosing the Right Western Digital Product

It’s hard to know which digital storage system is right for you. In fact, we even made a handy guide on How to Back Up Your Digital Life, and have a whole roundup of some of our favorite WIRED-tested external hard drives. In a similar vein, Western Digital created a FAQ webpage on how to choose the right storage drive for your needs, such as budget and data volume. A Western Digital hard drive is a budget-friendly option that delivers the capacity needed to store years of photos, videos, backups, workloads, and archives, while a Western Digital SSD offers fast, reliable responsiveness for operating systems and active projects.

The Best LED Skincare Deals I’ve Seen This Mother’s Day Are at Megelin

The red-light therapy market shows no signs of slowing down. According to Fortune Business Insights, the industry is projected to grow from $1.21 billion in 2026 to $1.76 billion by 2034. Riding that wave is Hong Kong-based Megelin, which is currently running its largest Mother’s Day sale yet, offering major discounts on most of its LED devices and select electrical muscle stimulation (EMS) tools.

I’ve been testing the Duo Lux Laser & LED Light Therapy Mask for the past two weeks as part of a six-week trial. While I’m still forming my final verdict, I already have some early thoughts (more on that below). In the meantime, check out the standout deals because some of these discounts might be too good to pass up while they’re live.

This Laser & LED Light Therapy Mask Is $270 Off

The Megelin Duo Lux Laser & LED Light Therapy Mask combines 660-nanometer (nm) and 1,064-nm lasers with a 660-nm LED light for a more intensive treatment. The brand claims it can help smooth wrinkles, soothe inflammation, reduce pigmentation, and minimize redness. After two weeks of testing, I haven’t noticed any visible changes in my skin just yet, though to its credit, I also haven’t experienced any irritation or adverse reactions.

My biggest issue was the initial unboxing experience: The mask had a strong chemical odor that reminded me of formaldehyde. For a device that sits against your face and doesn’t have a mouth opening, that’s not exactly reassuring. Wiping it down and letting it air out significantly reduced the smell, but it definitely made for a less-than-ideal first impression.

That said, the mask itself is extremely comfortable. The soft, flexible silicone contours well to the face, and the dual-strap design keeps it secure without feeling restrictive. Treatments are quick and easy to customize thanks to four different modes, all controlled through an attached remote. And because it’s cordless, you’re free to move around while using it.

At full price, it’s a steep investment compared to its competitors. But with the current $270 discount, it becomes a much more compelling option, especially given the added laser therapy component, which isn’t as common at this price point. I’ll continue testing through the full six-week period before sharing my final verdict, but if you’re tempted to take advantage of the sale now, Megelin does offer a 60-day money-back guarantee and a one-year warranty.

‘Orbs,’ ‘Saucers,’ and ‘Flashes’ on the Moon: Pentagon Drops New UFO Files

Trump first teased the release in February in a Truth Social post. The Pentagon coordinated the release in partnership with the White House, Director of National Intelligence Tulsi Gabbard, the Energy Department, NASA, and the FBI. Many of the files in this new drop contain documents that are already publicly available. However, some versions of these known documents in the new files contain more pages, or fewer redactions, than previously released versions.

More than 60 percent of Americans believe that the government is concealing information about UAP, according to YouGov, while 40 percent think UAP are likely alien in origin, according to Gallup. Congress has held hearings into whether there’s been a decades-long program to recover “non-human” technologies, yet evidence remains elusive.

Courtesy of the US Department of Defense

“If it’s just more blobby photos or redacted documents that don’t have any details in them, it’s more of the same,” Adam Frank, an astrophysicist at the University of Rochester who studies the search for alien life, says of the new files. “What we need are actual scientific results from the investigations that should have been done if the most extraordinary claims being made are true.”

The document drop follows a week of high-profile discussions of aliens, including Stephen Colbert’s interview with former President Barack Obama, released on Wednesday. Obama cast doubt on government cover-ups about aliens by joking that “some guy guarding the installation would have taken a selfie with the alien and sent it to his girlfriend.”

Members of the Artemis II crew also second-guessed the idea of a vast government-wide conspiracy to hide the discovery of extraterrestrial life in a discussion with The Daily this week.

“Do you realize that if we found alien life out there, and we came back and reported on it, NASA would never have a budget issue for the rest of eternity?” said Reid Wiseman, the commander of Artemis II. “So trust me.”

Victor Glover, the astronaut who piloted the mission, added: “Why would we hide that from you?”

-

Politics6 days ago

Politics6 days agoIran weighs US reply delivered via Pakistan as Trump signals opposition to deal terms

-

Tech1 week ago

Tech1 week agoAlmost half of UK businesses hit by cyber attacks | Computer Weekly

-

Tech1 week ago

Tech1 week agoThis Indigenous Language Survived Russian Occupation. Can It Survive YouTube?

-

Fashion1 week ago

Fashion1 week agoCanada’s Lululemon appoints Esi Eggleston Bracey to board of directors

-

Fashion1 week ago

Fashion1 week agoUS’ J.Jill, Inc. appoints Kimberly Wallengren as CMO

-

Entertainment1 week ago

Entertainment1 week agoDavid Allan Coe, country singer who wrote “Take This Job and Shove It,” dies at age 86

-

Business1 week ago

Business1 week agoGovernment hikes jet fuel prices by 5% for international airlines – The Times of India

-

Fashion1 week ago

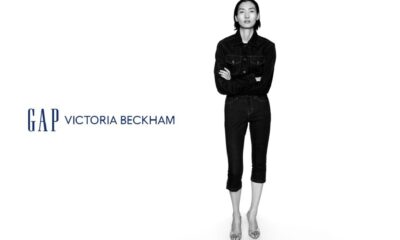

Fashion1 week agoUS’ Gap partners with Victoria Beckham on timeless wardrobe essentials