Tech

AI model could boost robot intelligence via object recognition

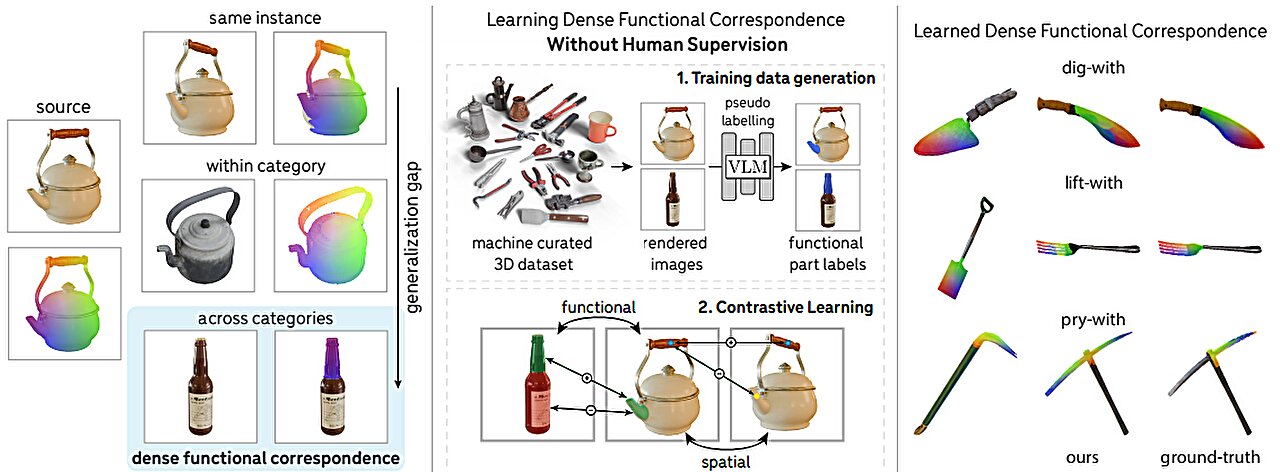

Stanford researchers have developed an innovative computer vision model that recognizes the real-world functions of objects, potentially allowing autonomous robots to select and use tools more effectively.

In the field of AI known as computer vision, researchers have successfully trained models that can identify objects in two-dimensional images. It is a skill critical to a future of robots able to navigate the world autonomously. But object recognition is only a first step. AI also must understand the function of the parts of an object—to know a spout from a handle, or the blade of a bread knife from that of a butter knife.

Computer vision experts call such utility overlaps “functional correspondence.” It is one of the most difficult challenges in computer vision. But now, in a paper that will be presented at the International Conference on Computer Vision (ICCV 2025), Stanford scholars will debut a new AI model that can not only recognize various parts of an object and discern their real-world purposes but also map those at pixel-by-pixel granularity between objects.

A future robot might be able to distinguish, say, a meat cleaver from a bread knife or a trowel from a shovel and select the right tool for the job. Potentially, the researchers suggest, a robot might one day transfer the skills of using a trowel to a shovel—or of a bottle to a kettle—to complete a job with different tools.

“Our model can look at images of a glass bottle and a tea kettle and recognize the spout on each, but also it comprehends that the spout is used to pour,” explains co-first author Stefan Stojanov, a Stanford postdoctoral researcher advised by senior authors Jiajun Wu and Daniel Yamins. “We want to build a vision system that will support that kind of generalization—to analogize, to transfer a skill from one object to another to achieve the same function.”

Establishing correspondence is the art of figuring out which pixels in two images refer to the same point in the world, even if the photographs are from different angles or of different objects. This is hard enough when both images show the same object, but as the bottle versus tea kettle example shows, the real world is rarely so cut-and-dried. Autonomous robots will need to generalize across object categories and to decide which object to use for a given task.

One day, the researchers hope, a robot in a kitchen will be able to select a tea kettle to make a cup of tea, know to pick it up by the handle, and to use the kettle to pour hot water from its spout.

Autonomy rules

True functional correspondence would make robots far more adaptable than they are currently. A household robot would not need training on every tool at its disposal but could reason by analogy to understand that while a bread knife and a butter knife may both cut, they each serve a specific purpose.

In their work, the researchers say, they have achieved “dense” functional correspondence, whereas earlier efforts achieved only sparse correspondence, defining just a few key points on each object. The challenge so far has been a paucity of data, which typically had to be amassed through human annotation.

“Unlike traditional supervised learning where you have input images and corresponding labels written by humans, it’s not feasible to humanly annotate thousands of pixels individually aligning across two different objects,” says co-first author Linan “Frank” Zhao, who recently earned his master’s in computer science at Stanford. “So, we asked AI to help.”

The team was able to achieve a solution with what is known as weak supervision—using vision-language models to generate labels to identify functional parts and using human experts only to quality-control the data pipeline. It is a far more efficient and cost-effective approach to training.
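The division of labor described above, in which machines produce the labels and humans only spot-check them, can be illustrated with a minimal Python sketch. It is not the researchers' pipeline: the function names `audit_sample` and `estimated_error_rate`, the image identifiers, and the part labels are all hypothetical toy values invented for illustration, and the machine-generated labels are simply hard-coded where a vision-language model would supply them.

```python
import random

def audit_sample(pseudo_labels, sample_size, seed=0):
    """Pick a reproducible random subset of machine-labelled items for human review."""
    rng = random.Random(seed)
    keys = sorted(pseudo_labels)
    return rng.sample(keys, min(sample_size, len(keys)))

def estimated_error_rate(pseudo_labels, human_verdicts):
    """Fraction of audited items where the human label differs from the machine label."""
    if not human_verdicts:
        return 0.0
    wrong = sum(1 for key, label in human_verdicts.items()
                if pseudo_labels[key] != label)
    return wrong / len(human_verdicts)

# Hypothetical machine-generated part labels (stand-ins for vision-language model output).
pseudo_labels = {
    "kettle_01": "spout",
    "bottle_07": "mouth",
    "knife_03": "handle",
    "mug_12": "handle",
}

# Humans review only a small sample instead of annotating everything.
to_review = audit_sample(pseudo_labels, sample_size=2)

# Hypothetical reviewer verdicts: one label confirmed, one corrected.
human_verdicts = {"kettle_01": "spout", "knife_03": "blade"}
rate = estimated_error_rate(pseudo_labels, human_verdicts)
```

In this toy run one of the two audited labels is corrected, so the estimated error rate is 0.5. A team could use a figure like that to decide whether the automated labels are good enough to train on.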

“Something that would have been very hard to learn through supervised learning a few years ago now can be done with much less human effort,” Zhao adds.

In the kettle and bottle example, for instance, each pixel in the spout of the kettle is aligned with a pixel in the mouth of the bottle, providing dense functional mapping between the two objects. The new vision system can spot function in structure across disparate objects—a valuable fusion of functional definition and spatial consistency.
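As a rough illustration of what a dense mapping between two functional parts looks like, the Python sketch below pairs every pixel in one part with its most similar pixel in the other. It assumes each pixel already has a feature vector and that both parts have been labeled with the same function. The article does not describe how the Stanford model computes its matches, so the nearest-neighbor rule, the function `dense_part_correspondence`, and the toy coordinates and vectors are all assumptions made for illustration.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def dense_part_correspondence(src_feats, src_part, tgt_feats, tgt_part):
    """Map each source pixel in a functional part to its most similar target pixel.

    src_feats / tgt_feats: dict of (row, col) -> feature vector (hypothetical embeddings).
    src_part / tgt_part: sets of (row, col) pixels sharing one function, e.g. pouring.
    """
    candidates = sorted(tgt_part)  # fixed order keeps tie-breaking deterministic
    return {
        p: max(candidates, key=lambda q: cosine(src_feats[p], tgt_feats[q]))
        for p in sorted(src_part)
    }

# Toy per-pixel features for a kettle image and a bottle image (invented values).
kettle_feats = {(0, 0): [1.0, 0.0], (0, 1): [0.0, 1.0], (1, 0): [0.7, 0.7]}
bottle_feats = {(5, 5): [0.1, 0.9], (5, 6): [0.9, 0.1], (6, 5): [0.6, 0.8]}

# Pixels assumed to belong to the pouring part of each object.
kettle_spout = {(0, 0), (0, 1)}
bottle_mouth = {(5, 5), (5, 6)}

matches = dense_part_correspondence(kettle_feats, kettle_spout,
                                    bottle_feats, bottle_mouth)
print(matches)  # {(0, 0): (5, 6), (0, 1): (5, 5)}
```

Every spout pixel receives a partner in the bottle's mouth, which is what separates a dense mapping from a sparse one that matches only a few key points.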

Seeing the future

For now, the system has been tested only on images and not in real-world experiments with robots, but the team believes the model is a promising advance for robotics and computer vision. Dense functional correspondence is part of a larger trend in AI in which models are shifting from mere pattern recognition toward reasoning about objects. Where earlier models saw only patterns of pixels, newer systems can infer intent.

“This is a lesson in form following function,” says Yunzhi Zhang, a Stanford doctoral student in computer science. “Object parts that fulfill a specific function tend to remain consistent across objects, even if other parts vary greatly.”

Looking ahead, the researchers want to integrate their model into embodied agents and build richer datasets.

“If we can come up with a way to get more precise functional correspondences, then this should prove to be an important step forward,” Stojanov says. “Ultimately, teaching machines to see the world through the lens of function could change the trajectory of computer vision—making it less about patterns and more about utility.”

More information:

Weakly-Supervised Learning of Dense Functional Correspondences. Project page: dense-functional-correspondence.github.io/. arXiv DOI: 10.48550/arxiv.2509.03893

Citation:

AI model could boost robot intelligence via object recognition (2025, October 20), retrieved 20 October 2025 from https://techxplore.com/news/2025-10-ai-boost-robot-intelligence-recognition.html



This Jammer Wants to Block Always-Listening AI Wearables. It Probably Won’t Work

Deveillance also claims the Spectre can find nearby microphones by detecting radio frequencies (RF), but critics say finding a microphone via RF emissions is not effective unless the sensor is immediately beside it.

“If you could detect and recognize components via RF the way Spectre claims to, it would literally be transformative to technology,” Jordan wrote in a text to WIRED after he built a device to test detecting RF signatures in microphones. “You’d be able to do radio astronomy in Manhattan.”

Deveillance is also looking at ways to integrate nonlinear junction detection (NLJD), a technique that uses very high-frequency radio signals and is employed by security professionals to find hidden mics and bugs. Nonlinear junction detectors are expensive and used primarily in professional contexts like military operations.

Even if a device could detect a microphone’s exact location, objects around a room can change how the frequencies spread and interact. The emitted frequencies could also be a problem. There haven’t been adequate studies to show what effects ultrasonic frequencies have on the human ear, but some people and many pets can hear them and find them obnoxious or even painful. Baradari acknowledges that her team needs to do more testing to see how pets are affected.

“They simply cannot do this,” engineer and YouTuber Dave Jones (who runs the channel EEVblog) wrote in an email to WIRED. “They are using the classic trick of using wording to imply that it will detect every type of microphone, when all they are probably doing is scanning for Bluetooth audio devices. It’s totally lame.” Baradari reiterates that the Spectre uses a combination of RF and Bluetooth low energy to detect microphones.

WIRED asked Baradari to share any evidence of the Spectre’s effectiveness at identifying and blocking microphones in a person’s vicinity. Baradari shared a few short video clips of people holding their phones to their ears and listening to audio clips (which were presumably jammed by the Spectre), but these videos do little to prove that the device works.

Future Imperfect

Baradari has taken the critiques in stride, acknowledging that the tech is still in development. “I actually appreciate those comments, because they’re making me think and see more things as well,” Baradari says. “I do believe that with the ideas that we’re having and integrating into one device, these concerns can be addressed.”

People were quick to poke fun at the Spectre I online, calling the technology the cone of silence from Dune. Now, the Deveillance website reads, “Our goal is to make the cone of silence become reality.”

John Scott-Railton, a cybersecurity researcher at Citizen Lab, who is critical of the Spectre I, lauded the device’s virality as an indication of the real hunger for these kinds of gadgets to win back our privacy.

“The silver lining of this blowing up is that it is a Ring-like moment that highlights how quickly and intensely consumer attitudes have shifted around pervasive recording devices,” says Scott-Railton. “We need to be building products that do all the cool things that people want but that don’t have the massive privacy- and consent-violation undertow. You need device-level controls, and you need regulations of the companies that are doing this.”

Cooper Quintin, a senior staff technologist at the Electronic Frontier Foundation, echoed those sentiments, even if critics believe Deveillance’s efforts to be flawed.

“If this technology works, it could be a boon for many,” Quintin wrote in an email to WIRED. “It is nice to see a company creating something to protect privacy instead of working on new and creative ways to extract data from us.”


I’ve Tried Every Pixel Phone Ever Made—Here Are the Best to Buy Right Now

Portrait Light: You can change up the lighting in your portrait selfies after you take them by opening them up in Google Photos, tapping the Edit button, and heading to Actions > Portrait Light. This adds an artificial light you can place anywhere in the photo to brighten up your face and erase that 5 o’clock shadow. Use the slider at the bottom to tweak the strength of the light. It also works on older Portrait mode photos you may have captured. It works only on faces.

Health and Accessibility Features

Cough & Snore Detection (Tensor G2 and newer): On the Pixel 7 and newer, you can have your Pixel detect whether you cough or snore while sleeping, provided you place your Pixel near your bed before you nod off. This will work only if you use Google’s Bedtime mode function, which you can turn on by heading to Settings > Digital Wellbeing & Parental Controls > Bedtime Mode.

Guided Frame (Tensor G2 and newer): For blind or low-vision people, the camera app can now help take a selfie with audio cues (it works with the front and rear cameras). You’ll need to enable TalkBack for this to work (Settings > Accessibility > TalkBack). Then open the camera app. It will automatically help you frame the shot.

Simple View: This mode makes the font size bigger, along with other elements on the screen, like widgets and quick-settings tiles. It also increases touch sensitivity, all of which hopefully makes it easier to see and use the screen. You can enable it by heading to Settings > Accessibility > Simple View.

Safety and Security Features

Theft Protection: This is a broader Android 15 feature, but essentially, Google’s algorithms can figure out if someone snatches your Pixel out of your hands. If they’re trying to get away, the device automatically locks. Additionally, with another device, you can use Remote Lock to lock your stolen Pixel with your phone number and a security answer. To toggle these features on, go to Settings > Security & privacy > Device unlock > Theft protection.

Identity Check: If your Pixel detects you’re in a new location, Identity Check will require your fingerprint or face authentication before you can make any changes to sensitive settings, offering extra peace of mind in case you lose your phone or if it’s stolen. You can enable this in Settings > Security & privacy > Device unlock > Theft protection > Identity Check.


Private Space: Another Android 15 addition, Private Space finally gives Pixel phones a way to hide and lock select apps. You can use a separate Google account, set a lock, and install any app to hide away. To set it all up, head to Settings > Security & privacy > Private space.

Satellite eSOS (Pixel 9 and Pixel 10 series, excluding Pixel 9a): Like Apple’s SOS feature on iPhones, you can now reach emergency contacts or emergency services even when you don’t have cell service or Wi-Fi connectivity. It’s not just available in the continental US, but also in Hawaii, Alaska, Canada, and even Europe.
