Tech

MIT-IBM Watson AI Lab seed to signal: Amplifying early-career faculty impact

The early years of faculty members’ careers are a formative and exciting time in which to establish a firm footing that helps determine the trajectory of researchers’ studies. This includes building a research team, which demands innovative ideas and direction, creative collaborators, and reliable resources.

For a group of MIT faculty working with and on artificial intelligence, early engagement with the MIT-IBM Watson AI Lab through projects has played an important role in promoting ambitious lines of inquiry and shaping prolific research groups.

Building momentum

“The MIT-IBM Watson AI Lab has been hugely important for my success, especially when I was starting out,” says Jacob Andreas — associate professor in the Department of Electrical Engineering and Computer Science (EECS), a member of the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL), and a researcher with the MIT-IBM Watson AI Lab — who studies natural language processing (NLP). Shortly after joining MIT, Andreas jump-started his first major project through the MIT-IBM Watson AI Lab, working on language representation and structured data augmentation methods for low-resource languages. “It really was the thing that let me launch my lab and start recruiting students.”

Andreas notes that this occurred during a “pivotal moment” when the field of NLP was undergoing significant shifts to understand language models — a task that required significantly more compute, which was available through the MIT-IBM Watson AI Lab. “I feel like the kind of the work that we did under that [first] project, and in collaboration with all of our people on the IBM side, was pretty helpful in figuring out just how to navigate that transition.” Further, the Andreas group was able to pursue multi-year projects on pre-training, reinforcement learning, and calibration for trustworthy responses, thanks to the computing resources and expertise within the MIT-IBM community.

For several other faculty members, timely participation with the MIT-IBM Watson AI Lab proved to be highly advantageous as well. “Having both intellectual support and also being able to leverage some of the computational resources that are within MIT-IBM, that’s been completely transformative and incredibly important for my research program,” says Yoon Kim — associate professor in EECS, CSAIL, and a researcher with the MIT-IBM Watson AI Lab — who has also seen his research field alter trajectory. Before joining MIT, Kim met his future collaborators during an MIT-IBM postdoctoral position, where he pursued neuro-symbolic model development; now, Kim’s team develops methods to improve large language model (LLM) capabilities and efficiency.

One factor he points to that led to his group’s success is a seamless research process with intellectual partners. This has allowed his MIT-IBM team to apply for a project, experiment at scale, identify bottlenecks, validate techniques, and adapt as necessary to develop cutting-edge methods for potential inclusion in real-world applications. “This is an impetus for new ideas, and that’s, I think, what’s unique about this relationship,” says Kim.

Merging expertise

The nature of the MIT-IBM Watson AI Lab is that it not only brings together researchers in the AI realm to accelerate research, but also blends work across disciplines. Lab researcher and MIT associate professor in EECS and CSAIL Justin Solomon describes his research group as growing up with the lab, and the collaboration as being “crucial … from its beginning until now.” Solomon’s research team focuses on theoretically oriented, geometric problems as they pertain to computer graphics, vision, and machine learning.

Solomon credits the MIT-IBM collaboration with expanding his skill set as well as applications of his group’s work — a sentiment that’s also shared by lab researchers Chuchu Fan, an associate professor of aeronautics and astronautics and a member of the Laboratory for Information and Decision Systems, and Faez Ahmed, associate professor of mechanical engineering. “They [IBM] are able to translate some of these really messy problems from engineering into the sort of mathematical assets that our team can work on, and close the loop,” says Solomon. This, for Solomon, includes fusing distinct AI models that were trained on different datasets for separate tasks. “I think these are all really exciting spaces,” he says.

“I think these early-career projects [with the MIT-IBM Watson AI Lab] largely shaped my own research agenda,” says Fan, whose research intersects robotics, control theory, and safety-critical systems. Like Kim, Solomon, and Andreas, Fan and Ahmed began projects through the collaboration the first year they were able to at MIT. Constraints and optimization govern the problems that Fan and Ahmed address, which therefore require deep domain knowledge outside of AI.

Working with the MIT-IBM Watson AI Lab enabled Fan’s group to combine formal methods with natural language processing, which, she says, allowed the team to go from developing autoregressive task and motion planning for robots to creating LLM-based agents for travel planning, decision-making, and verification. “That work was the first exploration of using an LLM to translate any free-form natural language into some specification that robot can understand, can execute. That’s something that I’m very proud of, and very difficult at the time,” says Fan. Further, through joint investigation, her team has been able to improve LLM reasoning, work that “would be impossible without the IBM support,” she says.

Through the lab, Faez Ahmed’s collaboration facilitated the development of machine-learning methods to accelerate discovery and design within complex mechanical systems. Their Linkages work, for instance, employs “generative optimization” to solve engineering problems in a way that is both data-driven and precise; more recently, they’re applying multi-modal data and LLMs to computer-aided design. Ahmed states that AI is frequently applied to problems that are already solvable but could benefit from increased speed or efficiency; however, challenges like mechanical linkages that were deemed “almost unsolvable” are now within reach. “I do think that is definitely the hallmark [of our MIT-IBM team],” says Ahmed, praising the achievements of his MIT-IBM group, which is co-led by Akash Srivastava and Dan Gutfreund of IBM.

What began as initial collaborations for each MIT faculty member has evolved into a lasting intellectual relationship, where both parties are “excited about the science,” and “student-driven,” Ahmed adds. Taken together, the experiences of Jacob Andreas, Yoon Kim, Justin Solomon, Chuchu Fan, and Faez Ahmed speak to the impact that a durable, hands-on, academia-industry relationship can have on establishing research groups and ambitious scientific exploration.


Meta Is Shutting Down Horizon Worlds on Meta Quest

Pour one out from your digital bottle, because Meta is shutting down the virtual reality experience of Horizon Worlds.

Meta sent an email blast to Horizon Worlds users today stating that the social VR world will officially end on its Quest VR headsets; starting March 31, Horizon Worlds will no longer be in the Quest store. Some Horizon-specific perks, including Meta Credits, avatars, and some digital clothes and in-world purchases, will also be removed. The VR worlds will be shutting down entirely on June 15, after which the service will be available only as a mobile platform.

The move comes after Meta made widespread cuts to its Reality Labs division in February, laying off 10 percent of employees in its VR department.

Horizon Worlds was Meta’s grand foray into building out the metaverse, the aspiration of a fully virtual environment inspired by Neal Stephenson’s Snow Crash. The company believed in the effort so much that it changed its name from Facebook to Meta in support of its VR endeavors.

Horizon Worlds is one of the less popular VR services out there, if the borderline glee you can find in the comments of the r/oculus subreddit thread about the service ending is anything to go by. It has been widely mocked since it was first announced, especially due to a rocky start. Player avatars didn’t have legs and looked like such dead-eyed monsters that Meta CEO Mark Zuckerberg’s uncanny avatar became a meme.

Almost immediately, Horizon Worlds was populated primarily by children. But screeching kiddos throwing digital doughnuts around are not the most stable or profitable user base. Meta pumped billions of dollars into the service, arranging high-profile partnerships with other brands and artists to have virtual concerts by Imagine Dragons and Coldplay. Even with all that pomp, Meta’s proprietary-verse has always been less popular than VRChat, the social service that people actually seem to like enough to attend virtual raves and presidential elections.

As Meta shifts its focus to artificial intelligence and its Ray-Ban smart glasses, it has drastically cut its investments in its metaverse divisions, including stopping updates to very popular services like Supernatural Fitness.

“Meta’s pivot on Horizon Worlds is the predicted and inevitable outcome of a big, risky bet that never found an audience,” wrote Mike Proulx, vice president and research director at market research firm Forrester, in an email to WIRED. “Meta was trying to solve for a consumer problem that doesn’t exist. You can’t build a mass social platform reliant on hardware most people neither own nor want to wear for more than short bursts.”


Why DoorDash Will Now Pay You to Eat at a Restaurant

At The Eighty-Six in Manhattan, exclusivity is the point.

The luxe, 11-table steakhouse is the sort of place that lavishes caviar and aged mimolette cheese on its potatoes, and crows that your market-price duck was raised by one Dr. Joe Jurgielewicz, DVM, in the rural hills of Pennsylvania. Taylor Swift has reportedly dined there in a Miu Miu skirt.

Reservations are a scarce commodity at the restaurant, and New York law forbids you from selling one. “Access is the main asset,” wrote food writer Helen Rosner in a recent New Yorker review of The Eighty-Six. “The product is the door, and what a door! An impossible door!”

What may be more surprising is that arguably New York’s most exclusive restaurant will set aside space for diners only via “table drops” for The Eighty-Six on DoorDash, a food logistics app that until recently placed its bets on the notion that you’d rather eat burritos at home.

After acquiring hospitality tech company SevenRooms last year, DoorDash has plunged whole-body into a raging war with competing tech companies such as Resy and OpenTable for control over an increasingly scarce asset: seats at some of the most exclusive restaurants in the country.

“We’re offering value to customers to discover new restaurants for casual dining,” DoorDash CEO Tony Xu told investors in a February 2026 earnings call. “We’re also doing it in the form of access, where we’re offering reservations to some of the best restaurants.”

Equally unreservable New York sister restaurants Or’esh and The Corner Store, likewise, will not save you a seat except through DoorDash’s app and website. Exclusive arrangements with DoorDash and SevenRooms also hold for some of the most gate-kept restaurants in Miami, like Michelin-recognized Ecuadorian hot spot Cotoa or Miami-London steakhouse Sparrow Italia.

So far, DoorDash has rolled out reservations in 13 of the largest US cities, including Los Angeles, Las Vegas, and Chicago. But many more are slated.

Deep in the Reservation Wars

Maybe it’s the aftermath of the Covid-19 pandemic, which accustomed the country to wildly long incubation times on plans for food and drink. Or maybe it’s just a sign of growing wealth inequality, as middlebrow restaurants struggle but ultra-exclusive experiences for the wealthy proliferate.

But as WIRED’s colleagues at Bon Appetit noted a few years back, restaurant reservation mania has in recent years spiraled into competitive sport, leading to a gray economy of gig-work line-standers and a damaging black market of restaurant scalpers who hoarded reservations and resold seats to high bidders.

A parade of American cities, beginning with New York, have since outlawed reservation scalping after lobbying by restaurant groups. What’s taken its place is a new pitched battle among restaurant reservation apps.

DoorDash is the newest and perhaps most disruptive entrant in these reservation wars, as technology apps leverage restaurant access to bring diners into an entire loyalty-based ecosystem—often through partnerships with credit card companies.

OpenTable is now offering dining credits and reserved tables at luxe restaurants in a number of major American cities for Chase Sapphire cardholders. Reservation app Resy, owned by credit card company American Express, has absorbed event ticketing app Tock to take this even further, creating an ecosystem of exclusive “experiences” and loyalty rewards for American Express cardholders.

DoorDash Moving in Fast

But no one seems to be moving in as quickly, or as aggressively, as DoorDash, already the largest dining delivery app in the country before folding in SevenRooms. DoorDash has been quick to press this advantage by locking down exclusive access to some of the most in-demand tables across the country.

In Manhattan alone, more than 200 restaurants have current or pending deals with DoorDash to offer exclusive tables, times, or reservations. At the February earnings call, DoorDash CEO Xu laid out the strategy: becoming an everything app for restaurants to capture customers.


AI tools offer ‘near-real-time’ analysis of data from seized mobile phones and computers | Computer Weekly

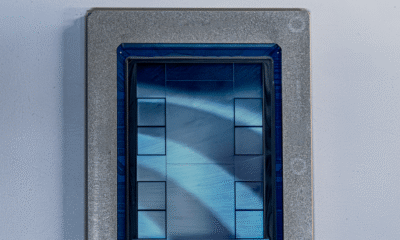

Artificial intelligence (AI)-powered tools developed by Israeli digital intelligence company Cellebrite will give police investigators the capability to interrogate call records, text messages, images and videos stored on mobile phones and other electronic devices at ultra-high speed.

The company has developed AI tools that allow police investigators to interrogate data retrieved from multiple electronic devices, discover links between different data sets, map the location of phones over time and construct timelines of events.

Cellebrite’s Guardian Investigate platform aims to help law enforcement agencies investigate major incidents, such as shootings, terrorist attacks or large organised crime investigations, by gaining rapid insights from data as it is gathered.

The platform allows investigators from multiple departments and agencies to work on the same data – which is held on a central cloud platform – allocate tasks and identify further avenues of investigation.

The technology enables investigators to analyse data as soon as it is retrieved from a mobile phone and uploaded into Cellebrite’s Guardian cloud for decoding and analysis, without having to wait for forensic experts to submit reports on each mobile device.

Mapping people’s movements

The company demonstrated how its platform is able to take cell site data, which records the phone masts a mobile phone has connected to, along with data from Google Maps, to track the movements of an individual over time.

The platform acts as a replacement for whiteboards used by police during investigations to map locations, timelines and communications, including social media posts and video, with AI tools able to find relationships between them.

Guardian Investigate uses AI to spot anomalous behaviour – for example, it can identify when two people who are in regular phone contact suddenly stop communicating, or when someone suddenly puts their phone into airplane mode.

Cellebrite has incorporated technology from its 2021 acquisition of open source intelligence company Digital Clues to develop AI agents that can identify the owner of an email or a phone number from publicly available information on the internet.

AI is able to identify owners of mobile devices

Matt Goeckel, a former law enforcement official and now technical marketing director at Cellebrite, demonstrated how the tool is able to autonomously identify the owner of a mobile phone by analysing the emails used to log in to its apps and linking them to their owner through open source research on the internet.

“I can ask Investigate AI to go off and do an open source search, and see what’s available about this particular individual,” he told Computer Weekly. “We will find profile pictures, we will find additional names, we will find user names, phone numbers, addresses. This is all public information.”

Goeckel said that one of the platform’s most powerful capabilities is its ability to find inconsistencies in large volumes of evidence, such as when two witnesses give contradictory accounts in witness statements. An investigator would normally spot that, but “as the cases grow, the risk of missing something becomes greater”, he said.

Because the platform is able to hold all the data relating to a particular investigation, it is able to eliminate “swivel chairing”, where investigators have to look at one screen to view a surveillance video and another to view call records.

Rapid summaries of text messages

Brazoria County Sheriff’s Office in Texas, which carried out a pilot of Guardian Investigate, claims that Cellebrite’s AI technology was able to summarise the contents of a mobile device holding 200,000 text messages in a fraction of the time taken by human investigators.

The AI software “instantly” revealed connections that would have been “nearly impossible” to identify manually, allowing analysts to create operational intelligence packages in hours rather than in months, it said in a testimonial written for Cellebrite.

Another tool, Cellebrite Genesis, is a standalone AI analysis tool for organisations that want to keep their data in-house, with similar capabilities, operating through a ChatGPT-like interface.

Cellebrite claims that in one counter-terrorism case in Australia, Genesis was able to uncover evidence of a planned terrorist attack in three minutes, a task that would take a human analyst two to three weeks.

According to Cellebrite, digital evidence is now used in over 90% of criminal cases, including data from mobile phones, social media and, in recent years, drones.

In the past, investigators typically collected a phone from a crime scene and sent it to a lab for analysis, where an expert would pick out data based on a search warrant and send it back to the investigator.

Ashely Hernandez, a product management executive at Cellebrite, told Computer Weekly its AI technology will allow investigators to work directly with the data from seized devices without having to wait for experts to review the data and send in reports. “It is as close to real time as we can make it happen,” she said.

The human in the loop

Cellebrite says its AI tools allow analysts to check the AI’s decisions by reviewing the source evidence it used, and that the tools keep a “human in the loop” by design to guard against errors and the possibility of AI hallucinations.

Peter Sommer, a forensic expert familiar with Cellebrite’s technology, said that while AI is good at sorting through large quantities of data, its results have to be manually checked if the evidence is to stand up in court.

“In any situation where you use AI in a forensic science situation, you have to go back to the original data,” he added. “AI is fine at sorting through large quantities of data, but having pointed you in the right direction, you then have to go back manually and check it. There are just too many things that can go wrong with AI if people just take the immediate results.”
