Tech

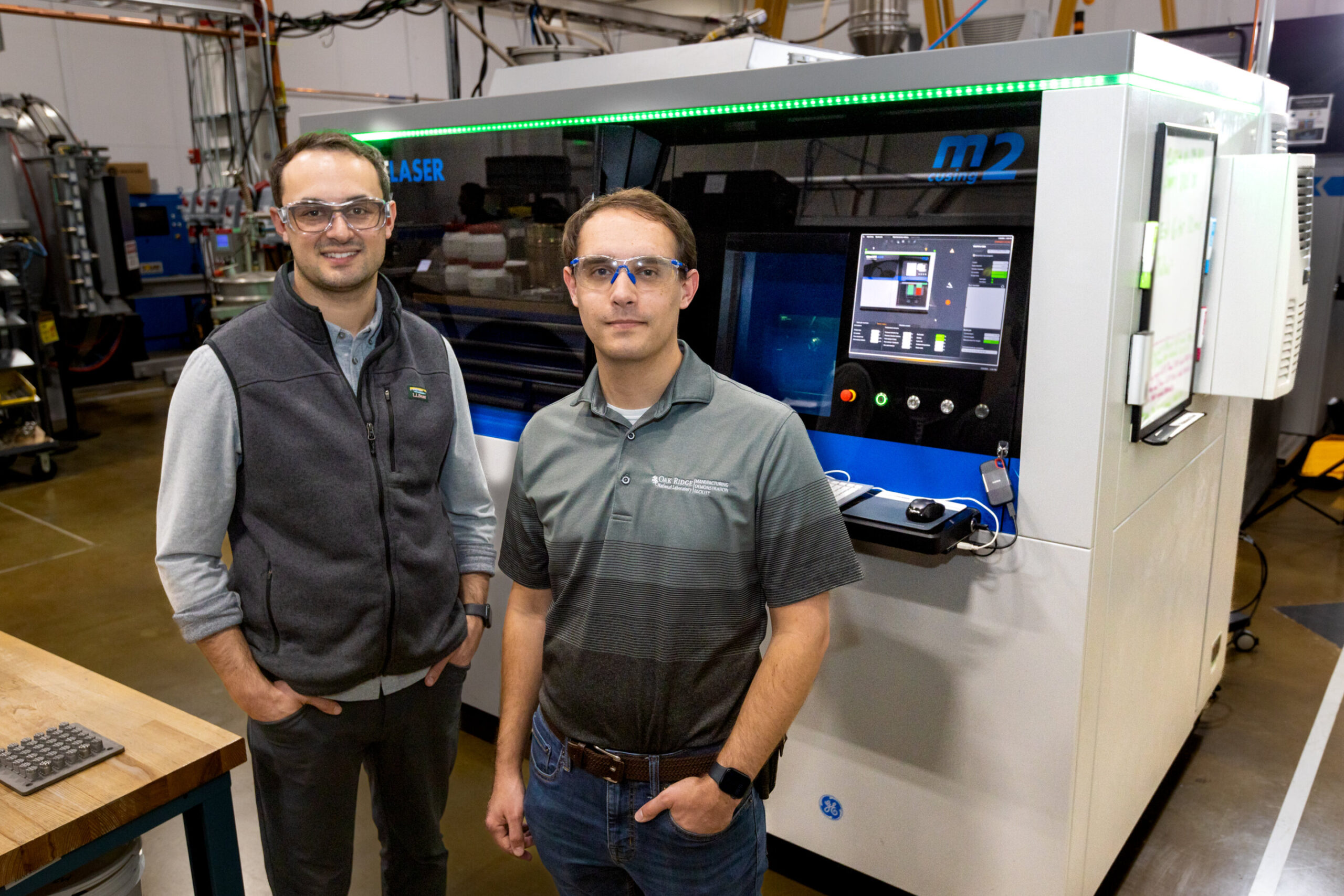

New dataset for smarter 3D printing released

The team behind Oak Ridge National Laboratory’s Peregrine software, which monitors and analyzes parts created through powder bed additive manufacturing, has released its most advanced dataset to date.

The dataset is titled “In situ Visible Light and Thermal Imaging Data from a Laser Powder Bed Fusion Additive Manufacturing Process Co-Registered to X-ray Computed Tomography and Fatigue Data.”

In its ongoing effort to support the nation’s additive manufacturing industry with comprehensive datasets, the Department of Energy’s Manufacturing Demonstration Facility produced this new dataset as part of a study to establish strong correlations between manufacturing anomalies, internal defects, and resulting mechanical performance.

This dataset contains state-of-the-art monitoring data for laser powder bed fusion (L-PBF), which uses a laser to melt and fuse metal powder to create the layers of a metal part. The dataset includes machine process parameters and sensor data, geometries, and detailed images of the 3D-build process captured from multiple angles and lighting types, combining high-resolution visible and near-infrared imaging along with X-ray scans of the printed parts.

“Peregrine takes images during printing, using AI to look for anomalies,” said Luke Scime, a researcher in the Manufacturing Systems Analytics Group at ORNL.

“You do that for every single layer, and you build up a three-dimensional map of all the locations that might have issues, and then you try to predict which of those might cause a problem in the final part.”
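The layer-by-layer mapping Scime describes can be pictured with a minimal sketch. It is illustrative only: the image format, the `flag_anomalies` and `build_anomaly_map` names, and the fixed brightness threshold are all assumptions, and the threshold merely stands in for Peregrine's neural network, which is not reproduced here.

```python
from typing import List, Tuple

# Hypothetical format: one grayscale image (rows of pixel values) per printed layer.
Layer = List[List[float]]


def flag_anomalies(layer: Layer, threshold: float) -> List[Tuple[int, int]]:
    """Return (row, col) positions whose pixel value exceeds the threshold.

    A plain threshold is a stand-in for the real detector, not Peregrine's method.
    """
    return [
        (r, c)
        for r, row in enumerate(layer)
        for c, value in enumerate(row)
        if value > threshold
    ]


def build_anomaly_map(
    layers: List[Layer], threshold: float = 0.8
) -> List[Tuple[int, int, int]]:
    """Stack per-layer detections into (layer_index, row, col) locations."""
    voxels: List[Tuple[int, int, int]] = []
    for z, layer in enumerate(layers):
        voxels.extend((z, r, c) for r, c in flag_anomalies(layer, threshold))
    return voxels


layers = [
    [[0.1, 0.2], [0.9, 0.1]],    # layer 0: one bright pixel
    [[0.1, 0.1], [0.1, 0.1]],    # layer 1: clean
    [[0.85, 0.1], [0.95, 0.2]],  # layer 2: two bright pixels
]
print(build_anomaly_map(layers))  # [(0, 1, 0), (2, 0, 0), (2, 1, 0)]
```

Each tuple marks a location in the build volume that a later step could score for its likely effect on the finished part.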

The Peregrine software’s custom algorithm uses pixel values of images to scrutinize the composition of edges, lines, corners, and textures, and sends an alert to operators about any problems during the printing process so they can make quick adjustments.

Through its Dynamic Multilabel Segmentation Convolutional Neural Network, or DMSCNN, Peregrine looks at data from multiple sensors to detect problems and send an alert. For instance, L-PBF prints experience spatter, where molten material is ejected as the laser melts the metal powder. These spattered particles can land elsewhere on the part, affecting the overall quality.

The new dataset includes all DMSCNN segmentation results and fatigue-tested specimens subjected to such spatter-induced perturbations.

This comprehensive ensemble of information supports AI model development for digital qualification of AM processes. By using the improved open-source Peregrine dataset, researchers and manufacturers can develop even smarter, adaptive quality assurance and quality control systems for their 3D-printed parts.

Other ORNL researchers who contributed to the new dataset include Zackary Snow, Chase Joslin, William Halsey, Andres Marquez Rossy, Amir Ziabari, Vincent Paquit, and Ryan Dehoff.

More information:

Zackary Snow et al, In situ Visible Light and Thermal Imaging Data from a Laser Powder Bed Fusion Additive Manufacturing Process Co-Registered to X-ray Computed Tomography and Fatigue Data, Oak Ridge National Laboratory (ORNL), Oak Ridge, TN (United States); Oak Ridge Leadership Computing Facility (OLCF) (2025). DOI: 10.13139/ornlnccs/2524534

Citation: New dataset for smarter 3D printing released (2025, August 25), retrieved 25 August 2025 from https://techxplore.com/news/2025-08-dataset-smarter-3d.html



How to Watch the Lyrids Meteor Shower at Its Peak

In mid-April, astronomy enthusiasts will be able to enjoy one of the classic celestial spectacles. The meteor shower known as the Lyrids will illuminate the sky, especially in the northern hemisphere, and anyone will be able to see it with the naked eye, weather permitting—if they know where to look.

The Lyrids began to appear as early as April 14, but their activity peaks between the night of April 21 and the early morning of April 22, according to NASA. During those hours, the shower will show 15 to 20 meteors per hour under dark skies.

The shower gets its name because the meteors appear to emerge from the constellation Lyra. Locating the radiant is simple if you use an astronomical mapping app: Just find Vega, the fifth brightest star in the sky, surpassed only by Sirius, Canopus, Alpha Centauri A, and Arcturus. Once you locate it, look around it; the luminous traces of the Lyrids will seem to be projected from that point due to a perspective effect. Keep in mind that it takes 20 to 30 minutes for the human eye to adjust to darkness.

The moon will be in early crescent phase during the peak, so its light will interfere very little. With a dark sky, meteors should stand out easily. The shower is usually visible from 10 pm to dawn, although early morning offers the best conditions. It is best to stay away from light pollution and, if possible, to observe from high ground. An outing to the mountains works well.

Each meteor shower has a different origin. In April, Earth crosses the cloud of fragments left by comet C/1861 G1 (Thatcher) in its orbit around the sun. This comet, discovered in 1861, takes about 415 years to complete its journey. The grains of ice and rock that it released centuries ago enter the atmosphere at high speed and produce the flashes we know as the Lyrids.

After the Lyrids, the calendar still holds several spectacles for those who follow the night sky. The Eta Aquarids will arrive in May with debris from Halley’s Comet. The Perseids will appear in August, the Orionids will return in October, and the year will close with the Leonids in November and the Geminids in December. The latter is considered the most intense and reliable shower on the calendar.

This story originally appeared on WIRED en Español and has been translated from Spanish.


A Humanoid Robot Set a Half-Marathon Record in China

Over the weekend in China, a humanoid robot shattered the world half-marathon record (the human record, that is) by nearly seven minutes.

The star performer was a robot developed by the Chinese company Honor (the smartphone maker), which finished the 13.1-mile race in 50 minutes, 26 seconds. The human record, set by Ugandan Olympic medalist Jacob Kiplimo, is 57 minutes, 20 seconds. The result marks an impressive milestone especially considering that, just a year earlier, the fastest robot at this half-marathon event took two and a half hours to complete the same distance.
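The gap between the two finishing times can be checked with a few lines of arithmetic. This is a minimal sketch: the helper names are hypothetical, and the only inputs are the times and the 13.1-mile distance quoted above.

```python
def to_seconds(minutes: int, seconds: int) -> int:
    """Convert a minutes-and-seconds time to total seconds."""
    return minutes * 60 + seconds


def format_mmss(total_seconds: float) -> str:
    """Format a duration in seconds as M:SS, rounded to the nearest second."""
    whole = round(total_seconds)
    return f"{whole // 60}:{whole % 60:02d}"


HALF_MARATHON_MILES = 13.1  # distance quoted in the article

robot = to_seconds(50, 26)  # winning robot's finishing time
human = to_seconds(57, 20)  # human record quoted in the article

margin = human - robot
print("Margin:", format_mmss(margin))                                    # 6:54
print("Robot pace per mile:", format_mmss(robot / HALF_MARATHON_MILES))  # 3:51
print("Human pace per mile:", format_mmss(human / HALF_MARATHON_MILES))  # 4:23
```

The margin works out to 6 minutes, 54 seconds, which is the "seven minutes" cited in round numbers.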

But Honor’s robot was not the only participant. The event featured more than 100 humanoid robots from 76 institutions across China. The robots lined up alongside 12,000 human runners in Beijing’s E-Town, albeit on separate courses to avoid accidents. The contrast in performance between humans and robots was more than evident.

Run, Robot, Run

A humanoid robot is designed to mimic the structure and movement of the human body, with legs, arms, and sensors that allow it to interact with its environment. In this case, the winning robot incorporated features inspired by elite runners: long legs (almost a meter), advanced balance systems, and a liquid cooling mechanism, similar to that of smartphones, to prevent overheating during the race.

In addition, many of the participating robots operated autonomously, meaning without direct human control. Thanks to artificial intelligence algorithms, they could adjust their pace, maintain balance, and adapt to the terrain in real time. Notably, the Honor robot that achieved the 50-minute mark operated autonomously. The Chinese manufacturer presented another robot, operated by remote control, that ran the same stretch in even less time: 48 minutes, 19 seconds.

As expected, there were some accidents in the race. Some robots fell down, others veered off the path, and several needed technical assistance along the way. While the physical performance of humanoid robots has advanced rapidly, their reliability is still developing. Of course, the laughter and jeers are no longer as frequent as they used to be, replaced by applause and exclamations of surprise.

Robot Superiority

Just like the robots that went viral for their impressive martial arts display a few weeks ago, this long-distance race is part of a broader strategy by China to show off its leadership in the development of advanced robots.

You don’t need to be a robotics expert to see that this achievement demonstrates that machines can outperform humans at specific physical tasks under controlled conditions. (It’s hard to imagine that the winning robot could achieve the same result, for example, if it started to rain during the race.) But humans still have a few tricks up their sleeve: Running in a straight line is very different from performing complex real-world activities, such as manipulating delicate objects or interacting socially.

However, it’s understandable that the image of a robot crossing the finish line in record time, ahead of human athletes, raises several questions. Is this the beginning of a new era in which machines redefine physical limits?

One could argue that a car is a machine, and those have always been faster than humans. But a humanoid robot is designed to mimic humans. It’s more alarming to see one beat humanity at its own game—even if so many of them are still tripping over themselves.

This story originally appeared in WIRED en Español and has been translated from Spanish.


War Memes Are Turning Conflict Into Content

As ceasefire announcements between the US and Iran—and separately between Israel and Lebanon—dominated headlines over the past two weeks, they also prompted a look back at how war spread online: through memes.

There were jokes about conscription. Captions about getting drafted, but at least with a Bluetooth device. The song “Bazooka” went viral, with users lip-syncing to: “Rest in peace my granny, she got hit by a bazooka.” Military filters followed. So did posts about Americans wanting to be sent to Dubai “to save all the IG models.”

Across the Gulf, the tone was different but the instinct was the same. Memes joked that Iran was replying to Israel faster than the person you’re thinking about. Delivery drivers were shown “dodging missiles.” “Eid fits” became hazmat suits and tactical vests.

Dark humor is one of the oldest responses to fear, a way of reclaiming control, however briefly, over events that offer none. Variations of that idea appear across psychology and philosophy, including Freud’s relief theory, which frames humor as a release of tension.

But social media changes the scale and speed of that instinct.

A joke once shared within a small community can become a global template in minutes. Algorithms do not reward depth or accuracy; they reward engagement. The memes that travel fastest are usually stripped of context, easy to recognize and simple to remix.

Middle East scholar and media analyst Adel Iskandar traces political satire back centuries, from banned satirical papyri in ancient Egypt to cartoons during revolutions and gallows humor in modern wars. “Where there is hardship, there is satire,” he says. “Where there is loss of hope, there is hope in comedy.”

That tradition still exists online. But today it is fused with recommendation systems designed to keep attention moving.

Memes Spread Faster Than Facts

The word “meme” was coined by Richard Dawkins in his 1976 book The Selfish Gene, where he described how ideas replicate like genes. On today’s internet, replication follows platform logic.

Fitness means generality. A meme does not need to be accurate. It needs to feel familiar. It needs the right format, paired with trending audio and the right emotional shorthand.

“A meme is like a virus,” Iskandar says. “If it doesn’t travel, it’ll die.”

The most visible response online is not always the truest one. It is often just the easiest to spread. And once context disappears, one crisis can start to resemble any other.

Geography shapes humor too, and adds another level of tension. “If you live far away from the threat, you’re capable of producing content that ridicules it with an element of safety,” says Iskandar. “Whereas if you happen to be within close proximity, it is more of a fatalism.”

That divide matters. For some users, war exists mainly as mediated spectacle: clips, edits, graphics, headlines, and reaction posts. For others, it is sirens, uncertainty, disrupted flights, rising prices, and messages checking who is safe.

The same meme can function as entertainment in one country and emotional survival in another. Take the American experience of violence, which Sut Jhally, professor of communication at the University of Massachusetts Amherst, says “is very mediated.”

What much of the Western world has consumed instead is what cultural critic George Gerbner called “happy violence”: spectacular, consequence-free, and detached from the aftermath.

Jhally argues that the September 11 attacks remain the defining modern American experience of war-adjacent political violence. Much else has been cinematic: distant invasions, blockbuster destruction, video-game logic, apocalypse franchises.

The teenager from the Midwest joking about being drafted is drawing from zombie films and superhero apocalypses. “There is almost no discussion about what an actual Third World War would look like,” Jhally says. “People do not have a perception of what that really looks like.”
