Tech

A new way to test how well AI systems classify text

Is this movie review a rave or a pan? Is this news story about business or technology? Is this online chatbot conversation veering off into giving financial advice? Is this online medical information site giving out misinformation?

These kinds of automated conversations, whether they involve seeking a movie or restaurant review or getting information about your bank account or health records, are becoming increasingly prevalent. More than ever, such evaluations are being made by highly sophisticated algorithms, known as text classifiers, rather than by human beings. But how can we tell how accurate these classifications really are?

Now, a team at MIT’s Laboratory for Information and Decision Systems (LIDS) has come up with an innovative approach that not only measures how well these classifiers are doing their job, but also goes one step further and shows how to make them more accurate.

The new evaluation and remediation software was developed by Kalyan Veeramachaneni, a principal research scientist at LIDS, his students Lei Xu and Sarah Alnegheimish, and two others. The software package is being made freely available for download by anyone who wants to use it.

The team’s results were published on July 7 in the journal Expert Systems in a paper by Xu, Veeramachaneni, and Alnegheimish of LIDS, along with Laure Berti-Equille at IRD in Marseille, France, and Alfredo Cuesta-Infante at the Universidad Rey Juan Carlos, in Spain.

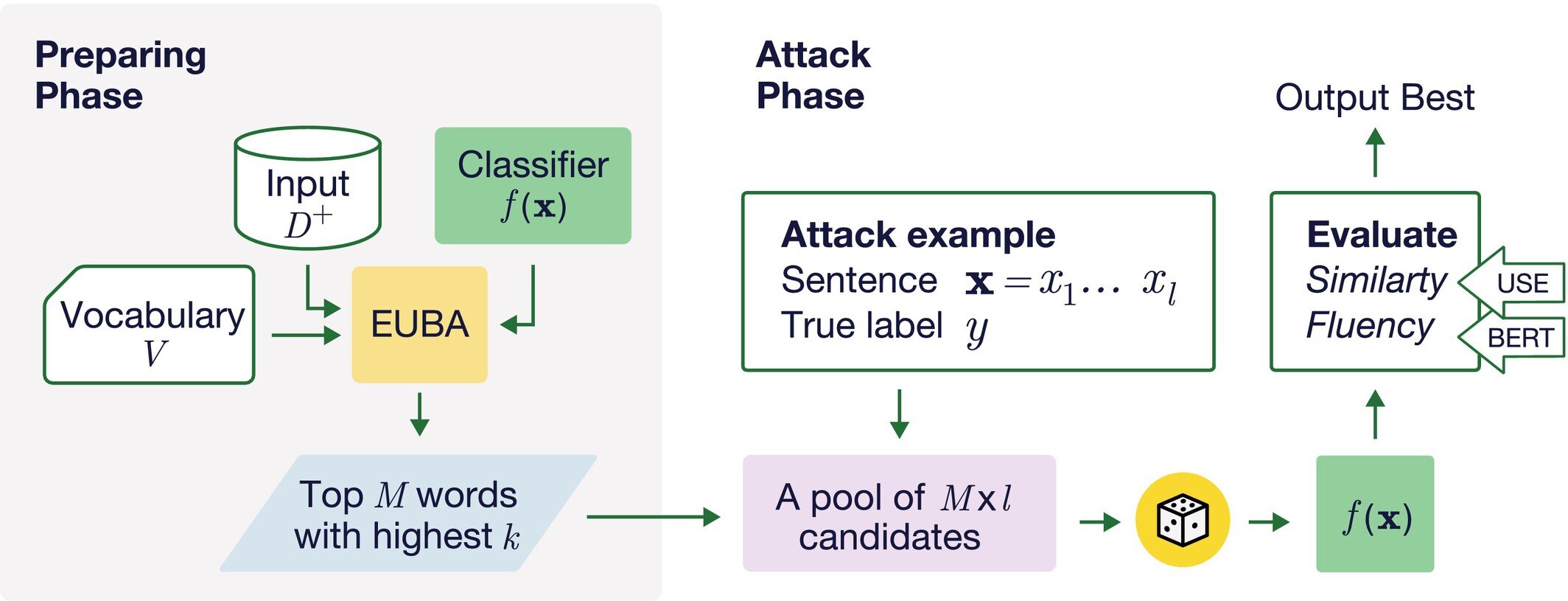

A standard method for testing these classification systems is to create what are known as synthetic examples—sentences that closely resemble ones that have already been classified. For example, researchers might take a sentence that has already been tagged by a classifier program as being a rave review, and see if changing a word or a few words while retaining the same meaning could fool the classifier into deeming it a pan. Or a sentence that was determined to be misinformation might get misclassified as accurate. This ability to fool the classifiers makes these adversarial examples.

People have tried various ways to find the vulnerabilities in these classifiers, Veeramachaneni says. But existing methods of finding these vulnerabilities have a hard time with this task and miss many examples that they should catch, he says.

Increasingly, companies are trying to use such evaluation tools in real time, monitoring the output of chatbots used for various purposes to try to make sure they are not putting out improper responses. For example, a bank might use a chatbot to respond to routine customer queries such as checking account balances or applying for a credit card, but it wants to ensure that its responses could never be interpreted as financial advice, which could expose the company to liability.

“Before showing the chatbot’s response to the end user, they want to use the text classifier to detect whether it’s giving financial advice or not,” Veeramachaneni says. But then it’s important to test that classifier to see how reliable its evaluations are.

“These chatbots, or summarization engines or whatnot, are being set up across the board,” he says, both to deal with external customers and for use within an organization, for example to provide information about HR issues. It’s important to put these text classifiers into the loop to detect things the chatbots are not supposed to say, and to filter those out before the output is transmitted to the user.
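As a rough illustration of putting a classifier "into the loop," the sketch below gates a chatbot's draft reply on a classifier's verdict. Everything here is assumed for illustration: `toy_advice_classifier` is a hypothetical keyword matcher standing in for a real text classifier, and `FALLBACK` and `guarded_reply` are made-up names, not part of the MIT team's software.

```python
from typing import Callable

# Hypothetical canned reply shown when a draft is blocked.
FALLBACK = "I'm sorry, I can't help with that. Please contact a licensed advisor."


def toy_advice_classifier(text: str) -> bool:
    """Hypothetical stand-in for a real classifier: flags advice-like phrases."""
    phrases = ("you should invest", "i recommend buying", "you should sell")
    lowered = text.lower()
    return any(phrase in lowered for phrase in phrases)


def guarded_reply(draft: str, is_financial_advice: Callable[[str], bool]) -> str:
    """Return the draft only if the classifier does not flag it."""
    return FALLBACK if is_financial_advice(draft) else draft


if __name__ == "__main__":
    print(guarded_reply("Your balance is $120.", toy_advice_classifier))
    print(guarded_reply("You should invest in tech stocks.", toy_advice_classifier))
```

The filter is only as reliable as the classifier behind it, which is why testing that classifier matters.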

That’s where the use of adversarial examples comes in—those sentences that have already been classified but then produce a different response when they are slightly modified while retaining the same meaning. How can people confirm that the meaning is the same? By using another large language model (LLM) that interprets and compares meanings.

So, if the LLM says the two sentences mean the same thing, but the classifier labels them differently, “that is a sentence that is adversarial—it can fool the classifier,” Veeramachaneni says. And when the researchers examined these adversarial sentences, “we found that most of the time, this was just a one-word change,” although the people using LLMs to generate these alternate sentences often didn’t realize that.
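The test described above can be sketched in a few lines: a rewrite counts as adversarial when a meaning checker says the two sentences match but the classifier labels them differently. This is a minimal sketch under stated assumptions, not the team's code: `toy_sentiment` is a deliberately brittle hypothetical classifier, and `toy_same_meaning` uses a small hand-written synonym table in place of the LLM comparison the researchers describe.

```python
from typing import Callable

# Hypothetical synonym table standing in for an LLM's meaning comparison.
SYNONYMS = {"great": {"superb", "excellent"}}


def toy_sentiment(text: str) -> str:
    """Hypothetical brittle classifier: 'positive' only if it sees 'great'."""
    return "positive" if "great" in text.lower().split() else "negative"


def toy_same_meaning(a: str, b: str) -> bool:
    """True if the sentences differ in at most one word and that word is a listed synonym."""
    words_a, words_b = a.lower().split(), b.lower().split()
    if len(words_a) != len(words_b):
        return False
    diffs = [(x, y) for x, y in zip(words_a, words_b) if x != y]
    return len(diffs) <= 1 and all(y in SYNONYMS.get(x, set()) for x, y in diffs)


def is_adversarial(
    original: str,
    candidate: str,
    classify: Callable[[str], str],
    same_meaning: Callable[[str, str], bool],
) -> bool:
    """Same meaning, different label: the candidate fools the classifier."""
    return same_meaning(original, candidate) and classify(original) != classify(candidate)


if __name__ == "__main__":
    print(is_adversarial("the film was great", "the film was superb",
                         toy_sentiment, toy_same_meaning))
```

In this toy setup a single-word swap is enough to flip the label, which mirrors the one-word changes the researchers report.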

Further investigation, using LLMs to analyze many thousands of examples, showed that certain specific words had an outsized influence in changing the classifications, and therefore the testing of a classifier’s accuracy could focus on this small subset of words that seem to make the most difference. They found that one-tenth of 1% of the 30,000 words in the system’s vocabulary, or about 30 words, could account for almost half of all these reversals of classification in some specific applications.
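To make the "small subset of words" idea concrete, the sketch below counts which substituted words caused label flips and reports what share of all flips the top slice of the vocabulary accounts for. The function name `flip_coverage` and the toy flip log are assumptions made for illustration; the numbers are invented and are not the paper's data.

```python
from collections import Counter
from typing import List, Tuple


def flip_coverage(flip_words: List[str], vocab_size: int, top_fraction: float) -> Tuple[int, float]:
    """Return (k, share), where k is the number of top words considered and
    share is the fraction of observed label flips those k words caused."""
    k = max(1, round(vocab_size * top_fraction))
    counts = Counter(flip_words)
    covered = sum(n for _, n in counts.most_common(k))
    return k, covered / len(flip_words)


# Hypothetical log: one entry per flipped classification, naming the swapped-in word.
toy_flips = ["great"] * 3 + ["superb"] * 2 + ["film", "plot", "actor", "ending", "score"]

if __name__ == "__main__":
    # One-tenth of 1% of a 30,000-word vocabulary is 30 words.
    print(flip_coverage(toy_flips, vocab_size=30_000, top_fraction=0.001)[0])
    # In a toy 1,000-word vocabulary, the top 0.2% (2 words) cause half the flips.
    print(flip_coverage(toy_flips, vocab_size=1_000, top_fraction=0.002))
```

Ranking words this way is what lets a search concentrate on a few dozen candidates instead of the whole vocabulary.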

Lei Xu Ph.D. ’23, a recent graduate from LIDS who performed much of the analysis as part of his thesis work, “used a lot of interesting estimation techniques to figure out what are the most powerful words that can change the overall classification, that can fool the classifier,” Veeramachaneni says.

The goal is to make it possible to do much more narrowly targeted searches, rather than combing through all possible word substitutions, thus making the computational task of generating adversarial examples much more manageable. “He’s using large language models, interestingly enough, as a way to understand the power of a single word.”

Then, also using LLMs, he searches for other words that are closely related to these powerful words, and so on, allowing for an overall ranking of words according to their influence on the outcomes. Once these adversarial sentences have been found, they can be used in turn to retrain the classifier to take them into account, increasing the robustness of the classifier against those mistakes.
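The retraining step can be illustrated with a toy example: each adversarial rewrite is added to the training data with the label of the sentence it was derived from, and the classifier is trained again. This is a hedged sketch, not SP-Defense itself; `train` is a hypothetical word-memorizing "trainer" chosen only because it is small enough to read at a glance.

```python
from typing import Callable, List, Tuple

Example = Tuple[str, str]  # (sentence, label)


def train(examples: List[Example]) -> Callable[[str], str]:
    """Toy 'training': remember words seen in positive examples but never in negative ones."""
    pos, neg = set(), set()
    for text, label in examples:
        (pos if label == "positive" else neg).update(text.lower().split())
    positive_only = pos - neg

    def classify(text: str) -> str:
        return "positive" if positive_only & set(text.lower().split()) else "negative"

    return classify


train_set: List[Example] = [
    ("the film was great", "positive"),
    ("the film was awful", "negative"),
]
# Hypothetical adversarial pairs: (original sentence, meaning-preserving rewrite).
adversarial_pairs = [("the film was great", "the film was superb")]

# Each rewrite inherits the label of its original sentence.
labels = dict(train_set)
augmented = train_set + [(rewrite, labels[orig]) for orig, rewrite in adversarial_pairs]

before = train(train_set)
after = train(augmented)

if __name__ == "__main__":
    print(before("the film was superb"), "->", after("the film was superb"))
```

The retrained toy classifier no longer falls for the rewrite, which is the effect the retraining is meant to achieve.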

Making classifiers more accurate may not sound like a big deal if it’s just a matter of classifying news articles into categories, or deciding whether reviews of anything from movies to restaurants are positive or negative. But increasingly, classifiers are being used in settings where the outcomes really do matter, whether preventing the inadvertent release of sensitive medical, financial, or security information, or helping to guide important research, such as into properties of chemical compounds or the folding of proteins for biomedical applications, or in identifying and blocking hate speech or known misinformation.

As a result of this research, the team introduced a new metric, which they call p, which provides a measure of how robust a given classifier is against single-word attacks. And because of the importance of such misclassifications, the research team has made its products available as open access for anyone to use. The package consists of two components: SP-Attack, which generates adversarial sentences to test classifiers in any particular application, and SP-Defense, which aims to improve the robustness of the classifier by generating and using adversarial sentences to retrain the model.
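One simple way to picture a robustness measure of this kind is the fraction of sentences whose label survives every single-word substitution that was tried. The sketch below computes that fraction. It is an assumption made for illustration and may differ from the paper's actual definition of the metric; `toy_classifier` and the `substitutes` table are hypothetical, and in practice the substitutions would need to be checked as meaning-preserving.

```python
from typing import Callable, Dict, Iterator, List


def single_word_variants(sentence: str, substitutes: Dict[str, List[str]]) -> Iterator[str]:
    """Yield every sentence obtained by replacing exactly one word with a listed substitute."""
    words = sentence.split()
    for i, word in enumerate(words):
        for sub in substitutes.get(word.lower(), []):
            yield " ".join(words[:i] + [sub] + words[i + 1:])


def single_word_robustness(
    sentences: List[str],
    classify: Callable[[str], str],
    substitutes: Dict[str, List[str]],
) -> float:
    """Fraction of sentences whose label is unchanged by every single-word variant."""
    robust = 0
    for sentence in sentences:
        label = classify(sentence)
        if all(classify(v) == label for v in single_word_variants(sentence, substitutes)):
            robust += 1
    return robust / len(sentences)


def toy_classifier(text: str) -> str:
    """Hypothetical classifier that only knows two positive words."""
    return "positive" if {"great", "excellent"} & set(text.lower().split()) else "negative"


substitutes = {"great": ["excellent", "superb"], "awful": ["terrible"]}
sentences = ["the film was great", "the film was awful", "the plot was excellent"]

if __name__ == "__main__":
    print(single_word_robustness(sentences, toy_classifier, substitutes))
```

Here only the first sentence can be flipped (by swapping in "superb"), so two of the three sentences count as robust.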

In some tests, where competing methods of testing classifier outputs allowed a 66% success rate by adversarial attacks, this team’s system cut that attack success rate almost in half, to 33.7%. In other applications, the improvement was as little as a 2% difference, but even that can be quite important, Veeramachaneni says, since these systems are being used for so many billions of interactions that even a small percentage can affect millions of transactions.

More information:

Lei Xu et al, Single Word Change Is All You Need: Using LLMs to Create Synthetic Training Examples for Text Classifiers, Expert Systems (2025). DOI: 10.1111/exsy.70079

This story is republished courtesy of MIT News (web.mit.edu/newsoffice/), a popular site that covers news about MIT research, innovation and teaching.

Citation: A new way to test how well AI systems classify text (2025, August 14), retrieved 14 August 2025 from https://techxplore.com/news/2025-08-ai-text.html


Tech

Lenovo’s Latest Wacky Concepts Include a Laptop With a Built-In Portable Monitor

Do you like having a second screen with your computer setup? What if your laptop could carry a second screen for you? That’s the idea behind Lenovo’s latest proof of concept, the ThinkBook Modular AI PC, announced at Mobile World Congress in Barcelona.

Lenovo is never shy about showing off wacky, weird concept laptops. We’ve seen a PC with a transparent screen, one with a rollable OLED screen, one with a swiveling screen, and another with a flippy screen. At CES earlier this year, the company showed off a gaming laptop with a display that expands at the push of a button. Sometimes, these concepts turn into real products that go on sale (often in limited quantities).

At MWC 2026, Lenovo trotted out three concepts. While it’s unclear whether any of them will become real, purchasable products, there’s some unique utility here, and a peek at how computing experiences could change in the future.

A Laptop With a Built-In Portable Screen

As someone with a multi-screen setup at home and a fondness for portable monitors, I find the ThinkBook Modular AI PC the most appealing. At first glance, it looks like a normal laptop. Take a look behind, and you’ll notice there’s a second screen magnetically hanging off the back of the laptop, like a koala carrying a baby on its back.

The screen is connected to the laptop using pogo-pin connectors, so you can use it in this state to display content to people in front of you, say, if you were making a presentation during a meeting. Alternatively, you can pop this second screen off, remove a hidden kickstand resting under the laptop, and magnetically attach it to the 14-inch screen so that you have a traditional portable monitor experience. (You’ll need to connect this to the laptop via a USB-C cable in this orientation.)

If you don’t have the desk space for that orientation, you can always remove the keyboard from the base and pop the second screen there—it’ll auto-connect to the laptop via the pogo pins, and you’ll be able to use the Bluetooth keyboard to type on a dual-screen setup that resembles the Asus ZenBook Duo. The whole system is a fantastically portable method of improving productivity on the go, and the laptop isn’t too thick or cumbersome.

Tech

The 5 Big ‘Known Unknowns’ of Donald Trump’s New War With Iran

More recently, Iran has been a regular adversary in cyberspace, and while it hasn’t demonstrated quite the acuity of Russia or China, Iran is “good at finding ways to maximize the impact of their capabilities,” says Jeff Greene, the former executive assistant director of cybersecurity at CISA. Iran was famously responsible for a series of distributed-denial-of-service attacks on Wall Street institutions that worried financial markets, and its 2012 attacks on Saudi Aramco and Qatar’s RasGas were among the earliest destructive infrastructure cyberattacks.

Today, surely, Iran is weighing which of these tools, networks, and operatives it might press into a response—and where, exactly, that response might come. Given its history of terror campaigns and cyberattacks, there’s no reason to think that Iran’s retaliatory options are limited to missiles alone—or even to the Middle East at all.

Which leads to the biggest known unknown of all:

5. How does this end? There’s an apocryphal story about a 1970s conversation between Henry Kissinger and a Chinese leader, told variously as either Mao Tse-tung or Zhou Enlai. Asked about the legacy of the French Revolution, the Chinese leader quipped, “Too soon to tell.” The story almost surely didn’t happen, but it speaks to a larger truth, particularly for societies as old as the 2,500-year-old Persian empire: History has a long tail.

As much as Trump (and the world) might hope that democracy breaks out in Iran this spring, the CIA’s official assessment in February was that if Khamenei were killed, he would likely be replaced by hardline figures from the Islamic Revolutionary Guard Corps. And indeed, the fact that Iran’s retaliatory strikes against other targets in the Middle East continued throughout Saturday, even after the death of many senior regime officials (including, purportedly, the defense minister), belied the hope that the government was close to collapse.

The post-World War II history of Iran has surely hinged on three moments of intersection with American foreign policy: the 1953 CIA coup, the 1979 revolution that removed the shah, and now the 2026 US attacks that have killed its supreme leader. In his recent bestselling book King of Kings, on the fall of the shah, longtime foreign correspondent Scott Anderson writes of 1979, “If one were to make a list of that small handful of revolutions that spurred change on a truly global scale in the modern era, that caused a paradigm shift in the way the world works, to the American, French, and Russian Revolutions might be added the Iranian.”

It is hard not to think today that we are living through a moment equally important in ways that we cannot yet fathom or imagine—and that we should be especially wary of any premature celebration or declarations of success given just how far-reaching Iran’s past turmoils have been.

Defense Secretary Pete Hegseth has repeatedly bragged about how he sees the military and Trump administration’s foreign policy as sending a message to America’s adversaries: “F-A-F-O,” playing off the vulgar colloquialism. Now, though, it’s the US doing the “F-A” portion in the skies over Iran—and the long arc of Iran’s history tells us that we’re a long, long way from the “F-O” part where we understand the consequences.

Let us know what you think about this article. Submit a letter to the editor at mail@wired.com.

Tech

This Backyard Smoker Delivers Results Even a Pitmaster Would Approve Of

While my love of smoked meats is well-documented, my own journey into actually tending the fire started just last spring when I jumped at the opportunity to review the Traeger Woodridge Pro. When Recteq came calling with a similar offer to check out the Flagship 1600, I figured it would be a good way to stay warm all winter.

While the two smokers have a lot in common, the Recteq definitely feels like an upgrade from the Traeger I’ve been using. Not only does it have nearly twice the cooking space, but the huge pellet hopper, rounded barrel, and proper smokestack help me feel like a real pitmaster.

The trade-off is losing some of the usability features that make the Woodridge Pro a great first smoker. The setup isn’t quite as simple, and the larger footprint and less ergonomic conditions require a little more experience or patience. With both options, excellent smoked meat is just a few button presses away, but speaking as someone with both in their backyard, I’ve been firing up the Recteq more often.

Getting Settled

Photograph: Brad Bourque

Setting up the Recteq wasn’t as time-consuming as the Woodridge, but it was more difficult to manage on my own. Some of the steps, like attaching the bull horns to the lid or flipping the barrel onto its stand, would really benefit from a patient friend or loved one. As with most smokers, you’ll need to run a burn-in cycle at 400 degrees Fahrenheit to make sure there’s nothing left over from manufacturing or shipping. Given the amount of setup time and the need to cool down the smoker afterward, I would recommend setting this up Friday afternoon if you want to smoke on a Saturday.
