Tech

How to ensure youth, parents, educators and tech companies are on the same page on AI

Artificial intelligence is now part of everyday life. It’s in our phones, schools and homes. For young people, AI shapes how they learn, connect and express themselves. But it also raises real concerns about privacy, fairness and control.

AI systems often promise personalization and convenience. But behind the scenes, they collect vast amounts of personal data, make predictions and influence behavior, without clear rules or consent.

This is especially troubling for youth, who are often left out of conversations about how AI systems are built and governed.

Concerns about privacy

My research team conducted a national study and heard from youth aged 16 to 19 who use AI daily on social media, in classrooms and in online games.

They told us they want the benefits of AI, but not at the cost of their privacy. While they value tailored content and smart recommendations, they feel uneasy about what happens to their data.

Many expressed concern about who owns their information, how it is used and whether they can ever take it back. They are frustrated by long privacy policies, hidden settings and the sense that you need to be a tech expert just to protect yourself.

As one participant said, “I am mainly concerned about what data is being taken and how it is used. We often aren’t informed clearly.”

Uncomfortable sharing their data

Young people were the most uncomfortable group when it came to sharing personal data with AI. Even when they got something in return, like convenience or customization, they didn’t trust what would happen next. Many worried about being watched, tracked or categorized in ways they can’t see.

This goes beyond technical risks. It’s about how it feels to be constantly analyzed and predicted by systems you can’t question or understand.

AI doesn’t just collect data; it draws conclusions, shapes online experiences and influences choices. That can feel like manipulation.

Parents and teachers are concerned

Adults (educators and parents) in our study shared similar concerns. They want better safeguards and stronger rules.

But many admitted they struggle to keep up with how fast AI is moving. They often don’t feel confident helping youth make smart choices about data and privacy.

Some saw this as a gap in digital education. Others pointed to the need for plain-language explanations and more transparency from the tech companies that build and deploy AI systems.

Professionals focus on tools, not people

The study found AI professionals approach these challenges differently. They think about privacy in technical terms such as encryption, data minimization and compliance.

While these are important, they don’t always align with what youth and educators care about: trust, control and the right to understand what’s going on.

Companies often see privacy as a trade-off for innovation. They value efficiency and performance and tend to trust technical solutions over user input. That can leave out key concerns from the people most affected, especially young users.

Power and control lie elsewhere

AI professionals, parents and educators influence how AI is used. But the biggest decisions happen elsewhere. Powerful tech companies design most digital platforms and decide what data is collected, how systems work and what choices users see.

Even when professionals push for safer practices, they work within systems they did not build. Weak privacy laws and limited enforcement mean that control over data and design stays with a few companies.

This makes transparency and platform accountability even more difficult to achieve.

What’s missing? A shared understanding

Right now, youth, parents, educators and tech companies are not on the same page. Young people want control, parents want protection and professionals want scalability.

These goals often clash, and without a shared vision, privacy rules are inconsistent, hard to enforce or simply ignored.

Our research shows that ethical AI governance can’t be solved by one group alone. We need to bring youth, families, educators and experts together to shape the future of AI.

The PEA-AI model

To guide this process, we developed a framework called PEA-AI: Privacy–Ethics Alignment in Artificial Intelligence. It helps identify where values collide and how to move forward. The model highlights four key tensions:

- Control versus trust: Youth want autonomy. Developers want reliability. We need systems that support both.

- Transparency versus perception: What counts as “clear” to experts often feels confusing to users.

- Parental oversight versus youth voice: Policies must balance protection with respect for youth agency.

- Education versus awareness gaps: We can’t expect youth to make informed choices without better tools and support.

What can be done?

Our research points to six practical steps:

- Simplify consent. Use short, visual, plain-language forms. Let youth update settings regularly.

- Design for privacy. Minimize data collection. Make dashboards that show users what’s being stored.

- Explain the systems. Provide clear, non-technical explanations of how AI works, especially when used in schools.

- Hold systems accountable. Run audits, allow feedback and create ways for users to report harm.

- Teach privacy. Bring AI literacy into classrooms. Train teachers and involve parents.

- Share power. Include youth in tech policy decisions. Build systems with them, not just for them.

AI can be a powerful tool for learning and connection, but it must be built with care. Right now, our research suggests young people don’t feel in control of how AI sees them, uses their data or shapes their world.

Ethical AI starts with listening. If we want digital systems to be fair, safe and trusted, we must give youth a seat at the table and treat their voices as essential, not optional.

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Citation: How to ensure youth, parents, educators and tech companies are on the same page on AI (2025, October 23), retrieved 24 October 2025 from https://techxplore.com/news/2025-10-youth-parents-tech-companies-page.html



Samsung’s 2 New Midrange Phones Get Price Hikes and Small Updates

Last month, Samsung jacked up the price of two of its flagship smartphones by $100. Now, its two new midrange models—the Galaxy A37 5G and Galaxy A57 5G—are getting $50 price bumps, despite minor hardware updates over last year’s Galaxy A36 and A56. Samsung has also trimmed the lineup—there’s no successor to the Galaxy A26 this year, at least not yet.

These price increases may be indicative of the economic climate, what with tariffs, higher oil prices due to the war in Iran, and the memory shortage that has driven up RAM and storage costs across the board. If a phone’s price doesn’t go up, it could still mean fewer meaningful hardware upgrades to keep costs down, very much like the recent Google Pixel 10a. (The outlier is the iPhone 17e, which managed to add features like MagSafe and a new processor, along with a few other upgrades, without a change to the price over the iPhone 16e.)

“Price increases or ‘down‑speccing’ have become the norm,” writes Jitesh Ubrani, research manager at IDC, in an email to WIRED. “Unfortunately, consumers will need to adjust to this new reality. The biggest bottleneck for brands right now is memory, with suppliers facing tight availability and significantly higher costs than in past years.” Ubrani says that while geopolitical factors haven’t yet affected hardware pricing, they are adding uncertainty that could increase costs in the future.

Samsung did not comment on exactly what is driving the price bump. However, it says consumers eyeing its A-series phones prioritize upgrading out of necessity—maybe their current phone just broke or is really old—and they don’t care much for AI features. Value for money is the number one purchase driver, above performance and battery life. So it’s a little odd to see the company raise prices, though Samsung hopes the improvements are compelling.

The Galaxy A57 5G costs $550 with 8 GB of RAM and 128 GB of storage, and $610 if you bump storage to 256 GB. Meanwhile, the Galaxy A37 5G starts at $450 for 6 GB of RAM and 128 GB of storage, or $540 for 8 GB of RAM and 256 GB of storage. They both officially go on sale on April 9.

Small Updates

Processor upgrades are the main highlight for these phones. The Galaxy A37 is powered by Samsung’s Exynos 1480, which should offer 14 percent better CPU performance, 24 percent better graphics, and, perhaps shockingly, 167 percent better neural processing performance—helpful for AI tasks. That’s compared to the Qualcomm Snapdragon 6 Gen 3 chip in last year’s Galaxy A36.

The Galaxy A57 sports the Exynos 1680, which isn’t a huge leap over the Exynos 1580 in the Galaxy A56, but still offers a nice lift: 10 percent better CPU performance, 7 percent faster graphics, and 42 percent improved neural processing. Both of these phones still have the same 5,000-mAh battery capacity and charging speeds. (There’s no wireless charging, despite competing phones like the iPhone 17e or Google Pixel 10a offering the feature.)

Tech

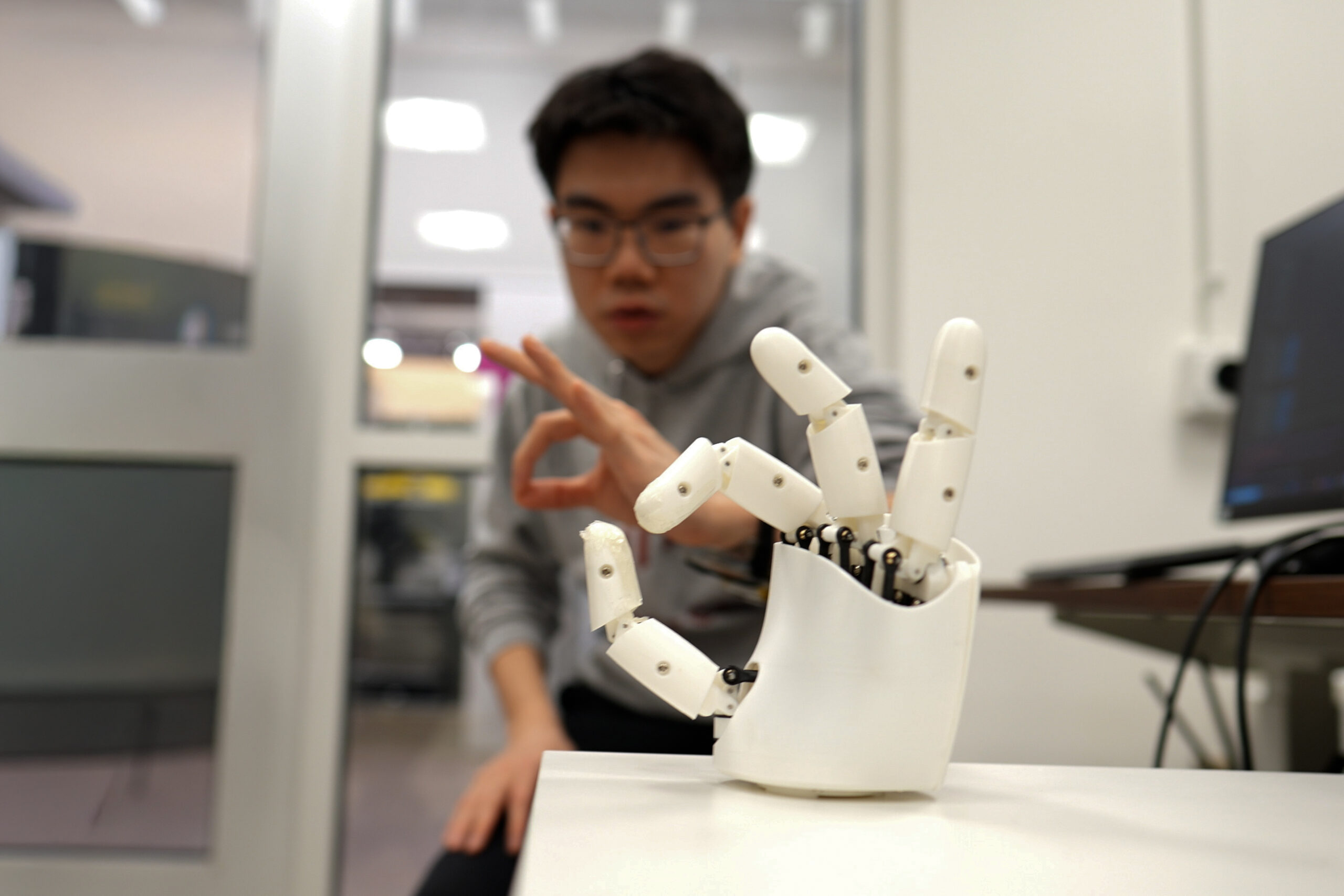

Wristband enables wearers to control a robotic hand with their own movements

The next time you’re scrolling your phone, take a moment to appreciate the feat: The seemingly mundane act is possible thanks to the coordination of 34 muscles, 27 joints, and over 100 tendons and ligaments in your hand. Indeed, our hands are the most nimble parts of our bodies. Mimicking their many nuanced gestures has been a longstanding challenge in robotics and virtual reality.

Now, MIT engineers have designed an ultrasound wristband that precisely tracks a wearer’s hand movements in real-time. The wristband produces ultrasound images of the wrist’s muscles, tendons, and ligaments as the hand moves, and is paired with an artificial intelligence algorithm that continuously translates the images into the corresponding positions of the five fingers and palm.

The researchers can train the wristband to learn a wearer’s hand motions, which the device can communicate in real-time to a robot or a virtual environment.

In demonstrations, the team has shown that a person wearing the wristband can wirelessly control a robotic hand. As the person gestures or points, the robot does the same. In a sort of wireless marionette interaction, the wearer can manipulate the robot to play a simple tune on the piano and shoot a small basketball into a desktop hoop. With the same wristband, a wearer can also manipulate objects on a computer screen, for instance pinching their fingers together to enlarge and minimize a virtual object.

The team is using the wristband to gather hand motion data from many more users with different hand sizes, finger shapes, and gestures. They envision building a large dataset of hand motions that can be plumbed, for instance, to train humanoid robots in dexterity tasks, such as performing certain surgical procedures. The ultrasound band could also be used to grasp, manipulate, and interact with objects in video games, design applications, or other virtual settings.

“We think this work has immediate impact in potentially replacing hand tracking techniques with wearable ultrasound bands in virtual and augmented reality,” says Xuanhe Zhao, the Uncas and Helen Whitaker Professor of Mechanical Engineering at MIT. “It could also provide huge amounts of training data for dexterous humanoid robots.”

Zhao, Gengxi Lu, and their colleagues present the wristband’s new design in a paper appearing today in Nature Electronics. Their MIT co-authors are former postdocs Xiaoyu Chen, Shucong Li, and Bolei Deng; graduate students SeongHyeon Kim and Dian Li; postdocs Shu Wang and Runze Li; and Anantha Chandrakasan, MIT provost and the Vannevar Bush Professor of Electrical Engineering and Computer Science. Other co-authors are graduate students Yushun Zheng and Junhang Zhang, Baoqiang Liu, Chen Gong, and Professor Qifa Zhou from the University of Southern California.

Seeing strings

There are currently a number of approaches to capturing and mimicking human hand dexterity in robots. Some approaches use cameras to record a person’s hand movements as they manipulate objects or perform tasks. Others involve having a person wear a glove with sensors, which records the person’s hand movements and transmits the data to a receiving robot. But erecting a complex camera system for different applications is impractical and prone to visual obstacles. And sensor-laden gloves could limit a person’s natural hand motions and sensations.

A third approach uses the electrical signals from muscles in the wrist or forearm that scientists then correlate with specific hand movements. Researchers have made significant advances in this approach; however, these signals are easily affected by noise in the environment. They are also not sensitive enough to distinguish subtle changes in movements. For instance, they may discern whether a thumb and index finger are pinched together or pulled apart, but not much of the in-between path.

Zhao’s team wondered whether ultrasound imaging might capture more dexterous and continuous hand movements. His group has been developing various forms of ultrasound stickers — miniaturized versions of the transducers used in doctor’s offices that are paired with hydrogel material that can safely stick to skin.

In their new study, the team incorporated the ultrasound sticker design into a wearable wristband to continuously image the muscles and tendons in the wrist.

“The tendons and muscles in your wrist are like strings pulling on puppets, which are your fingers,” Lu says. “So the idea is: Each time you take a picture of the state of the strings, you’ll know the state of the hand.”

Mapping manipulation

The team designed a wristband with an ultrasound sticker that is the size of a smartwatch, and added onboard electronics that are about as small as a cellphone. They attached the wristband to a volunteer’s wrist and confirmed that the device produced clear and continuous images of the wrist as the volunteer moved their fingers in various gestures.

The challenge then was to relate the black and white ultrasound images of the wrist to specific positions of the hand. As it turns out, the fingers and thumb are capable of 22 degrees of freedom, or different ways of extending or angling. The researchers found that they could identify specific regions in their ultrasound images of the wrist that correlate with each of these 22 degrees of freedom. For instance, changes in one region relate to thumb extension, while changes in another region correlate with movements of the index finger.

To establish these connections, a volunteer wearing the wristband would move their hand in various positions while the researchers recorded the gestures with multiple cameras surrounding the volunteer. By matching changes in certain regions of the ultrasound images with hand positions recorded by the cameras, the team could label wrist image regions with the corresponding degree of freedom in the hand. But to do this translation continuously, and in real-time, would be an impossible task for humans.

So, the team turned to artificial intelligence. They used an AI algorithm that can be trained to recognize image patterns and correlate them with specific labels, in this case the hand’s various degrees of freedom. The researchers trained the algorithm with ultrasound images that they meticulously labeled, annotating the image regions associated with a specific degree of freedom. They tested the algorithm on a new set of ultrasound images and found it correctly predicted the corresponding hand gestures.
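The train-then-test workflow described above can be illustrated with a toy sketch. The article does not say which algorithm the team used, so the nearest-centroid classifier below is only a stand-in. The gesture names, the four "region brightness" features, and all numeric values are hypothetical and synthetic; none of them come from the study.

```python
import math
import random

# Hypothetical prototypes: mean brightness of four wrist-image regions
# for each gesture. These values are invented for illustration only.
PROTOTYPES = {
    "fist":  [0.9, 0.8, 0.2, 0.1],
    "pinch": [0.2, 0.9, 0.8, 0.1],
    "open":  [0.1, 0.2, 0.3, 0.9],
}

def make_samples(rng, per_gesture, noise=0.05):
    """Generate synthetic (features, label) pairs around each prototype."""
    samples = []
    for label, proto in PROTOTYPES.items():
        for _ in range(per_gesture):
            features = [v + rng.uniform(-noise, noise) for v in proto]
            samples.append((features, label))
    return samples

def train(samples):
    """Average the labeled feature vectors to get one centroid per gesture."""
    sums, counts = {}, {}
    for features, label in samples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, v in enumerate(features):
            acc[i] += v
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}

def predict(centroids, features):
    """Return the gesture whose centroid is closest to the feature vector."""
    return min(centroids, key=lambda label: math.dist(centroids[label], features))

rng = random.Random(0)                       # fixed seed for repeatability
centroids = train(make_samples(rng, per_gesture=20))   # labeled training set
held_out = make_samples(rng, per_gesture=5)            # new, unseen samples
correct = sum(predict(centroids, f) == label for f, label in held_out)
accuracy = correct / len(held_out)
print(f"held-out accuracy: {accuracy:.2f}")
```

Because the synthetic prototypes are far apart relative to the noise, every held-out sample lands nearest its own centroid. Real ultrasound images are far messier, and the wristband predicts continuous finger positions rather than a handful of discrete gestures, so this sketch shows only the general idea of learning from labeled examples and checking the result on new data.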

Once the researchers successfully paired the AI algorithm with the wristband, they tested the device on more volunteers. For the new study, eight volunteers with different hand and wrist sizes wore the wristband while they formed various hand gestures and grasps, including making the signs for all 26 letters in American Sign Language. They also held objects such as a tennis ball, a plastic bottle, a pair of scissors, and a pencil. In each case, the wristband precisely tracked and predicted the position of the hand.

To demonstrate potential applications, the team developed a simple computer program that they wirelessly paired with the wristband. As a wearer went through the motions of pinching and grasping, the gestures corresponded to zooming in and out on an object on the computer screen, and virtually moving and manipulating it in a smooth and continuous fashion.

The researchers also tested the wristband as a wireless controller of a simple commercial robotic hand. While wearing the wristband, a volunteer went through the motions of playing a keyboard. The robot in turn mimicked the motions in real-time to play a simple tune on a piano. The same robot was also able to mimic a person’s finger taps to play a desktop basketball game.

Zhao is planning to further miniaturize the wristband’s hardware, as well as train the AI software on many more gestures and movements from volunteers with wider ranging hand sizes and shapes. Ultimately, the team is building toward a wearable hand tracker that can be worn by anyone, to wirelessly manipulate humanoid robots or virtual objects with high dexterity.

“We believe this is the most advanced way to track dexterous hand motion, through wearable imaging of the wrist,” Zhao says. “We think these wearable ultrasound bands can provide intuitive and versatile controls for virtual reality and robotic hands.”

This research was supported, in part, by MIT, the U.S. National Institutes of Health, the U.S. National Science Foundation, the U.S. Department of Defense, and Singapore National Research Foundation through the Singapore-MIT Alliance for Research and Technology.


Iranians Don’t Have a Missile Alert System, So Volunteers Built Their Own Warning Map

Since Donald Trump’s war on Iran started more than three weeks ago, United States military forces have allegedly attacked more than 9,000 sites, creating a climate of fear and constant uncertainty for Iranians in Tehran and across the country. Without an advanced warning system from the government, and amid the longest internet shutdown in Iran’s history, Iranians are left in an information void.

Even before Israel and the United States began dropping bombs, Iran’s lack of a public emergency alert tool and severe state-controlled digital oppression have impacted tens of millions of citizens. Since the 12-day Israel-Iran war last year, though, a group of Iranian digital rights activists and volunteers has been working to fill the gap with a dynamic, regularly updated mapping platform called Mahsa Alert. The project can’t replace real-time early alerts that could come from a coordinated government service, but the tool sends push notifications when Israeli forces warn about attacks, details some confirmed strike locations, and offers offline mapping capabilities.

“There is no emergency alert in Iran,” says Ahmad Ahmadian, the president and CEO of US-based digital rights group Holistic Resilience, which is behind Mahsa Alert and has been developing the platform since last summer. “This was where we saw the traction, we saw the need, and we continued working on it with the volunteers, with some [open source intelligence] experts, and used this to map the repression machinery ecosystem of Iran and surveillance.”

Mahsa Alert is a website but also has Android and iOS apps, which were intentionally designed to be lightweight and easy to use on any device. Given the heavy government connectivity control inside Iran and erratic access to the internet, volunteers also prioritized engineering the platform for offline use. And it can be easily updated if a user does get connectivity for a brief period by downloading APK files that contain new data. The team works to keep these updates extremely small; a recent release was 60 kilobytes, and Ahmadian says they are typically no more than 100 kilobytes.

One overlay on Mahsa Alert plots the locations of “confirmed attacks” that Ahmadian says his team or other OSINT investigators have verified, using video footage or images that are submitted to a Telegram bot or shared on social media. There are also warnings about areas where Israeli forces have issued evacuation alerts, along with the crucial component of people submitting reports on what is happening around them.

“We have to go through a due diligence and verification process and tag them before putting them on the map,” Ahmadian says of the reported attacks and incidents, adding that the team has a backlog of more than 3,000 reports that it is working through or is unable to verify. Along with attempting to map strikes, the team behind Mahsa Alert has also plotted “danger zones” that could be at risk of attack, such as sites linked to Iran’s nuclear program or military, so ordinary citizens can stay away from them. Ahmadian claims 90 percent of the attacks the team has confirmed were at sites that were already present on the map. “Some of them that we can confirm, we do it because [a user] has shared a photo or they have shared some details that makes them verifiable,” he says.

The map also includes locations of thousands of CCTV cameras, suspected government checkpoints, and other domestic infrastructure. Medical facilities, such as hospitals and pharmacies, are included on the map along with other resources like the locations of religious sites and past protests.

Mahsa Alert has become more visible on global social media feeds as Iranians around the world share details from the map, encouraging people to look into the service and flagging it for friends and family who could use it as a resource. “The app went from near zero to over 100,000 daily active users in a matter of days,” Ahmadian says, adding that in total there have been around 335,000 users this year, with people first turning to the app during the Iranian regime’s brutal crackdown on anti-government protesters in January. Through the limited user information the app collects, Ahmadian claims there are signs that 28 percent of users are accessing the platform from inside Iran.
