Tech

OpenAI backs AI-animated film for Cannes debut

ChatGPT-maker OpenAI is backing the production of a feature-length animated film created largely with artificial intelligence tools, aiming to prove the technology can revolutionize Hollywood filmmaking with faster timelines and lower costs.

The movie, titled “Critterz,” follows woodland creatures on an adventure after their village is disrupted by a stranger. Producers hope to premiere it at the Cannes Film Festival in May 2026 before a global theatrical release, they said in a statement on Monday.

The project has a budget of under $30 million and a production timeline of just nine months—a fraction of the typical $100-200 million cost and three-year development cycle for major animated features.

“Critterz” originated as a short film by Chad Nelson, a creative specialist at OpenAI, who began developing the concept three years ago using the company’s DALL-E image generation tool.

Nelson has partnered with London-based Vertigo Films and Los Angeles studio Native Foreign to expand the project into a full-length feature.

“OpenAI can say what its tools do all day long, but it’s much more impactful if someone does it,” Nelson said in the news release. “That’s a much better case study than me building a demo.”

The production will blend AI technology with human work.

Artists will draw sketches that are fed into OpenAI’s tools, including GPT-5 and image-generating models, while human actors will voice the characters.

The script was written by some of the same writers behind the successful “Paddington in Peru.”

However, the project comes amid intense legal battles between Hollywood studios and AI companies over intellectual property rights.

Major studios including Disney, Universal and Warner Bros. Discovery have filed copyright infringement lawsuits against AI firm Midjourney, alleging the company illegally trained its models on their characters.

The film is funded by Vertigo’s Paris-based parent company, Federation Studios, with about 30 contributors sharing profits through a specialized compensation model.

Critterz will not be the first animated feature film made with generative AI.

In 2024, “DreadClub: Vampire’s Verdict,” considered the first AI-animated feature film and made on a budget of $405, was released, as was “Where the Robots Grow.”

Those releases, as well as the original “Critterz” short film, received mixed reactions from viewers, with some critics questioning whether current AI technology can produce cinema-quality content that resonates emotionally with audiences.

© 2025 AFP

Citation:

OpenAI backs AI-animated film for Cannes debut (2025, September 9) retrieved 9 September 2025 from https://techxplore.com/news/2025-09-openai-ai-animated-cannes-debut.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.


That Ex-CIA Agent in All Your Feeds Is After a Pardon From Donald Trump

One morning a few weeks ago, John Kiriakou got a call from his 16-year-old niece. “Uncle John, you’re exploding on TikTok,” he recalls her telling him.

Kiriakou, a 61-year-old ex-CIA officer who went to prison in 2013 for disclosing classified information related to the agency’s Middle East torture program, had no idea what she was talking about. He doesn’t have a TikTok account. He’s more of a Facebook lurker, if anything. But clips from a podcast Kiriakou filmed in January with Steven Bartlett, who hosts the Diary of a CEO show, which has more than 15 million subscribers on YouTube, were going viral without his intervention.

For nearly two decades, Kiriakou has been on a campaign to receive a presidential pardon. From 1990 to 2004, Kiriakou served as a CIA analyst and counterterrorism officer, leading a 2002 operation to capture Abu Zubaydah, who ran a training camp for al Qaeda fighters. During Zubaydah’s detention, the CIA waterboarded him. Kiriakou later discussed the agency’s torture tactics in a 2007 interview with ABC News, where he went on to serve as a terrorism consultant. Five years later, the Justice Department charged Kiriakou, who then pleaded guilty to disclosing to journalists the name of a covert operative who had participated in CIA interrogations.

Though Kiriakou finished his prison sentence in 2015, he wants a presidential pardon to clear his name and get back decades of pension contributions. “I had 20 years of proud federal service. My pension was $700,000,” says Kiriakou. “Without that pension, I’m going to have to work until the day I die. It was wrong of them to take it from me, and I want it back. I can only get it back with a pardon.”

In recent years, he’s applied through official channels and tried navigating President Donald Trump’s informal and expensive clemency market. So far, his requests have gone unanswered. Now, he’s trying something different, appearing on some of the very same podcasts Trump did throughout the 2024 election. Clips of him chatting with Tucker Carlson and Joe Rogan, among others, won’t stop making the rounds—and the internet is loving it.

When Kiriakou sat down with Bartlett for the January podcast, they had a serious conversation discussing his career at the CIA, his whistleblowing, and, ultimately, his nearly two-year imprisonment. But it’s the stories Kiriakou tells throughout the episode—about gathering intelligence in countries like Pakistan or detailing the CIA’s MKUltra program—that have drawn millions of views in “brainrot”-style edits on platforms like TikTok and Instagram Reels.

“See you in two scrolls,” one commenter wrote on a clip of Kiriakou, joking about how frequently videos of him appeared on their For You page.

One user who goes by the handle @_bamboclat is credited by Know Your Meme for popularizing these edits of Kiriakou telling unimaginable stories about his time abroad. These clips have received around 50 million views on the account.

“I first found out about him through podcasts on TikTok. I think the reason why everyone is in love with him is because he’s a good storyteller,” says @_bamboclat, who declined to share his full name. “He’s been telling it for 20 years. Slowing down and speeding it up, the meme version of him, is pretty popular with Gen Z and the TikTok audience.”

The virality has turned Kiriakou into a cultural phenomenon. Following his newfound popularity, the Creative Artists Agency (CAA) signed him. Cameo—the platform that allows users to request personalized videos from their favorite celebrities—recruited Kiriakou last month. So far, he’s made more than 700 videos for fans for around $150 apiece. In one Cameo video, Kiriakou is asked to shout out a woman’s nail salon. The clip is being used as an advertisement for the business on TikTok.


Samsung’s 2 New Midrange Phones Get Price Hikes and Small Updates

Last month, Samsung jacked up the price of two of its flagship smartphones by $100. Now, its two new midrange models—the Galaxy A37 5G and Galaxy A57 5G—are getting $50 price bumps, despite minor hardware updates over last year’s Galaxy A36 and A56. Samsung has also trimmed the lineup—there’s no successor to the Galaxy A26 this year, at least not yet.

These price increases may be indicative of the economic climate, what with tariffs, higher oil prices due to the war in Iran, and the memory shortage that has driven up RAM and storage costs across the board. If a phone’s price doesn’t go up, it could still mean fewer meaningful hardware upgrades to keep costs down, very much like the recent Google Pixel 10a. (The outlier is the iPhone 17e, which managed to add features like MagSafe and a new processor, along with a few other upgrades, without a change to the price over the iPhone 16e.)

“Price increases or ‘down‑speccing’ have become the norm,” writes Jitesh Ubrani, research manager at IDC, in an email to WIRED. “Unfortunately, consumers will need to adjust to this new reality. The biggest bottleneck for brands right now is memory, with suppliers facing tight availability and significantly higher costs than in past years.” Ubrani says that while geopolitical factors haven’t yet affected hardware pricing, they are adding uncertainty that could increase costs in the future.

Samsung did not comment on exactly what is driving the price bump. However, it says consumers eyeing its A-series phones tend to upgrade out of necessity (maybe their current phone just broke or is really old), and they don’t care much for AI features. Value for money is the number one purchase driver, above performance and battery life. So it’s a little odd to see the company raise prices, though Samsung hopes the improvements are compelling.

The Galaxy A57 5G costs $550 with 8 GB of RAM and 128 GB of storage, and $610 if you bump storage to 256 GB. Meanwhile, the Galaxy A37 5G starts at $450 for 6 GB of RAM and 128 GB of storage, or $540 for 8 GB of RAM and 256 GB of storage. They both officially go on sale on April 9.

Small Updates

Processor upgrades are the main highlight for these phones. The Galaxy A37 is powered by Samsung’s Exynos 1480, which should offer 14 percent better CPU performance, 24 percent better graphics, and, perhaps shockingly, 167 percent better neural processing performance—helpful for AI tasks. That’s compared to the Qualcomm Snapdragon 6 Gen 3 chip in last year’s Galaxy A36.

The Galaxy A57 sports the Exynos 1680, which isn’t a huge leap over the Exynos 1580 in the Galaxy A56, but still offers a nice lift: 10 percent better CPU performance, 7 percent faster graphics, and 42 percent improved neural processing. Both of these phones still have the same 5,000-mAh battery capacity and charging speeds. (There’s no wireless charging, despite competing phones like the iPhone 17e or Google Pixel 10a offering the feature.)

Tech

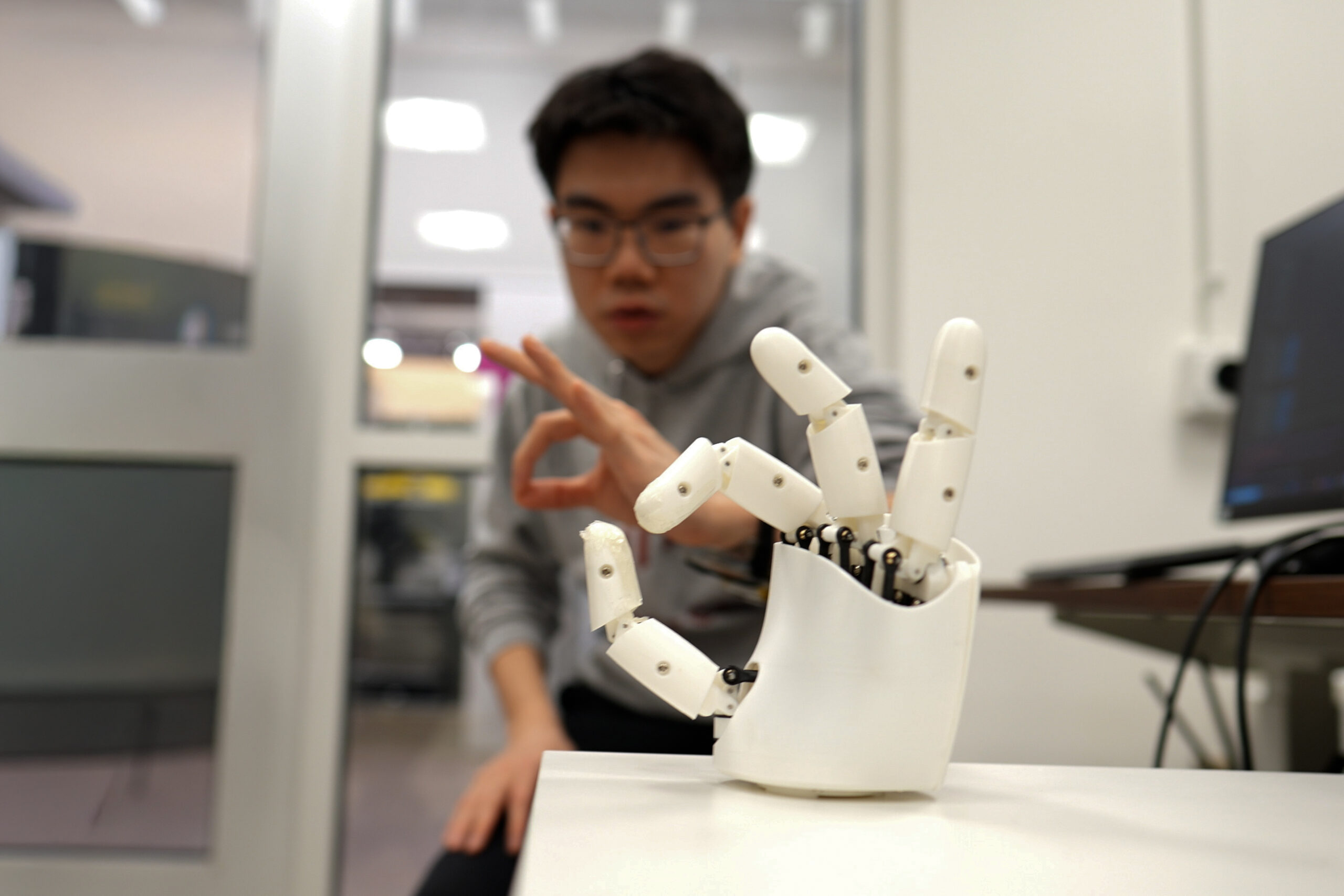

Wristband enables wearers to control a robotic hand with their own movements

The next time you’re scrolling your phone, take a moment to appreciate the feat: The seemingly mundane act is possible thanks to the coordination of 34 muscles, 27 joints, and over 100 tendons and ligaments in your hand. Indeed, our hands are the most nimble parts of our bodies. Mimicking their many nuanced gestures has been a longstanding challenge in robotics and virtual reality.

Now, MIT engineers have designed an ultrasound wristband that precisely tracks a wearer’s hand movements in real-time. The wristband produces ultrasound images of the wrist’s muscles, tendons, and ligaments as the hand moves, and is paired with an artificial intelligence algorithm that continuously translates the images into the corresponding positions of the five fingers and palm.

The researchers can train the wristband to learn a wearer’s hand motions, which the device can communicate in real-time to a robot or a virtual environment.

In demonstrations, the team has shown that a person wearing the wristband can wirelessly control a robotic hand. As the person gestures or points, the robot does the same. In a sort of wireless marionette interaction, the wearer can manipulate the robot to play a simple tune on the piano and shoot a small basketball into a desktop hoop. With the same wristband, a wearer can also manipulate objects on a computer screen, for instance pinching their fingers together to enlarge and minimize a virtual object.

The team is using the wristband to gather hand motion data from many more users with different hand sizes, finger shapes, and gestures. They envision building a large dataset of hand motions that can be plumbed, for instance, to train humanoid robots in dexterity tasks, such as performing certain surgical procedures. The ultrasound band could also be used to grasp, manipulate, and interact with objects in video games, design applications, or other virtual settings.

“We think this work has immediate impact in potentially replacing hand tracking techniques with wearable ultrasound bands in virtual and augmented reality,” says Xuanhe Zhao, the Uncas and Helen Whitaker Professor of Mechanical Engineering at MIT. “It could also provide huge amounts of training data for dexterous humanoid robots.”

Zhao, Gengxi Lu, and their colleagues present the wristband’s new design in a paper appearing today in Nature Electronics. Their MIT co-authors are former postdocs Xiaoyu Chen, Shucong Li, and Bolei Deng; graduate students SeongHyeon Kim and Dian Li; postdocs Shu Wang and Runze Li; and Anantha Chandrakasan, MIT provost and the Vannevar Bush Professor of Electrical Engineering and Computer Science. Other co-authors are graduate students Yushun Zheng and Junhang Zhang, Baoqiang Liu, Chen Gong, and Professor Qifa Zhou from the University of Southern California.

Seeing strings

There are currently a number of approaches to capturing and mimicking human hand dexterity in robots. Some approaches use cameras to record a person’s hand movements as they manipulate objects or perform tasks. Others involve having a person wear a glove with sensors, which records the person’s hand movements and transmits the data to a receiving robot. But erecting a complex camera system for different applications is impractical, and the cameras’ view is easily blocked by obstructions. And sensor-laden gloves could limit a person’s natural hand motions and sensations.

A third approach uses the electrical signals from muscles in the wrist or forearm, which scientists then correlate with specific hand movements. Researchers have made significant advances in this approach; however, these signals are easily affected by environmental noise. They are also not sensitive enough to distinguish subtle changes in movement. For instance, they may discern whether a thumb and index finger are pinched together or pulled apart, but not much of the path in between.

Zhao’s team wondered whether ultrasound imaging might capture more dexterous and continuous hand movements. His group has been developing various forms of ultrasound stickers: miniaturized versions of the transducers used in doctors’ offices, paired with a hydrogel material that can safely stick to skin.

In their new study, the team incorporated the ultrasound sticker design into a wearable wristband to continuously image the muscles and tendons in the wrist.

“The tendons and muscles in your wrist are like strings pulling on puppets, which are your fingers,” Lu says. “So the idea is: Each time you take a picture of the state of the strings, you’ll know the state of the hand.”

Mapping manipulation

The team designed a wristband with an ultrasound sticker that is the size of a smartwatch, and added onboard electronics that are about as small as a cellphone. They attached the wristband to a volunteer’s wrist and confirmed that the device produced clear and continuous images of the wrist as the volunteer moved their fingers in various gestures.

The challenge then was to relate the black and white ultrasound images of the wrist to specific positions of the hand. As it turns out, the fingers and thumb are capable of 22 degrees of freedom, or different ways of extending or angling. The researchers found that they could identify specific regions in their ultrasound images of the wrist that correlate to each of these 22 degrees of freedom. For instance, changes in one region relate to thumb extension, while changes in another region correlate with movements of the index finger.

To establish these connections, a volunteer wearing the wristband would move their hand in various positions while the researchers recorded the gestures with multiple cameras surrounding the volunteer. By matching changes in certain regions of the ultrasound images with hand positions recorded by the cameras, the team could label wrist image regions with the corresponding degree of freedom in the hand. But to do this translation continuously, and in real-time, would be an impossible task for humans.

So, the team turned to artificial intelligence. They used an AI algorithm that can be trained to recognize image patterns and correlate them with specific labels and, in this case, the hand’s various degrees of freedom. The researchers trained the algorithm with ultrasound images that they meticulously labeled, annotating the image regions associated with a specific degree of freedom. They tested the algorithm on a new set of ultrasound images and found it correctly predicted the corresponding hand gestures.

Once the researchers successfully paired the AI algorithm with the wristband, they tested the device on more volunteers. For the new study, eight volunteers with different hand and wrist sizes wore the wristband while they formed various hand gestures and grasps, including making the signs for all 26 letters in American Sign Language. They also held objects such as a tennis ball, a plastic bottle, a pair of scissors, and a pencil. In each case, the wristband precisely tracked and predicted the position of the hand.

To demonstrate potential applications, the team developed a simple computer program that they wirelessly paired with the wristband. As a wearer went through the motions of pinching and grasping, the gestures corresponded to zooming in and out on an object on the computer screen, and virtually moving and manipulating it in a smooth and continuous fashion.

The researchers also tested the wristband as a wireless controller of a simple commercial robotic hand. While wearing the wristband, a volunteer went through the motions of playing a keyboard. The robot in turn mimicked the motions in real-time to play a simple tune on a piano. The same robot was also able to mimic a person’s finger taps to play a desktop basketball game.

Zhao is planning to further miniaturize the wristband’s hardware, as well as train the AI software on many more gestures and movements from volunteers with a wider range of hand sizes and shapes. Ultimately, the team is building toward a wearable hand tracker that can be worn by anyone to wirelessly manipulate humanoid robots or virtual objects with high dexterity.

“We believe this is the most advanced way to track dexterous hand motion, through wearable imaging of the wrist,” Zhao says. “We think these wearable ultrasound bands can provide intuitive and versatile controls for virtual reality and robotic hands.”

This research was supported, in part, by MIT, the U.S. National Institutes of Health, the U.S. National Science Foundation, the U.S. Department of Defense, and Singapore National Research Foundation through the Singapore-MIT Alliance for Research and Technology.
