Tech

AI method reconstructs 3D scene details from simulated images using inverse rendering

Over the past decades, computer scientists have developed many computational tools that can analyze and interpret images. These tools have proved useful for a broad range of applications, including robotics, autonomous driving, health care, manufacturing and even entertainment.

Most of the best performing computer vision approaches employed to date rely on so-called feed-forward neural networks. These are computational models that process input images step by step, ultimately making predictions about them.

While some of these models were found to perform well when tested on the data they analyzed during training, they often do not generalize well across new images and in different scenarios. In addition, their predictions and the patterns they extract from images can be difficult to interpret.

Researchers at Princeton University recently developed a new inverse rendering approach that is more transparent and could also interpret a wide range of images more reliably. The new approach, introduced in a paper published in Nature Machine Intelligence, relies on a generative artificial intelligence (AI)-based method to simulate the process of image creation, while also optimizing it by gradually adjusting a model’s internal parameters.

“Generative AI and neural rendering have transformed the field in recent years for creating novel content: producing images or videos from scene descriptions,” Felix Heide, senior author of the paper, told Tech Xplore. “We investigate whether we can flip this around and use these generative models for extracting the scene descriptions from images.”

The new approach developed by Heide and his colleagues relies on a so-called differentiable rendering pipeline. This is a process for the simulation of image creation, relying on compressed representations of images created by generative AI models.

“We developed an analysis-by-synthesis approach that allows us to solve vision tasks, such as tracking, as test-time optimization problems,” explained Heide. “We found that this method generalizes across datasets, and in contrast to existing supervised learning methods, does not need to be trained on new datasets.”

Essentially, the method developed by the researchers works by placing models of 3D objects in a virtual scene depicting real-world settings. These object models are produced by a generative AI model from a random sample of 3D scene parameters.

“We then render all these objects back together into a 2D image,” said Heide. “Next, we compare this rendered image with the real observed image. Based on how different they are, we backpropagate the difference through both the differentiable rendering function and the 3D generation model to update its inputs. In just a few steps, we optimize these inputs to make the rendered match the observed images better.”
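The render, compare and update loop Heide describes can be illustrated with a deliberately tiny sketch. This is not the researchers' code: the "scene" here is a single blob on a 1D image, the renderer is a hand-written Gaussian, and the function names, learning rates and starting guess are all assumptions chosen for illustration. The real method backpropagates through a neural renderer and a 3D generation model instead.

```python
import numpy as np

SIGMA = 6.0   # assumed blob width of the toy renderer
WIDTH = 64    # assumed number of pixels in the 1D "image"

def render(position, brightness):
    """Toy differentiable renderer: one Gaussian blob on a 1D image."""
    x = np.arange(WIDTH)
    return brightness * np.exp(-((x - position) ** 2) / (2 * SIGMA ** 2))

def loss_and_grads(position, brightness, observed):
    """Mean squared error between render and observation, with analytic gradients."""
    x = np.arange(WIDTH)
    blob = np.exp(-((x - position) ** 2) / (2 * SIGMA ** 2))
    residual = brightness * blob - observed
    loss = np.mean(residual ** 2)
    # Chain rule through the renderer: the toy stand-in for backpropagation.
    grad_brightness = np.mean(2 * residual * blob)
    grad_position = np.mean(2 * residual * brightness * blob * (x - position) / SIGMA ** 2)
    return loss, grad_position, grad_brightness

def fit(observed, position, brightness, steps=300, lr_position=100.0, lr_brightness=1.0):
    """Gradient descent on the scene parameters so the render matches the observation."""
    losses = []
    for _ in range(steps):
        loss, grad_position, grad_brightness = loss_and_grads(position, brightness, observed)
        losses.append(loss)
        # The loss is far less sensitive to position than to brightness,
        # so position gets a much larger step size in this toy setup.
        position -= lr_position * grad_position
        brightness -= lr_brightness * grad_brightness
    return position, brightness, losses

if __name__ == "__main__":
    observed = render(position=40.0, brightness=1.0)  # stands in for the real image
    pos, bright, losses = fit(observed, position=31.0, brightness=0.5)
    print(f"recovered position={pos:.2f}, brightness={bright:.2f}, final loss={losses[-1]:.2e}")
```

Nothing is trained in this sketch. The parameters are recovered purely by optimization at test time, which is the property the researchers highlight. The fit only works if the starting guess overlaps the observed blob, since the gradient vanishes when the two are far apart.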


Optimizing 3D models through inverse neural rendering. From left to right: the observed image, initial random 3D generations, and three optimization steps that refine these to better match the observed image. The observed images are faded to show the rendered objects clearly. The method effectively refines object appearance and position, all done at test time with inverse neural rendering. Credit: Ost et al.


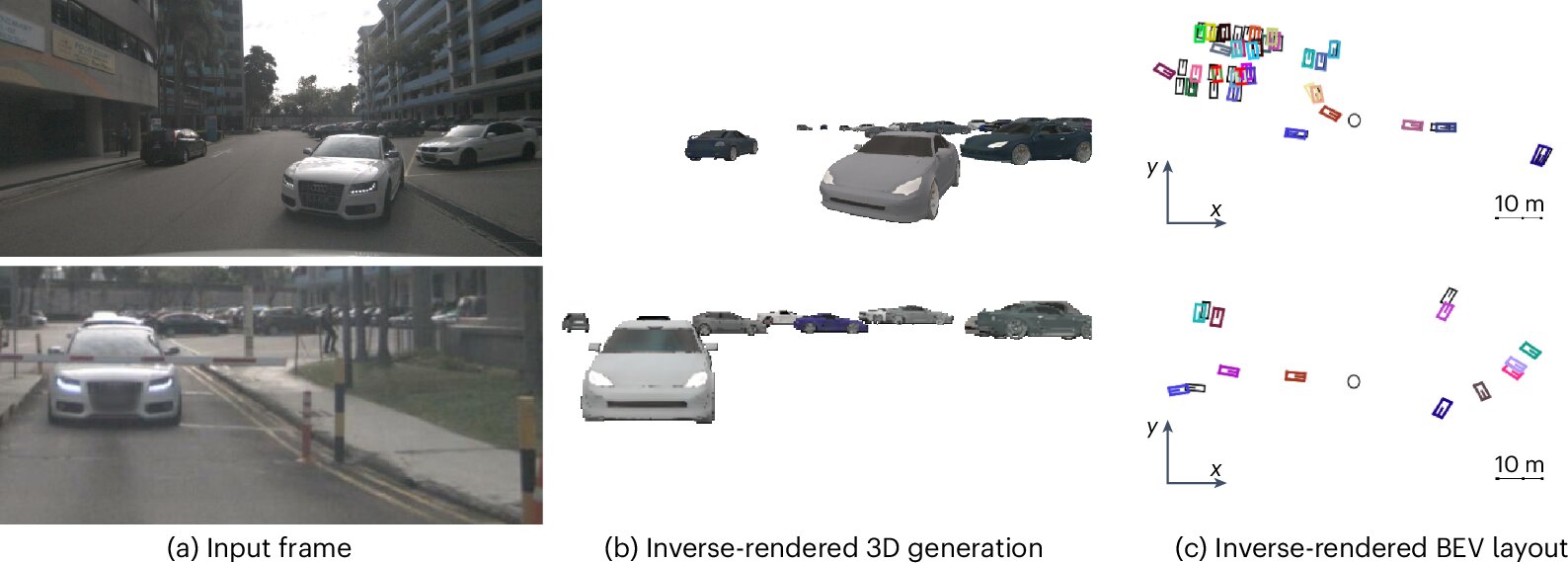

Generalization of 3D multi-object tracking with Inverse Neural Rendering. The method directly generalizes across datasets such as the nuScenes and Waymo Open Dataset benchmarks without additional fine-tuning and is trained on synthetic 3D models only. The observed images are overlaid with the closest generated object and tracked 3D bounding boxes. Credit: Ost et al.

A notable advantage of the team’s newly proposed approach is that it allows very generic 3D object generation models trained on synthetic data to perform well across a wide range of datasets containing images captured in real-world settings. In addition, the renderings produced by the models are far more explainable than those produced by conventional rendering tools based on feed-forward machine learning models.

“Our inverse rendering approach for tracking works just as well as learned feed-forward approaches, but it provides us with explicit 3D explanations of its perceived world,” said Heide.

“The other interesting aspect is the generalization capabilities. Without changing the 3D generation model or training it on new data, our 3D multi-object tracking through Inverse Neural Rendering works well across different autonomous driving datasets and object types. This can significantly reduce the cost of fine-tuning on new data or at least work as an auto-labeling pipeline.”

This recent study could soon help to advance AI models for computer vision, improving their performance in real-world settings while also increasing their transparency. The researchers now plan to continue improving their method and start testing it on more computer vision-related tasks.

“A logical next step is the expansion of the proposed approach to other perception tasks, such as 3D detection and 3D segmentation,” added Heide. “Ultimately, we want to explore if inverse rendering can even be used to infer the whole 3D scene, and not just individual objects. This would allow our future robots to reason and continuously optimize a three-dimensional model of the world, which comes with built-in explainability.”

Written by Ingrid Fadelli, edited by Gaby Clark, and fact-checked and reviewed by Robert Egan.

More information: Julian Ost et al, Towards generalizable and interpretable three-dimensional tracking with inverse neural rendering, Nature Machine Intelligence (2025). DOI: 10.1038/s42256-025-01083-x.

© 2025 Science X Network

Citation: AI method reconstructs 3D scene details from simulated images using inverse rendering (2025, August 23), retrieved 23 August 2025 from https://techxplore.com/news/2025-08-ai-method-reconstructs-3d-scene.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.

Tech

These $500 Windows Laptops Show That the MacBook Neo Has Serious Competition

Today, Apple announced its new budget MacBook. At $599, it looks seriously impressive. While I haven’t tested its performance, battery life, or display just yet, it may end up being hard to beat at that price based on some of the specs alone.

But that doesn’t mean the competition isn’t there. I want to recommend a couple of Windows laptop deals that offer various advantages over the MacBook Neo, showing where the Neo has both strengths and weaknesses.

First, check out this Asus Vivobook 14, a laptop I’ve been happy to recommend as a budget computer for the past year. In many ways, this is the Windows version of a laptop like the MacBook Neo. It uses a highly efficient ARM chip, the Qualcomm Snapdragon X, meaning it gets great battery life and performs admirably in daily tasks. It’s not quite as thin or light as the MacBook Neo, but it’s fairly portable for a laptop at this price.

Unlike the MacBook Neo, the Vivobook 14 comes with 16 GB of RAM and 512 GB of storage. That’s twice what you get in the MacBook Neo’s starting configuration. Right now, this configuration of the Vivobook 14 is on sale for $539. That’s a killer deal for those specs. It even comes with a healthier mix of ports, including HDMI, two USB-A, one USB-C, and a headphone jack. It can also support two external displays, unlike the MacBook Neo, which can handle only one.

Don’t get me wrong—I’m not at all saying the Vivobook 14 is a slam dunk over the MacBook Neo. Based on specs alone, I know the Vivobook 14 is a serious step down when it comes to the display. It’s less sharp, stretched across a larger screen, and the color performance isn’t so good. The Vivobook 14 maxes out at 280 nits, whereas Apple says the MacBook Neo can go all the way up to 500 nits. I have a hunch that the MacBook Neo will deliver a much better display in just about every regard.

There’s also the touchpad. It’s a little clunky to use, which is typical of budget Windows laptops. This is just a guess—but the touchpad on the MacBook Neo will likely feel smoother. It’s a mechanical trackpad (unlike the MacBook Air’s haptic feedback trackpad), but Apple has almost never made a bad trackpad.

If you’re not convinced by the Asus Vivobook 14, I’d also recommend the HP OmniBook 5, which is currently on sale for $500 and uses the same Snapdragon X chip. While it only has 256 GB of storage, it has a much better screen than the Vivobook 14, using an OLED display. It’s not any brighter than the Vivobook 14, but it gives you far better color performance and contrast. It’s also just 0.50 inches thick, matching the MacBook Neo exactly in portability.

Tech

Don’t Buy Some Random USB Hub off Amazon. Here Are 5 We’ve Tested and Approved

Other Good USB Hubs to Consider

Ugreen Revodok Pro 211 Docking Station for $64: Most laptop docking stations are bulky gadgets that often require a power source, but this one from Ugreen straddles the line between dock and hub. It has a small, braided cable running to a relatively large aluminum block. It’s a bit hefty but still compact, and it packs a lot of extra power. It has three USB ports (one USB-C and two USB-A) that each reached up to 900 MB/s of data-transfer speeds in my testing. That was enough to move large amounts of 4K video footage in minutes. The only problem is that using dual monitors on a Mac is limited to only mirroring.


Hyper HyperDrive Next Dual 4K Video Dock for $150: This one also straddles the line between dock and USB hub. Many mobile docks lack proper Mac support, only allowing for mirroring instead of full extension. The HyperDrive Next Dual 4K fixes that problem, though, making it a great option for MacBooks (though it won’t magically give an old MacBook Air dual-monitor support). Unfortunately, you’ll be paying handsomely for that capability, as this one is more expensive than the other options. The other problem is that although this dock has two HDMI ports that can support 4K, only one will run at 60 Hz; the other will be stuck at 30 Hz. So, if you plan to use it with multiple displays, you’ll need to drop the resolution to 1440p or 1080p on one of them. I also tested this Targus model, made by the same company, which gets you two 4K displays at 60 Hz but not on Mac.

Anker USB-C Hub 5-in-1 for $20: This Anker USB hub is the one I carry in my camera bag everywhere. It plugs into the USB-C port on your laptop and provides every connection you’d need to offload photos or videos from camera gear. In our testing, the USB 3.0 ports reached transfer speeds over 400 MB/s, which isn’t quite as fast as some USB hubs on this list, but it’s solid for a sub-$50 device. Similarly, the SD card reader reached speeds of 80 MB/s for reading and writing, which isn’t the fastest SD cards can get, but adequate for moving files back and forth.—Eric Ravenscraft

Kensington Triple Video Mobile Dock for $83: Another mobile dock meant to provide additional external support, this one from Kensington can technically power up to three 1080p displays at 60 Hz using the two HDMI ports and one DisplayPort. It’s a lot of ports in a relatively small package, though the basic plastic case isn’t exactly inspiring.
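To put the transfer speeds quoted above in perspective, here is a rough back-of-the-envelope sketch. The 50 GB shoot is a hypothetical example, the speeds are the approximate figures from the write-ups above, and the function name is made up for illustration. Real transfers rarely sustain peak speed, so treat the results as best-case estimates.

```python
def transfer_seconds(size_gb: float, speed_mb_per_s: float) -> float:
    """Best-case time to move size_gb gigabytes (decimal units: 1 GB = 1000 MB)."""
    return size_gb * 1000 / speed_mb_per_s

# Approximate speeds from the reviews above; the 50 GB file size is an assumption.
speeds_mb_per_s = {
    "Ugreen Revodok Pro 211 USB ports": 900,
    "Anker 5-in-1 USB 3.0 ports": 400,
    "Anker 5-in-1 SD card reader": 80,
}

for name, speed in speeds_mb_per_s.items():
    minutes = transfer_seconds(50, speed) / 60
    print(f"{name}: about {minutes:.1f} minutes for 50 GB")
```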


Tech

Trump’s War on Iran Could Screw Over US Farmers

Global oil and gas prices have skyrocketed following the US attack on Iran last weekend. But another key global supply chain is also at risk, one that may directly impact American farmers who have already been squeezed for months by tariff wars. The conflict in the Middle East is choking global supplies of fertilizer right before the crucial spring planting season.

“This literally could not be happening at a worse time,” says Josh Linville, the vice president of fertilizer at financial services company StoneX.

The global fertilizer market focuses on three main macronutrients: phosphates, nitrogen, and potash. All of them are produced in different ways, with different countries leading in exports. Farmers consider a variety of factors, including crop type and soil conditions, when deciding which of these types of fertilizer to apply to their fields.

Potash and phosphates are both mined from different kinds of natural deposits; nitrogen fertilizers, by contrast, are produced with natural gas. QatarLNG, a subsidiary of Qatar Energy, a state-run oil and gas company, said on Monday that it would halt production following drone strikes on some of its facilities. This effectively took nearly a fifth of the world’s natural gas supply offline, causing gas prices in Europe to spike.

That shutdown puts supplies of urea, a popular type of nitrogen fertilizer, particularly at risk. On Tuesday, Qatar Energy said that it would also stop production of downstream products, including urea. Qatar was the second-largest exporter of urea in 2024. (Iran was the third-largest; it’s also a key exporter of ammonia, another type of nitrogen fertilizer.) Prices on urea sold in the US out of New Orleans, a key commodity port, were up nearly 15 percent on Monday compared to prices last week, according to data provided by Linville to WIRED. The blockage of the Strait of Hormuz is also preventing other countries in the region from exporting nitrogen products.

“When we look at ammonia, we’re looking at almost 30 percent of global production being either involved or at risk in this conflict,” says Veronica Nigh, a senior economist at the Fertilizer Institute, a US-based industry advocacy organization. “It gets worse when we think about urea. Urea is almost 50 percent.”

Other types of fertilizer are also at risk. Saudi Arabia, Nigh says, supplies about 40 percent of all US phosphate imports; taking them out of the equation for more than a few days could create “a really challenging situation” for the US. Other countries in the region, including Jordan, Egypt, and Israel, also play a big role in these markets.

“We are already hearing reports that some of those Persian Gulf manufacturers are shutting down production, because they’re saying, ‘I have a finite amount of storage for my supply,’” Linville says. “‘Once I reach the top of it, I can’t do anything else. So I’m going to shut down my production in order to make sure I don’t go over above that.’”

Conflict in the strait has intensified in the early part of this week, as the Islamic Revolutionary Guard Corps has reportedly threatened any ship passing through the strait. Traffic has slowed to a crawl. The Trump administration announced initiatives on Tuesday meant to protect oil tankers traveling through the strait, including providing a naval escort. Even if those initiatives succeed (which the shipping industry has expressed doubt about), much of the initial energy will probably go toward shepherding oil and gas assets out of the region.

“Fertilizer is not going to be the most valuable thing that’s gonna transit the strait,” says Nigh.
