Tech

AI method reconstructs 3D scene details from simulated images using inverse rendering

Over the past decades, computer scientists have developed many computational tools that can analyze and interpret images. These tools have proved useful for a broad range of applications, including robotics, autonomous driving, health care, manufacturing and even entertainment.

Most of the best performing computer vision approaches employed to date rely on so-called feed-forward neural networks. These are computational models that process input images step by step, ultimately making predictions about them.

While some of these models were found to perform well when tested on the data they analyzed during training, they often do not generalize well across new images and in different scenarios. In addition, their predictions and the patterns they extract from images can be difficult to interpret.

Researchers at Princeton University recently developed a new inverse rendering approach that is more transparent and could also interpret a wide range of images more reliably. The new approach, introduced in a paper published in Nature Machine Intelligence, relies on a generative artificial intelligence (AI)-based method to simulate the process of image creation, while also optimizing it by gradually adjusting a model’s internal parameters.

“Generative AI and neural rendering have transformed the field in recent years for creating novel content: producing images or videos from scene descriptions,” Felix Heide, senior author of the paper, told Tech Xplore. “We investigate whether we can flip this around and use these generative models for extracting the scene descriptions from images.”

The new approach developed by Heide and his colleagues relies on a so-called differentiable rendering pipeline. This is a process for the simulation of image creation, relying on compressed representations of images created by generative AI models.

“We developed an analysis-by-synthesis approach that allows us to solve vision tasks, such as tracking, as test-time optimization problems,” explained Heide. “We found that this method generalizes across datasets, and in contrast to existing supervised learning methods, does not need to be trained on new datasets.”

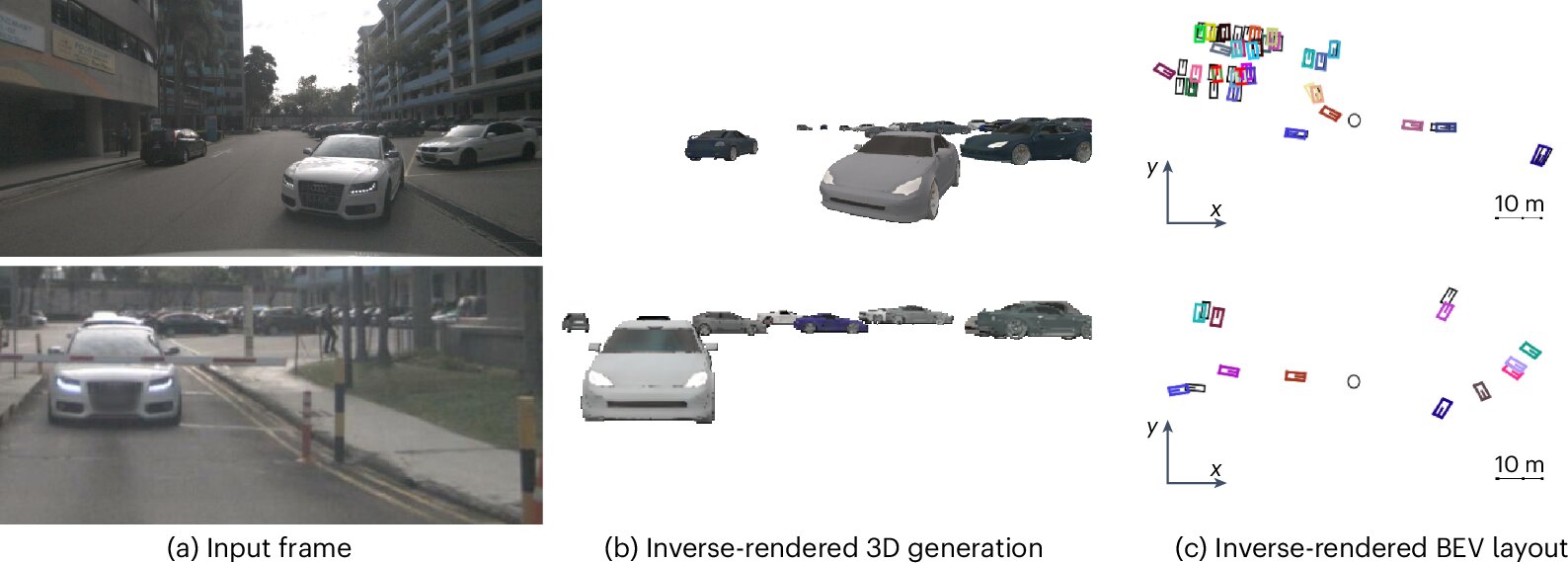

Essentially, the method developed by the researchers works by placing models of 3D objects in a virtual scene depicting real-world settings. These object models are produced by a generative AI model from a random sample of 3D scene parameters.

“We then render all these objects back together into a 2D image,” said Heide. “Next, we compare this rendered image with the real observed image. Based on how different they are, we backpropagate the difference through both the differentiable rendering function and the 3D generation model to update its inputs. In just a few steps, we optimize these inputs to make the rendered match the observed images better.”
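The loop Heide describes can be illustrated with a deliberately tiny sketch. This is not the researchers' code: the one-dimensional Gaussian "renderer", the two scene parameters (`center`, `amplitude`), the step sizes and the hand-derived gradients are all assumptions made for illustration, standing in for the neural 3D generator, the differentiable rendering function and backpropagation used in the paper.

```python
import numpy as np

def render(center, amplitude, width=6.0, n_pixels=64):
    """Toy differentiable 'renderer' (hypothetical): one Gaussian blob on a 1D image."""
    x = np.arange(n_pixels, dtype=float)
    blob = np.exp(-((x - center) ** 2) / (2.0 * width ** 2))
    return amplitude * blob, blob, x

def fit_scene(observed, center, amplitude, steps=200,
              lr_center=100.0, lr_amplitude=1.0, width=6.0):
    """Analysis by synthesis: render, compare with the observation, update the inputs."""
    losses = []
    for _ in range(steps):
        image, blob, x = render(center, amplitude, width, observed.size)
        residual = image - observed               # rendered minus observed
        losses.append(float(np.mean(residual ** 2)))
        # Gradients of the mean squared error, derived by hand for this toy
        # renderer (a stand-in for backpropagation through a real pipeline).
        grad_amplitude = np.mean(2.0 * residual * blob)
        grad_center = np.mean(
            2.0 * residual * amplitude * blob * (x - center) / width ** 2
        )
        # Gradient descent on the scene parameters; the renderer itself is fixed.
        center -= lr_center * grad_center
        amplitude -= lr_amplitude * grad_amplitude
    return center, amplitude, losses

# "Observed" image: a blob at pixel 40 with full brightness.
observed, _, _ = render(center=40.0, amplitude=1.0)

# Start from a wrong guess and refine it at test time, with no training step.
center, amplitude, losses = fit_scene(observed, center=30.0, amplitude=0.5)
print(f"center={center:.2f}, amplitude={amplitude:.2f}, final loss={losses[-1]:.2e}")
```

The point of the sketch is only the structure of the loop: nothing is trained, and the recovered parameters double as an explicit description of what the method "sees" in the image.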


Optimizing 3D models through inverse neural rendering. From left to right: the observed image, initial random 3D generations, and three optimization steps that refine these to better match the observed image. The observed images are faded to show the rendered objects clearly. The method effectively refines object appearance and position, all done at test time with inverse neural rendering. Credit: Ost et al.


Generalization of 3D multi-object tracking with Inverse Neural Rendering. The method directly generalizes across datasets such as the nuScenes and Waymo Open Dataset benchmarks without additional fine-tuning and is trained on synthetic 3D models only. The observed images are overlaid with the closest generated object and tracked 3D bounding boxes. Credit: Ost et al.

A notable advantage of the team’s newly proposed approach is that it allows very generic 3D object generation models trained on synthetic data to perform well across a wide range of datasets containing images captured in real-world settings. In addition, because the method explains each image through explicit rendered 3D objects, its outputs are far easier to interpret than the predictions of conventional feed-forward machine learning models.

“Our inverse rendering approach for tracking works just as well as learned feed-forward approaches, but it provides us with explicit 3D explanations of its perceived world,” said Heide.

“The other interesting aspect is the generalization capabilities. Without changing the 3D generation model or training it on new data, our 3D multi-object tracking through Inverse Neural Rendering works well across different autonomous driving datasets and object types. This can significantly reduce the cost of fine-tuning on new data or at least work as an auto-labeling pipeline.”

This recent study could soon help to advance AI models for computer vision, improving their performance in real-world settings while also increasing their transparency. The researchers now plan to continue improving their method and start testing it on more computer vision-related tasks.

“A logical next step is the expansion of the proposed approach to other perception tasks, such as 3D detection and 3D segmentation,” added Heide. “Ultimately, we want to explore if inverse rendering can even be used to infer the whole 3D scene, and not just individual objects. This would allow our future robots to reason and continuously optimize a three-dimensional model of the world, which comes with built-in explainability.”

Written by Ingrid Fadelli, edited by Gaby Clark, and fact-checked and reviewed by Robert Egan.

More information:

Julian Ost et al, Towards generalizable and interpretable three-dimensional tracking with inverse neural rendering, Nature Machine Intelligence (2025). DOI: 10.1038/s42256-025-01083-x.

© 2025 Science X Network

Citation: AI method reconstructs 3D scene details from simulated images using inverse rendering (2025, August 23), retrieved 23 August 2025 from https://techxplore.com/news/2025-08-ai-method-reconstructs-3d-scene.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.

Tech

The Smart Home Gadgets to Amp Up Your Curb Appeal

I tried the battery version, which does require you to recharge it every couple of weeks, but the wired-in version is the top recommendation on our guide to the Best Video Doorbells.

A Better Birdhouse

I had a new-to-me problem this spring: bird invasion. A little bird made a nest in my front-door wreath without us noticing. One evening, my sister opened the door, and the bird flew out of the nest and straight into our house. After a 30-minute battle to get it outside again (and keep my cat from eating it), it wasn’t until we saw the bird fly off the door again the next day that we realized it was calling our home its home, too.

If this is a common problem at your house, our resident bird-gear tester Kat Merck has a solution: a smart nesting box. Birdfy makes a few different smart bird feeders we like for bird-watching, and the Nest Duo is a birdhouse that lets you watch the birds while they nest inside of it. It’s a slim, attractive box that will add to your front yard’s style while also packing two solar-powered cameras (one facing the entrance, one focused inside) so you can bird-watch from multiple angles. It comes with different hole sizes to appeal to different species, metal predator guards to prevent chewing around the hole, and a remote control to reset or recharge the camera without disturbing your feathered neighbors.

Stylish Smart Lights

I’ve liked Govee’s smart outdoor string lights before, usually for my holiday decor, and have previously recommended something similar with a bistro-light-like look that happened to be smart. These clear bulb string lights are part of Govee’s current lineup and have a contemporary twist with a triangle in the center instead of the wire filament. These are a fun option for outdoor lights you can enjoy on warm nights, and they can do every color and shade of white without looking as bulky as permanent outdoor lights. (Added bonus, these lights are also Matter compatible!)

Fresh Bulbs

If you have light fixtures you want to remote-control, add an outdoor smart bulb. There are tons to choose from, and you can usually find one from any brand you already have at home. The only downside is that outdoor-rated smart bulbs are usually 4.75-inch-diameter PAR38-style bulbs, so they’re best for downward-facing floodlights on your porch or balcony. They’ll likely be too big to fit in a wall fixture as a replacement for a normal-sized bulb. Don’t just grab any smart bulb—not all are outdoor-rated. Check for mentions of outdoor use and waterproof ratings to make sure they’re safe to use. I’m a big fan of Cync bulbs, and the brand has an outdoor version of the Cync Full Color bulbs I like to use indoors. You’ll be able to add fun colors as well as shades of white, so you can turn the porch a spooky orange or red for Halloween, pink for Valentine’s Day, or the colors of your favorite sports team on game day.

Remote-Controlled Garage

If your garage is the centerpiece of your home’s curb appeal, you can control it as easily as a smart door by adding a smart controller. There are two routes. I have the professionally installed Chamberlain MyQ smart garage opener, which has the smarts built into the opener itself (plus a camera, though I find it doesn’t work well given how far the device is from my Wi-Fi router). Alternatively, you can get a smart garage controller that adds smart features to an existing garage door. Both let you check whether the garage is open or closed and operate it remotely, and you can add a video keypad that doubles as a video doorbell and lets you open or close the garage without your phone.

Smart Shades

The front of my home faces west, so it’s absolutely baking at the end of the day. What I need to add are some of our favorite smart shades to automate closing the shades on that side of the house at the right time of day. These also give your home a nice, cohesive look and immediate, controllable privacy from the outside world. WIRED reviewer Simon Hill recommends the SmartWings shades as his top pick, and Lutron’s Caseta shades if you’re looking for a more upgraded look.

Invisible Swaps

Looking to add some smarts without touching your existing setup? These switch-ups can make your front door and yard smart without being visible.


Tech

The Best Movies to Stream This Month

April might be springtime in the northern hemisphere, but some of the best streaming services seem to think it’s the perfect time for a dry run of spooky season. How else to explain the arrival of some exquisitely dark slices of horror, like 28 Years Later: The Bone Temple on Netflix, Weapons on Prime Video, or Shelby Oaks on Hulu? If you prefer your off-season Halloween viewing to be in the vein of campy B movies rather than serious scares though, horror specialist Shudder has you covered with Deathstalker, a gloriously cheesy reboot of a near-forgotten ’80s series.

Reality is often scarier than fiction though, as shown by Louis Theroux’s Inside the Manosphere—his first documentary film with Netflix, exploring the dark side of social media and the world of toxic male influencers. (Be sure to read our interview with the filmmaker.) And if the thought of that leaves you wanting something a bit more wholesome to watch, thankfully Zootopia 2 has popped up on Disney+—and there’s even a rabbit in that, for some appropriately springtime imagery.

Here are WIRED’s picks of the best movies to watch right now.

28 Years Later: The Bone Temple

The fourth film in the long-running postapocalyptic horror series switches focus from rampaging rage zombies to a more dangerous threat: humans. OK, OK, “people are the real monsters” isn’t a hot take for the genre, but The Bone Temple offers a unique twist, with 28 Years Later survivor Spike (Alfie Williams) trapped in the company of a murderous gang led by deranged satanist “Sir Lord” Jimmy Crystal (Sinners’ Jack O’Connell). The villain is modeled on disgraced British TV presenter Jimmy Savile, whose sexual abuse crimes hadn’t been revealed by the time of the initial outbreak in 28 Days Later, adding a dash of real-world terror.

As the group stalks what remains of the English countryside, Spike’s only hope might be Dr. Ian Kelson (Ralph Fiennes), whose experiments on curing alpha zombie Samson (Chi Lewis-Parry) might hold humanity’s last hope. Although best watched back to back with its predecessor for the full, horrifying picture, director Nia DaCosta’s chapter stands on its own—and earns bonus points for one of the best uses of Iron Maiden’s “Number of the Beast” in film history.

Louis Theroux: Inside the Manosphere

It’s the silence that does the trick; British documentarian Louis Theroux always knows when not to speak and instead let his subject expose themselves for the world to see. It’s a masterful technique whether Theroux is investigating the Westboro Baptist Church or UFO conspiracy theorists, but it is rarely put to better use than in his latest outing: exploring the online “manosphere” subculture of self-appointed “alphas” offering toxic advice on how to be a “real man.” Speaking with key figures in the loosely defined movement, Theroux’s mild-mannered approach often leaves them to do most of the talking, exposing shockingly misogynistic and extremist views. Even more distressing? The quiet revelation that for many of them their performative masculinity is all just one big grift, and how they rationalize the harm they cause in pursuit of a payout. Depressing but compelling viewing—not all men, but definitely all of these men.

Crime 101

Jewel thief Mike (Chris Hemsworth) is the best in the business, a meticulous planner who pulls off his heists without leaving a shred of evidence—much to the consternation of LAPD detective Lou Lubesnick (Mark Ruffalo), who doesn’t even know exactly who he’s hunting for a string of thefts. Elsewhere in the City of Angels, Sharon (Halle Berry) is an underappreciated VP at an insurance firm, frustrated at being passed over for promotion for years. She’s the perfect insider to help Mike orchestrate an elaborate $11 million diamond heist. But as Lou uncovers evidence connecting to Mike’s past, and the chaotic, violent biker Ormon (Barry Keoghan) aims to take the score for himself, even the most masterful planning can’t prevent everything spiraling dangerously out of control.

Tech

OpenAI Executive Kevin Weil Is Leaving the Company

Kevin Weil, OpenAI’s former chief product officer who was recently tapped to build a new AI workspace for scientists, Prism, is leaving the company, WIRED has confirmed. Weil was previously an early executive leading product at Instagram.

OpenAI is also sunsetting Prism, which the company launched as a web app in January this year to give scientists a better way to work with AI. The company is folding the roughly 10-person team behind it into Thibault Sottiaux’s Codex team. An OpenAI spokesperson confirmed the changes, and tells WIRED this is part of the company’s effort to unify its business and product strategy. OpenAI has broader ambitions to turn Codex, its AI coding application, into an “everything app.”

Weil, who joined OpenAI in June 2024, announced last September that he would be starting a new initiative inside of the company called “OpenAI for Science.” Now, OpenAI is dispersing those employees throughout the company’s product, research, and infrastructure teams. An OpenAI spokesperson reiterated the company’s commitment to accelerating scientific discovery, and says it’s one of the clearest ways AI can benefit humanity.

OpenAI is currently trying to refocus the company around a few key areas, such as enterprise offerings and coding. Last month, OpenAI’s CEO of AGI deployment Fidji Simo told staff that the company needs to simplify its product offerings. The push to divert resources to more consequential efforts resulted in OpenAI discontinuing its Sora video-generation app.

This is a developing story. Please check back for updates.
