Tech

Robots can now learn to use tools—just by watching us

Despite decades of progress, most robots are still programmed for specific, repetitive tasks. They struggle with the unexpected and can’t adapt to new situations without painstaking reprogramming. But what if they could learn to use tools as naturally as a child does by watching videos?

I still remember the first time I saw one of our lab’s robots flip an egg in a frying pan. It wasn’t pre-programmed. No one was controlling it with a joystick. The robot had simply watched a video of a human doing it, and then did it itself. For someone who has spent years thinking about how to make robots more adaptable, that moment was thrilling.

Our team at the University of Illinois Urbana-Champaign, together with collaborators at Columbia University and UT Austin, has been exploring that very question. Could robots watch someone hammer a nail or scoop a meatball, and then figure out how to do it themselves, without costly sensors, motion capture suits, or hours of remote teleoperation?

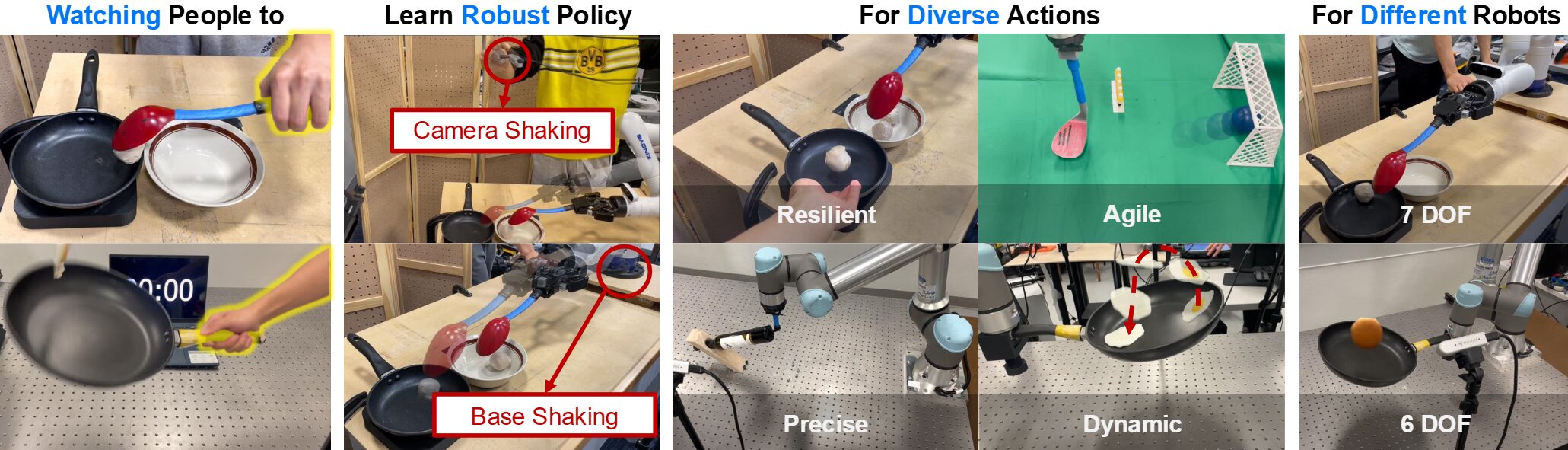

That idea led us to create a new framework we call “Tool-as-Interface,” currently available on the arXiv preprint server. The goal is straightforward: teach robots complex, dynamic tool-use skills using nothing more than ordinary videos of people doing everyday tasks. All it takes is two camera views of the action, something you could capture with a couple of smartphones.

Here’s how it works. The process begins with those two camera views, which a vision model called MASt3R uses to reconstruct a three-dimensional model of the scene. Then, using a rendering method known as 3D Gaussian splatting (think of it as digitally painting a 3D picture of the scene), we generate additional viewpoints so the robot can “see” the task from multiple angles.

But the real magic happens when we digitally remove the human from the scene. With the help of “Grounded-SAM,” our system isolates just the tool and its interaction with the environment. It is like telling the robot, “Ignore the human, and only pay attention to what the tool is doing.”

This “tool-centric” perspective is the secret ingredient. It means the robot isn’t trying to copy human hand motions, but is instead learning the exact trajectory and orientation of the tool itself. This allows the skill to transfer between different robots, regardless of how their arms or cameras are configured.
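As a rough illustration of why a tool-centric trajectory can transfer between robots, here is a minimal Python sketch. It is not the framework's actual code. It assumes the tool is rigidly fixed in the gripper and that a sequence of tool poses has already been recovered from video. Every name and number in it (`make_pose`, `gripper_target`, the two grasp offsets) is hypothetical.

```python
import math

def matmul(a, b):
    """Multiply two 4x4 homogeneous transforms stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def invert_rigid(t):
    """Invert a rigid transform [R | p] as [R^T | -R^T p]."""
    r_t = [[t[j][i] for j in range(3)] for i in range(3)]
    p = [t[i][3] for i in range(3)]
    new_p = [-sum(r_t[i][k] * p[k] for k in range(3)) for i in range(3)]
    return [r_t[i] + [new_p[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]

def make_pose(yaw, x, y, z):
    """Build a pose from a rotation about the z axis plus a translation."""
    c, s = math.cos(yaw), math.sin(yaw)
    return [[c, -s, 0.0, x],
            [s, c, 0.0, y],
            [0.0, 0.0, 1.0, z],
            [0.0, 0.0, 0.0, 1.0]]

def gripper_target(world_T_tool, gripper_T_tool):
    """Gripper pose that places the rigidly held tool at world_T_tool."""
    return matmul(world_T_tool, invert_rigid(gripper_T_tool))

# Hypothetical tool trajectory (world frame), as if recovered from video.
tool_trajectory = [
    make_pose(0.0, 0.40, 0.00, 0.20),
    make_pose(math.pi / 2, 0.45, 0.05, 0.15),
]

# Two hypothetical robots hold the same tool with different grasp offsets.
robot_a_grasp = make_pose(0.0, 0.00, 0.0, 0.10)
robot_b_grasp = make_pose(math.pi, 0.02, 0.0, 0.18)

# The same tool trajectory yields a different gripper trajectory per robot.
targets_a = [gripper_target(p, robot_a_grasp) for p in tool_trajectory]
targets_b = [gripper_target(p, robot_b_grasp) for p in tool_trajectory]
```

In this sketch the demonstration only has to specify what the tool does. Each robot needs just one extra piece of information, its own grasp offset, to turn that into gripper targets, and nothing about the human hand enters the calculation.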

We tested this on five tasks: hammering a nail, scooping a meatball, flipping food in a pan, balancing a wine bottle, and even kicking a soccer ball into a goal. These are not simple pick-and-place jobs; they require speed, precision, and adaptability. Compared to traditional teleoperation methods, Tool-as-Interface achieved 71% higher success rates and gathered training data 77% faster.

One of my favorite tests involved a robot scooping meatballs while a human tossed in more mid-task. The robot didn’t hesitate; it just adapted. In another, it flipped a loose egg in a pan, a notoriously tricky move for teleoperated robots.

“Our approach was inspired by the way children learn, which is by watching adults,” said my colleague and lead author Haonan Chen. “They don’t need to operate the same tool as the person they’re watching; they can practice with something similar. We wanted to know if we could mimic that ability in robots.”

These results point toward something bigger than just better lab demos. By removing the need for expert operators or specialized hardware, we can imagine robots learning from smartphone videos, YouTube clips, or even crowdsourced footage.

“Despite a lot of hype around robots, they are still limited in where they can reliably operate and are generally much worse than humans at most tasks,” said Professor Katie Driggs-Campbell, who leads our lab.

“We’re interested in designing frameworks and algorithms that will enable robots to easily learn from people with minimal engineering effort.”

Of course, there are still challenges. Right now, the system assumes the tool is rigidly fixed to the robot’s gripper, which isn’t always true in real life. It also sometimes struggles with 6D pose estimation errors, and synthesized camera views can lose realism if the angle shift is too extreme.

In the future, we want to make the perception system more robust, so that a robot could, for example, watch someone use one kind of pen and then apply that skill to pens of different shapes and sizes.

Even with these limitations, I think we’re seeing a profound shift in how robots can learn, away from painstaking programming and toward natural observation. Billions of cameras are already recording how humans use tools. With the right algorithms, those videos could become training material for the next generation of adaptable, helpful robots.

This research, which was honored with the Best Paper Award at the ICRA 2025 Workshop on Foundation Models and Neural-Symbolic (NeSy) AI for Robotics, is a critical step toward unlocking that potential, transforming the vast ocean of human-recorded video into a global training library for robots that can learn and adapt as naturally as a child does.

This story is part of Science X Dialog, where researchers can report findings from their published research articles.

More information:

Haonan Chen et al, Tool-as-Interface: Learning Robot Policies from Human Tool Usage through Imitation Learning, arXiv (2025). DOI: 10.48550/arxiv.2504.04612

Cheng Zhu (UIUC BS Computer Engineering, UPenn MSE ROBO) is the second author of “Tool-as-Interface: Learning Robot Policies from Human Tool Usage through Imitation Learning.”

Citation:

Robots can now learn to use tools—just by watching us (2025, August 23)

retrieved 23 August 2025 from https://techxplore.com/news/2025-08-robots-tools.html

Europe’s Online Age Verification App Is Here

The European online age verification app is ready.

The app works with passports or ID cards, is built to be “completely anonymous” for the people who use it, works on any device (smartphones, tablets, and PCs), and is open source. “Best of all, online platforms can easily rely on our age verification app, so there are no more excuses,” said European Commission president Ursula von der Leyen at a press conference on Wednesday. “Europe offers a free and easy-to-use solution that can protect our children from harmful and illegal content.”

High Expectations

“It is our duty to protect our children in the online world just as we do in the offline world. And to do that effectively, we need a harmonized European approach,” von der Leyen said at Wednesday’s press conference. “And one of the central issues is the question, how can we ensure a technical solution for age verification that is valid throughout Europe? Today, I can announce that we have the answer.”

This answer takes the form of an open source app that any private company can repurpose, as long as it complies with European privacy standards and offers the same technical solution throughout the European Union. The user downloads the app, agrees to the terms and conditions, sets up a PIN or biometric access, and proves their age through an electronic identification system, or by showing a passport or ID card (in which case biometric verification is also provided). According to the European Commission, the app does not store your name, date of birth, ID number, or any other personal information; it stores only the fact that you are over a certain age.

After that, when a person using the app wants to access a social network (minimum age: 13), a pornographic site (minimum age: 18), or any other age-protected content from a computer, they need only scan the QR code shown on the site they want to visit. If, on the other hand, the person logs in from a smartphone, the app sends the proof of age directly. The platform never accesses the document the user originally used to prove their age.
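The data-minimisation idea described here can be shown with a toy Python sketch. It does not represent the European app's actual protocol or code, and every name in it (`AgeProof`, `issue_proof`, `platform_allows`) is hypothetical. It only shows that a platform can make its decision from a yes/no claim about an age threshold, without ever receiving a birth date. A real deployment would also need that claim to be cryptographically verifiable, which this sketch omits.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class AgeProof:
    """The only data handed to a platform: a threshold and a yes/no answer."""
    threshold: int
    is_over: bool

def age_on(birth_date: date, today: date) -> int:
    """Whole years elapsed between birth_date and today."""
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    return today.year - birth_date.year - (0 if had_birthday else 1)

def issue_proof(birth_date: date, today: date, threshold: int) -> AgeProof:
    """Runs on the user's side; the birth date is used once and not kept."""
    return AgeProof(threshold=threshold, is_over=age_on(birth_date, today) >= threshold)

def platform_allows(proof: AgeProof, required_age: int) -> bool:
    """Runs on the platform's side; it sees only the proof, never the ID."""
    return proof.threshold >= required_age and proof.is_over

# Hypothetical user who turns 18 on 15 June 2025.
birth = date(2007, 6, 15)
proof_before = issue_proof(birth, date(2025, 6, 14), threshold=18)
proof_after = issue_proof(birth, date(2025, 6, 15), threshold=18)

adult_site_before = platform_allows(proof_before, required_age=18)
adult_site_after = platform_allows(proof_after, required_age=18)
social_network_after = platform_allows(proof_after, required_age=13)
```

An "over 18" proof also satisfies a lower requirement such as 13. A negative answer reveals only that the user is under the stated threshold, not their exact age, so the sketch conservatively refuses access in that case.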

Adoption Event

The need to introduce a common system for the entire European Union has been discussed for some time, and according to commission technicians, the technical work is now complete. Of course, it will still be possible to circumvent the system—all it takes is for an adult to lend their phone to a younger friend—but the technological architecture exists, and it will be up to EU member states to decide whether to integrate it into national digital wallets or develop independent apps.

“No More Excuses”

For the app to really be effective, platforms must be obligated to verify the age of their users—that’s where things get tricky. The Digital Services Act, which went into effect in 2024, requires “very large online platforms”—those with more than 45 million monthly users in the European Union—to take concrete steps to mitigate systemic risks related to child protection, with heavy penalties for noncompliance.

“And that’s why Europe has the DSA: to call online platforms to their responsibilities. Because Europe will not tolerate platforms making money at the expense of our children,” European Commission executive vice president Henna Virkkunen told a press conference. She added that after an investigation into TikTok, the European institutions plan to take similar action against Facebook, Instagram, and Snapchat, as well as four porn sites. “Since the platforms do not have adequate age verification tools, we developed the solution ourselves,” she concluded. In short, as von der Leyen also remarked, “there are no more excuses.”

Bare Minimum

So far, this is the European framework that sets the general rules. On this basis, member states can consider more restrictive measures. Italy was among the first to discuss how to regulate the use of social media by minors but has so far not landed on anything concrete. Elsewhere in the EU, France’s Emmanuel Macron has been a trailblazer on the issue, pushing France to discuss a rule to ban social networks for minors under the age of 15 entirely. So far, this measure has received broad political support—but the outcome depends largely on compatibility with the Digital Services Act and the availability of effective age verification systems like the app the European Commission just released.

This article originally appeared on WIRED Italia and has been translated.

Anthropic Plots Major London Expansion

Anthropic is moving into a new London office as it seeks to expand its research and commercial footprint in Europe, setting up a scrap between the leading AI labs for talent emerging from British universities.

The company, which opened its first London office in 2023, is moving to the same neighborhood as Google DeepMind, OpenAI, Meta, Wayve, Isomorphic Labs, Synthesia, and various AI research institutions.

Anthropic’s new, 158,000-square-foot office footprint will have space enough for 800 people—four times its current head count—giving it room to potentially outscale OpenAI, which itself recently announced an expansion in London.

“Europe’s largest businesses and fastest-growing startups are choosing Claude, and we’re scaling to match,” says Pip White, head of EMEA North at Anthropic. “The UK combines ambitious enterprises and institutions that understand what’s at stake with AI safety with an exceptional pool of AI talent; we want to be where all of that comes together.”

UK government officials had reportedly attempted to coax Anthropic into expanding its presence in London after the company recently fell out with the US administration. Anthropic refused to allow its models to be used in mass surveillance and autonomous weapon systems, leading to an ongoing legal battle between the AI lab and the Pentagon.

As part of the expansion, Anthropic says it will deepen its work with the UK’s AI Security Institute, a government body that this week published a risk evaluation of its latest model, Claude Mythos Preview. According to Politico, the UK government is one of few across Europe to have been granted access to the model, which Anthropic has released to only select parties, citing concerns over the potential for its abuse by cybercriminals.

The increasing concentration of AI companies in the same London district is an important step in creating a pathway for research to translate into AI products, says Geraint Rees, vice-provost at University College London, whose campus is around the corner from Anthropic’s new office.

“This cluster didn’t emerge from a planning document. It grew because serious researchers and companies understand that proximity isn’t a nice-to-have,” he said last month, speaking at an event attended by WIRED. “That’s how the innovation system actually works. It’s not a clean, linear transfer from lab to market. It’s messier, richer, more human than that.”

CYBERUK ’26: UK lagging on legal protections for cyber pros | Computer Weekly

The increasingly long-in-the-tooth Computer Misuse Act (CMA) of 1990 remains an albatross around the neck of British cyber security professionals, and even though the UK government committed last December to reforming it, every minute of delay is holding back the nation’s security innovation, resilience, talent, and ability to defend itself against cyber attacks, campaigners have warned.

Ahead of the National Cyber Security Centre’s (NCSC’s) upcoming CYBERUK conference in Glasgow, the CyberUp Campaign for reform of the CMA has published a new report, titled “Protections for Cyber Researchers: How the UK is being left behind”, to maintain pressure on Westminster.

The CMA defines the vague offence of unauthorised access to a computer, which the campaigners want changed because it was written 35 years ago and fails to account for the development of the cyber security profession, and the fact that in the course of their day-to-day work, cyber pros may sometimes need to hack into other systems.

“Cyber attacks are growing in scale, sophistication and severity, with a devastating impact on infrastructure, businesses and charities,” said a CyberUp campaign spokesperson.

“While other countries have moved to refresh their cyber laws in response, the UK’s Computer Misuse Act hasn’t been updated since before the modern internet – hardly the best platform for accelerating our defences into the next decade.”

The group’s report highlights how other nations, namely Australia, Belgium, France, Germany, Hong Kong, Malta, Portugal, and the USA, have already secured legal protections for cyber professionals that enable them to go about their business without fear of prosecution.

In Portugal – Britain’s oldest formal ally under a treaty dating back to the 14th Century – the government last year published Decreto-Lei 125/2025, implementing the European Union (EU) Network and Information Systems (NIS2) Directive and revising the country’s cyber crime law to ensure that ethical hackers and professional cyber security practitioners working in good faith are both recognised and protected.

Portugal’s laws now accept that some elements of cyber work may have to happen without explicit permission or involve unanticipated technical overreach that has a legitimate purpose.

As such, Portugal says that security work undertaken in good faith won’t be punished as long as the researcher fulfills a set of conditions. For example, they may act only to find vulnerabilities, which must be reported immediately; they must avoid harmful actions, such as conducting DDoS attacks or installing malware; and they must respect the integrity of any data they find or access, deleting it within 10 days once the issue is addressed.

CyberUp said Portugal’s example demonstrates how cyber crime laws can be modernised to legally protect research carried out in the public interest.

“Portugal has demonstrated how to modernise their equivalent law through cyber legislation. We urge the government to follow this example and act swiftly through the Cyber Security and Resilience Bill to achieve meaningful reform, or risk lagging even further behind our peers,” the spokesperson said.

Defence Framework

Working with cyber security experts and legal advisors, the CyberUp campaign has developed its own Defence Framework that would allow cyber professionals to present a statutory defence in court as long as they adhere to the Framework’s four core principles.

- Harm Vs. Benefit: The benefits of the activity must outweigh the potential harms;

- Proportionality: Cyber pros must take all reasonable steps to minimise the risks of their activity;

- Intent: They must act honestly, sincerely, and clearly direct themselves towards improving security;

- Competence: Their qualifications and professional memberships should demonstrate they are suitably equipped to perform cyber security work.

The campaigners say this framework will bring clarity and confidence to the security sector, enabling cyber pros to run essential research tasks without fear of criminal prosecution, helping organisations operate to recognised legal standards, and enabling a more open and collaborative relationship between the cyber sector and the UK government.
