Tech

Researchers explore how AI can strengthen, not replace, human collaboration

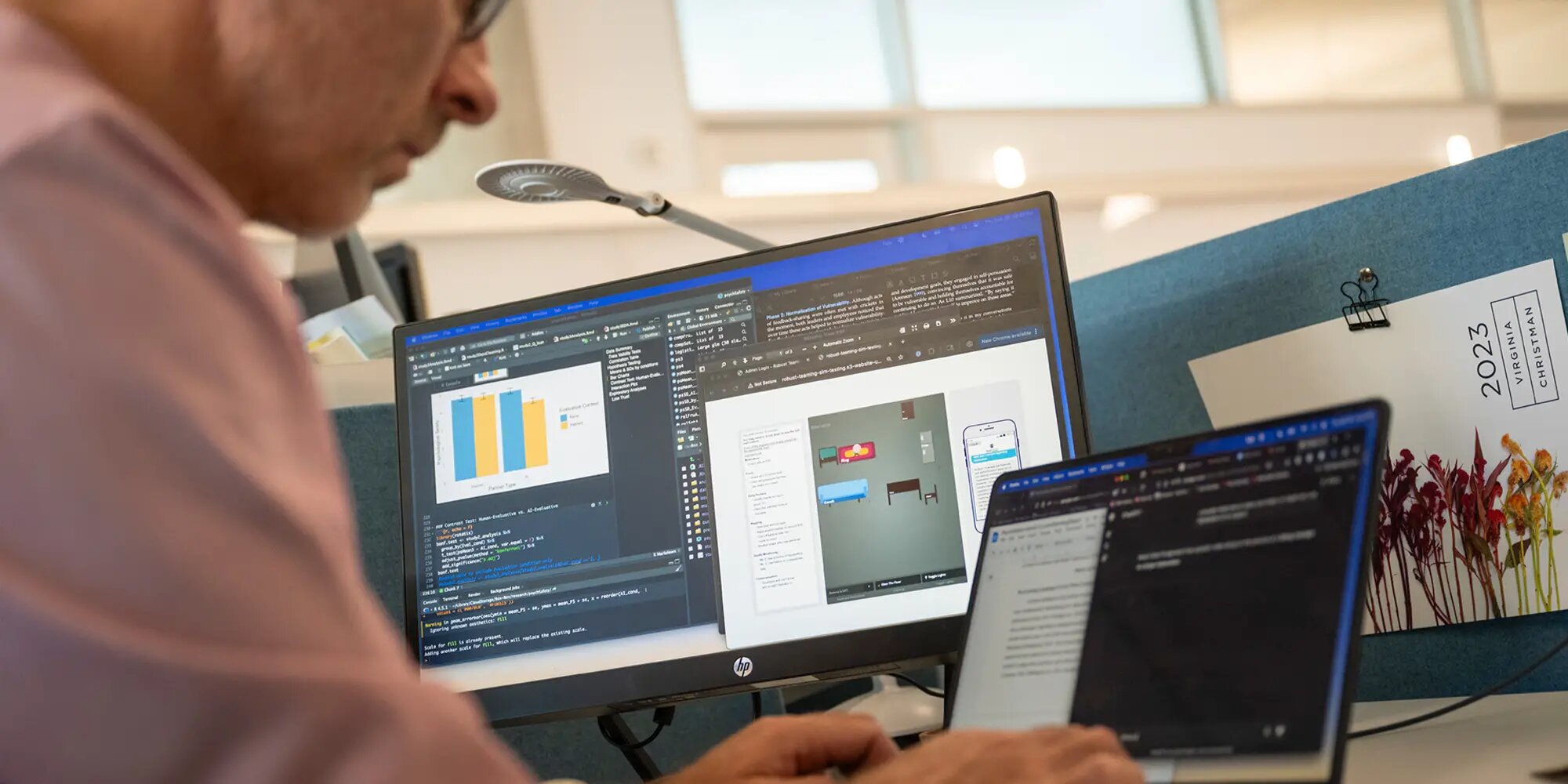

Researchers from Carnegie Mellon University’s Tepper School of Business are learning how AI can be used to support teamwork rather than replace teammates.

Anita Williams Woolley is a professor of organizational behavior. She researches collective intelligence, or how well teams perform together, and how artificial intelligence could change workforce dynamics. Now, Woolley and her colleagues are helping to figure out exactly where and how AI can play a positive role.

“I’m always interested in technology that can help us become a better version of ourselves individually,” Woolley said, “but also collectively, how can we change the way we think about and structure work to be more effective?”

Woolley collaborated with technologists and others in her field to develop Collective HUman-MAchine INtelligence (COHUMAIN), a framework that seeks to understand where AI fits within the established boundaries of organizational social psychology.

The researchers behind the 2023 publication of COHUMAIN caution against treating AI like any other teammate. Instead, they see it as a partner that works under human direction, with the potential to strengthen existing capabilities or relationships. “AI agents could create the glue that is missing because of how our work environments have changed, and ultimately improve our relationships with one another,” Woolley said.

The research that makes up the COHUMAIN architecture emphasizes that while AI integration into the workplace may take shape in ways we don’t yet understand, it won’t change the fundamental principles behind organizational intelligence, and likely can’t fill in all of the same roles as humans.

For instance, while AI might be great at summarizing a meeting, it’s still up to people to sense the mood in the room or pick up on the wider context of the discussion.

Organizations have the same needs as before, including a structure that allows them to tap into each human team member’s unique expertise. Woolley said that artificial intelligence systems may best serve in “partnership” or facilitation roles rather than managerial ones, like a tool that can nudge peers to check in with each other, or provide the user with an alternate perspective.

Safety and risk

With so much collaboration happening through screens, AI tools might help teams strengthen connections between coworkers. But those same tools also raise questions about what’s being recorded and why.

“People have a lot of sensitivity, rightly so, around privacy. Often you have to give something up to get something, and that is true here,” Woolley said.

The level of risk that users feel, both socially and professionally, can change depending on how they interact with AI, according to Allen Brown, a Ph.D. student who works closely with Woolley. Brown is exploring where this tension shows up and how teams can work through it. His research focuses on how comfortable people feel taking risks or speaking up in a group.

Brown said that, in the best case, AI could help people feel more comfortable speaking up and sharing new ideas that might not be heard otherwise. “In a classroom, we can imagine someone saying, ‘Oh, I’m a little worried. I don’t know enough for my professor, or how my peers are going to judge my question,’ or, ‘I think this is a good idea, but maybe it isn’t.’ We don’t know until we put it out there.”

Since AI relies on a digital record that might or might not be kept permanently, one concern is that a human might not know which interactions with an AI will be used for evaluation.

“In our increasingly digitally mediated workspaces, so much of what we do is being tracked and documented,” Brown said. “There’s a digital record of things, and if I’m made aware that, ‘Oh, all of a sudden our conversation might be used for evaluation,’ we actually see this significant difference in interaction.”

Even when they thought their comments might be monitored or professionally judged, people still felt relatively secure talking to another human being. “We’re talking together. We’re working through something together, but we’re both people. There’s kind of this mutual assumption of risk,” he explained.

The study found that people felt more vulnerable when they thought an AI system was evaluating them. Brown wants to understand how AI can be used to create the opposite effect—one that builds confidence and trust.

“What are those contexts in which AI could be a partner, could be part of this conversational communicative practice within a pair of individuals at work, like a supervisor-supervisee relationship, or maybe within a team where they’re working through some topic that might have task conflict or relationship conflict?” Brown said. “How does AI help resolve the decision-making process or enhance the resolution so that people actually feel increased psychological safety?”

Creating a more trustworthy AI

At the individual level, Tepper researchers are also learning how the way AI explains its reasoning affects how people use and trust it. Zhaohui (Zoey) Jiang and Linda Argote are studying how people react to different kinds of AI systems—specifically, ones that explain their reasoning (transparent AI) versus ones that don’t explain how they make decisions (black box AI).

“We see a lot of people advocating for transparent AI,” Jiang said, “but our research reveals an advantage of keeping the AI a black box, especially for a high ability participant.”

One of the reasons for this, she explained, is overconfidence and distrust among skilled decision-makers.

“For a participant who is already doing a good job independently at the task, they are more prone to the well-documented tendency of AI aversion. They will penalize the AI’s mistake far more than the humans making the same mistake, including themselves,” Jiang said. “We find that this tendency is more salient if you tell them the inner workings of the AI, such as its logic or decision rules.”

People who struggle with decision-making actually improve their outcomes when using transparent AI models that show off a moderate amount of complexity in their decision-making process. “We find that telling them how the AI is thinking about this problem is actually better for less-skilled users, because they can learn from AI decision-making rules to help improve their own future independent decision-making.”

While transparency is proving to have its own use cases and benefits, Jiang said the most surprising findings are around how people perceive black box models. “When we’re not telling these participants how the model arrived at its answer, participants judge the model as the most complex. Opacity seems to inflate the sense of sophistication, whereas transparency can make the very same system seem simpler and less ‘magical,’” she said.

Both kinds of models vary in their use cases. While it isn’t yet cost‑effective to tailor an AI to each human partner, future systems may be able to self-adapt their representation to help people make better decisions, she said.

“It can be dynamic in a way that it can recognize the decision-making inefficiencies of that particular individual that it is assigned to collaborate with, and maybe tweak itself so that it can help complement and offset some of the decision-making inefficiencies.”

Citation: Researchers explore how AI can strengthen, not replace, human collaboration (2025, November 1) retrieved 1 November 2025 from https://techxplore.com/news/2025-10-explore-ai-human-collaboration.html


Tech

US Special Forces Soldier Arrested for Polymarket Bets on Maduro Raid

The Department of Justice announced Thursday that it arrested Gannon Ken Van Dyke, an enlisted member of the US Army’s special forces, for allegedly using “classified, nonpublic” information about the capture of Venezuelan president Nicolás Maduro to notch more than $400,000 in profits on Polymarket trades. A grand jury indicted him on five counts, including multiple violations of the Commodity Exchange Act.

Van Dyke is the first person to be charged with insider trading on a prediction market in the United States. Lawmakers have been voicing concerns for months about the high likelihood that politicians and public servants could use nonpublic information to profit from trades on leading industry platforms like Polymarket and Kalshi, which have exploded in popularity over the past year.

The arrest comes just weeks after Department of Justice prosecutors met with Polymarket about potential insider trading violations. In February, Israeli authorities arrested two citizens, an army reservist and a civilian, for allegedly leaking classified information by making wagers on Polymarket related to military operations. Kalshi, Polymarket’s primary rival in the United States, recently fined three politicians for breaking its insider trading rules, but it did not flag the violations for further enforcement to the Commodity Futures Trading Commission (CFTC), the federal agency that oversees prediction markets.

After Van Dyke’s arrest was made public, Polymarket posted a statement to social media noting that it had “identified a user trading on classified government information” and “referred the matter to the DOJ & cooperated with their investigation.” The company declined to comment further.

According to court documents, Van Dyke has been an active duty US soldier since September 2008 and rose to the level of master sergeant in 2023. At the time of the alleged trading activity, he was stationed at Fort Bragg in Fayetteville, North Carolina, and assigned to the Army’s Special Operations Command Western Hemisphere Operations.

“I have been crystal clear that anyone who engages in fraud, manipulation, or insider trading in any of our markets will face the full force of the law,” CFTC chair Michael Selig said in a statement. “The defendant was entrusted with confidential information about US operations and yet took action that endangered US national security and put the lives of American service members in harm’s way.”

The complaint alleges that Van Dyke was involved in the planning and execution of Maduro’s arrest and that he was aware that he wasn’t authorized to share nonpublic information about US military operations. The complaint says that Van Dyke signed a nondisclosure agreement that forbade him from revealing sensitive or classified government information “by writing, word, conduct, or otherwise.” The complaint also alleges Van Dyke saved a screenshot to his Google account “displaying the results of an artificial intelligence query” outlining how the US Special Forces maintains many classified files including “operational details that are not available to the public.”

On December 26, Van Dyke allegedly opened an account on Polymarket and took out around $35,000 from his bank account before transferring it to a cryptocurrency exchange.

The following day, Van Dyke allegedly made his first Venezuela-related trade on Polymarket, putting a little less than $100 on a “YES” contract that US forces would be in Venezuela by January 31, 2026. Prosecutors accuse him of ultimately making 13 Venezuela-related transactions on the platform, seven of those—totaling hundreds of thousands of shares—on a “YES” contract for “Maduro out by … January 31, 2026.” In other words, Van Dyke allegedly stood to make an enormous profit if the Venezuelan leader wound up out of power by the end of the month.

Tech

Newly Deciphered Sabotage Malware May Have Targeted Iran’s Nuclear Program—and Predates Stuxnet

Instead, Kamluk saw that it was a self-spreading piece of code with very different intentions. Using what was referred to within the code as “wormlet” functionality, Fast16 is designed to copy itself to other computers on the network via Windows’ network share feature. It checks for a list of security applications, and if none are present, installs the Fast16.sys kernel driver on the target machine.

That kernel driver then reads the code of applications as they’re loaded into the computer’s memory, monitoring for a long list of specific patterns—“rules” that allow it to identify when a target application is running. When it detects the target software, it carries out its apparent goal: silently altering the calculations the software is running to imperceptibly corrupt its results.

“This actually had a very significant payload inside, and pretty much everybody who looked at it before had missed it,” says Costin Raiu, a researcher at security consultancy TLP:Black who previously led the team that included Kamluk and Guerrero-Saade at Russian security firm Kaspersky, which did early work analyzing Stuxnet and related malware. “This is designed to be a long-term, very subtle sabotage which probably would be very, very difficult to notice.”

Searching for software that met the criteria of Fast16’s “rules” for an intended sabotage target, Kamluk and Guerrero-Saade found their three candidates: the MOHID, PKPM, and LS-DYNA software. As for the “wormlet” feature, they believe that the spreading mechanism was designed so that when a victim double-checks their calculation or simulation results with a different computer in the same lab, that machine, too, will confirm the erroneous result, making the deception all the more difficult to discover or understand.

In terms of other cybersabotage operations, only Stuxnet is remotely in the same class as Fast16, Guerrero-Saade argues. The complexity and sophistication of the malware, too, place it in Stuxnet’s realm of high-priority, high-resource state-sponsored hacking. “There are few scenarios where you go through this kind of development effort for a covert operation,” Guerrero-Saade says. “Somebody bent a paradigm in order to slow down or damage or throw off a process that they considered to be of critical importance.”

The Iran Hypothesis

All of that fits the hypothesis that Fast16 might, like Stuxnet, have been aimed at disrupting Iran’s ambitions of building a nuclear weapon. TLP:Black’s Raiu argues that, beyond a mere possibility, targeting Iran represents the most likely explanation—a “medium-high confidence” theory that Fast16 was “designed as a cyber strike package” that targeted Iran’s AMAD nuclear project, a plan by the regime of Ayatollah Khamenei to obtain nuclear weapons in the early 2000s.

“This is another dimension of cyberattacks, another way to wage this cyberwar against Iran’s nuclear program,” Raiu says.

In fact, Guerrero-Saade and Kamluk point to a paper published by the Institute for Science and International Security, which collected public evidence of Iranian scientists carrying out research that could contribute to the development of a nuclear weapon. In several of those documented cases, the scientists’ research used the LS-DYNA software that Guerrero-Saade and Kamluk found to have been a potential Fast16 target.

Tech

Rednote Draws a Line Between China and the World

Some Rednote users have reported that their accounts were automatically converted from the Chinese to the international version of the website recently. One American user, who asked to remain anonymous to avoid being punished by the platform, shared a screenshot with WIRED showing that when he logged into the platform in April, a banner appeared that read “Your account is a rednote account. We have automatically redirected you to rednote.com.”

The user says he registered his account with a Chinese phone number years ago, but suspects his account was converted because he uses a non-Chinese IP address. “I have never posted from China. It’s always been in the United States. Obviously, in one glance, they can see this is an American posting in English,” he says.

Looming Split

After TikTok sidestepped a US shutdown by selling a majority stake in its American business, most of the “refugees” who had fled to Rednote went back to the video app or to other platforms. Those who stayed often did so because they value reading about and talking directly with Chinese people living in China. They now worry that a corporate split could destroy what had been one of the strongest bridges between the Chinese internet and the wider world.

Jerry Liu, a Vancouver-based TikTok influencer known for sharing funny content about Rednote itself, said in a November video that he was told by staff at the company’s Shanghai office that international users should expect to see less Chinese content and more North American content in the future. “I feel frustrated. I think it’s just gonna be less fun,” he said in the video.

Rednote had tried the TikTok localization playbook before—it launched a slew of regionally focused apps roughly three years ago with names like Uniik, Spark, Catalog, Takib, habU, and S’More that each catered to specific countries outside China, but they failed to catch on. The effort could have been a lesson for the company about the value of its massive Chinese content ecosystem to people in other countries, but as is often the case, regulatory and political considerations appear to have taken priority.

“I don’t want to see Americans talking about Coachella. I did that on Instagram, I didn’t join Xiaohongshu to see Instagram,” says the American user who was recently redirected to Rednote.

Security Concerns

As Rednote goes global, the company is no doubt looking to Chinese predecessors like WeChat and TikTok for ideas about how to navigate the minefield of content moderation and data privacy. So far, its approach more closely resembles that of WeChat.

For over a decade, WeChat has sorted users based largely on one criterion: whether they used a Chinese or a foreign number to sign up. That has allowed users to cross Tencent’s digital border by unlinking and relinking their WeChat accounts to different mobile numbers.

Jeffrey Knockel, an assistant professor of computer science at Bowdoin College, found that Tencent censors content on WeChat and Weixin differently, even though the two platforms are integrated with one another and users can communicate across them. He says Chinese users are subject to a real-time keyword-matching filter to censor politically sensitive speech, but “if you registered for WeChat using a Canadian or an American phone number, your messages aren’t necessarily under that kind of censorship.”

Knockel says WeChat’s blended content moderation approach may have made some people wary about using the app. “Users are generally distrustful of the platform. They don’t know if they’re being watched and censored,” he says. As Rednote moves in a similar direction, it will be worth watching whether international audiences end up having similar misgivings.

This is an edition of Zeyi Yang and Louise Matsakis’ Made in China newsletter.
