Tech

Who is Zico Kolter? A professor leads OpenAI safety panel with power to halt unsafe AI releases

If you believe artificial intelligence poses grave risks to humanity, then a professor at Carnegie Mellon University has one of the most important roles in the tech industry right now.

Zico Kolter leads a 4-person panel at OpenAI that has the authority to halt the ChatGPT maker’s release of new AI systems if it finds them unsafe. That could be technology so powerful that an evildoer could use it to make weapons of mass destruction. It could also be a new chatbot so poorly designed that it will hurt people’s mental health.

“Very much we’re not just talking about existential concerns here,” Kolter said in an interview with The Associated Press. “We’re talking about the entire swath of safety and security issues and critical topics that come up when we start talking about these very widely used AI systems.”

OpenAI tapped the computer scientist to be chair of its Safety and Security Committee more than a year ago, but the position took on heightened significance last week when California and Delaware regulators made Kolter’s oversight a key part of their agreements to allow OpenAI to form a new business structure to more easily raise capital and make a profit.

Safety has been central to OpenAI’s mission since it was founded as a nonprofit research laboratory a decade ago with a goal of building better-than-human AI that benefits humanity. But after its release of ChatGPT sparked a global AI commercial boom, the company has been accused of rushing products to market before they were fully safe in order to stay at the front of the race. The internal divisions that led to the temporary ouster of CEO Sam Altman in 2023 brought concerns that the company had strayed from its mission to a wider audience.

The San Francisco-based organization faced pushback—including a lawsuit from co-founder Elon Musk—when it began steps to convert itself into a more traditional for-profit company to continue advancing its technology.

Agreements announced last week by OpenAI along with California Attorney General Rob Bonta and Delaware Attorney General Kathy Jennings aimed to assuage some of those concerns.

At the heart of the formal commitments is a promise that decisions about safety and security must come before financial considerations as OpenAI forms a new public benefit corporation that is technically under the control of its nonprofit OpenAI Foundation.

Kolter will be a member of the nonprofit’s board but not on the for-profit board. But he will have “full observation rights” to attend all for-profit board meetings and have access to information it gets about AI safety decisions, according to Bonta’s memorandum of understanding with OpenAI. Kolter is the only person, besides Bonta, named in the lengthy document.

Kolter said the agreements largely confirm that his safety committee, formed last year, will retain the authorities it already had. The other three members also sit on the OpenAI board—one of them is former U.S. Army General Paul Nakasone, who was commander of the U.S. Cyber Command. Altman stepped down from the safety panel last year in a move seen as giving it more independence.

“We have the ability to do things like request delays of model releases until certain mitigations are met,” Kolter said. He declined to say if the safety panel has ever had to halt or mitigate a release, citing the confidentiality of its proceedings.

Kolter said there will be a variety of concerns about AI agents to consider in the coming months and years, from cybersecurity (“Could an agent that encounters some malicious text on the internet accidentally exfiltrate data?”) to security concerns surrounding AI model weights, which are numerical values that influence how an AI system performs.

“But there’s also topics that are either emerging or really specific to this new class of AI model that have no real analogues in traditional security,” he said. “Do models enable malicious users to have much higher capabilities when it comes to things like designing bioweapons or performing malicious cyberattacks?”

“And then finally, there’s just the impact of AI models on people,” he said. “The impact to people’s mental health, the effects of people interacting with these models and what that can cause. All of these things, I think, need to be addressed from a safety standpoint.”

OpenAI has already faced criticism this year about the behavior of its flagship chatbot, including a wrongful-death lawsuit from California parents whose teenage son killed himself in April after lengthy interactions with ChatGPT.

Kolter, director of Carnegie Mellon’s machine learning department, began studying AI as a Georgetown University freshman in the early 2000s, long before it was fashionable.

“When I started working in machine learning, this was an esoteric, niche area,” he said. “We called it machine learning because no one wanted to use the term AI because AI was this old-time field that had overpromised and underdelivered.”

Kolter, 42, has been following OpenAI for years and was close enough to its founders that he attended its launch party at an AI conference in 2015. Still, he didn’t expect how rapidly AI would advance.

“I think very few people, even people working in machine learning deeply, really anticipated the current state we are in, the explosion of capabilities, the explosion of risks that are emerging right now,” he said.

AI safety advocates will be closely watching OpenAI’s restructuring and Kolter’s work. One of the company’s sharpest critics says he’s “cautiously optimistic,” particularly if Kolter’s group “is actually able to hire staff and play a robust role.”

“I think he has the sort of background that makes sense for this role. He seems like a good choice to be running this,” said Nathan Calvin, general counsel at the small AI policy nonprofit Encode. Calvin, whom OpenAI targeted with a subpoena at his home as part of its fact-finding to defend against the Musk lawsuit, said he wants OpenAI to stay true to its original mission.

“Some of these commitments could be a really big deal if the board members take them seriously,” Calvin said. “They also could just be the words on paper and pretty divorced from anything that actually happens. I think we don’t know which one of those we’re in yet.”

© 2025 The Associated Press. All rights reserved. This material may not be published, broadcast, rewritten or redistributed without permission.

Citation: Who is Zico Kolter? A professor leads OpenAI safety panel with power to halt unsafe AI releases (2025, November 2), retrieved 2 November 2025 from https://techxplore.com/news/2025-11-zico-kolter-professor-openai-safety.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.

A Humanoid Robot Set a Half-Marathon Record in China

Over the weekend in China, a humanoid robot shattered the world half-marathon record (the human record) by seven minutes.

The star performer was a robot developed by the Chinese company Honor (the smartphone maker), which finished the 13.1-mile race in 50 minutes, 26 seconds. The human record, set by Ugandan Olympic medalist Jacob Kiplimo, is 57 minutes, 20 seconds. The result marks an impressive milestone especially considering that, just a year earlier, the fastest robot at this half-marathon event took two and a half hours to complete the same distance.
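The seven-minute margin can be checked with a little arithmetic. The sketch below is illustrative only: it uses the two finishing times and the 13.1-mile distance reported above, and the helper names (`to_seconds`, `pace_per_mile`) are hypothetical, not drawn from any race-timing system. It also assumes an even pace over the whole course, which real runners and robots rarely hold.

```python
RACE_MILES = 13.1  # half-marathon distance as given in the article


def to_seconds(minutes: int, seconds: int) -> int:
    """Convert a minutes/seconds finishing time to total seconds."""
    return minutes * 60 + seconds


def pace_per_mile(total_seconds: int, miles: float = RACE_MILES) -> float:
    """Average pace in seconds per mile (assumes an even pace throughout)."""
    return total_seconds / miles


robot_time = to_seconds(50, 26)  # Honor's autonomous robot
human_time = to_seconds(57, 20)  # Jacob Kiplimo's human record

# Split the difference back into minutes and seconds.
gap_minutes, gap_seconds = divmod(human_time - robot_time, 60)

print(f"Margin: {gap_minutes} min {gap_seconds} s")
print(f"Robot pace: {pace_per_mile(robot_time):.0f} s per mile")
print(f"Human pace: {pace_per_mile(human_time):.0f} s per mile")
```

The margin works out to 6 minutes, 54 seconds, which rounds to the seven minutes cited above.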

But Honor’s robot was not the only participant. The event consisted of more than 100 humanoid robots from 76 institutions across China. The robots lined up alongside 12,000 human runners in Beijing’s E-Town, albeit on separate courses to avoid accidents. The contrast in performance between humans and robots was more than evident.

Run, Robot, Run

A humanoid robot is designed to mimic the structure and movement of the human body, with legs, arms, and sensors that allow it to interact with its environment. In this case, the winning robot incorporated features inspired by elite runners: long legs (almost a meter), advanced balance systems, and a liquid cooling mechanism, similar to that of smartphones, to prevent overheating during the race.

In addition, many of the participating robots operated autonomously, meaning without direct human control. Thanks to artificial intelligence algorithms, they could adjust their pace, maintain balance, and adapt to the terrain in real time. Notably, the Honor robot that achieved the 50-minute mark operated autonomously. The Chinese manufacturer presented another robot, operated by remote control, that ran the same stretch in even less time: 48 minutes, 19 seconds.

As expected, there were some accidents in the race. Some robots fell down, others veered off the path, and several needed technical assistance along the way. While the physical performance of humanoid robots has advanced rapidly, their reliability is still developing. Even so, the laughter and jeers are no longer as frequent as they used to be; applause and exclamations of surprise have taken their place.

Robot Superiority

Just like the robots that went viral for their impressive martial arts display a few weeks ago, this long-distance race is part of a broader strategy by China to show off its leadership in the development of advanced robots.

You don’t need to be a robotics expert to see that this achievement demonstrates that machines can outperform humans at specific physical tasks under controlled conditions. (It’s hard to imagine that the winning robot could achieve the same result, for example, if it started to rain during the race.) But humans still have a few tricks up their sleeve: Running in a straight line is very different from performing complex real-world activities, such as manipulating delicate objects or interacting socially.

However, it’s understandable that the image of a robot crossing the finish line in record time, ahead of human athletes, raises several questions. Is this the beginning of a new era in which machines redefine physical limits?

One could argue that a car is a machine, and those have always been faster than humans. But a humanoid robot is designed to mimic humans. It’s more alarming to see one beat humanity at its own game—even if so many of them are still tripping over themselves.

This story originally appeared in WIRED en Español and has been translated from Spanish.

War Memes Are Turning Conflict Into Content

As ceasefire announcements between the US and Iran—and separately between Israel and Lebanon—dominated headlines over the past two weeks, they also prompted a look back at how war spread online: through memes.

There were jokes about conscription. Captions about getting drafted, but at least with a Bluetooth device. The song “Bazooka” went viral, with users lip-syncing to: “Rest in peace my granny, she got hit by a bazooka.” Military filters followed. So did posts about Americans wanting to be sent to Dubai “to save all the IG models.”

Across the Gulf, the tone was different but the instinct was the same. Memes joked that Iran was replying to Israel faster than the person you’re thinking about. Delivery drivers were shown “dodging missiles.” “Eid fits” became hazmat suits and tactical vests.

Dark humor is one of the oldest responses to fear, a way of reclaiming control, however briefly, over events that offer none. Variations of that idea appear across psychology and philosophy, including Freud’s relief theory, which frames humor as a release of tension.

But social media changes the scale and speed of that instinct.

A joke once shared within a small community can become a global template in minutes. Algorithms do not reward depth or accuracy; they reward engagement. The memes that travel fastest are usually stripped of context, easy to recognize and simple to remix.

Middle East scholar and media analyst Adel Iskandar traces political satire back centuries, from banned satirical papyri in ancient Egypt to cartoons during revolutions and gallows humor in modern wars. “Where there is hardship, there is satire,” he says. “Where there is loss of hope, there is hope in comedy.”

That tradition still exists online. But today it is fused with recommendation systems designed to keep attention moving.

Memes Spread Faster Than Facts

The word “meme” was coined by Richard Dawkins in his 1976 book The Selfish Gene, where he described how ideas replicate like genes. On today’s internet, replication follows platform logic.

Fitness means generality. A meme does not need to be accurate. It needs to feel familiar. It needs the right format, paired with trending audio and the right emotional shorthand.

“A meme is like a virus,” Iskandar says. “If it doesn’t travel, it’ll die.”

The most visible response online is not always the truest one. It is often just the easiest to spread. And once context disappears, one crisis can start to resemble any other.

Geography shapes humor too, and adds another level of tension. “If you live far away from the threat, you’re capable of producing content that ridicules it with an element of safety,” says Iskandar. “Whereas if you happen to be within close proximity, it is more of a fatalism.”

That divide matters. For some users, war exists mainly as mediated spectacle: clips, edits, graphics, headlines, and reaction posts. For others, it is sirens, uncertainty, disrupted flights, rising prices, and messages checking who is safe.

The same meme can function as entertainment in one country and emotional survival in another. Take the American experience of violence, which Sut Jhally, professor of communication at the University of Massachusetts Amherst, says “is very mediated.”

What much of the Western world has consumed instead is what cultural critic George Gerbner called “happy violence”: spectacular, consequence-free, and detached from the aftermath.

Jhally argues that the September 11 attacks remain the defining modern American experience of war-adjacent political violence. Much else has been cinematic: distant invasions, blockbuster destruction, video-game logic, apocalypse franchises.

The teenager from the Midwest joking about being drafted is drawing from zombie films and superhero apocalypses. “There is almost no discussion about what an actual Third World War would look like,” Jhally says. “People do not have a perception of what that really looks like.”

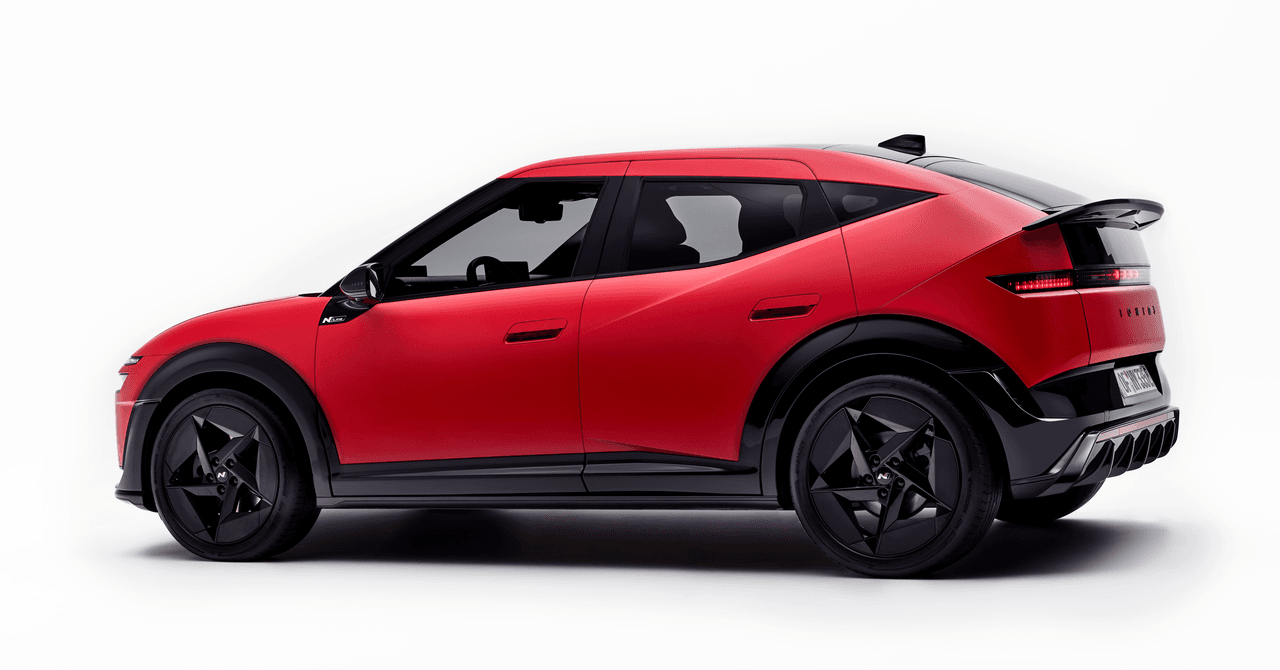

Hyundai’s New Ioniq 3 Has Hot-Hatch Looks, but Can It Beat BYD?

Hyundai has unveiled its Ioniq 3, a fully electric compact hatchback for urban driving designed to be as aerodynamically efficient as possible yet still offer up a surprisingly spacious interior—a trick the carmaker is loftily calling Aero Hatch. The 3 is intended to fill the gap between Hyundai’s Inster supermini and Ioniq 5 crossover.

In profile, the Ioniq 3 has a sleek front end that transitions into a roofline that stays straight over both front and rear occupants before dropping to merge with the rear spoiler. This roofline maximizes interior headroom for the rear passengers, and it also delivers a supposedly class-leading drag coefficient of 0.263.

The car shares its underpinnings with the EV2 from sibling brand Kia. Two battery options will deliver a projected WLTP distance of 344 km (around 214 miles) for the Standard Range Ioniq 3; the Long Range version is supposedly good for a competitive 308-mile range. Built on the group’s Electric-Global Modular Platform (E-GMP), the car has a 400-volt architecture to lower costs rather than the 800-volt system of the Ioniq 5 N, 6, or 9 SUV. Still, this means that if you can find sufficiently fast DC charging, you can, in theory, top up from 10 to 80 percent in approximately 29 minutes (AC charging capability is up to 22 kW).
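For readers who want to check the range and charging figures, here is a minimal sketch of the unit conversions involved. The function names are hypothetical, and the charging calculation gives only an average rate in percentage points per minute; real charging curves taper and are not linear, so it should not be read as Hyundai's specification.

```python
KM_PER_MILE = 1.609344  # exact definition of the international mile


def km_to_miles(km: float) -> float:
    """Convert kilometers to miles."""
    return km / KM_PER_MILE


def miles_to_km(miles: float) -> float:
    """Convert miles to kilometers."""
    return miles * KM_PER_MILE


def avg_charge_rate(start_pct: float, end_pct: float, minutes: float) -> float:
    """Average percentage points gained per minute (assumes a linear charge)."""
    return (end_pct - start_pct) / minutes


print(f"Standard Range: {km_to_miles(344):.0f} miles")
print(f"Long Range: {miles_to_km(308):.0f} km")
print(f"Average DC charge rate: {avg_charge_rate(10, 80, 29):.1f} points per minute")
```

On these numbers, 344 km comes to roughly 214 miles, matching the figure quoted above, and the 308-mile Long Range estimate is about 496 km.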

This is fine, but it is not a match for BYD’s new Blade 2.0 battery tech that WIRED tried, astonishingly allowing the Denza Z9 GT to charge its battery in just over nine minutes from 10 percent. True, that battery tech was in a $100,000 “premium” EV, but it’s coming to BYD’s wider models. And if BYD makes good on its plans to deliver a charging network to rival Tesla’s Supercharger, then very soon buyers will be expecting comparable charge times, and 30 minutes will quickly feel awfully long.

I asked José Muñoz, Hyundai Motor Company president and CEO, whether this new battery technology from BYD concerns him, whether Hyundai—leading the EV pack with 800-volt architectures for so long—needs to match the Blade 2.0’s performance. “We welcome the challenge,” Muñoz tells me. “Every challenge is an opportunity to do better. And I can tell you that, lately, we have a lot of opportunities to do better.”

“We are also working on fast charging,” Muñoz says, adding that Hyundai’s success will be built on not merely one leading technology but many. “There are not more elements that may be offered by the Chinese that we can offer. It’s only a matter of how you mix them. A lot of times, you get stuck into one indicator. I’m an engineer. And we always have the example of the airplanes: What is more important in an airplane, altitude or speed? There is only one answer. You need to achieve both.”
